Bin Weng

Towards Best Practice of Interpreting Deep Learning Models for EEG-based Brain Computer Interfaces

Feb 18, 2022

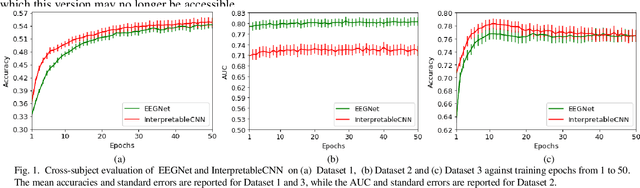

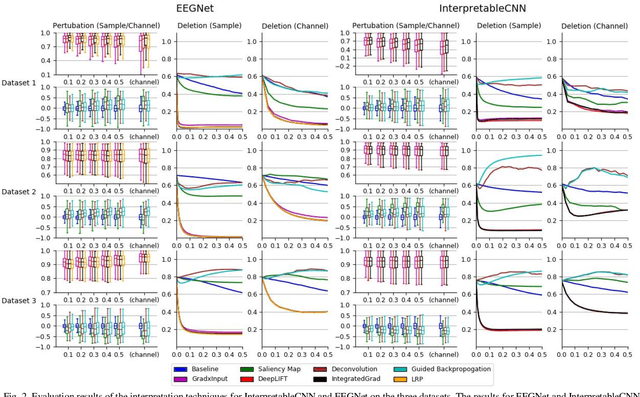

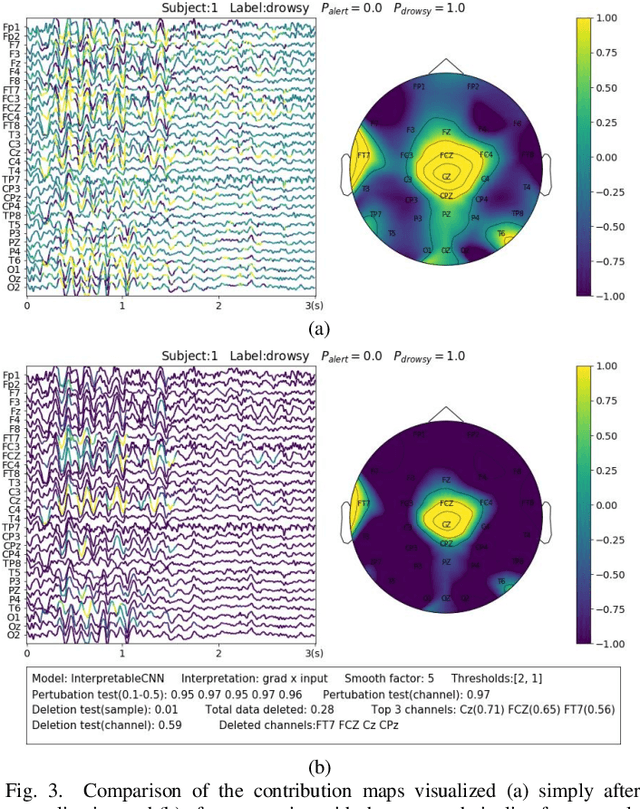

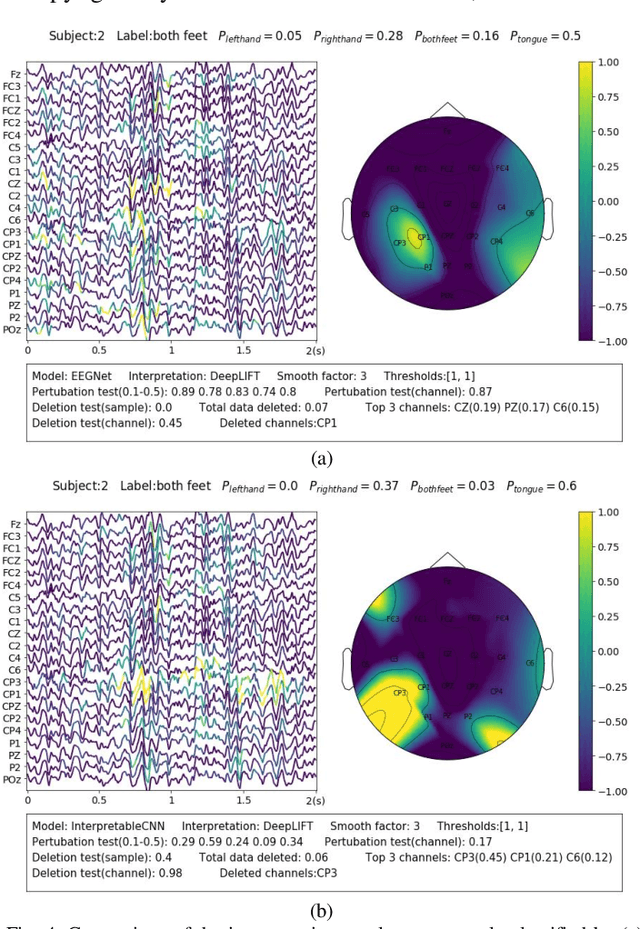

Abstract: Understanding deep learning models is important for EEG-based brain-computer interfaces (BCIs), since it can not only boost the trust of end users but also potentially shed light on the reasons a model fails. However, deep learning interpretability has not yet attracted wide attention in this field. It remains unknown how reliably existing interpretation techniques can be used and to what extent they reflect the model decisions. To fill this research gap, we conduct the first quantitative evaluation and explore the best practice of interpreting deep learning models designed for EEG-based BCI. We design metrics and test seven well-known interpretation techniques on benchmark deep learning models. Results show that Gradient×Input, DeepLIFT, integrated gradients, and layer-wise relevance propagation (LRP) perform similarly to one another and better than saliency map, deconvolution, and guided backpropagation for interpreting the model decisions. In addition, we propose a set of processing steps that allow the interpretation results to be visualized in an understandable and trusted way. Finally, we illustrate with samples how deep learning interpretability can benefit the domain of EEG-based BCI. Our work presents a promising direction for introducing deep learning interpretability to EEG-based BCI.
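To make the difference between two of the compared technique families concrete, here is a minimal sketch on a toy linear model. It is not the paper's EEG models or evaluation metrics; the weights, bias, and input are made-up values, and the function names are hypothetical. For a linear model the gradient with respect to each input is simply its weight, so a saliency map ignores the input value while gradient-times-input does not.

```python
# Toy comparison of two attribution methods on f(x) = w . x + b.
# All numbers below are illustrative assumptions, not data from the paper.

def linear_model(weights, bias, x):
    """Score of a linear model for input x."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def saliency(weights, x):
    """Absolute gradient |df/dx_i|; for a linear model this is |w_i|."""
    return [abs(w) for w in weights]

def gradient_times_input(weights, x):
    """Gradient multiplied elementwise by the input: w_i * x_i."""
    return [w * xi for w, xi in zip(weights, x)]

weights = [2.0, -1.0, 0.5]
bias = 0.25
x = [1.0, 3.0, 0.0]

sal = saliency(weights, x)
gxi = gradient_times_input(weights, x)

print("saliency:        ", sal)
print("gradient x input:", gxi)
# For a linear model the gradient-times-input attributions sum to f(x) - b.
print("sum of attributions:", sum(gxi))
print("f(x) - bias:        ", linear_model(weights, bias, x) - bias)
```

In this toy case the third feature is zero in the input, yet saliency still assigns it a nonzero score, whereas gradient-times-input assigns it none. This is only an intuition for why the two families can rank features differently, not an explanation of the reported results.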

A Person Re-identification Data Augmentation Method with Adversarial Defense Effect

Feb 10, 2021

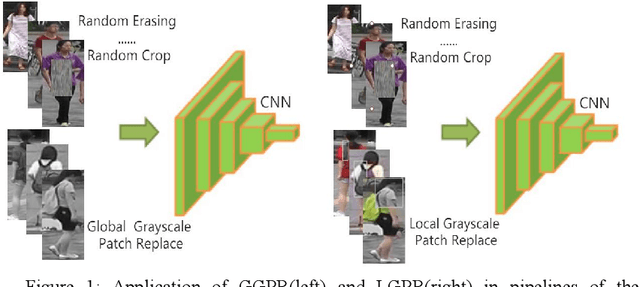

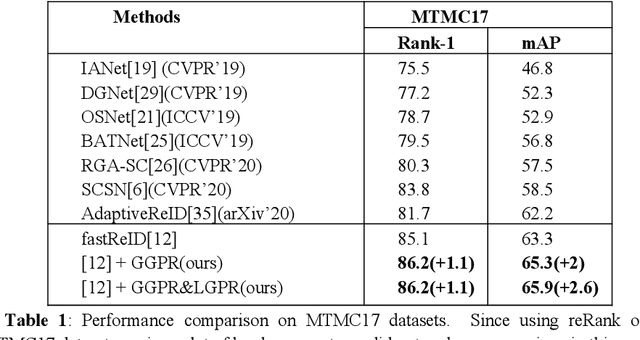

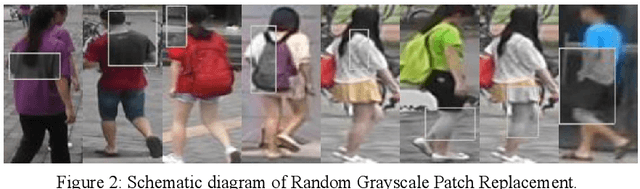

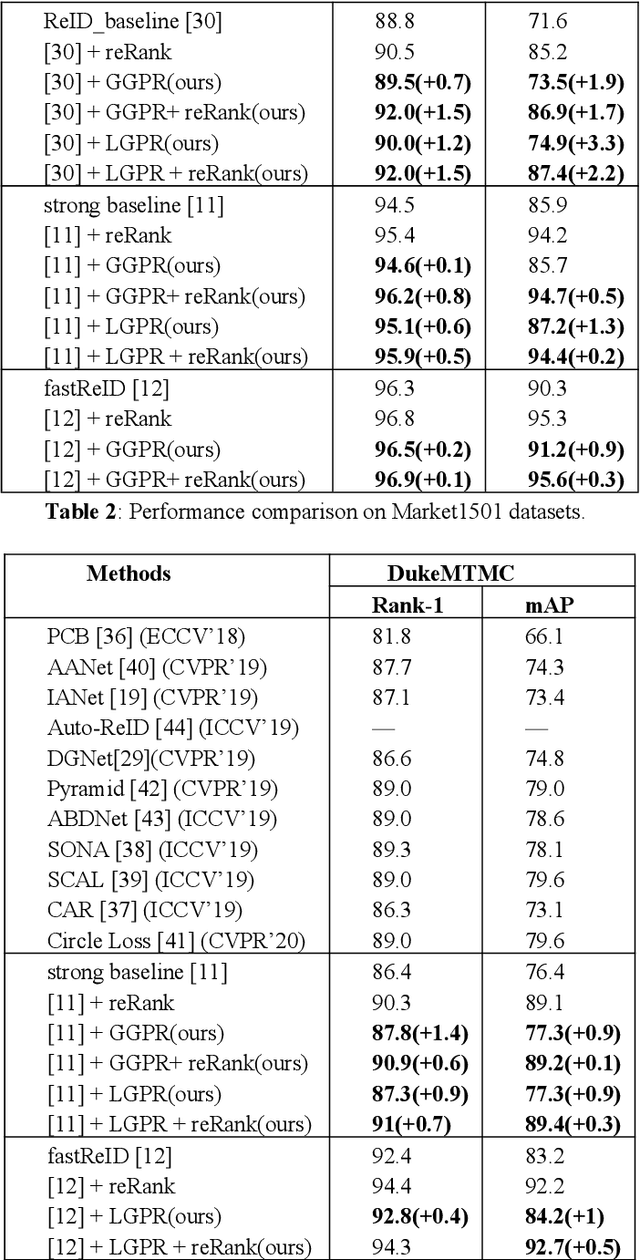

Abstract: The security of a person re-identification (ReID) model plays a decisive role in the application of ReID. However, deep neural networks have been shown to be vulnerable: adding undetectable adversarial perturbations to clean images can trick networks that perform well on clean images. We propose a multi-modal ReID data augmentation method with an adversarial defense effect: 1) Grayscale Patch Replacement, which consists of Local Grayscale Patch Replacement (LGPR) and Global Grayscale Patch Replacement (GGPR). This method not only improves the accuracy of the model but also helps it defend against adversarial examples; 2) Multi-Modal Defense, which integrates three homogeneous modal images (visible, grayscale, and sketch) and further strengthens the defense ability of the model. These methods fuse different modalities of homogeneous images to enrich the variety of input samples. This variety reduces the over-fitting of the ReID model to color variations and makes the adversarial space of the dataset that the attack method can find difficult to align; thus the accuracy of the model is improved and the attack effect is greatly reduced. The more homogeneous modal images are fused, the stronger the defense capability is. The proposed method performs well on multiple datasets, successfully defends against the MS-SSIM attack on ReID proposed at CVPR 2020 [10], and increases the accuracy by 467 times (0.2% to 93.3%). The code is available at https://github.com/finger-monkey/ReID_Adversarial_Defense.
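The grayscale patch replacement idea can be sketched roughly as follows. This is an illustration under stated assumptions, not the authors' implementation (see their repository for that): the patch-size range, the ITU-R BT.601 luma weights, and the function names are all hypothetical choices, and the global variant is interpreted here as converting the whole image to grayscale.

```python
import random

def to_gray(pixel):
    """Map an (R, G, B) pixel to a gray pixel using BT.601 luma weights
    (an assumed choice; the abstract does not specify the conversion)."""
    r, g, b = pixel
    y = round(0.299 * r + 0.587 * g + 0.114 * b)
    return (y, y, y)

def local_grayscale_patch(image, rng, min_frac=0.2, max_frac=0.5):
    """Return a copy of `image` with one random rectangle turned gray.

    `image` is a list of rows, each a list of (R, G, B) tuples.
    The patch-size fractions are hypothetical parameters.
    """
    height, width = len(image), len(image[0])
    ph = max(1, int(height * rng.uniform(min_frac, max_frac)))
    pw = max(1, int(width * rng.uniform(min_frac, max_frac)))
    top = rng.randint(0, height - ph)
    left = rng.randint(0, width - pw)
    out = [list(row) for row in image]  # copy so the input stays untouched
    for r in range(top, top + ph):
        for c in range(left, left + pw):
            out[r][c] = to_gray(image[r][c])
    return out, (top, left, ph, pw)

def global_grayscale(image):
    """Assumed global variant: convert every pixel to gray."""
    return [[to_gray(p) for p in row] for row in image]

# A 10x8 image of one uniform color, just for demonstration.
image = [[(200, 50, 10)] * 8 for _ in range(10)]
augmented, box = local_grayscale_patch(image, random.Random(0))
print("patch (top, left, height, width):", box)
```

Because the gray patch keeps the image structure but removes color in one region, the model sees the same identity with partially missing color cues, which matches the abstract's stated goal of reducing over-fitting to color variations.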

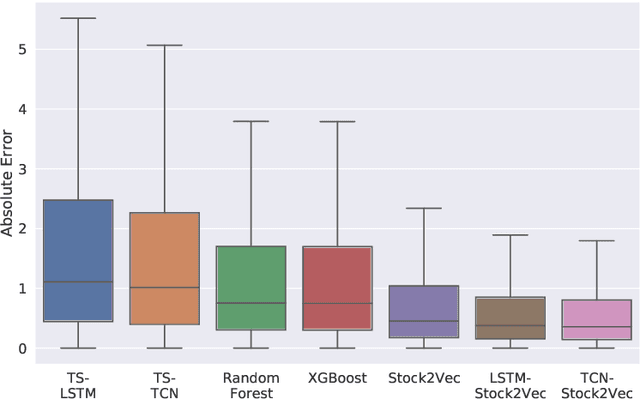

Stock2Vec: A Hybrid Deep Learning Framework for Stock Market Prediction with Representation Learning and Temporal Convolutional Network

Sep 29, 2020

Abstract: We propose a global hybrid deep learning framework to predict daily prices in the stock market. With representation learning, we derive an embedding called Stock2Vec, which gives insight into the relationships among different stocks, while temporal convolutional layers automatically capture effective temporal patterns both within and across series. Evaluated on the S&P 500, our hybrid framework integrates both advantages and achieves better performance on the stock price prediction task than several popular benchmark models.
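Temporal convolutional networks are commonly built from causal, dilated 1-D convolutions, where each output depends only on the current and earlier time steps. The sketch below shows that building block on a made-up price series. Whether Stock2Vec uses exactly this form is not stated in the abstract, so the function, kernel, and dilation here are illustrative assumptions.

```python
def causal_dilated_conv1d(series, kernel, dilation=1):
    """Causal 1-D convolution: y[t] = sum_k kernel[k] * series[t - k * dilation].

    Positions before the start of the series are treated as zero
    (implicit left padding), so no future value ever leaks into y[t].
    """
    out = []
    for t in range(len(series)):
        total = 0.0
        for k, w in enumerate(kernel):
            idx = t - k * dilation
            if idx >= 0:
                total += w * series[idx]
        out.append(total)
    return out

# Hypothetical daily prices and a two-tap averaging kernel.
prices = [1.0, 2.0, 3.0, 4.0, 5.0]
kernel = [0.5, 0.5]
smoothed = causal_dilated_conv1d(prices, kernel, dilation=2)
print(smoothed)  # [0.5, 1.0, 2.0, 3.0, 4.0]
```

Stacking such layers with increasing dilation widens the span of past time steps each output can see, which is one common way a convolutional model captures longer temporal patterns.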
