Atsushi Shirafuji

Refactoring Programs Using Large Language Models with Few-Shot Examples

Nov 20, 2023

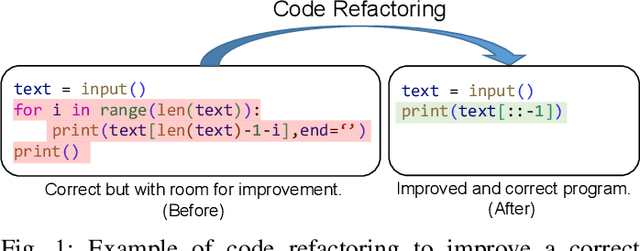

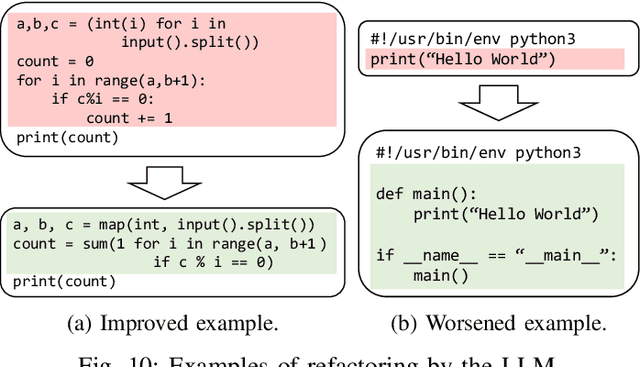

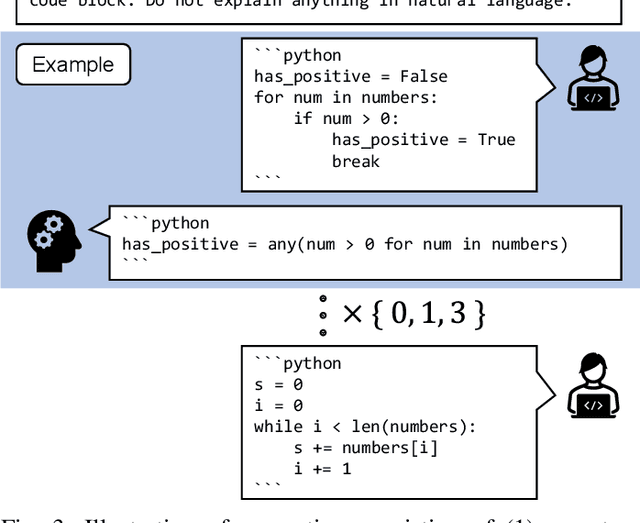

Abstract:A less complex and more straightforward program is a crucial factor that enhances its maintainability and makes writing secure and bug-free programs easier. However, due to its heavy workload and the risks of breaking the working programs, programmers are reluctant to do code refactoring, and thus, it also causes the loss of potential learning experiences. To mitigate this, we demonstrate the application of using a large language model (LLM), GPT-3.5, to suggest less complex versions of the user-written Python program, aiming to encourage users to learn how to write better programs. We propose a method to leverage the prompting with few-shot examples of the LLM by selecting the best-suited code refactoring examples for each target programming problem based on the prior evaluation of prompting with the one-shot example. The quantitative evaluation shows that 95.68% of programs can be refactored by generating 10 candidates each, resulting in a 17.35% reduction in the average cyclomatic complexity and a 25.84% decrease in the average number of lines after filtering only generated programs that are semantically correct. Furthermore, the qualitative evaluation shows outstanding capability in code formatting, while unnecessary behaviors such as deleting or translating comments are also observed.

Rule-Based Error Classification for Analyzing Differences in Frequent Errors

Nov 01, 2023

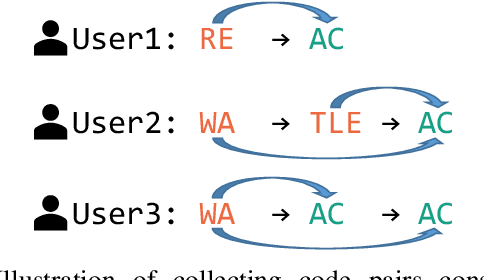

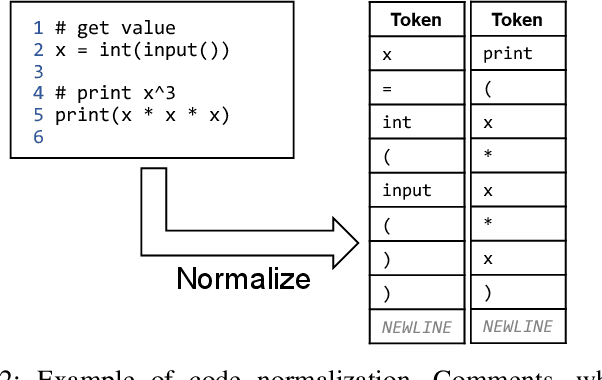

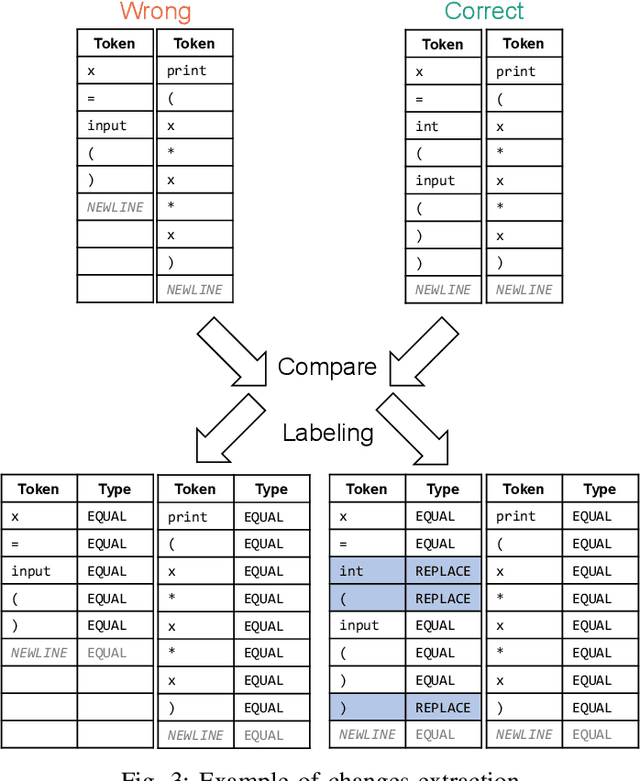

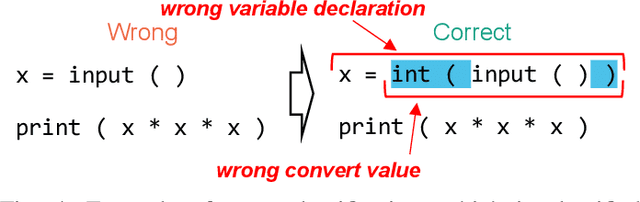

Abstract:Finding and fixing errors is a time-consuming task not only for novice programmers but also for expert programmers. Prior work has identified frequent error patterns among various levels of programmers. However, the differences in the tendencies between novices and experts have yet to be revealed. From the knowledge of the frequent errors in each level of programmers, instructors will be able to provide helpful advice for each level of learners. In this paper, we propose a rule-based error classification tool to classify errors in code pairs consisting of wrong and correct programs. We classify errors for 95,631 code pairs and identify 3.47 errors on average, which are submitted by various levels of programmers on an online judge system. The classified errors are used to analyze the differences in frequent errors between novice and expert programmers. The analyzed results show that, as for the same introductory problems, errors made by novices are due to the lack of knowledge in programming, and the mistakes are considered an essential part of the learning process. On the other hand, errors made by experts are due to misunderstandings caused by the carelessness of reading problems or the challenges of solving problems differently than usual. The proposed tool can be used to create error-labeled datasets and for further code-related educational research.

Program Repair with Minimal Edits Using CodeT5

Sep 26, 2023Abstract:Programmers often struggle to identify and fix bugs in their programs. In recent years, many language models (LMs) have been proposed to fix erroneous programs and support error recovery. However, the LMs tend to generate solutions that differ from the original input programs. This leads to potential comprehension difficulties for users. In this paper, we propose an approach to suggest a correct program with minimal repair edits using CodeT5. We fine-tune a pre-trained CodeT5 on code pairs of wrong and correct programs and evaluate its performance with several baseline models. The experimental results show that the fine-tuned CodeT5 achieves a pass@100 of 91.95% and an average edit distance of the most similar correct program of 6.84, which indicates that at least one correct program can be suggested by generating 100 candidate programs. We demonstrate the effectiveness of LMs in suggesting program repair with minimal edits for solving introductory programming problems.

Deduplicating and Ranking Solution Programs for Suggesting Reference Solutions

Jul 16, 2023

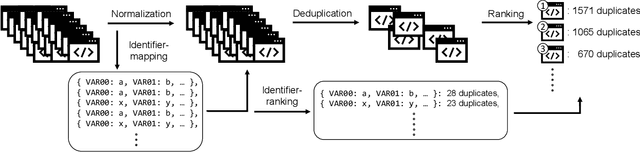

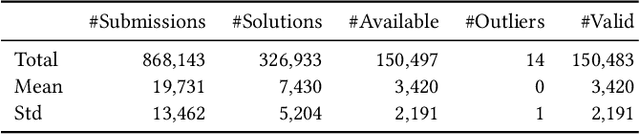

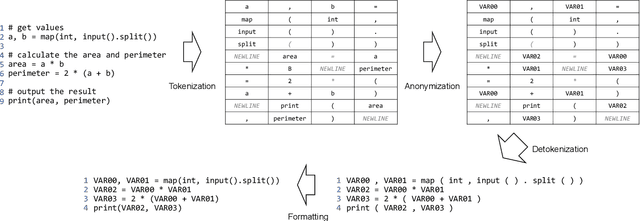

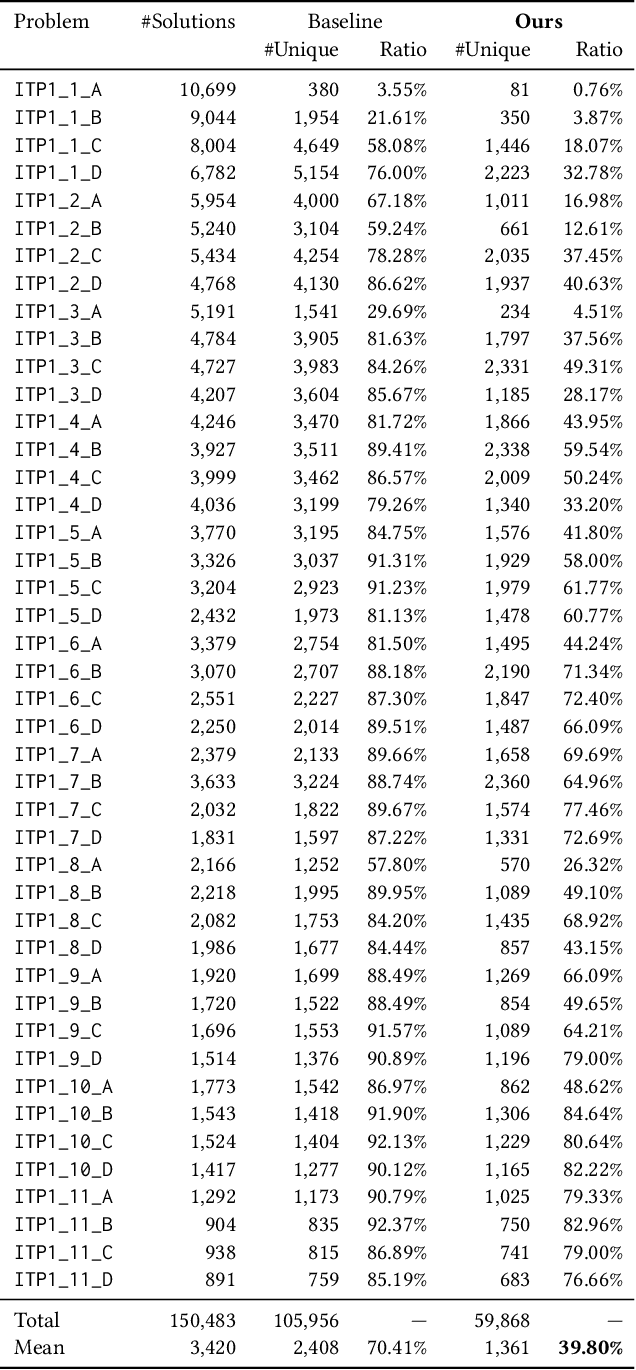

Abstract:Referring to the solution programs written by the other users is helpful for learners in programming education. However, current online judge systems just list all solution programs submitted by users for references, and the programs are sorted based on the submission date and time, execution time, or user rating, ignoring to what extent the program can be a reference. In addition, users struggle to refer to a variety of solution approaches since there are too many duplicated and near-duplicated programs. To motivate the learners to refer to various solutions to learn the better solution approaches, in this paper, we propose an approach to deduplicate and rank common solution programs in each programming problem. Based on the hypothesis that the more duplicated programs adopt a more common approach and can be a reference, we remove the near-duplicated solution programs and rank the unique programs based on the duplicate count. The experiments on the solution programs submitted to a real-world online judge system demonstrate that the number of programs is reduced by 60.20%, whereas the baseline only reduces by 29.59% after the deduplication, meaning that the users only need to refer to 39.80% of programs on average. Furthermore, our analysis shows that top-10 ranked programs cover 29.95% of programs on average, indicating that the users can grasp 29.95% of solution approaches by referring to only 10 programs. The proposed approach shows the potential of reducing the learners' burden of referring to too many solutions and motivating them to learn a variety of better approaches.

Exploring the Robustness of Large Language Models for Solving Programming Problems

Jun 26, 2023

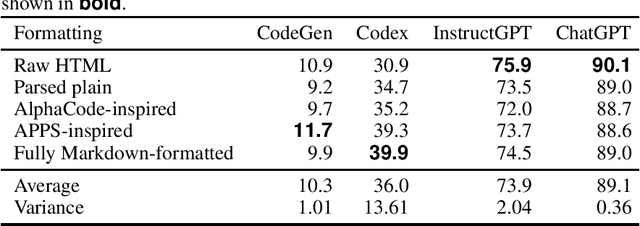

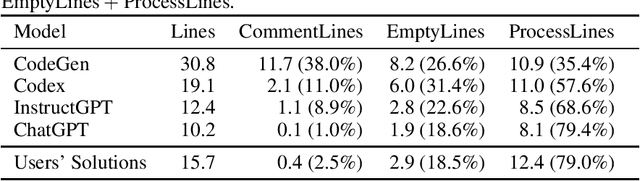

Abstract:Using large language models (LLMs) for source code has recently gained attention. LLMs, such as Transformer-based models like Codex and ChatGPT, have been shown to be highly capable of solving a wide range of programming problems. However, the extent to which LLMs understand problem descriptions and generate programs accordingly or just retrieve source code from the most relevant problem in training data based on superficial cues has not been discovered yet. To explore this research question, we conduct experiments to understand the robustness of several popular LLMs, CodeGen and GPT-3.5 series models, capable of tackling code generation tasks in introductory programming problems. Our experimental results show that CodeGen and Codex are sensitive to the superficial modifications of problem descriptions and significantly impact code generation performance. Furthermore, we observe that Codex relies on variable names, as randomized variables decrease the solved rate significantly. However, the state-of-the-art (SOTA) models, such as InstructGPT and ChatGPT, show higher robustness to superficial modifications and have an outstanding capability for solving programming problems. This highlights the fact that slight modifications to the prompts given to the LLMs can greatly affect code generation performance, and careful formatting of prompts is essential for high-quality code generation, while the SOTA models are becoming more robust to perturbations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge