Arvind Easwaran

Disentangled and Distilled Encoder for Out-of-Distribution Reasoning with Rademacher Guarantees

Dec 11, 2025

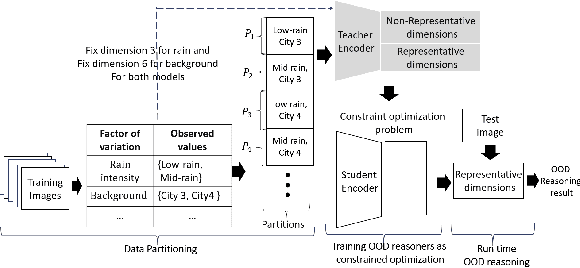

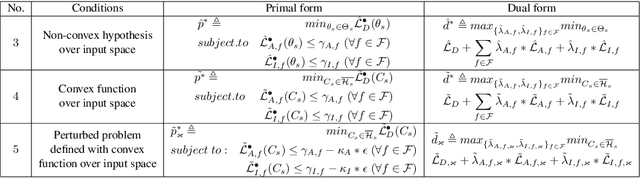

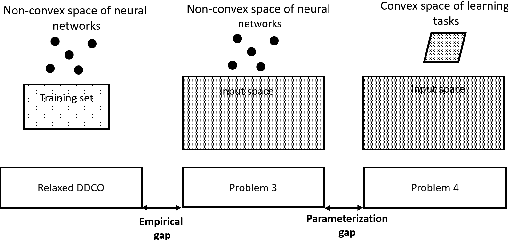

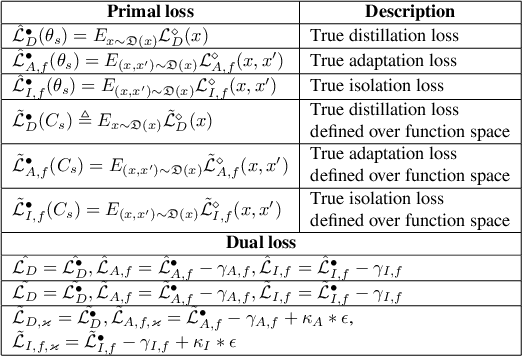

Abstract: Recently, the disentangled latent space of a variational autoencoder (VAE) has been used to reason about multi-label out-of-distribution (OOD) test samples that are derived from different distributions than training samples. Disentangled latent space means having one-to-many maps between latent dimensions and generative factors or important characteristics of an image. This paper proposes a disentangled distilled encoder (DDE) framework to decrease the OOD reasoner size for deployment on resource-constrained devices while preserving disentanglement. DDE formalizes student-teacher distillation for model compression as a constrained optimization problem while preserving disentanglement with disentanglement constraints. Theoretical guarantees for disentanglement during distillation based on Rademacher complexity are established. The approach is evaluated empirically by deploying the compressed model on an NVIDIA

DesCartes Builder: A Tool to Develop Machine-Learning Based Digital Twins

Aug 25, 2025

Abstract: Digital twins (DTs) are increasingly utilized to monitor, manage, and optimize complex systems across various domains, including civil engineering. A core requirement for an effective DT is to act as a fast, accurate, and maintainable surrogate of its physical counterpart, the physical twin (PT). To this end, machine learning (ML) is frequently employed to (i) construct real-time DT prototypes using efficient reduced-order models (ROMs) derived from high-fidelity simulations of the PT's nominal behavior, and (ii) specialize these prototypes into DT instances by leveraging historical sensor data from the target PT. Despite the broad applicability of ML, its use in DT engineering remains largely ad hoc. Indeed, while conventional ML pipelines often train a single model for a specific task, DTs typically require multiple, task- and domain-dependent models. Thus, a more structured approach is required to design DTs. In this paper, we introduce DesCartes Builder, an open-source tool to enable the systematic engineering of ML-based pipelines for real-time DT prototypes and DT instances. The tool leverages an open and flexible visual data flow paradigm to facilitate the specification, composition, and reuse of ML models. It also integrates a library of parameterizable core operations and ML algorithms tailored for DT design. We demonstrate the effectiveness and usability of DesCartes Builder through a civil engineering use case involving the design of a real-time DT prototype to predict the plastic strain of a structure.

Improving Reinforcement Learning Sample-Efficiency using Local Approximation

Jul 16, 2025

Abstract: In this study, we derive Probably Approximately Correct (PAC) bounds on the asymptotic sample-complexity for RL within the infinite-horizon Markov Decision Process (MDP) setting that are sharper than those in existing literature. The premise of our study is twofold: firstly, the further two states are from each other, transition-wise, the less relevant the value of the first state is when learning the $\epsilon$-optimal value of the second; secondly, the amount of 'effort', sample-complexity-wise, expended in learning the $\epsilon$-optimal value of a state is independent of the number of samples required to learn the $\epsilon$-optimal value of a second state that is a sufficient number of transitions away from the first. Conversely, states within each other's vicinity have values that are dependent on each other and will require a similar number of samples to learn. By approximating the original MDP using smaller MDPs constructed using subsets of the original's state-space, we are able to reduce the sample-complexity by a logarithmic factor to $O(SA \log A)$ timesteps, where $S$ and $A$ are the state and action space sizes. We are able to extend these results to an infinite-horizon, model-free setting by constructing a PAC-MDP algorithm with the aforementioned sample-complexity. We conclude by showing how significant the improvement is, comparing our algorithm against prior work in an experimental setting.

CRLLK: Constrained Reinforcement Learning for Lane Keeping in Autonomous Driving

Mar 28, 2025

Abstract: Lane keeping in autonomous driving systems requires scenario-specific weight tuning for different objectives. We formulate lane-keeping as a constrained reinforcement learning problem, where weight coefficients are automatically learned along with the policy, eliminating the need for scenario-specific tuning. Empirically, our approach outperforms traditional RL in efficiency and reliability. Additionally, real-world demonstrations validate its practical value for autonomous driving.

Guaranteeing Out-Of-Distribution Detection in Deep RL via Transition Estimation

Mar 07, 2025

Abstract: An issue concerning the use of deep reinforcement learning (RL) agents is whether they can be trusted to perform reliably when deployed, as training environments may not reflect real-life environments. Anticipating instances outside their training scope, learning-enabled systems are often equipped with out-of-distribution (OOD) detectors that alert when a trained system encounters a state it does not recognize or in which it exhibits uncertainty. Limited work exists on the problem of OOD detection within RL, and prior studies have not reached a consensus on the definition of OOD execution within the context of RL. By framing our problem using a Markov Decision Process, we assume there is a transition distribution mapping each state-action pair to another state with some probability. Based on this, we consider the following definition of OOD execution within RL: A transition is OOD if its probability during real-life deployment differs from the transition distribution encountered during training. As such, we utilize conditional variational autoencoders (CVAE) to approximate the transition dynamics of the training environment and implement a conformity-based detector using reconstruction loss that is able to guarantee OOD detection with a pre-determined confidence level. We evaluate our detector by adapting existing benchmarks and compare it with existing OOD detection models for RL.
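
The abstract does not spell out how the conformity-based detector is calibrated. One common way to obtain a pre-determined confidence level from a scalar score is split conformal calibration, sketched below under that assumption. The function names, the use of a held-out set of in-distribution reconstruction losses, and the miscoverage level `alpha` are illustrative choices, not details taken from the paper.

```python
import math


def conformal_threshold(calibration_scores, alpha):
    """Split-conformal threshold over in-distribution scores.

    calibration_scores: reconstruction losses measured on held-out
    in-distribution transitions (assumed available).
    alpha: target false-alarm rate, e.g. 0.05 for 95% confidence.
    """
    n = len(calibration_scores)
    if n == 0:
        raise ValueError("need at least one calibration score")
    # Rank of the finite-sample-corrected (1 - alpha) quantile.
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        # Too few calibration points to certify this alpha.
        return math.inf
    return sorted(calibration_scores)[rank - 1]


def is_ood(score, threshold):
    """Flag a transition whose reconstruction loss exceeds the threshold."""
    return score > threshold


if __name__ == "__main__":
    losses = [5, 1, 9, 3, 7, 2, 8, 4, 6]  # made-up calibration losses
    tau = conformal_threshold(losses, alpha=0.5)
    print(tau, is_ood(6, tau), is_ood(5, tau))
```

If calibration and test scores are exchangeable, an in-distribution transition is flagged with probability at most `alpha`, which is the kind of guarantee the abstract refers to.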

Adaptive Hierarchical Graph Cut for Multi-granularity Out-of-distribution Detection

Dec 20, 2024

Abstract: This paper focuses on a significant yet challenging task: out-of-distribution detection (OOD detection), which aims to distinguish and reject test samples with semantic shifts, so as to prevent models trained on in-distribution (ID) data from producing unreliable predictions. Although previous works have achieved decent success, they are ineffective for challenging real-world applications since these methods simply regard all unlabeled data as OOD data and ignore the case that different datasets have different label granularity. For example, "cat" on CIFAR-10 and "tabby cat" on Tiny-ImageNet share the same semantics but have different labels due to differing label granularity. To this end, in this paper, we propose a novel Adaptive Hierarchical Graph Cut network (AHGC) to deeply explore the semantic relationship between different images. Specifically, we construct a hierarchical KNN graph to evaluate the similarities between different images based on the cosine similarity. Based on the linkage and density information of the graph, we cut the graph into multiple subgraphs to integrate these semantics-similar samples. If the labeled percentage in a subgraph is larger than a threshold, we will assign the label with the highest percentage to unlabeled images. To further improve the model generalization, we augment each image into two augmentation versions, and maximize the similarity between the two versions. Finally, we leverage the similarity score for OOD detection. Extensive experiments on two challenging benchmarks (CIFAR-10 and CIFAR-100) illustrate that in representative cases, AHGC outperforms state-of-the-art OOD detection methods by 81.24% on CIFAR-100 and by 40.47% on CIFAR-10 in terms of "FPR95", which shows the effectiveness of our AHGC.
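
The label-assignment rule for a single subgraph can be illustrated with a minimal sketch. It assumes the subgraph has already been produced by the graph cut and is given as a list of labels, with `None` marking unlabeled images. The function name and the parameter `min_labeled_fraction` are hypothetical, and the abstract does not say how ties are handled.

```python
from collections import Counter


def propagate_labels(labels, min_labeled_fraction):
    """Assign the majority label to unlabeled members of one subgraph.

    labels: one entry per image in the subgraph, None if unlabeled.
    min_labeled_fraction: the threshold on the labeled fraction
    (hypothetical name; its value is not given in the abstract).
    """
    labeled = [label for label in labels if label is not None]
    # Leave the subgraph untouched unless enough of it is labeled.
    if not labels or len(labeled) / len(labels) <= min_labeled_fraction:
        return list(labels)
    # The label with the highest share among the labeled images.
    majority, _ = Counter(labeled).most_common(1)[0]
    return [majority if label is None else label for label in labels]


if __name__ == "__main__":
    print(propagate_labels(["cat", "cat", None, "dog"], 0.5))
    print(propagate_labels([None, None, "cat"], 0.5))
```

In the first call three of four images are labeled, so the unlabeled one receives "cat". In the second call only one of three is labeled, so nothing changes.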

Your Data Is Not Perfect: Towards Cross-Domain Out-of-Distribution Detection in Class-Imbalanced Data

Dec 09, 2024

Abstract: Previous OOD detection systems only focus on the semantic gap between ID and OOD samples. Besides the semantic gap, we are faced with two additional gaps: the domain gap between source and target domains, and the class-imbalance gap between different classes. In fact, similar objects from different domains should belong to the same class. In this paper, we introduce a realistic yet challenging setting: class-imbalanced cross-domain OOD detection (CCOD), which contains a well-labeled (but usually small) source set for training and conducts OOD detection on an unlabeled (but usually larger) target set for testing. We do not assume that the target domain contains only OOD classes or that it is class-balanced: the distribution among classes of the target dataset need not be the same as the source dataset. To tackle this challenging setting with an OOD detection system, we propose a novel uncertainty-aware adaptive semantic alignment (UASA) network based on a prototype-based alignment strategy. Specifically, we first build label-driven prototypes in the source domain and utilize these prototypes for target classification to close the domain gap. Rather than utilizing fixed thresholds for OOD detection, we generate adaptive sample-wise thresholds to handle the semantic gap. Finally, we conduct uncertainty-aware clustering to group semantically similar target samples to relieve the class-imbalance gap. Extensive experiments on three challenging benchmarks demonstrate that our proposed UASA outperforms state-of-the-art methods by a large margin.

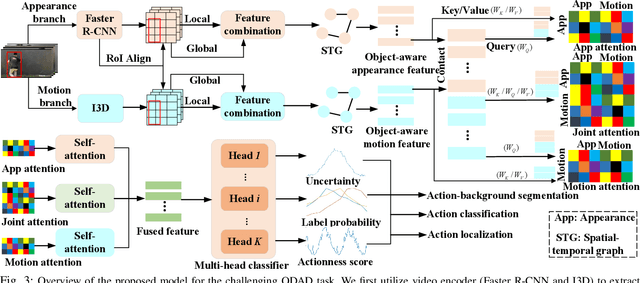

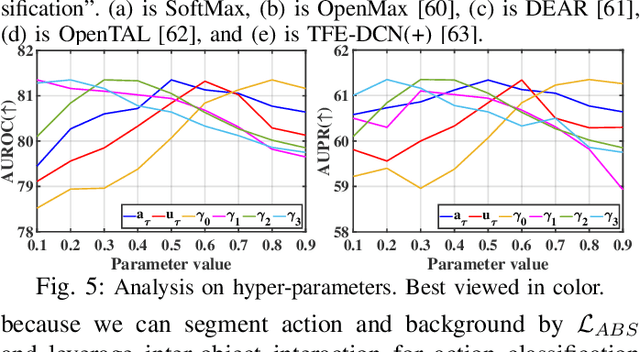

Uncertainty-Guided Appearance-Motion Association Network for Out-of-Distribution Action Detection

Sep 16, 2024

Abstract: Out-of-distribution (OOD) detection aims to detect and reject test samples with semantic shifts, to prevent models trained on in-distribution (ID) datasets from producing unreliable predictions. Existing works only extract appearance features on image datasets and cannot handle dynamic multimedia scenarios with much motion information. Therefore, we target a more realistic and challenging OOD detection task: OOD action detection (ODAD). Given an untrimmed video, ODAD first classifies the ID actions and recognizes the OOD actions, and then localizes ID and OOD actions. To this end, in this paper, we propose a novel Uncertainty-Guided Appearance-Motion Association Network (UAAN), which explores both appearance features and motion contexts to reason spatial-temporal inter-object interaction for ODAD. First, we design separate appearance and motion branches to extract corresponding appearance-oriented and motion-aspect object representations. In each branch, we construct a spatial-temporal graph to reason appearance-guided and motion-driven inter-object interaction. Then, we design an appearance-motion attention module to fuse the appearance and motion features for final action detection. Experimental results on two challenging datasets show that UAAN beats state-of-the-art methods by a significant margin, illustrating its effectiveness.

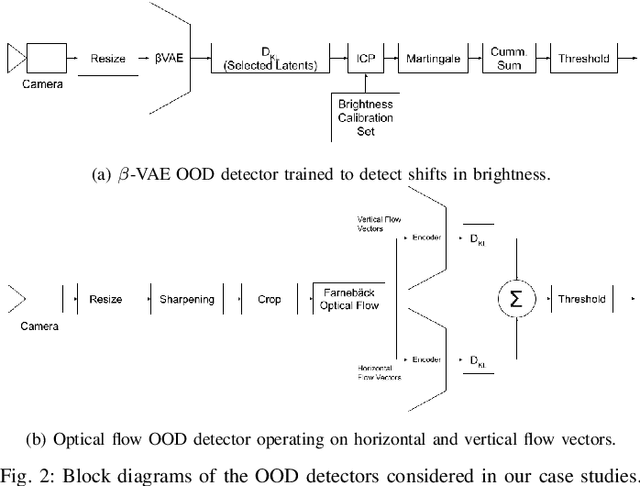

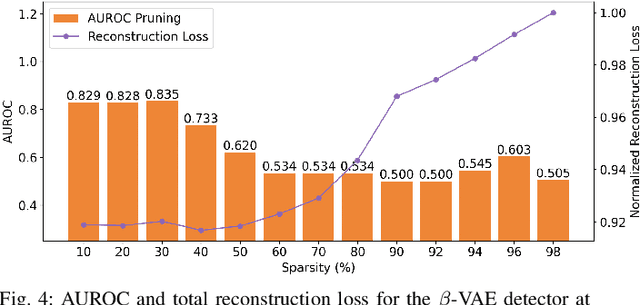

Compressing VAE-Based Out-of-Distribution Detectors for Embedded Deployment

Sep 02, 2024

Abstract: Out-of-distribution (OOD) detectors can act as safety monitors in embedded cyber-physical systems by identifying samples outside a machine learning model's training distribution to prevent potentially unsafe actions. However, OOD detectors are often implemented using deep neural networks, which makes it difficult to meet real-time deadlines on embedded systems with memory and power constraints. We consider the class of variational autoencoder (VAE) based OOD detectors where OOD detection is performed in latent space, and apply quantization, pruning, and knowledge distillation. These techniques have been explored for other deep models, but no work has considered their combined effect on latent space OOD detection. While these techniques increase the VAE's test loss, this does not correspond to a proportional decrease in OOD detection performance and we leverage this to develop lean OOD detectors capable of real-time inference on embedded CPUs and GPUs. We propose a design methodology that combines all three compression techniques and yields a significant decrease in memory and execution time while maintaining AUROC for a given OOD detector. We demonstrate this methodology with two existing OOD detectors on a Jetson Nano and reduce GPU and CPU inference time by 20% and 28% respectively while keeping AUROC within 5% of the baseline.

Vanilla Gradient Descent for Oblique Decision Trees

Aug 22, 2024

Abstract: Decision Trees (DTs) constitute one of the major highly non-linear AI models, valued, e.g., for their efficiency on tabular data. Learning accurate DTs is, however, complicated, especially for oblique DTs, and takes significant training time. Further, DTs suffer from overfitting, e.g., they proverbially "do not generalize" in regression tasks. Recently, some works proposed ways to make (oblique) DTs differentiable. This enables highly efficient gradient-descent algorithms to be used to learn DTs. It also enables generalizing capabilities by learning regressors at the leaves simultaneously with the decisions in the tree. Prior approaches to making DTs differentiable rely either on probabilistic approximations at the tree's internal nodes (soft DTs) or on approximations in gradient computation at the internal node (quantized gradient descent). In this work, we propose DTSemNet, a novel semantically equivalent and invertible encoding for (hard, oblique) DTs as Neural Networks (NNs), that uses standard vanilla gradient descent. Experiments across various classification and regression benchmarks show that oblique DTs learned using DTSemNet are more accurate than oblique DTs of similar size learned using state-of-the-art techniques. Further, DT training time is significantly reduced. We also experimentally demonstrate that DTSemNet can learn DT policies as efficiently as NN policies in the Reinforcement Learning (RL) setup with physical inputs (dimensions $\leq 32$). The code is available at https://github.com/CPS-research-group/dtsemnet.
