Arthur Feeney

Mondrian: Transformer Operators via Domain Decomposition

Jun 09, 2025Abstract:Operator learning enables data-driven modeling of partial differential equations (PDEs) by learning mappings between function spaces. However, scaling transformer-based operator models to high-resolution, multiscale domains remains a challenge due to the quadratic cost of attention and its coupling to discretization. We introduce \textbf{Mondrian}, transformer operators that decompose a domain into non-overlapping subdomains and apply attention over sequences of subdomain-restricted functions. Leveraging principles from domain decomposition, Mondrian decouples attention from discretization. Within each subdomain, it replaces standard layers with expressive neural operators, and attention across subdomains is computed via softmax-based inner products over functions. The formulation naturally extends to hierarchical windowed and neighborhood attention, supporting both local and global interactions. Mondrian achieves strong performance on Allen-Cahn and Navier-Stokes PDEs, demonstrating resolution scaling without retraining. These results highlight the promise of domain-decomposed attention for scalable and general-purpose neural operators.

Breaking Boundaries: Distributed Domain Decomposition with Scalable Physics-Informed Neural PDE Solvers

Aug 28, 2023Abstract:Mosaic Flow is a novel domain decomposition method designed to scale physics-informed neural PDE solvers to large domains. Its unique approach leverages pre-trained networks on small domains to solve partial differential equations on large domains purely through inference, resulting in high reusability. This paper presents an end-to-end parallelization of Mosaic Flow, combining data parallel training and domain parallelism for inference on large-scale problems. By optimizing the network architecture and data parallel training, we significantly reduce the training time for learning the Laplacian operator to minutes on 32 GPUs. Moreover, our distributed domain decomposition algorithm enables scalable inferences for solving the Laplace equation on domains 4096 times larger than the training domain, demonstrating strong scaling while maintaining accuracy on 32 GPUs. The reusability of Mosaic Flow, combined with the improved performance achieved through the distributed-memory algorithms, makes it a promising tool for modeling complex physical phenomena and accelerating scientific discovery.

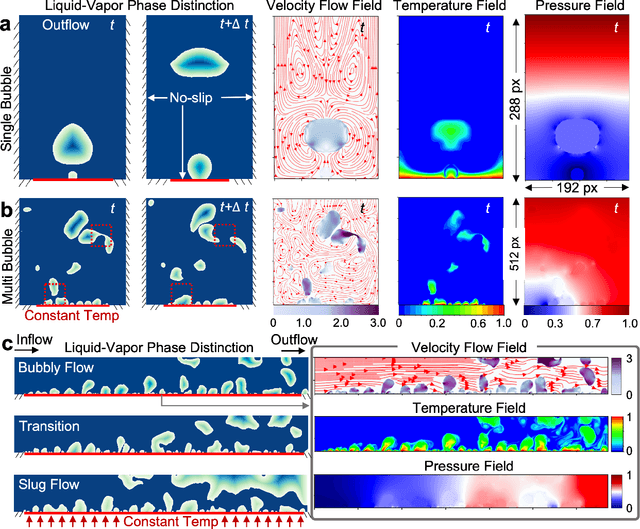

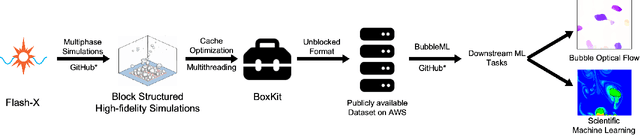

BubbleML: A Multi-Physics Dataset and Benchmarks for Machine Learning

Jul 27, 2023

Abstract:In the field of phase change phenomena, the lack of accessible and diverse datasets suitable for machine learning (ML) training poses a significant challenge. Existing experimental datasets are often restricted, with limited availability and sparse ground truth data, impeding our understanding of this complex multi-physics phenomena. To bridge this gap, we present the BubbleML Dataset(https://github.com/HPCForge/BubbleML) which leverages physics-driven simulations to provide accurate ground truth information for various boiling scenarios, encompassing nucleate pool boiling, flow boiling, and sub-cooled boiling. This extensive dataset covers a wide range of parameters, including varying gravity conditions, flow rates, sub-cooling levels, and wall superheat, comprising 51 simulations. BubbleML is validated against experimental observations and trends, establishing it as an invaluable resource for ML research. Furthermore, we showcase its potential to facilitate exploration of diverse downstream tasks by introducing two benchmarks: (a) optical flow analysis to capture bubble dynamics, and (b) operator networks for learning temperature dynamics. The BubbleML dataset and its benchmarks serve as a catalyst for advancements in ML-driven research on multi-physics phase change phenomena, enabling the development and comparison of state-of-the-art techniques and models.

Relation Matters in Sampling: A Scalable Multi-Relational Graph Neural Network for Drug-Drug Interaction Prediction

May 28, 2021

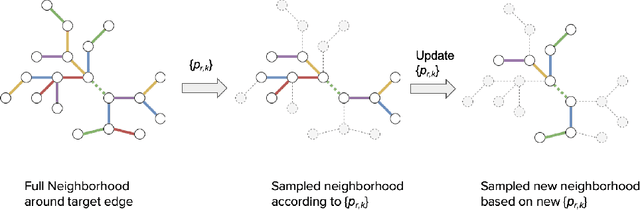

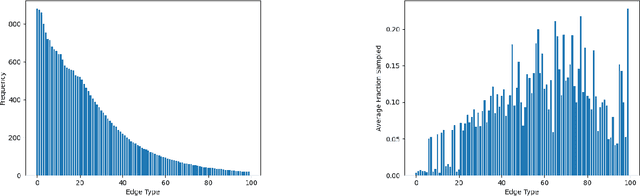

Abstract:Sampling is an established technique to scale graph neural networks to large graphs. Current approaches however assume the graphs to be homogeneous in terms of relations and ignore relation types, critically important in biomedical graphs. Multi-relational graphs contain various types of relations that usually come with variable frequency and have different importance for the problem at hand. We propose an approach to modeling the importance of relation types for neighborhood sampling in graph neural networks and show that we can learn the right balance: relation-type probabilities that reflect both frequency and importance. Our experiments on drug-drug interaction prediction show that state-of-the-art graph neural networks profit from relation-dependent sampling in terms of both accuracy and efficiency.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge