Arseny Skryagin

Answer Set Networks: Casting Answer Set Programming into Deep Learning

Dec 19, 2024

Abstract:Although Answer Set Programming (ASP) allows constraining neural-symbolic (NeSy) systems, its employment is hindered by the prohibitive costs of computing stable models and the CPU-bound nature of state-of-the-art solvers. To this end, we propose Answer Set Networks (ASN), a NeSy solver. Based on Graph Neural Networks (GNN), ASNs are a scalable approach to ASP-based Deep Probabilistic Logic Programming (DPPL). Specifically, we show how to translate ASPs into ASNs and demonstrate how ASNs can efficiently solve the encoded problem by leveraging GPU's batching and parallelization capabilities. Our experimental evaluations demonstrate that ASNs outperform state-of-the-art CPU-bound NeSy systems on multiple tasks. Simultaneously, we make the following two contributions based on the strengths of ASNs. Namely, we are the first to show the finetuning of Large Language Models (LLM) with DPPLs, employing ASNs to guide the training with logic. Further, we show the "constitutional navigation" of drones, i.e., encoding public aviation laws in an ASN for routing Unmanned Aerial Vehicles in uncertain environments.

Graph Neural Networks Need Cluster-Normalize-Activate Modules

Dec 05, 2024Abstract:Graph Neural Networks (GNNs) are non-Euclidean deep learning models for graph-structured data. Despite their successful and diverse applications, oversmoothing prohibits deep architectures due to node features converging to a single fixed point. This severely limits their potential to solve complex tasks. To counteract this tendency, we propose a plug-and-play module consisting of three steps: Cluster-Normalize-Activate (CNA). By applying CNA modules, GNNs search and form super nodes in each layer, which are normalized and activated individually. We demonstrate in node classification and property prediction tasks that CNA significantly improves the accuracy over the state-of-the-art. Particularly, CNA reaches 94.18% and 95.75% accuracy on Cora and CiteSeer, respectively. It further benefits GNNs in regression tasks as well, reducing the mean squared error compared to all baselines. At the same time, GNNs with CNA require substantially fewer learnable parameters than competing architectures.

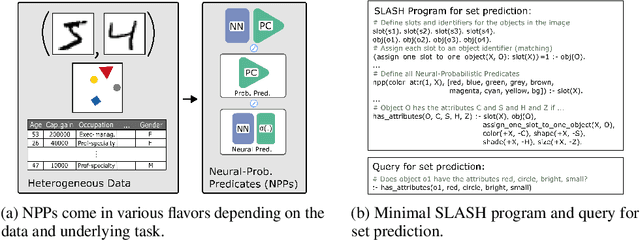

Scalable Neural-Probabilistic Answer Set Programming

Jun 14, 2023Abstract:The goal of combining the robustness of neural networks and the expressiveness of symbolic methods has rekindled the interest in Neuro-Symbolic AI. Deep Probabilistic Programming Languages (DPPLs) have been developed for probabilistic logic programming to be carried out via the probability estimations of deep neural networks. However, recent SOTA DPPL approaches allow only for limited conditional probabilistic queries and do not offer the power of true joint probability estimation. In our work, we propose an easy integration of tractable probabilistic inference within a DPPL. To this end, we introduce SLASH, a novel DPPL that consists of Neural-Probabilistic Predicates (NPPs) and a logic program, united via answer set programming (ASP). NPPs are a novel design principle allowing for combining all deep model types and combinations thereof to be represented as a single probabilistic predicate. In this context, we introduce a novel $+/-$ notation for answering various types of probabilistic queries by adjusting the atom notations of a predicate. To scale well, we show how to prune the stochastically insignificant parts of the (ground) program, speeding up reasoning without sacrificing the predictive performance. We evaluate SLASH on a variety of different tasks, including the benchmark task of MNIST addition and Visual Question Answering (VQA).

SLASH: Embracing Probabilistic Circuits into Neural Answer Set Programming

Nov 01, 2021

Abstract:The goal of combining the robustness of neural networks and the expressivity of symbolic methods has rekindled the interest in neuro-symbolic AI. Recent advancements in neuro-symbolic AI often consider specifically-tailored architectures consisting of disjoint neural and symbolic components, and thus do not exhibit desired gains that can be achieved by integrating them into a unifying framework. We introduce SLASH -- a novel deep probabilistic programming language (DPPL). At its core, SLASH consists of Neural-Probabilistic Predicates (NPPs) and logical programs which are united via answer set programming. The probability estimates resulting from NPPs act as the binding element between the logical program and raw input data, thereby allowing SLASH to answer task-dependent logical queries. This allows SLASH to elegantly integrate the symbolic and neural components in a unified framework. We evaluate SLASH on the benchmark data of MNIST addition as well as novel tasks for DPPLs such as missing data prediction and set prediction with state-of-the-art performance, thereby showing the effectiveness and generality of our method.

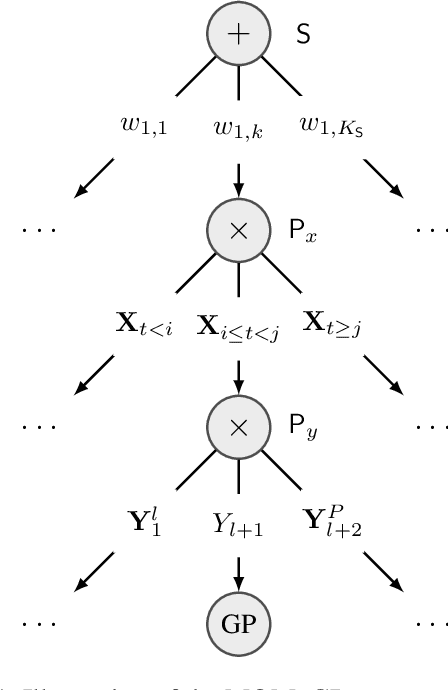

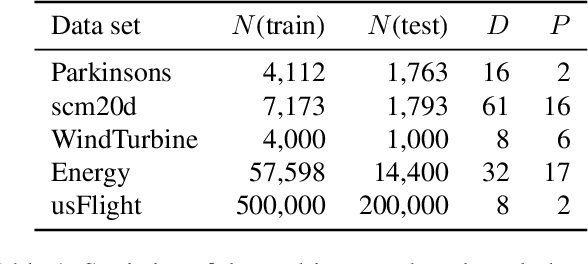

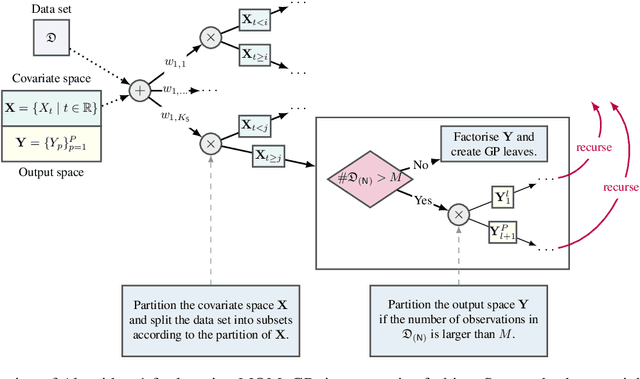

Leveraging Probabilistic Circuits for Nonparametric Multi-Output Regression

Jun 16, 2021

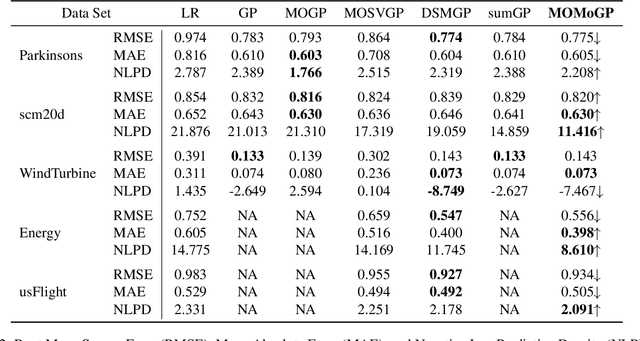

Abstract:Inspired by recent advances in the field of expert-based approximations of Gaussian processes (GPs), we present an expert-based approach to large-scale multi-output regression using single-output GP experts. Employing a deeply structured mixture of single-output GPs encoded via a probabilistic circuit allows us to capture correlations between multiple output dimensions accurately. By recursively partitioning the covariate space and the output space, posterior inference in our model reduces to inference on single-output GP experts, which only need to be conditioned on a small subset of the observations. We show that inference can be performed exactly and efficiently in our model, that it can capture correlations between output dimensions and, hence, often outperforms approaches that do not incorporate inter-output correlations, as demonstrated on several data sets in terms of the negative log predictive density.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge