Anirvan Dutta

Adaptive Manipulation Potential and Haptic Estimation for Tool-Mediated Interaction

Mar 11, 2026

Abstract:Achieving human-level dexterity in contact-rich, tool-mediated manipulation remains a significant challenge due to visual occlusion and the underdetermined nature of haptic sensing. This paper introduces a parameterized Equilibrium Manifold (EM) as a unified representation for tool-mediated interaction, and develops a closed-loop framework that integrates haptic estimation, online planning, and adaptive stiffness control. We establish a physical-geometric duality using an adaptive manipulation potential incorporating a differentiable contact model, which induces the manifold's geometric structure and ensures that complex physical interactions are encapsulated as continuous operations on the EM. Within this framework, we reformulate haptic estimation as a manifold parameter estimation problem. Specifically, a hybrid inference strategy (haptic SLAM) is employed in which discrete object shapes are classified via particle filtering, while the continuous object pose is estimated using analytical gradients for efficient optimization. By continuously updating the parameters of the manipulation potential, the framework dynamically reshapes the induced EM to guide online trajectory replanning and implement uncertainty-aware impedance control, thereby closing the perception-action loop. The system is validated through simulation and over 260 real-world screw-loosening trials. Experimental results demonstrate robust identification and manipulation success in standard scenarios while maintaining accurate tracking. Furthermore, ablation studies confirm that haptic SLAM and uncertainty-aware stiffness modulation outperform fixed impedance baselines, effectively preventing jamming during tight tolerance interactions.

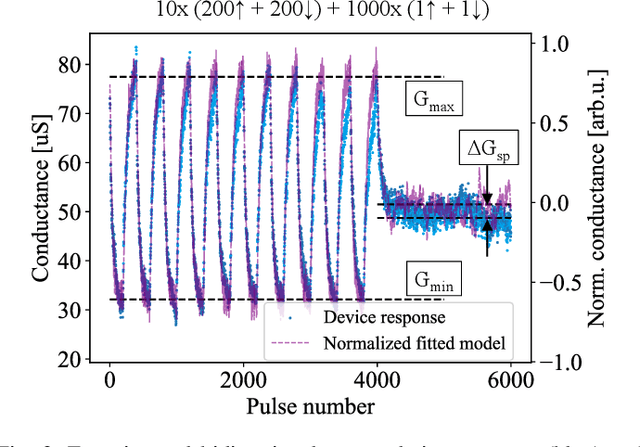

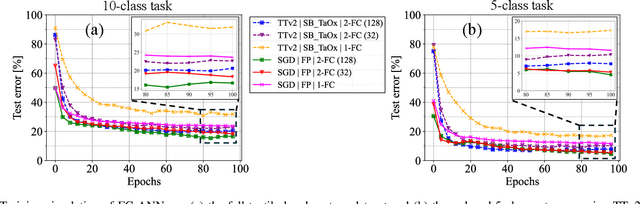

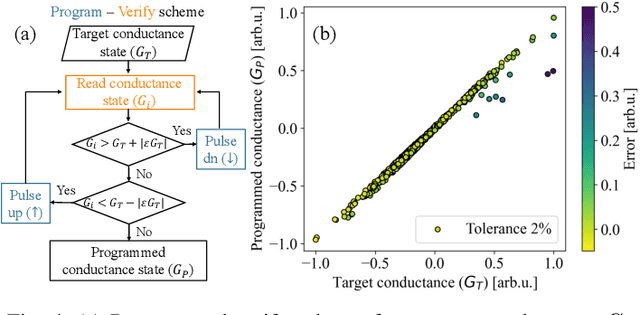

Edge Training and Inference with Analog ReRAM Technology for Hand Gesture Recognition

Feb 25, 2025

Abstract:Tactile hand gesture recognition is a crucial task for user control in the automotive sector, where Human-Machine Interactions (HMI) demand low latency and high energy efficiency. This study addresses the challenges of power-constrained edge training and inference by utilizing analog Resistive Random Access Memory (ReRAM) technology in conjunction with a real tactile hand gesture dataset. By optimizing the input space through a feature engineering strategy, we avoid relying on large-scale crossbar arrays, making the system more suitable for edge deployment. Through realistic hardware-aware simulations that account for device non-idealities derived from experimental data, we demonstrate the functionality of our ReRAM-based analog in-memory computing for on-chip training, utilizing the state-of-the-art Tiki-Taka algorithm. Furthermore, we validate a classification accuracy of approximately 91.4% for post-deployment inference of hand gestures. The results highlight the potential of analog ReRAM technology and crossbar architecture with fully parallelized matrix computations for real-time HMI systems at the edge.

Predictive Visuo-Tactile Interactive Perception Framework for Object Properties Inference

Nov 13, 2024

Abstract:Interactive exploration of the unknown physical properties of objects such as stiffness, mass, center of mass, friction coefficient, and shape is crucial for autonomous robotic systems operating continuously in unstructured environments. Precise identification of these properties is essential to manipulate objects in a stable and controlled way, and is also required to anticipate the outcomes of (prehensile or non-prehensile) manipulation actions such as pushing, pulling, and lifting. Our study focuses on autonomously inferring the physical properties of a diverse set of homogeneous, heterogeneous, and articulated objects utilizing a robotic system equipped with vision and tactile sensors. We propose a novel predictive perception framework for identifying the properties of these objects by leveraging versatile exploratory actions: non-prehensile pushing and prehensile pulling. As part of the framework, we propose a novel active shape perception approach to seamlessly initiate exploration. Our innovative dual differentiable filtering with Graph Neural Networks learns the object-robot interaction and performs consistent inference of indirectly observable time-invariant object properties. In addition, we formulate an $N$-step information gain approach to actively select the most informative actions for efficient learning and inference. Extensive real-robot experiments with planar objects show that our predictive perception framework outperforms the state-of-the-art baseline, and we demonstrate the framework in three major applications: i) object tracking, ii) goal-driven tasks, and iii) detection of changes in the environment.

Advancements in Tactile Hand Gesture Recognition for Enhanced Human-Machine Interaction

May 27, 2024

Abstract:Motivated by the growing interest in enhancing intuitive physical Human-Machine Interaction (HRI/HVI), this study aims to propose a robust tactile hand gesture recognition system. We performed a comprehensive evaluation of different hand gesture recognition approaches for a large area tactile sensing interface (touch interface) constructed from conductive textiles. Our evaluation encompassed traditional feature engineering methods, as well as contemporary deep learning techniques capable of real-time interpretation of a range of hand gestures, accommodating variations in hand sizes, movement velocities, applied pressure levels, and interaction points. Our extensive analysis of the various methods makes a significant contribution to tactile-based gesture recognition in the field of human-machine interaction.

Visuo-Tactile based Predictive Cross Modal Perception for Object Exploration in Robotics

May 23, 2024

Abstract:Autonomously exploring the unknown physical properties of novel objects such as stiffness, mass, center of mass, friction coefficient, and shape is crucial for autonomous robotic systems operating continuously in unstructured environments. We introduce a novel visuo-tactile based predictive cross-modal perception framework where initial visual observations (shape) aid in obtaining an initial prior over the object properties (mass). The initial prior improves the efficiency of the object property estimation, which is autonomously inferred via interactive non-prehensile pushing and using a dual filtering approach. The inferred properties are then used to efficiently enhance the predictive capability of the cross-modal function by using a human-inspired 'surprise' formulation. We evaluated our proposed framework in a real-robot scenario, demonstrating superior performance.

Push to know! -- Visuo-Tactile based Active Object Parameter Inference with Dual Differentiable Filtering

Aug 02, 2023

Abstract:For robotic systems to interact with objects in dynamic environments, it is essential to perceive the physical properties of the objects such as shape, friction coefficient, mass, center of mass, and inertia. This not only eases the selection of manipulation actions but also ensures the task is performed as desired. However, estimating the physical properties of objects, especially novel ones, is challenging with either vision or tactile sensing. In this work, we propose a novel framework to estimate key object parameters through non-prehensile manipulation with vision and tactile sensing. As part of this framework, our active dual differentiable filtering (ADDF) approach learns the object-robot interaction during non-prehensile object pushes to infer the object's parameters. Our proposed method enables the robotic system to employ vision and tactile information to interactively explore a novel object via non-prehensile object pushes. The proposed N-step active formulation within the differentiable filtering facilitates efficient learning of the object-robot interaction model, and efficient inference, by selecting the next best exploratory push actions (where to push and how to push). We extensively evaluated our framework in simulation and real-robot scenarios, where it outperformed the state-of-the-art baseline.

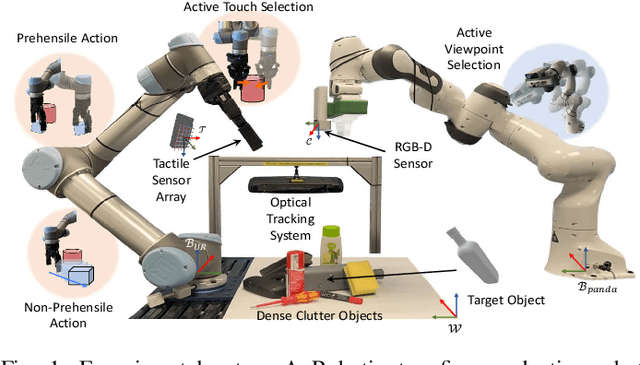

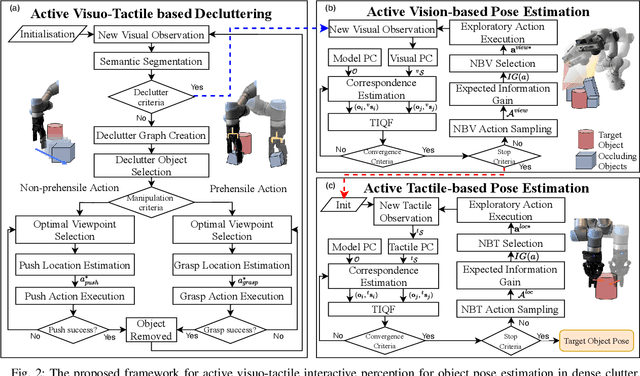

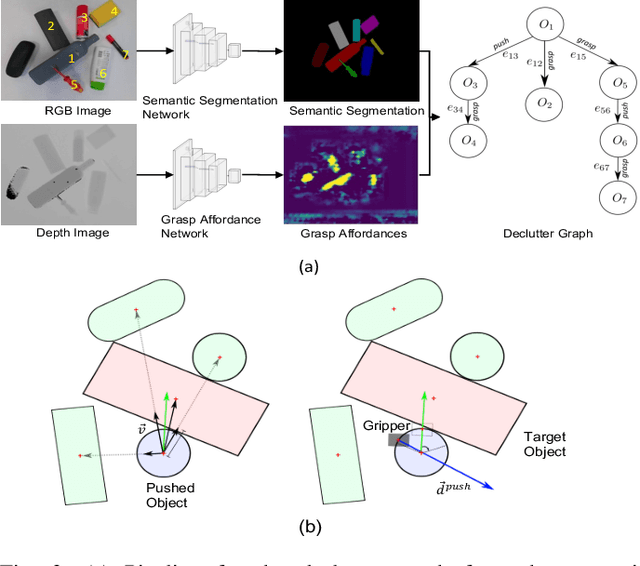

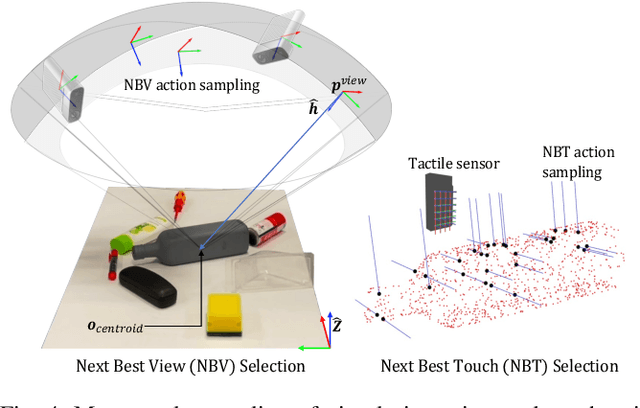

Active Visuo-Tactile Interactive Robotic Perception for Accurate Object Pose Estimation in Dense Clutter

Feb 04, 2022

Abstract:This work presents a novel active visuo-tactile based framework for robotic systems to accurately estimate the pose of objects in dense cluttered environments. The scene representation is derived using a novel declutter graph (DG), which describes the relationships among objects in the scene for decluttering by leveraging semantic segmentation and grasp affordance networks. The graph formulation allows robots to efficiently declutter the workspace by autonomously selecting the next best object to remove and the optimal action (prehensile or non-prehensile) to perform. Furthermore, we propose a novel translation-invariant Quaternion filter (TIQF) for active vision and active tactile based pose estimation. Both active visual and active tactile points are selected by maximizing the expected information gain. We evaluate our proposed framework on a system with two robots coordinating on randomized scenes of densely cluttered objects and perform ablation studies with static vision and active vision based estimation, before and after decluttering, as baselines. Our proposed active visuo-tactile interactive perception framework shows up to 36% improvement in pose accuracy compared to the active vision baseline.
