Active Visuo-Tactile Interactive Robotic Perception for Accurate Object Pose Estimation in Dense Clutter


Feb 04, 2022

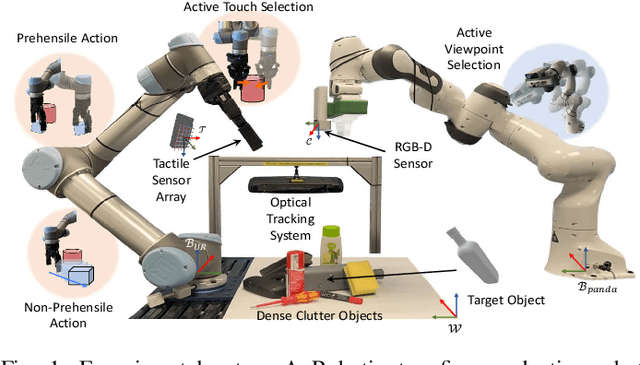

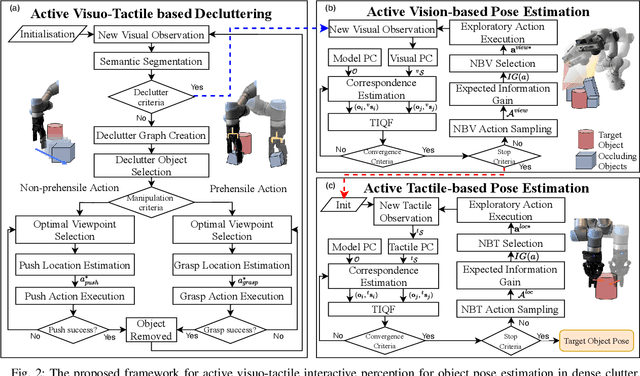

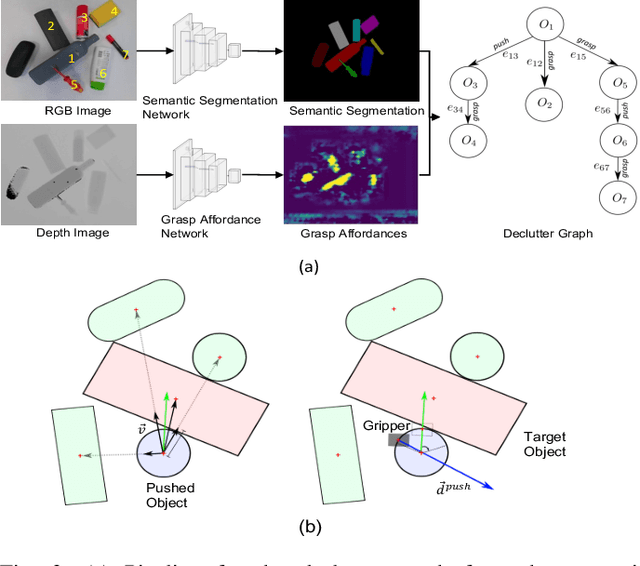

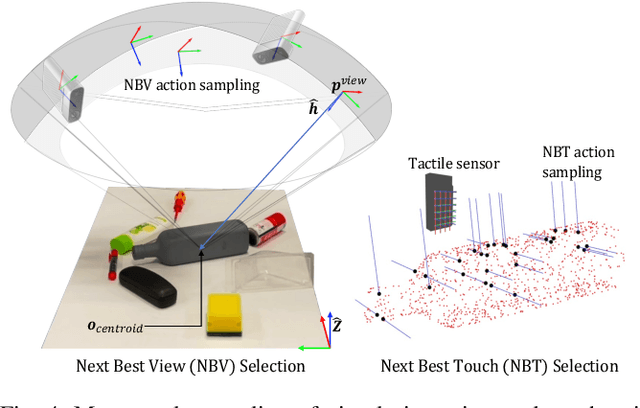

This work presents a novel active visuo-tactile framework for robotic systems to accurately estimate the pose of objects in densely cluttered environments. The scene representation is derived using a novel declutter graph (DG), which describes the relationships among objects in the scene for decluttering by leveraging semantic segmentation and grasp affordance networks. The graph formulation allows robots to efficiently declutter the workspace by autonomously selecting the next best object to remove and the optimal action (prehensile or non-prehensile) to perform. Furthermore, we propose a novel translation-invariant Quaternion filter (TIQF) for active-vision and active-tactile pose estimation. Both active visual and active tactile points are selected by maximizing the expected information gain. We evaluate our proposed framework on a system with two robots coordinating on randomized scenes of densely cluttered objects, and perform ablation studies with static-vision and active-vision estimation before and after decluttering as baselines. Our proposed active visuo-tactile interactive perception framework shows up to a 36% improvement in pose accuracy compared to the active vision baseline.
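The selection of the next visual or tactile point by maximizing expected information gain can be illustrated with a minimal sketch. This is not the paper's TIQF formulation: it assumes a discrete belief over a handful of pose hypotheses and a known measurement likelihood table per candidate action, and the names `expected_information_gain` and `select_next_action` are hypothetical, introduced here only for illustration.

```python
import numpy as np


def entropy(p):
    """Shannon entropy (in nats) of a discrete distribution."""
    p = np.asarray(p, dtype=float)
    nz = p[p > 0]
    return float(-np.sum(nz * np.log(nz)))


def expected_information_gain(prior, likelihood):
    """Expected entropy reduction of the belief after one measurement.

    prior[h]         : current belief over pose hypotheses (assumed discrete)
    likelihood[h, z] : P(z | h) for this candidate action (assumed known)
    """
    prior = np.asarray(prior, dtype=float)
    likelihood = np.asarray(likelihood, dtype=float)
    joint = prior[:, None] * likelihood      # P(h, z)
    p_z = joint.sum(axis=0)                  # marginal P(z)
    gain = entropy(prior)
    for z, pz in enumerate(p_z):
        if pz > 0:
            posterior = joint[:, z] / pz     # Bayes update for outcome z
            gain -= pz * entropy(posterior)
    return gain


def select_next_action(prior, candidates):
    """Return the candidate with the largest expected information gain."""
    gains = {name: expected_information_gain(prior, lik)
             for name, lik in candidates.items()}
    best = max(gains, key=gains.get)
    return best, gains


# Illustrative data only: two pose hypotheses, two candidate sensing actions.
prior = [0.5, 0.5]
candidates = {
    "touch_edge": np.array([[0.9, 0.1], [0.1, 0.9]]),  # discriminative
    "touch_face": np.array([[0.5, 0.5], [0.5, 0.5]]),  # uninformative
}
best, gains = select_next_action(prior, candidates)
```

In this toy setting the discriminative action is preferred because its outcomes separate the hypotheses, while the uninformative action leaves the belief unchanged. A continuous pose filter would replace the likelihood table with its own measurement model.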
