Aniketh Ramesh

An Exploratory Study on Human-Robot Interaction using Semantics-based Situational Awareness

Jul 23, 2025

Abstract:In this paper, we investigate the impact of high-level semantics (evaluation of the environment) on Human-Robot Teams (HRT) and Human-Robot Interaction (HRI) in the context of mobile robot deployments. Although semantics has been widely researched in AI, how high-level semantics can benefit the HRT paradigm is underexplored, often fuzzy, and intractable. We applied a semantics-based framework that could reveal different indicators of the environment (i.e. how much semantic information exists) in a mock-up disaster response mission. In such missions, semantics are crucial as the HRT should handle complex situations and respond quickly with correct decisions, where humans might have a high workload and stress. Especially when human operators need to shift their attention between robots and other tasks, they will struggle to build Situational Awareness (SA) quickly. The experiment suggests that the presented semantics: 1) alleviate the perceived workload of human operators; 2) increase the operator's trust in the SA; and 3) help to reduce the reaction time in switching the level of autonomy when needed. Additionally, we find that participants with higher trust in the system are encouraged by high-level semantics to use teleoperation mode more.

A Framework for Semantics-based Situational Awareness during Mobile Robot Deployments

Feb 19, 2025

Abstract:Deployment of robots into hazardous environments typically involves a "Human-Robot Teaming" (HRT) paradigm, in which a human supervisor interacts with a remotely operating robot inside the hazardous zone. Situational Awareness (SA) is vital for enabling HRT, to support navigation, planning, and decision-making. This paper explores issues of higher-level "semantic" information and understanding in SA. In semi-autonomous, or variable-autonomy paradigms, different types of semantic information may be important, in different ways, for both the human operator and an autonomous agent controlling the robot. We propose a generalizable framework for acquiring and combining multiple modalities of semantic-level SA during remote deployments of mobile robots. We demonstrate the framework with an example application of search and rescue (SAR) in disaster response robotics. We propose a set of "environment semantic indicators" that can reflect a variety of different types of semantic information, e.g. indicators of risk, or signs of human activity, as the robot encounters different scenes. Based on these indicators, we propose a metric to describe the overall situation of the environment called "Situational Semantic Richness (SSR)". This metric combines multiple semantic indicators to summarise the overall situation. The SSR indicates if an information-rich and complex situation has been encountered, which may require advanced reasoning for robots and humans and hence the attention of the expert human operator. The framework is tested on a Jackal robot in a mock-up disaster response environment. Experimental results demonstrate that the proposed semantic indicators are sensitive to changes in different modalities of semantic information in different scenes, and the SSR metric reflects overall semantic changes in the situations encountered.

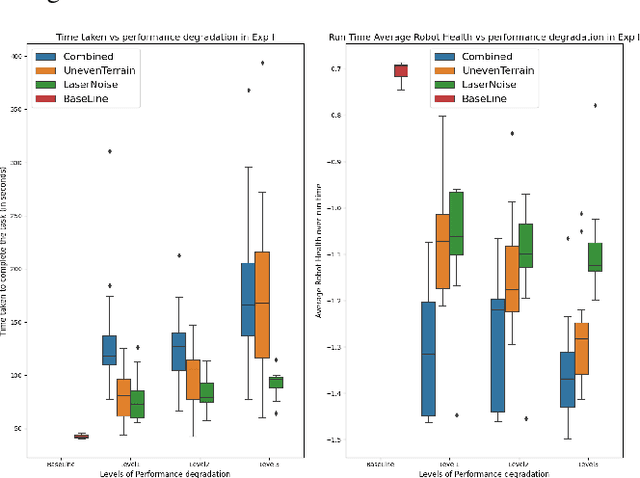

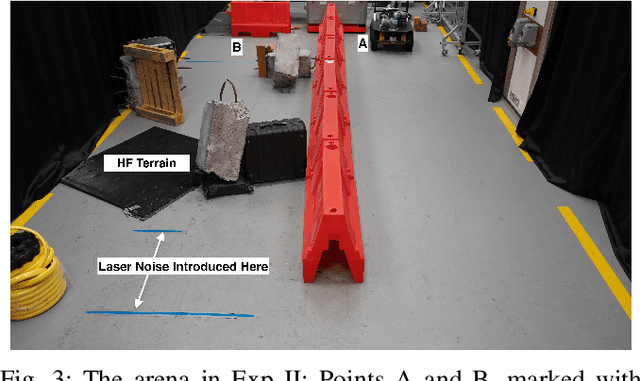

Robot Health Indicator: A Visual Cue to Improve Level of Autonomy Switching Systems

Mar 12, 2023

Abstract:Using different Levels of Autonomy (LoA), a human operator can vary the extent of control they have over a robot's actions. LoAs enable operators to mitigate a robot's performance degradation or limitations in its autonomous capabilities. However, LoA regulation and other tasks may often overload an operator's cognitive abilities. Inspired by video game user interfaces, we study if adding a 'Robot Health Bar' to the robot control UI can reduce the cognitive demand and perceptual effort required for LoA regulation while promoting trust and transparency. This Health Bar uses the robot vitals and robot health framework to quantify and present runtime performance degradation in robots. Results from our pilot study indicate that when using a health bar, operators used manual control more to minimise the risk of robot failure during high performance degradation. It also gave us insights and lessons to inform subsequent experiments on human-robot teaming.

A Hierarchical Variable Autonomy Mixed-Initiative Framework for Human-Robot Teaming in Mobile Robotics

Nov 25, 2022

Abstract:This paper presents a Mixed-Initiative (MI) framework for addressing the problem of control authority transfer between a remote human operator and an AI agent when cooperatively controlling a mobile robot. Our Hierarchical Expert-guided Mixed-Initiative Control Switcher (HierEMICS) leverages information on the human operator's state and intent. The control switching policies are based on a criticality hierarchy. An experimental evaluation was conducted in a high-fidelity simulated disaster response and remote inspection scenario, comparing HierEMICS with a state-of-the-art Expert-guided Mixed-Initiative Control Switcher (EMICS) in the context of mobile robot navigation. Results suggest that HierEMICS reduces conflicts for control between the human and the AI agent, which is a fundamental challenge in both the MI control paradigm and the related shared control paradigm. Additionally, we provide statistically significant evidence of improved navigational safety (i.e., fewer collisions), LoA switching efficiency, and conflict for control reduction.

Robot Vitals and Robot Health: Towards Systematically Quantifying Runtime Performance Degradation in Robots Under Adverse Conditions

Jul 04, 2022

Abstract:This paper addresses the problem of automatically detecting and quantifying performance degradation in remote mobile robots during task execution. A robot may encounter a variety of uncertainties and adversities during task execution, which can impair its ability to carry out tasks effectively and cause its performance to degrade. Such situations can be mitigated or averted by timely detection and intervention (e.g., by a remote human supervisor taking over control in teleoperation mode). Inspired by patient triaging systems in hospitals, we introduce the framework of "robot vitals" for estimating overall "robot health". A robot's vitals are a set of indicators that estimate the extent of performance degradation faced by a robot at a given point in time. Robot health is a metric that combines robot vitals into a single scalar value estimate of performance degradation. Experiments, both in simulation and on a real mobile robot, demonstrate that the proposed robot vitals and robot health can be used effectively to estimate robot performance degradation during runtime.
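The abstract above describes robot health as a single scalar that combines several robot vitals, but it does not give the combination rule. As a rough illustration only, the sketch below assumes each vital is already normalised to a degradation score in [0, 1] and combines them with a weighted mean. The function name `robot_health`, the vital names, the weights, and the "1.0 means healthy" convention are all hypothetical and are not taken from the paper.

```python
from typing import Mapping, Optional


def robot_health(
    vitals: Mapping[str, float],
    weights: Optional[Mapping[str, float]] = None,
) -> float:
    """Combine per-vital degradation scores into one scalar in [0, 1].

    ASSUMPTION: each vital is a degradation score in [0, 1], where 0 means
    no degradation. The weighted mean used here is an illustrative choice,
    not the formula from the paper. Returns 1.0 for a fully healthy robot
    and 0.0 for a fully degraded one (hypothetical convention).
    """
    if not vitals:
        raise ValueError("at least one vital is required")
    if weights is None:
        # Default: every vital contributes equally.
        weights = {name: 1.0 for name in vitals}

    total_weight = sum(weights[name] for name in vitals)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")

    # Clamp each score to [0, 1] before averaging so that a single
    # out-of-range reading cannot push the result outside [0, 1].
    degradation = sum(
        weights[name] * min(max(score, 0.0), 1.0)
        for name, score in vitals.items()
    ) / total_weight
    return 1.0 - degradation


# Hypothetical vitals; the names are placeholders, not the paper's indicators.
example_vitals = {"localisation_error": 0.0, "velocity_error": 1.0}
example_health = robot_health(example_vitals)
```

A scalar of this kind is what a supervisor interface could threshold or display to prompt a switch to teleoperation. How the vitals are actually measured and weighted is left to the paper.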
