Tianshu Ruan

An Exploratory Study on Human-Robot Interaction using Semantics-based Situational Awareness

Jul 23, 2025Abstract:In this paper, we investigate the impact of high-level semantics (evaluation of the environment) on Human-Robot Teams (HRT) and Human-Robot Interaction (HRI) in the context of mobile robot deployments. Although semantics has been widely researched in AI, how high-level semantics can benefit the HRT paradigm is underexplored, often fuzzy, and intractable. We applied a semantics-based framework that could reveal different indicators of the environment (i.e. how much semantic information exists) in a mock-up disaster response mission. In such missions, semantics are crucial as the HRT should handle complex situations and respond quickly with correct decisions, where humans might have a high workload and stress. Especially when human operators need to shift their attention between robots and other tasks, they will struggle to build Situational Awareness (SA) quickly. The experiment suggests that the presented semantics: 1) alleviate the perceived workload of human operators; 2) increase the operator's trust in the SA; and 3) help to reduce the reaction time in switching the level of autonomy when needed. Additionally, we find that participants with higher trust in the system are encouraged by high-level semantics to use teleoperation mode more.

A Framework for Semantics-based Situational Awareness during Mobile Robot Deployments

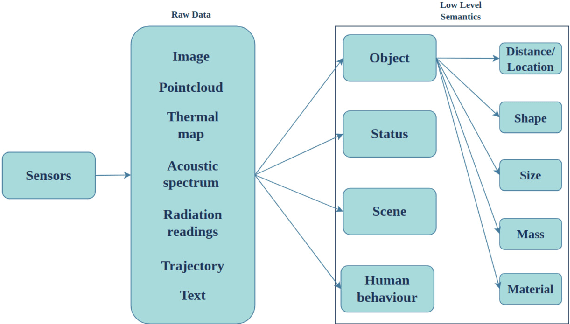

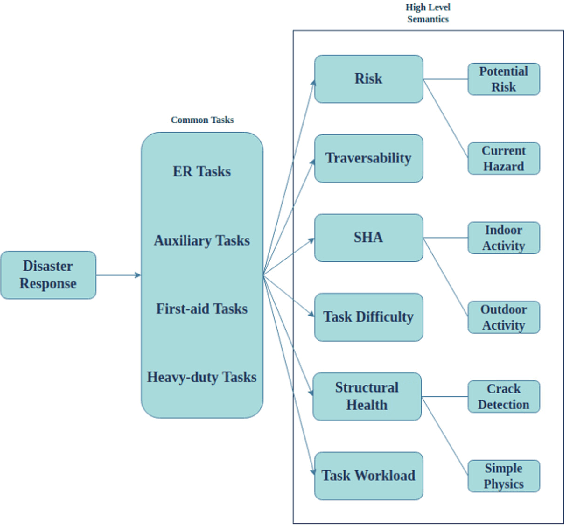

Feb 19, 2025Abstract:Deployment of robots into hazardous environments typically involves a ``Human-Robot Teaming'' (HRT) paradigm, in which a human supervisor interacts with a remotely operating robot inside the hazardous zone. Situational Awareness (SA) is vital for enabling HRT, to support navigation, planning, and decision-making. This paper explores issues of higher-level ``semantic'' information and understanding in SA. In semi-autonomous, or variable-autonomy paradigms, different types of semantic information may be important, in different ways, for both the human operator and an autonomous agent controlling the robot. We propose a generalizable framework for acquiring and combining multiple modalities of semantic-level SA during remote deployments of mobile robots. We demonstrate the framework with an example application of search and rescue (SAR) in disaster response robotics. We propose a set of ``environment semantic indicators" that can reflect a variety of different types of semantic information, e.g. indicators of risk, or signs of human activity, as the robot encounters different scenes. Based on these indicators, we propose a metric to describe the overall situation of the environment called ``Situational Semantic Richness (SSR)". This metric combines multiple semantic indicators to summarise the overall situation. The SSR indicates if an information-rich and complex situation has been encountered, which may require advanced reasoning for robots and humans and hence the attention of the expert human operator. The framework is tested on a Jackal robot in a mock-up disaster response environment. Experimental results demonstrate that the proposed semantic indicators are sensitive to changes in different modalities of semantic information in different scenes, and the SSR metric reflects overall semantic changes in the situations encountered.

A Hierarchical Variable Autonomy Mixed-Initiative Framework for Human-Robot Teaming in Mobile Robotics

Nov 25, 2022

Abstract:This paper presents a Mixed-Initiative (MI) framework for addressing the problem of control authority transfer between a remote human operator and an AI agent when cooperatively controlling a mobile robot. Our Hierarchical Expert-guided Mixed-Initiative Control Switcher (HierEMICS) leverages information on the human operator's state and intent. The control switching policies are based on a criticality hierarchy. An experimental evaluation was conducted in a high-fidelity simulated disaster response and remote inspection scenario, comparing HierEMICS with a state-of-the-art Expert-guided Mixed-Initiative Control Switcher (EMICS) in the context of mobile robot navigation. Results suggest that HierEMICS reduces conflicts for control between the human and the AI agent, which is a fundamental challenge in both the MI control paradigm and also in the related shared control paradigm. Additionally, we provide statistically significant evidence of improved, navigational safety (i.e., fewer collisions), LOA switching efficiency, and conflict for control reduction.

A Taxonomy of Semantic Information in Robot-Assisted Disaster Response

Sep 30, 2022

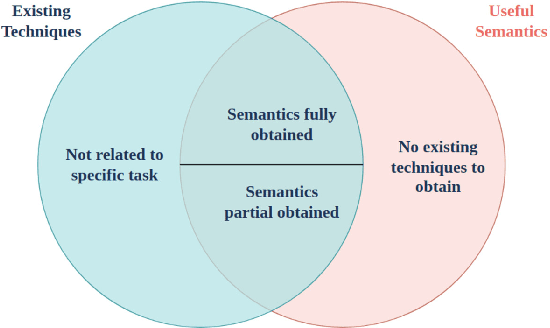

Abstract:This paper proposes a taxonomy of semantic information in robot-assisted disaster response. Robots are increasingly being used in hazardous environment industries and emergency response teams to perform various tasks. Operational decision-making in such applications requires a complex semantic understanding of environments that are remote from the human operator. Low-level sensory data from the robot is transformed into perception and informative cognition. Currently, such cognition is predominantly performed by a human expert, who monitors remote sensor data such as robot video feeds. This engenders a need for AI-generated semantic understanding capabilities on the robot itself. Current work on semantics and AI lies towards the relatively academic end of the research spectrum, hence relatively removed from the practical realities of first responder teams. We aim for this paper to be a step towards bridging this divide. We first review common robot tasks in disaster response and the types of information such robots must collect. We then organize the types of semantic features and understanding that may be useful in disaster operations into a taxonomy of semantic information. We also briefly review the current state-of-the-art semantic understanding techniques. We highlight potential synergies, but we also identify gaps that need to be bridged to apply these ideas. We aim to stimulate the research that is needed to adapt, robustify, and implement state-of-the-art AI semantics methods in the challenging conditions of disasters and first responder scenarios.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge