Adam Hare

On Extending NLP Techniques from the Categorical to the Latent Space: KL Divergence, Zipf's Law, and Similarity Search

Dec 02, 2020

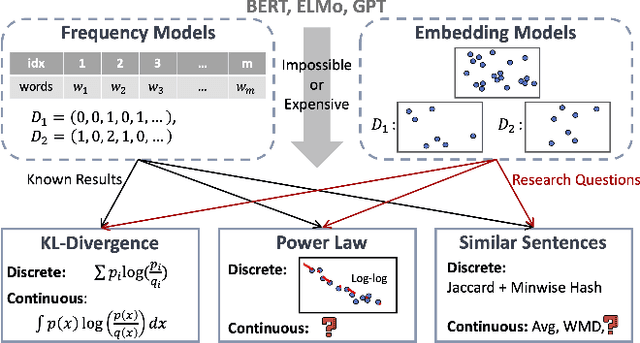

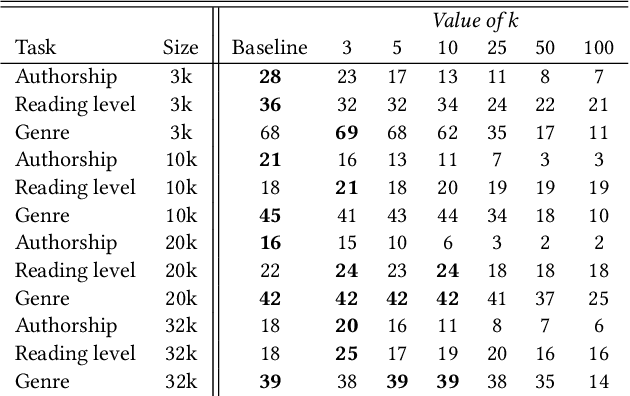

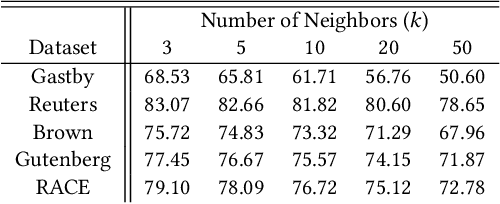

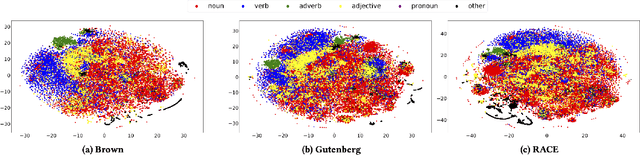

Abstract:Despite the recent successes of deep learning in natural language processing (NLP), there remains widespread usage of and demand for techniques that do not rely on machine learning. The advantage of these techniques is their interpretability and low cost when compared to frequently opaque and expensive machine learning models. Although they may not be be as performant in all cases, they are often sufficient for common and relatively simple problems. In this paper, we aim to modernize these older methods while retaining their advantages by extending approaches from categorical or bag-of-words representations to word embeddings representations in the latent space. First, we show that entropy and Kullback-Leibler divergence can be efficiently estimated using word embeddings and use this estimation to compare text across several categories. Next, we recast the heavy-tailed distribution known as Zipf's law that is frequently observed in the categorical space to the latent space. Finally, we look to improve the Jaccard similarity measure for sentence suggestion by introducing a new method of identifying similar sentences based on the set cover problem. We compare the performance of this algorithm against several baselines including Word Mover's Distance and the Levenshtein distance.

BATS: A Spectral Biclustering Approach to Single Document Topic Modeling and Segmentation

Aug 05, 2020

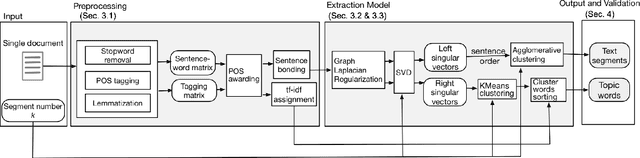

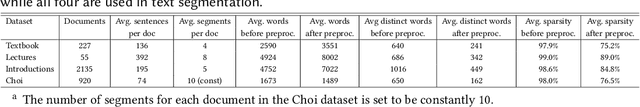

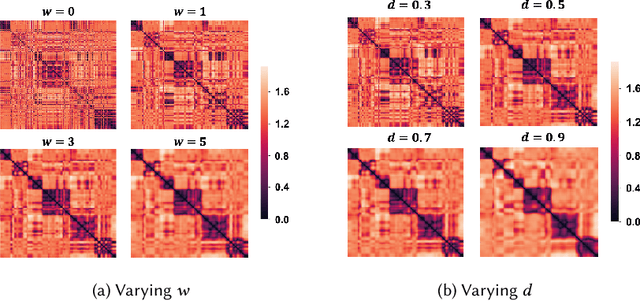

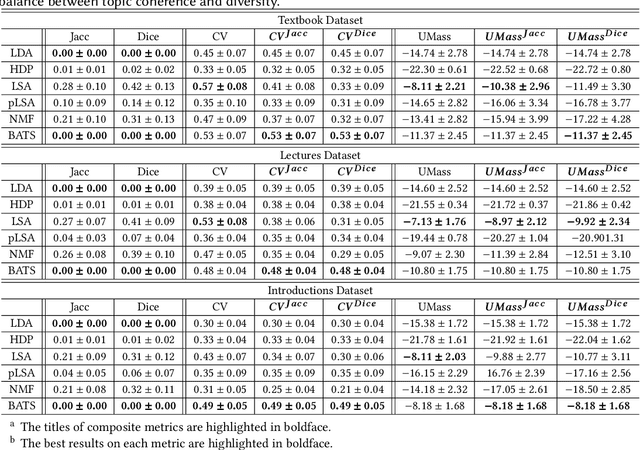

Abstract:Existing topic modeling and text segmentation methodologies generally require large datasets for training, limiting their capabilities when only small collections of text are available. In this work, we reexamine the inter-related problems of "topic identification" and "text segmentation" for sparse document learning, when there is a single new text of interest. In developing a methodology to handle single documents, we face two major challenges. First is sparse information: with access to only one document, we cannot train traditional topic models or deep learning algorithms. Second is significant noise: a considerable portion of words in any single document will produce only noise and not help discern topics or segments. To tackle these issues, we design an unsupervised, computationally efficient methodology called BATS: Biclustering Approach to Topic modeling and Segmentation. BATS leverages three key ideas to simultaneously identify topics and segment text: (i) a new mechanism that uses word order information to reduce sample complexity, (ii) a statistically sound graph-based biclustering technique that identifies latent structures of words and sentences, and (iii) a collection of effective heuristics that remove noise words and award important words to further improve performance. Experiments on four datasets show that our approach outperforms several state-of-the-art baselines when considering topic coherence, topic diversity, segmentation, and runtime comparison metrics.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge