Stereo Dense Scene Reconstruction and Accurate Laparoscope Localization for Learning-Based Navigation in Robot-Assisted Surgery

Oct 08, 2021

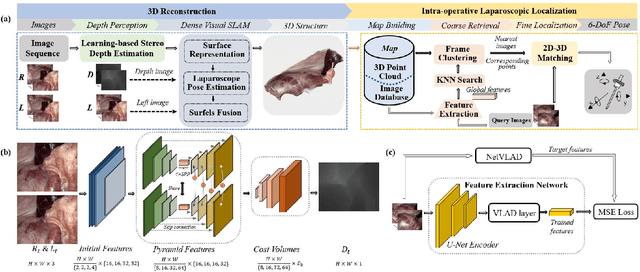

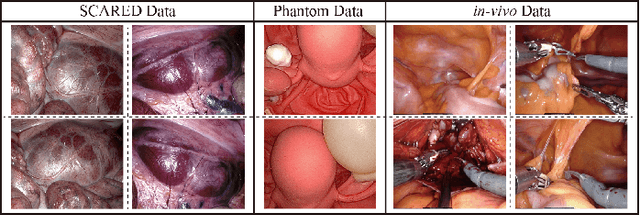

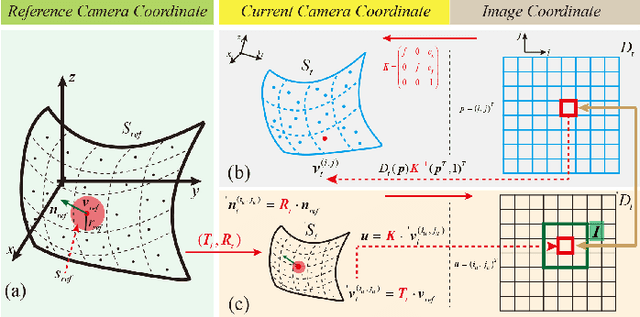

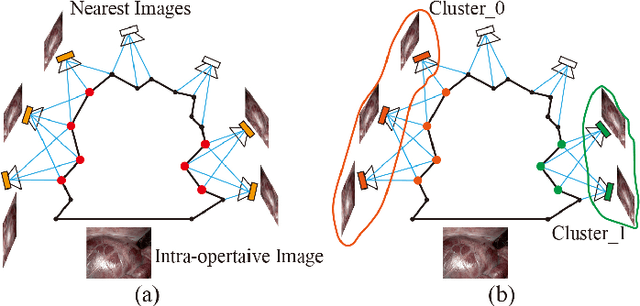

Computing anatomical information and the laparoscope position is a fundamental building block of robot-assisted surgical navigation in Minimally Invasive Surgery (MIS). Recovering a dense 3D structure of the surgical scene from visual cues remains a challenge, and online laparoscope tracking mostly relies on external sensors, which increases system complexity. In this paper, we propose a learning-driven framework that achieves image-guided laparoscope localization together with 3D reconstruction of complex anatomical structures. To reconstruct the 3D structure of the whole surgical environment, we first fine-tune a learning-based stereoscopic depth perception method, which is robust to texture-less and varying soft tissues, for depth estimation. Then, we develop a dense visual reconstruction algorithm that represents the scene with surfels, estimates the laparoscope pose, and fuses the depth data into a unified reference coordinate frame for tissue reconstruction. To estimate the poses of new laparoscope views, we implement a coarse-to-fine localization method that incorporates our reconstructed 3D model. We evaluate the reconstruction method and the localization module on three datasets: the stereo correspondence and reconstruction of endoscopic data (SCARED) dataset, ex-vivo phantom and tissue data collected with a Universal Robot (UR) and a Karl Storz laparoscope, and the in-vivo DaVinci robotic surgery dataset. Extensive experiments demonstrate the superior performance of our method in 3D anatomy reconstruction and laparoscope localization, indicating its potential for use in surgical navigation systems.
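To make the step of fusing depth data into a unified reference coordinate frame more concrete, the following is a minimal sketch of the standard pinhole-stereo geometry that such a pipeline typically builds on. It is not the authors' implementation and does not cover surfel fusion or pose estimation. The function names, the intrinsics (`fx`, `fy`, `cx`, `cy`), the baseline, and the example pose are all illustrative assumptions that do not come from the paper.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def disparity_to_depth(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth of a pixel in a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def back_project(u: float, v: float, depth: float,
                 fx: float, fy: float, cx: float, cy: float) -> Point:
    """Lift pixel (u, v) with known depth to a 3D point in the camera frame."""
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return (x, y, depth)


def to_reference(point: Point,
                 rotation: Sequence[Sequence[float]],
                 translation: Sequence[float]) -> Point:
    """Apply a camera-to-reference rigid transform: p_ref = R * p_cam + t."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


if __name__ == "__main__":
    # Hypothetical values: 1000 px focal length, 4 mm baseline, 40 px disparity.
    depth = disparity_to_depth(40.0, focal_px=1000.0, baseline_m=0.004)
    p_cam = back_project(740.0, 512.0, depth, fx=1000.0, fy=1000.0, cx=640.0, cy=512.0)
    # Hypothetical pose: no rotation, camera shifted 5 cm along the reference z-axis.
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    p_ref = to_reference(p_cam, identity, [0.0, 0.0, 0.05])
    print(depth, p_cam, p_ref)
```

In a full system, each back-projected point would be merged into a persistent scene model (surfels, in the paper's case) using the estimated laparoscope pose for that frame; the sketch stops at the coordinate transform.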
