Rethinking Data Augmentation in Knowledge Distillation for Object Detection

Sep 20, 2022

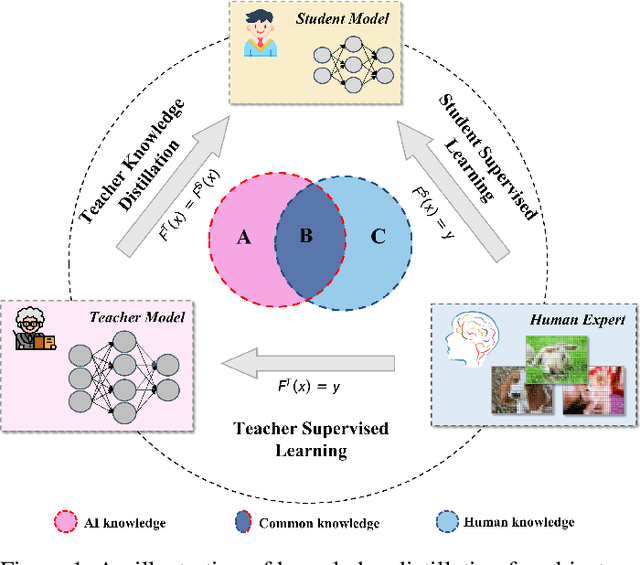

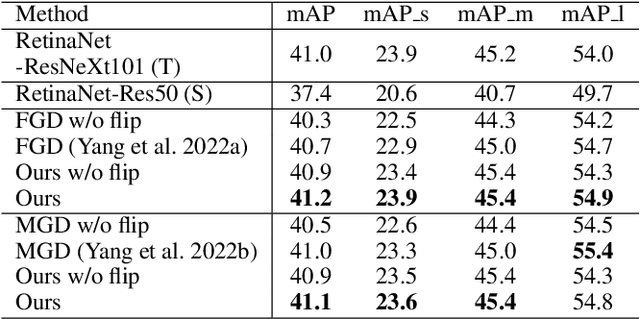

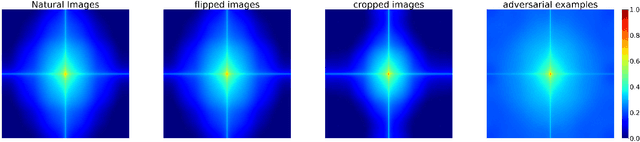

Knowledge distillation (KD) has proven effective for object detection, where a compact object detector is trained under the supervision of both AI knowledge (the teacher detector) and human knowledge (the human expert). However, existing studies treat AI knowledge and human knowledge in the same way and adopt a uniform data augmentation strategy during learning, which leads to biased learning of multi-scale objects and insufficient learning from the teacher detector, resulting in unsatisfactory distillation performance. To tackle these problems, we propose sample-specific data augmentation and adversarial feature augmentation. First, to mitigate the impact of multi-scale objects, we propose an adaptive data augmentation based on our observations from the Fourier perspective. Second, we propose a feature augmentation method based on adversarial examples that better mimics AI knowledge and compensates for the insufficient mining of information from the teacher detector. Furthermore, our proposed method is unified and can be easily extended to other KD methods. Extensive experiments demonstrate the effectiveness of our framework, which improves the performance of state-of-the-art methods on one-stage and two-stage detectors by up to 0.5 mAP.
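The abstract does not say how the Fourier-based observations are computed, so the following is only an illustrative sketch of what inspecting an image "from the Fourier perspective" can look like, not the paper's method. It measures the share of spectral energy that lies outside a low-frequency disc. The function name `high_freq_energy_ratio` and the parameter `radius_frac` are hypothetical and were chosen for this example.

```python
import numpy as np


def high_freq_energy_ratio(image, radius_frac=0.25):
    """Return the fraction of spectral energy outside a centered low-frequency disc.

    Illustrative assumption only: `radius_frac` sets the disc radius as a
    fraction of half the shorter image side. It is not taken from the paper.
    """
    image = np.asarray(image, dtype=np.float64)
    if image.ndim != 2:
        raise ValueError("expected a 2-D grayscale array")
    h, w = image.shape

    # 2-D FFT with the zero-frequency (DC) term shifted to index (h // 2, w // 2).
    spectrum = np.fft.fftshift(np.fft.fft2(image))
    energy = np.abs(spectrum) ** 2

    # Distance of every frequency bin from the DC bin.
    yy, xx = np.ogrid[:h, :w]
    dist = np.hypot(yy - h // 2, xx - w // 2)
    low_mask = dist <= radius_frac * min(h, w) / 2

    total = energy.sum()
    if total == 0:
        return 0.0
    return float(energy[~low_mask].sum() / total)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    ramp = np.tile(np.linspace(0.0, 1.0, 64), (64, 1))  # smooth horizontal gradient
    noise = rng.standard_normal((64, 64))                # broadband texture
    print("smooth ramp:", high_freq_energy_ratio(ramp))
    print("white noise:", high_freq_energy_ratio(noise))
```

A statistic of this kind could, in principle, be evaluated per sample to choose an augmentation strength, but how the paper actually maps its Fourier observations to a sample-specific augmentation is not stated in the abstract.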
