Prototype-to-Style: Dialogue Generation with Style-Aware Editing on Retrieval Memory


Apr 05, 2020

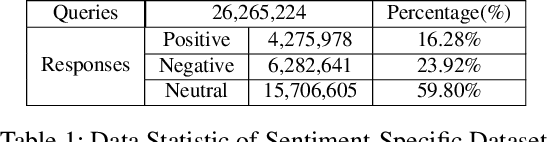

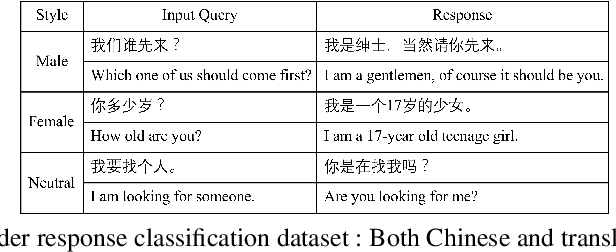

The ability of a dialogue system to express a prespecified language style during conversations has a direct, positive impact on its usability and on user satisfaction. We introduce a new prototype-to-style (PS) framework to tackle the challenge of stylistic dialogue generation. The framework uses an Information Retrieval (IR) system to retrieve a response and then extracts a response prototype from it. A stylistic response generator takes the prototype and the desired language style as input to produce a high-quality, stylistic response. To train the proposed model effectively, we propose a new style-aware learning objective as well as a de-noising learning strategy. Results on three benchmark datasets in two languages demonstrate that the proposed approach significantly outperforms existing baselines in both in-domain and cross-domain evaluations.
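The data flow described above (retrieve a response, turn it into a prototype, pair it with a target style) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the abstract does not say how prototypes are extracted, so the sketch assumes style-bearing words are masked out using a hypothetical lexicon `STYLE_WORDS`; a simple Jaccard token overlap stands in for a real IR system; `MEMORY`, `add_noise`, and `build_generator_input` are hypothetical names; and `add_noise` is just one simple corruption scheme, not necessarily the paper's de-noising strategy. The neural generator itself is not shown.

```python
import random

# Hypothetical toy retrieval memory of (query, response) pairs.
MEMORY = [
    ("how was your weekend", "it was great , i went hiking with friends !"),
    ("do you like coffee", "i love coffee , it keeps me awake"),
    ("what are you reading", "a mystery novel , it is pretty good"),
]

# Hypothetical lexicon of style-bearing tokens (an assumption for illustration).
STYLE_WORDS = {"great", "love", "pretty", "good", "!"}

MASK = "[MASK]"


def overlap_score(a, b):
    """Jaccard overlap between token sets; a stand-in for a real IR scorer."""
    sa, sb = set(a.split()), set(b.split())
    union = sa | sb
    return len(sa & sb) / len(union) if union else 0.0


def retrieve(query, memory=MEMORY):
    """Return the stored response whose query best matches the input query."""
    _, best_response = max(memory, key=lambda pair: overlap_score(query, pair[0]))
    return best_response


def extract_prototype(response, style_words=STYLE_WORDS):
    """Mask style-bearing tokens, keeping the content skeleton of the response."""
    return " ".join(MASK if tok in style_words else tok for tok in response.split())


def add_noise(prototype, p=0.2, vocab=("the", "it", "a"), rng=None):
    """Randomly replace unmasked tokens with filler words (simple corruption)."""
    rng = rng or random.Random(0)
    out = []
    for tok in prototype.split():
        if tok != MASK and rng.random() < p:
            out.append(rng.choice(vocab))
        else:
            out.append(tok)
    return " ".join(out)


def build_generator_input(prototype, style):
    """Prefix the prototype with a style tag for a (hypothetical) generator."""
    return f"<{style}> {prototype}"


if __name__ == "__main__":
    response = retrieve("how was your weekend")
    prototype = extract_prototype(response)
    print(build_generator_input(prototype, "positive"))
```

Running the script prints `<positive> it was [MASK] , i went hiking with friends [MASK]`. The design choice being illustrated is that the generator receives a style-neutral content skeleton plus an explicit style signal, so the same retrieved content can in principle be rendered in different styles; corrupting the prototype during training (as `add_noise` loosely mimics) keeps a generator from simply copying the retrieved text.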
