Intriguing Property of GAN for Remote Sensing Image Generation

Paper and Code

Mar 09, 2023

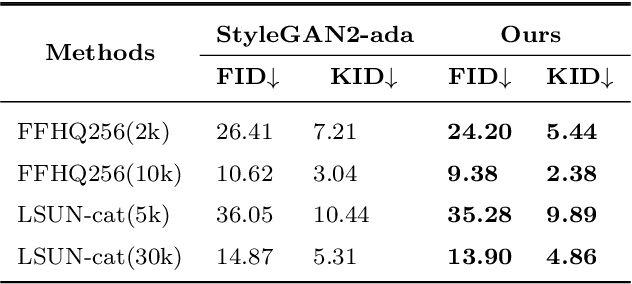

Generative adversarial networks (GANs) have achieved remarkable progress in the natural image field. However, when applying GANs in the remote sensing (RS) image generation task, we discover an extraordinary phenomenon: the GAN model is more sensitive to the size of training data for RS image generation than for natural image generation. In other words, the generation quality of RS images will change significantly with the number of training categories or samples per category. In this paper, we first analyze this phenomenon from two kinds of toy experiments and conclude that the amount of feature information contained in the GAN model decreases with reduced training data. Based on this discovery, we propose two innovative adjustment schemes, namely Uniformity Regularization (UR) and Entropy Regularization (ER), to increase the information learned by the GAN model at the distributional and sample levels, respectively. We theoretically and empirically demonstrate the effectiveness and versatility of our methods. Extensive experiments on the NWPU-RESISC45 and PatternNet datasets show that our methods outperform the well-established models on RS image generation tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge