Hyper-Meta Reinforcement Learning with Sparse Reward


Feb 11, 2020

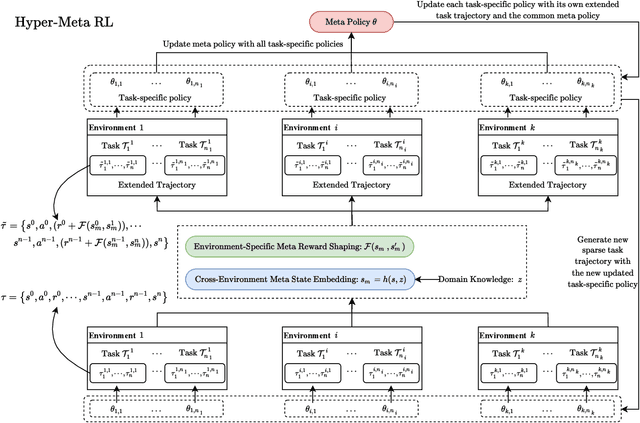

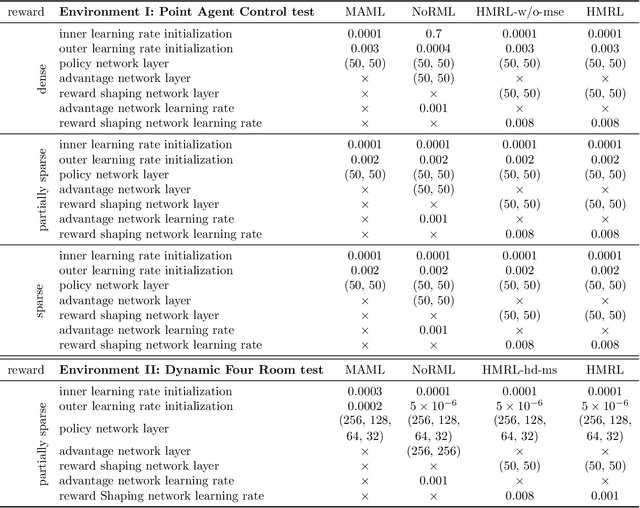

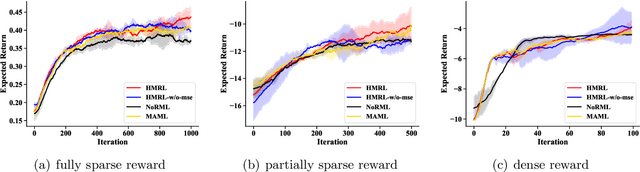

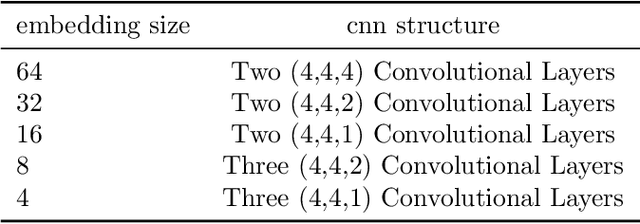

Despite their success, existing meta reinforcement learning methods still struggle to learn an effective meta policy for RL problems with sparse reward. To address this, we develop a novel meta reinforcement learning framework, Hyper-Meta RL (HMRL), for sparse-reward RL problems. It consists of three modules: meta state embedding, meta reward shaping, and meta policy learning. The cross-environment meta state embedding module constructs a common meta state space to adapt to different environments. The environment-specific meta reward shaping module, built on the meta state, effectively extends the original sparse reward trajectory through the complementarity of cross-environment knowledge. As a consequence, the meta policy achieves better generalization and efficiency with the shaped meta reward. Experiments with sparse reward show the superiority of HMRL in both transferability and policy learning efficiency.
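To make the data flow between the first two modules concrete, here is a minimal Python sketch. It is not the authors' implementation: the abstract does not specify the form of the embedding or the shaping function, so the sketch assumes a random linear map per environment for the embedding and substitutes classic potential-based reward shaping for the learned meta reward shaping. The names `make_embedding`, `potential`, and `shaped_reward` are hypothetical, and the meta policy learning module is omitted.

```python
import random


def make_embedding(state_dim, meta_dim, seed):
    """Hypothetical per-environment linear map into a shared meta state space.

    Assumption: HMRL learns this embedding; here the weights are random.
    """
    rng = random.Random(seed)
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(state_dim)]
               for _ in range(meta_dim)]

    def embed(state):
        # Matrix-vector product: one output coordinate per weight row.
        return [sum(w * s for w, s in zip(row, state)) for row in weights]

    return embed


def potential(meta_state):
    """Hypothetical potential over meta states (negative squared norm)."""
    return -sum(z * z for z in meta_state)


def shaped_reward(reward, meta_state, next_meta_state, gamma=0.99):
    """Potential-based shaping (a stand-in technique, not the paper's):
    r' = r + gamma * phi(z') - phi(z).
    """
    return reward + gamma * potential(next_meta_state) - potential(meta_state)


# Two environments with different raw state sizes share one meta state size.
embed_a = make_embedding(state_dim=4, meta_dim=3, seed=0)
embed_b = make_embedding(state_dim=6, meta_dim=3, seed=1)

z_a = embed_a([0.1, 0.0, -0.2, 0.3])
z_a_next = embed_a([0.1, 0.1, -0.1, 0.2])
z_b = embed_b([0.0] * 6)

# A sparse reward of 0.0 becomes a dense signal once shaping is applied.
dense_r = shaped_reward(0.0, z_a, z_a_next)
```

Because both embeddings map into the same three-dimensional space, a single shaping function (and, by extension, a single policy) can consume transitions from either environment, which is the role the abstract assigns to the common meta state space.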
