Autoencoders with Intrinsic Dimension Constraints for Learning Low Dimensional Image Representations


Apr 16, 2023

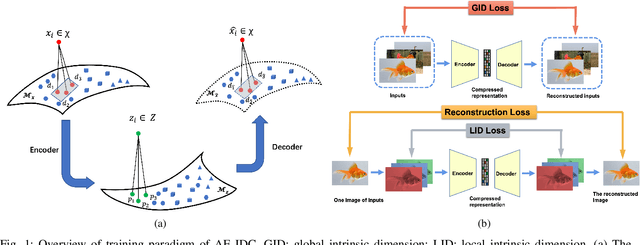

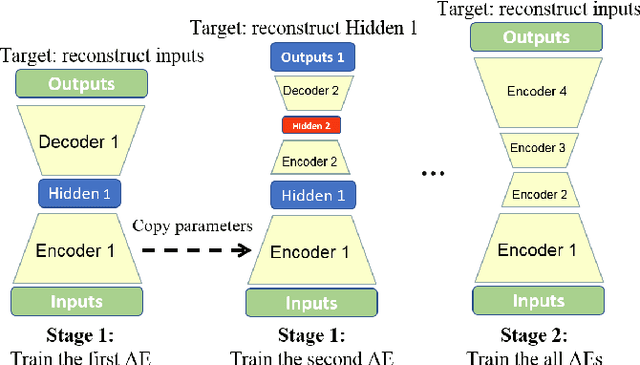

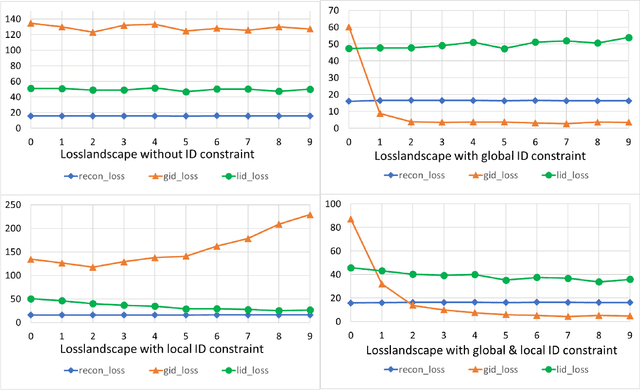

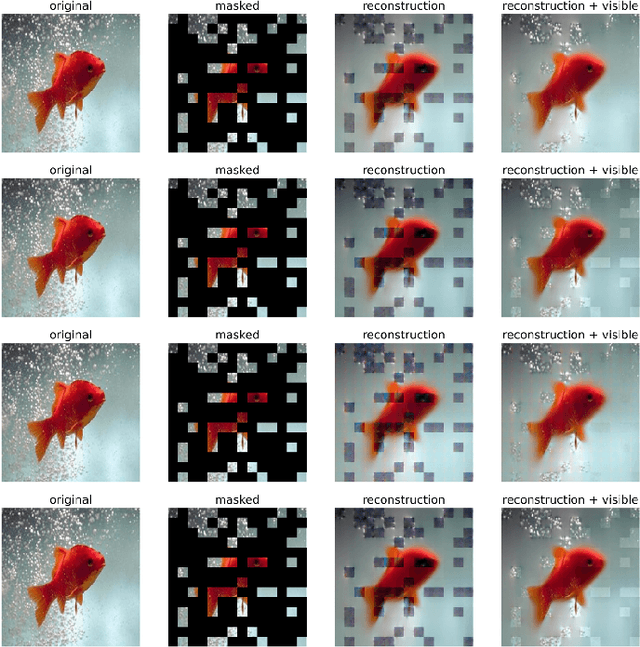

Autoencoders have achieved great success in various computer vision applications. An autoencoder learns low-dimensional image representations through a self-supervised paradigm, namely reconstruction. Existing studies mainly focus on minimizing the pixel-level reconstruction error while ignoring the preservation of the Intrinsic Dimension (ID), a fundamental geometric property of data representations in Deep Neural Networks (DNNs). Motivated by the important role of ID, in this paper we propose a novel autoencoder-based deep representation learning approach that incorporates global and local ID constraints as regularization terms in the reconstruction of data representations. This approach not only preserves the global manifold structure of the whole dataset, but also maintains the local manifold structure of the feature maps of each point, which makes the learned low-dimensional features more discriminative and improves the performance of downstream algorithms. To the best of our knowledge, few existing works exploit both global and local ID invariance properties to regularize autoencoders. Numerical experiments on benchmark datasets (Extended Yale B, Caltech101 and ImageNet) show that the resulting regularized models learn more discriminative representations for downstream tasks, including image classification and clustering.
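To make the idea of an ID constraint concrete, the following is a minimal sketch and not the paper's method. The abstract does not state which ID estimator or loss form is used, so two assumptions are made here: the ID is estimated with the TwoNN maximum-likelihood estimator (based on the ratio of each point's second to first nearest-neighbor distance), and the global constraint is written as a squared difference between the ID of the inputs and the ID of the latent codes. The names `twonn_id`, `id_regularized_loss`, and `lam` are hypothetical. The local (per-sample feature map) constraint is omitted.

```python
import numpy as np


def twonn_id(points):
    """Estimate intrinsic dimension with the TwoNN maximum-likelihood estimator.

    Assumption: TwoNN is used for illustration only; the abstract does not
    name the estimator used in the paper.
    """
    x = np.asarray(points, dtype=np.float64)
    # Pairwise Euclidean distances, shape (n, n).
    diff = x[:, None, :] - x[None, :, :]
    dist = np.sqrt(np.sum(diff ** 2, axis=-1))
    # Exclude each point's zero distance to itself.
    np.fill_diagonal(dist, np.inf)
    # After partitioning at index 1, columns 0 and 1 hold the smallest
    # and second-smallest distance of each row.
    nearest = np.partition(dist, 1, axis=1)[:, :2]
    r1, r2 = nearest[:, 0], nearest[:, 1]
    # Drop duplicated points (r1 == 0), for which the ratio is undefined.
    keep = r1 > 0
    mu = r2[keep] / r1[keep]
    # The ratios follow a Pareto law whose exponent is the ID, so the
    # maximum-likelihood estimate is n / sum(log(mu)).
    return keep.sum() / np.sum(np.log(mu))


def id_regularized_loss(x, x_hat, z, lam=0.1):
    """Reconstruction error plus a hypothetical global ID penalty.

    x     : inputs, shape (n, d_in)
    x_hat : reconstructions, shape (n, d_in)
    z     : latent codes, shape (n, d_latent)
    lam   : penalty weight (hypothetical hyperparameter)
    """
    reconstruction = np.mean((x - x_hat) ** 2)
    id_gap = twonn_id(z) - twonn_id(x)
    return reconstruction + lam * id_gap ** 2


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # A flat 2-D sheet embedded in 5-D space by zero padding.
    latent = rng.uniform(size=(500, 2))
    data = np.zeros((500, 5))
    data[:, :2] = latent
    print("estimated ID:", twonn_id(data))
    print("loss:", id_regularized_loss(data, data, latent))
```

This NumPy version only evaluates the penalty. Training an autoencoder with such a term would require a differentiable implementation in an autodiff framework, which is beyond this sketch.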
