Artificial Agents Learn Flexible Visual Representations by Playing a Hiding Game

Paper and Code

Dec 18, 2019

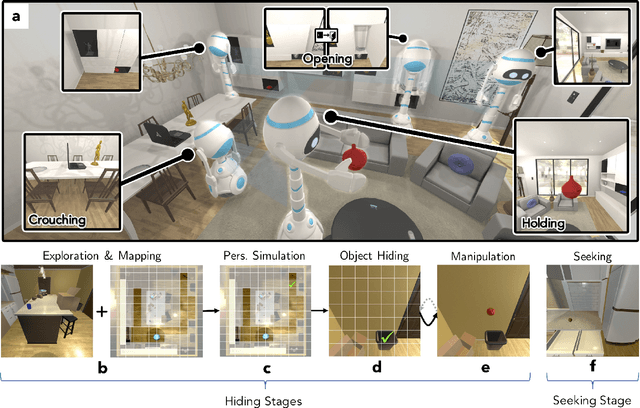

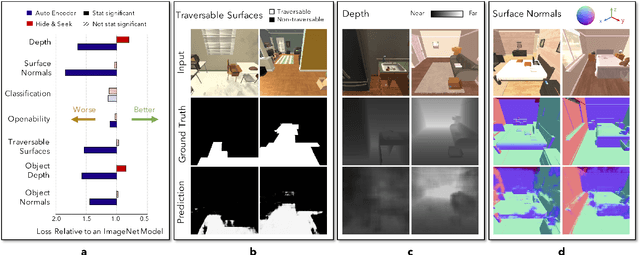

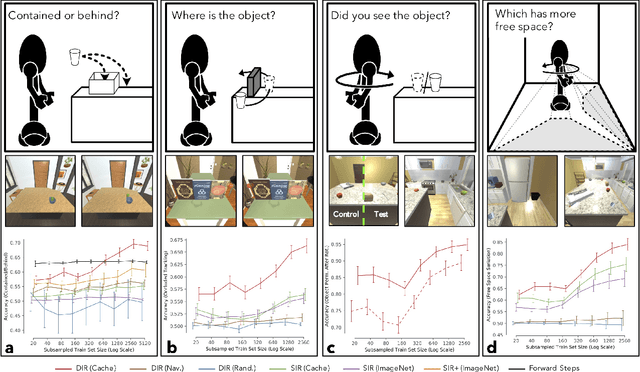

The ubiquity of embodied gameplay, observed in a wide variety of animal species including turtles and ravens, has led researchers to question what advantages play provides to the animals engaged in it. Mounting evidence suggests that play is critical in developing the neural flexibility needed for creative problem solving and socialization, and that it can improve the plasticity of the medial prefrontal cortex. Comparatively little is known regarding the impact of gameplay upon embodied artificial agents. While recent work has produced artificial agents proficient in abstract games, the environments these agents act within are far removed from the real world, and thus these agents provide little insight into the advantages of embodied play. Hiding games have arisen in multiple cultures and species, and provide a rich ground for studying the impact of embodied gameplay on representation learning in the context of perspective taking, secret keeping, and false belief understanding. Here we are the first to show that embodied adversarial reinforcement learning agents playing cache, a variant of hide-and-seek, in a high-fidelity, interactive environment learn representations of their observations that encode information such as occlusion, object permanence, free space, and containment. These representations are on par with those learnt by the most popular modern paradigm for visual representation learning, which requires large datasets independently labeled for each new task. Our representations are enhanced by intent and memory, through interaction and play, moving closer to biologically motivated learning strategies. These results serve as a model for studying how facets of vision and perspective taking develop through play, provide an experimental framework for assessing what is learned by artificial agents, and suggest that representation learning should move away from static datasets and towards experiential, interactive learning.
