Zhi-Hui Zhan

A New Scope and Domain Measure Comparison Method for Global Convergence Analysis in Evolutionary Computation

May 07, 2025

Abstract: Convergence analysis is a fundamental research topic in evolutionary computation (EC). The commonly used analysis method models the EC algorithm as a homogeneous Markov chain, which is not always suitable for different EC variants and sometimes causes misuse and confusion because of the complexity of their processes. In this article, we categorize the existing research on convergence analysis of EC algorithms into stable convergence and global convergence, and then prove that the conditions for these two convergence properties are, to some extent, mutually exclusive. Inspired by this proof, we propose a new scope and domain measure comparison (SDMC) method for analyzing the global convergence of EC algorithms and provide a rigorous proof of its necessity and sufficiency as an alternative condition. Unlike traditional methods, the SDMC method is straightforward, bypasses Markov chain modeling, and minimizes errors from misapplication because it focuses only on the measure of the algorithm's search scope. We apply SDMC to two algorithm types that are unsuitable for traditional methods, confirming its effectiveness in global convergence analysis. Furthermore, we apply the SDMC method to explore the impact of the gene targeting mechanism on global convergence in large-scale global optimization, deriving insights into how to design EC algorithms that guarantee global convergence and exploring how theoretical analysis can guide EC algorithm design.

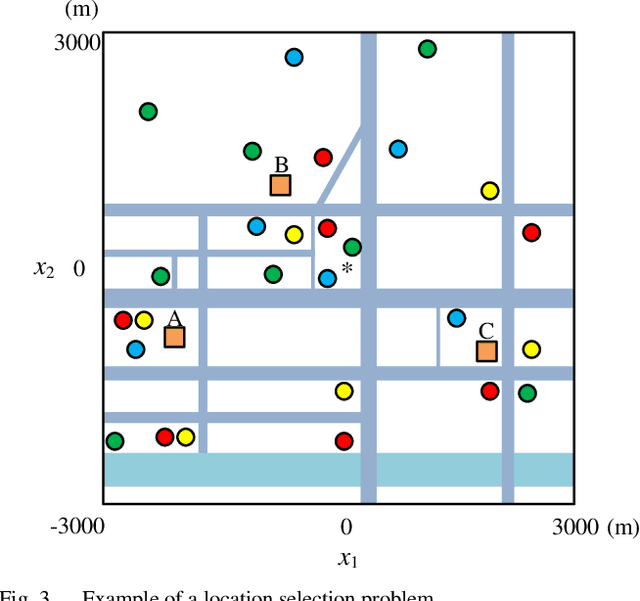

A Performance Investigation of Multimodal Multiobjective Optimization Algorithms in Solving Two Types of Real-World Problems

Dec 04, 2024

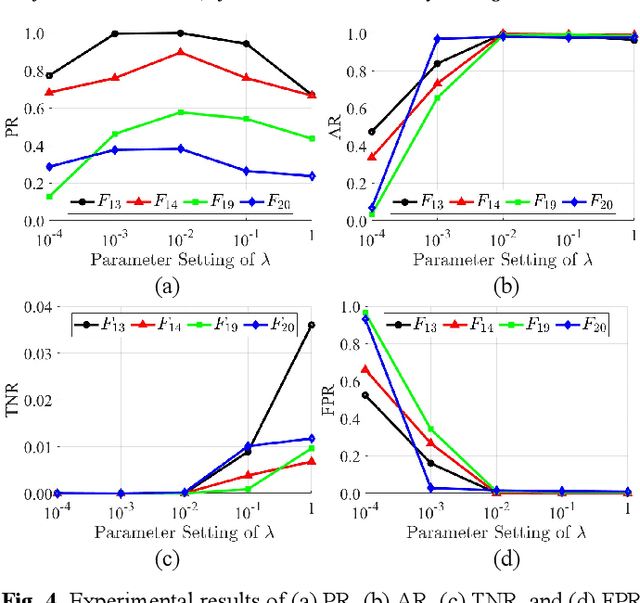

Abstract: In recent years, multimodal multiobjective optimization algorithms (MMOAs) based on evolutionary computation have been widely studied. However, existing MMOAs are mainly tested on benchmark function sets such as the 2019 IEEE Congress on Evolutionary Computation test suite (CEC 2019), and their performance on real-world problems is neglected. In this paper, two types of real-world multimodal multiobjective optimization problems, in feature selection and location selection respectively, are formulated. Moreover, four real-world datasets of Guangzhou, China are constructed for location selection. An investigation is conducted to evaluate the performance of seven existing MMOAs in solving these two types of real-world problems. An analysis of the experimental results explores the characteristics of the tested MMOAs, providing insights for selecting suitable MMOAs in real-world applications.

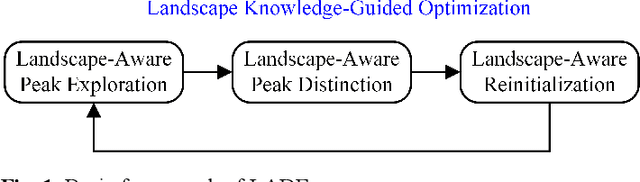

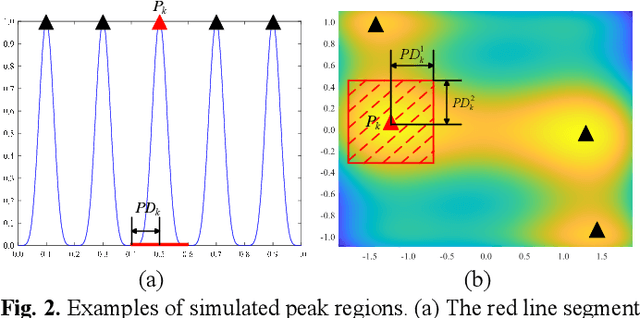

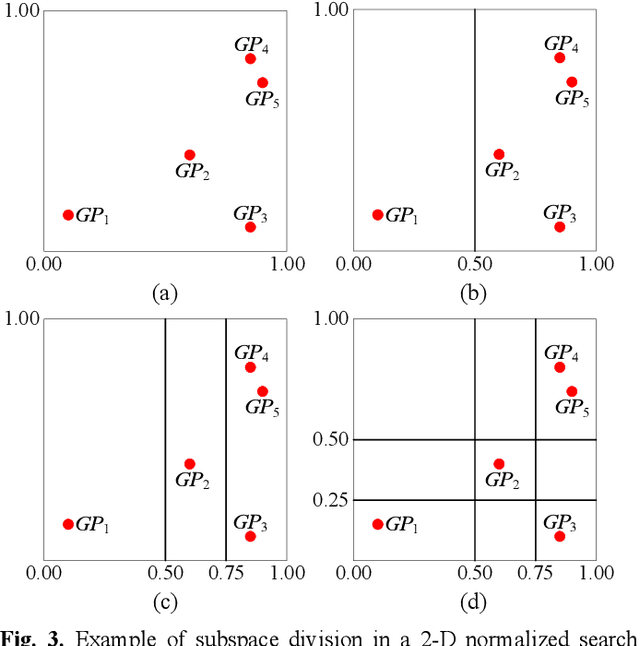

A Landscape-Aware Differential Evolution for Multimodal Optimization Problems

Aug 05, 2024

Abstract: Simultaneously locating multiple global peaks and achieving a certain accuracy on the found peaks are two key challenges in solving multimodal optimization problems (MMOPs). In this paper, a landscape-aware differential evolution (LADE) algorithm is proposed for MMOPs, which utilizes landscape knowledge to maintain sufficient diversity and provide efficient search guidance. In detail, the landscape knowledge is utilized in the following three aspects. First, a landscape-aware peak exploration helps each individual evolve adaptively to locate a peak and simulates the regions of the found peaks according to the search history, so that an individual avoids locating an already-found peak. Second, a landscape-aware peak distinction distinguishes whether an individual has located a new global peak, a new local peak, or a found peak. Accuracy refinement can thus be conducted only on the global peaks to enhance the search efficiency. Third, a landscape-aware reinitialization specifies the initial position of an individual adaptively according to the distribution of the found peaks, which helps explore more peaks. The experiments are conducted on 20 widely used benchmark MMOPs. Experimental results show that LADE obtains generally better or competitive performance compared with seven recently proposed well-performing algorithms and four winning algorithms in the IEEE CEC competitions for multimodal optimization.
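For readers unfamiliar with the base optimizer, the following is a minimal sketch of classic differential evolution (the DE/rand/1/bin variant), which is the family of algorithms LADE builds on. It is not the LADE algorithm: the landscape-aware peak exploration, peak distinction, and reinitialization described in the abstract are not implemented here. The function name `differential_evolution`, the parameter defaults, and the `sphere` test function are illustrative assumptions, and the sketch minimizes a single-optimum function rather than locating multiple peaks.

```python
import random


def differential_evolution(f, bounds, pop_size=20, F=0.5, CR=0.9,
                           generations=200, seed=0):
    """Classic DE/rand/1/bin minimizing f over box bounds (illustrative only)."""
    rng = random.Random(seed)
    dim = len(bounds)
    # Random initial population inside the box.
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    fit = [f(x) for x in pop]
    for _ in range(generations):
        new_pop, new_fit = list(pop), list(fit)
        for i in range(pop_size):
            # Pick three distinct individuals, all different from i.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)  # guarantees at least one mutated gene
            trial = []
            for d in range(dim):
                if rng.random() < CR or d == j_rand:
                    # Mutation: base vector plus scaled difference vector.
                    v = pop[a][d] + F * (pop[b][d] - pop[c][d])
                    lo, hi = bounds[d]
                    v = min(max(v, lo), hi)  # clip back into the box
                else:
                    v = pop[i][d]  # binomial crossover keeps the parent gene
                trial.append(v)
            f_trial = f(trial)
            # Greedy one-to-one selection: the trial replaces its parent
            # only if it is at least as good.
            if f_trial <= fit[i]:
                new_pop[i], new_fit[i] = trial, f_trial
        pop, fit = new_pop, new_fit
    best = min(range(pop_size), key=fit.__getitem__)
    return pop[best], fit[best]


def sphere(x):
    """Hypothetical test function with a single optimum at the origin."""
    return sum(v * v for v in x)


best_x, best_f = differential_evolution(sphere, [(-5.0, 5.0)] * 3)
```

Because plain DE of this kind tends to collapse the whole population onto one optimum, multimodal variants add mechanisms to preserve diversity, which is the role the abstract assigns to landscape knowledge in LADE.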

Learning to Transfer for Evolutionary Multitasking

Jun 20, 2024

Abstract: Evolutionary multitasking (EMT) is an emerging approach for solving multitask optimization problems (MTOPs) and has garnered considerable research interest. Implicit EMT is a significant research branch that utilizes evolution operators to enable knowledge transfer (KT) between tasks. However, current approaches in implicit EMT face challenges in adaptability, due to the use of a limited number of evolution operators and insufficient utilization of evolutionary states for performing KT. This results in suboptimal exploitation of implicit KT's potential to tackle a variety of MTOPs. To overcome these limitations, we propose a novel Learning to Transfer (L2T) framework to automatically discover efficient KT policies for the MTOPs at hand. Our framework conceptualizes the KT process as a learning agent's sequence of strategic decisions within the EMT process. We propose an action formulation for deciding when and how to transfer, a state representation with informative features of evolution states, a reward formulation concerning convergence and transfer efficiency gain, and the environment for the agent to interact with MTOPs. We employ an actor-critic network structure for the agent and train it via proximal policy optimization. This learned agent can be integrated with various evolutionary algorithms, enhancing their ability to address a range of new MTOPs. Comprehensive empirical studies on both synthetic and real-world MTOPs, encompassing diverse inter-task relationships, function classes, and task distributions, are conducted to validate the proposed L2T framework. The results show a marked improvement in the adaptability and performance of implicit EMT when solving a wide spectrum of unseen MTOPs.
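As background for the training method named in the abstract, the sketch below computes the standard clipped surrogate objective used by proximal policy optimization. It shows only the generic PPO objective, not the L2T framework: the state features, action formulation, reward, and network architecture of L2T are not reproduced, and the function name `ppo_clipped_objective` and the default `clip_eps=0.2` are illustrative assumptions.

```python
import math


def ppo_clipped_objective(logp_new, logp_old, advantages, clip_eps=0.2):
    """Mean clipped surrogate objective of PPO (to be maximized).

    logp_new / logp_old: log-probabilities of the taken actions under the
    current and the data-collecting policy; advantages: advantage estimates.
    """
    total = 0.0
    for lp_new, lp_old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(lp_new - lp_old)  # probability ratio of new to old policy
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Take the more pessimistic of the unclipped and clipped terms.
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)


# A ratio of 2 with a positive advantage is capped at 1 + clip_eps.
capped = ppo_clipped_objective([math.log(2.0)], [0.0], [1.0])
# The same ratio with a negative advantage is not capped.
uncapped = ppo_clipped_objective([math.log(2.0)], [0.0], [-1.0])
```

The clipping removes the incentive to move the policy far from the one that collected the data in a single update, which is one reason PPO is a common choice for training agents like the one described above.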
