Zheyang Shen

Operator-informed score matching for Markov diffusion models

Jun 13, 2024

Abstract: Diffusion models are typically trained using score matching, yet score matching is agnostic to the particular forward process that defines the model. This paper argues that Markov diffusion models enjoy an advantage over other types of diffusion model, as their associated operators can be exploited to improve the training process. In particular, (i) there exists an explicit formal solution to the forward process as a sequence of time-dependent kernel mean embeddings; and (ii) the derivation of score-matching and related estimators can be streamlined. Building upon (i), we propose Riemannian diffusion kernel smoothing, which reduces the need for neural score approximation, at least in the low-dimensional context; building upon (ii), we propose operator-informed score matching, a variance reduction technique that is straightforward to implement in both low- and high-dimensional diffusion modeling and is demonstrated to improve score matching in an empirical proof-of-concept.
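For readers unfamiliar with the baseline the abstract refers to, the following is a minimal sketch of standard denoising score matching, not the operator-informed estimator proposed in the paper. It assumes an Ornstein-Uhlenbeck forward process and one-dimensional Gaussian data, both chosen here purely for illustration; the function names `ou_forward` and `fit_linear_score` are hypothetical. Because the data are Gaussian, the exact marginal score is linear in `x_t`, so a least-squares fit of the noisy regression target can be compared against the closed form.

```python
import numpy as np

def ou_forward(x0, t, rng):
    """Sample x_t given x_0 for the OU process dX = -X dt + sqrt(2) dW (assumed forward process)."""
    mean_scale = np.exp(-t)
    sigma = np.sqrt(1.0 - np.exp(-2.0 * t))
    eps = rng.standard_normal(x0.shape)
    xt = mean_scale * x0 + sigma * eps
    # Conditional score grad_x log p(x_t | x_0), the denoising score matching target.
    target = -eps / sigma
    return xt, target

def fit_linear_score(xt, target):
    """Slope c minimising the empirical mean of (c * x_t - target)^2."""
    return float(np.sum(xt * target) / np.sum(xt * xt))

rng = np.random.default_rng(0)
s0, t = 2.0, 0.5                              # illustrative data scale and diffusion time
x0 = s0 * rng.standard_normal(200_000)        # data drawn from N(0, s0^2)
xt, target = ou_forward(x0, t, rng)

slope_dsm = fit_linear_score(xt, target)
# Exact marginal score of N(0, v) is -x / v, with v = e^{-2t} s0^2 + 1 - e^{-2t}.
slope_exact = -1.0 / (np.exp(-2.0 * t) * s0**2 + 1.0 - np.exp(-2.0 * t))
print(slope_dsm, slope_exact)
```

The regression target `-eps / sigma` is noisy for each sample, which is the kind of variance that a variance reduction technique for score matching would aim to lower.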

De-randomizing MCMC dynamics with the diffusion Stein operator

Oct 07, 2021

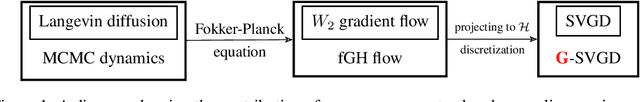

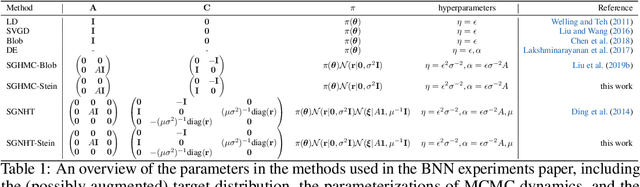

Abstract: Approximate Bayesian inference estimates descriptors of an intractable target distribution - in essence, an optimization problem within a family of distributions. For example, Langevin dynamics (LD) extracts asymptotically exact samples from a diffusion process because the time evolution of its marginal distributions constitutes a curve that minimizes the KL-divergence via steepest descent in the Wasserstein space. Parallel to LD, Stein variational gradient descent (SVGD) similarly minimizes the KL, albeit endowed with a novel Stein-Wasserstein distance, by deterministically transporting a set of particle samples, thereby de-randomizing the stochastic diffusion process. We propose de-randomized kernel-based particle samplers for all diffusion-based samplers known as MCMC dynamics. Following previous work in interpreting MCMC dynamics, we equip the Stein-Wasserstein space with a fiber-Riemannian Poisson structure, with the capacity of characterizing a fiber-gradient Hamiltonian flow that simulates MCMC dynamics. Such dynamics discretize into generalized SVGD (GSVGD), a Stein-type deterministic particle sampler, with particle updates coinciding with applying the diffusion Stein operator to a kernel function. We demonstrate empirically that GSVGD can de-randomize complex MCMC dynamics, which combine the advantages of auxiliary momentum variables and Riemannian structure, while maintaining the high sample quality from an interacting particle system.
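As a point of reference for the deterministic particle transport described above, here is a minimal sketch of the standard SVGD update with an RBF kernel. It is not the generalized sampler (GSVGD) from the paper; the fixed bandwidth, step size, standard normal target, and the function names `rbf_kernel_terms` and `svgd_step` are all assumptions made for illustration.

```python
import numpy as np

def rbf_kernel_terms(x, bandwidth):
    """Return the RBF kernel matrix and the summed kernel gradients (repulsion term)."""
    diff = x[:, None, :] - x[None, :, :]            # diff[i, j] = x_i - x_j
    sq_dist = np.sum(diff ** 2, axis=-1)
    k = np.exp(-sq_dist / (2.0 * bandwidth ** 2))   # k[i, j] = k(x_j, x_i)
    # sum_j grad_{x_j} k(x_j, x_i) = sum_j (x_i - x_j) k[i, j] / bandwidth^2
    repulsion = np.sum(diff * k[:, :, None], axis=1) / bandwidth ** 2
    return k, repulsion

def svgd_step(x, score, step, bandwidth):
    """One SVGD update: kernel-weighted scores (attraction) plus kernel gradients (repulsion)."""
    k, repulsion = rbf_kernel_terms(x, bandwidth)
    phi = (k @ score(x) + repulsion) / x.shape[0]
    return x + step * phi

rng = np.random.default_rng(0)
particles = rng.normal(3.0, 0.5, size=(50, 1))      # start far from the target
for _ in range(500):
    # Score of a standard normal target is -x (illustrative choice).
    particles = svgd_step(particles, lambda x: -x, step=0.1, bandwidth=1.0)
print(particles.mean(), particles.std())
```

No random numbers are drawn after initialization: the particles move under a deterministic vector field, with the kernel gradient term keeping them from collapsing onto the mode.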

Rethinking Sparse Gaussian Processes: Bayesian Approaches to Inducing-Variable Approximations

Mar 09, 2020

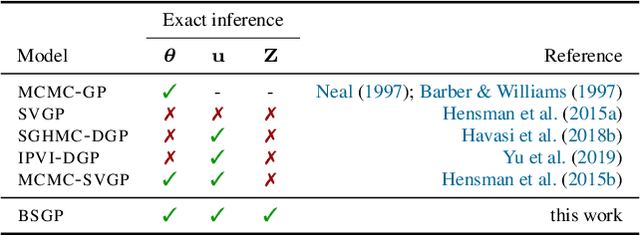

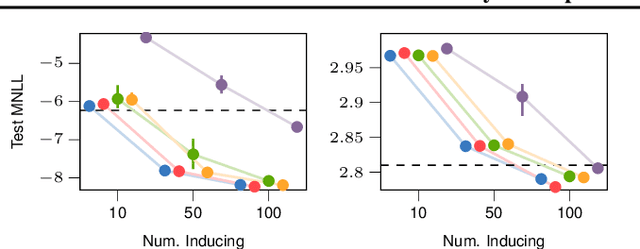

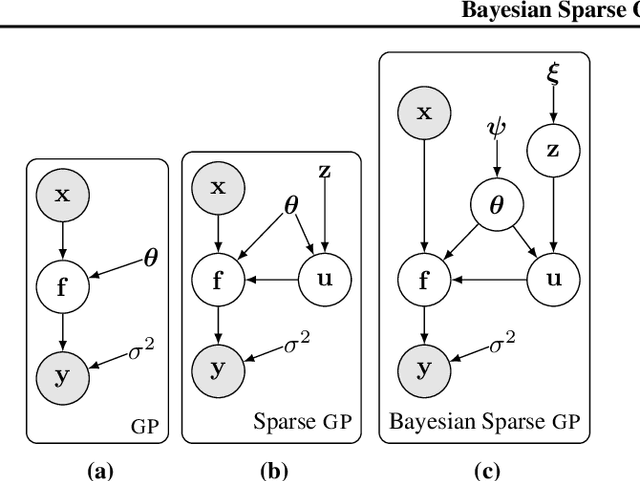

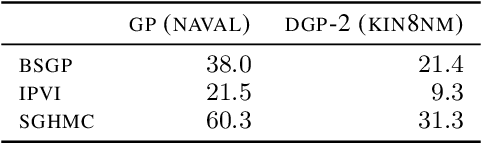

Abstract: Variational inference techniques based on inducing variables provide an elegant framework for scalable posterior estimation in Gaussian process (GP) models. Most previous works treat the locations of the inducing variables, i.e. the inducing inputs, as variational hyperparameters, and these are then optimized together with GP covariance hyperparameters. While some approaches point to the benefits of a Bayesian treatment of GP hyperparameters, this has been largely overlooked for the inducing inputs. In this work, we show that treating both inducing locations and GP hyperparameters in a Bayesian way, by inferring their full posterior, significantly improves performance further. Based on stochastic gradient Hamiltonian Monte Carlo, we develop a fully Bayesian approach to scalable GP and deep GP models, and demonstrate its competitive performance through an extensive experimental campaign across several regression and classification problems.

Learning spectrograms with convolutional spectral kernels

May 23, 2019

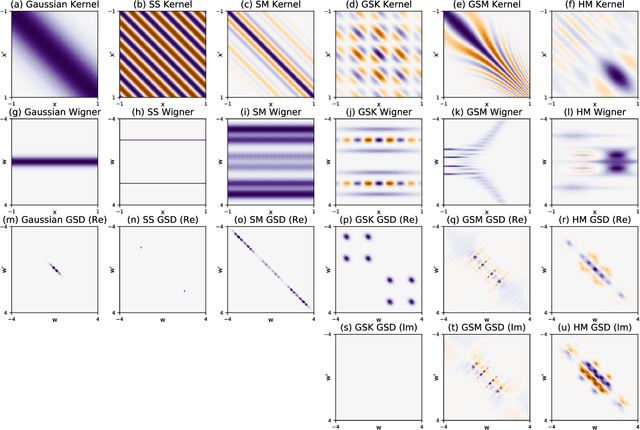

Abstract: We introduce the convolutional spectral kernel (CSK), a novel family of interpretable and non-stationary kernels derived from the convolution of two imaginary radial basis functions. We propose the input-frequency spectrogram as a novel tool to analyze nonparametric kernels as well as the kernels of deep Gaussian processes (DGPs). Observing through the lens of the spectrogram, we shed light on the interpretability of deep models, along with useful insights for effective inference. We also present scalable variational and stochastic Hamiltonian Monte Carlo inference to learn rich, yet interpretable frequency patterns from data using DGPs constructed via covariance functions. Empirically, we show on simulated and real-world datasets that CSK extracts meaningful non-stationary periodicities.

Harmonizable mixture kernels with variational Fourier features

Oct 11, 2018

Abstract: The expressive power of Gaussian processes depends heavily on the choice of kernel. In this work, we propose the novel harmonizable mixture kernel (HMK), a family of expressive, interpretable, non-stationary kernels derived from mixture models on the generalized spectral representation. As a theoretically sound treatment of non-stationary kernels, HMK supports harmonizable covariances, a wide subset of kernels including all stationary and many non-stationary covariances. We also propose variational Fourier features, an inter-domain sparse GP inference framework that offers a representative set of 'inducing frequencies'. We show that harmonizable mixture kernels interpolate between local patterns, and that variational Fourier features offers a robust kernel learning framework for the new kernel family.
