Zhengdao Li

DeCo-DETR: Decoupled Cognition DETR for efficient Open-Vocabulary Object Detection

Apr 03, 2026

Abstract:Open-vocabulary Object Detection (OVOD) enables models to recognize objects beyond predefined categories, but existing approaches remain limited in practical deployment. On the one hand, multimodal designs often incur substantial computational overhead due to their reliance on text encoders at inference time. On the other hand, tightly coupled training objectives introduce a trade-off between closed-set detection accuracy and open-world generalization. Thus, we propose Decoupled Cognition DETR (DeCo-DETR), a vision-centric framework that addresses these challenges through a unified decoupling paradigm. Instead of depending on online text encoding, DeCo-DETR constructs a hierarchical semantic prototype space from region-level descriptions generated by pre-trained LVLMs and aligned via CLIP, enabling efficient and reusable semantic representation. Building upon this representation, the framework further disentangles semantic reasoning from localization through a decoupled training strategy, which separates alignment and detection into parallel optimization streams. Extensive experiments on standard OVOD benchmarks demonstrate that DeCo-DETR achieves competitive zero-shot detection performance while significantly improving inference efficiency. These results highlight the effectiveness of decoupling semantic cognition from detection, offering a practical direction for scalable OVOD systems.
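To make the idea of replacing online text encoding with reusable prototypes concrete, here is a minimal sketch of prototype-based region classification. It is an illustration under stated assumptions, not the DeCo-DETR implementation: the prototype space is flat rather than hierarchical, and the names `l2_normalize`, `classify_regions`, and the `temperature` value are hypothetical. The prototypes stand in for embeddings computed once offline, so no text encoder runs at inference time.

```python
import numpy as np


def l2_normalize(x, eps=1e-12):
    """Scale each row to unit length so dot products become cosine similarities."""
    norms = np.linalg.norm(x, axis=-1, keepdims=True)
    return x / np.maximum(norms, eps)


def classify_regions(region_feats, prototypes, temperature=0.01):
    """Assign each region embedding to its most similar cached prototype.

    region_feats: (N, D) array of region embeddings from a detector (assumed).
    prototypes:   (K, D) array of class prototypes precomputed offline (assumed).
    Returns (labels, probs) with shapes (N,) and (N, K).
    """
    sims = l2_normalize(region_feats) @ l2_normalize(prototypes).T
    logits = sims / temperature
    # Subtract the row maximum before exponentiating for numerical stability.
    logits = logits - logits.max(axis=1, keepdims=True)
    probs = np.exp(logits)
    probs = probs / probs.sum(axis=1, keepdims=True)
    return probs.argmax(axis=1), probs


# Toy usage: two prototypes in a 2-D embedding space.
prototypes = np.array([[1.0, 0.0], [0.0, 1.0]])
regions = np.array([[2.0, 0.1], [0.1, 3.0]])
labels, probs = classify_regions(regions, prototypes)
```

Because the prototype matrix is fixed after the offline step, the per-image cost of classification in this sketch is a single matrix product.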

Improved GNSS Positioning in Urban Environments Using a Logistic Error Model

Mar 17, 2026

Abstract:A Gaussian error assumption is commonly adopted in the pseudorange measurement model for global navigation satellite system (GNSS) positioning, which leads to the conventional least squares (LS) estimator. In urban environments, however, multipath and non-line-of-sight (NLOS) receptions produce heavy-tailed pseudorange errors that are not well represented by the Gaussian model. This study models urban GNSS pseudorange errors using a logistic distribution and derives the corresponding maximum likelihood estimator, termed the Least Quasi-Log-Cosh (LQLC) estimator. The resulting estimation problem is solved efficiently using an iteratively reweighted least squares (IRLS) algorithm. Experiments in light, medium, and deep urban environments show that LQLC consistently outperforms LS, reducing the three-dimensional (3D) root mean square error (RMSE) by approximately 11%-31% and the 3D error standard deviation (STD) by approximately 27%-61%. A controlled scale-mismatch analysis further shows that LQLC is more sensitive to severe underestimation than to overestimation of the logistic scale, indicating that the practical tuning requirement is to avoid overly small scale values rather than to achieve exact scale matching. In addition, the computational cost remains compatible with real-time positioning. These results indicate that logistic modeling provides a simple and practical alternative to Gaussian-based urban GNSS positioning.
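The following is a minimal sketch of how a logistic maximum likelihood fit can be solved with IRLS. It is not the paper's LQLC implementation: it assumes a generic linear model `y = A x + e` with logistic errors of known scale, whereas GNSS positioning would first linearize the pseudorange equations. The function names and defaults are hypothetical. For a logistic error with scale `s`, the negative log-likelihood of a residual `r` is `2 log cosh(r / (2s))` up to a constant, so the IRLS weight is `tanh(r / (2s)) / (s r)`.

```python
import numpy as np


def logistic_irls_weights(residuals, scale):
    """IRLS weights psi(r) / r for a logistic error model with the given scale."""
    z = np.abs(residuals) / (2.0 * scale)
    small = z < 1e-8
    safe_z = np.where(small, 1.0, z)  # avoid 0/0; tanh(z)/z -> 1 as z -> 0
    ratio = np.where(small, 1.0, np.tanh(safe_z) / safe_z)
    return ratio / (2.0 * scale ** 2)


def irls_logistic(A, y, scale=1.0, iters=50, tol=1e-10):
    """Estimate x in y = A x + e under logistic errors, via IRLS."""
    x = np.linalg.lstsq(A, y, rcond=None)[0]  # start from ordinary LS
    for _ in range(iters):
        w = logistic_irls_weights(y - A @ x, scale)
        sw = np.sqrt(w)
        # Weighted LS step: scale rows of A and y by sqrt(w).
        x_new = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)[0]
        converged = np.linalg.norm(x_new - x) < tol
        x = x_new
        if converged:
            break
    return x


# Toy usage: estimate a constant from nine clean samples and one gross outlier.
A = np.ones((10, 1))
y = np.array([0.0] * 9 + [100.0])
x_ls = np.linalg.lstsq(A, y, rcond=None)[0]
x_logistic = irls_logistic(A, y, scale=1.0)
```

In this toy case the LS estimate is the sample mean, 10, while the logistic estimate stays close to the clean samples, because large residuals receive weights that shrink roughly as `1 / |r|`.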

Rethinking the Effectiveness of Graph Classification Datasets in Benchmarks for Assessing GNNs

Jul 06, 2024

Abstract:Graph classification benchmarks, vital for assessing and developing graph neural networks (GNNs), have recently been scrutinized, as simple methods like MLPs have demonstrated comparable performance. This leads to an important question: Do these benchmarks effectively distinguish the advancements of GNNs over other methodologies? If so, how do we quantitatively measure this effectiveness? In response, we first propose an empirical protocol based on a fair benchmarking framework to investigate the performance discrepancy between simple methods and GNNs. We further propose a novel metric that quantifies dataset effectiveness by considering both dataset complexity and model performance. To the best of our knowledge, our work is the first to thoroughly study and explicitly define dataset effectiveness in the graph learning area. Testing across 16 real-world datasets, we find that our metric aligns with existing studies and intuitive assumptions. Finally, we explore the causes behind the low effectiveness of certain datasets by investigating the correlation between intrinsic graph properties and class labels, and we develop a novel technique that supports correlation-controllable synthetic dataset generation. Our findings shed light on the current understanding of benchmark datasets, and our new platform could fuel the future evolution of graph classification benchmarks.

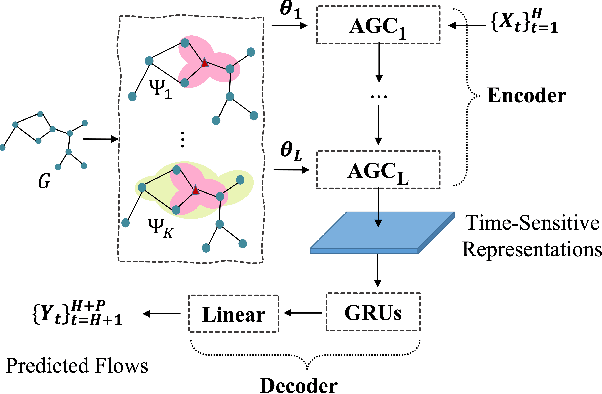

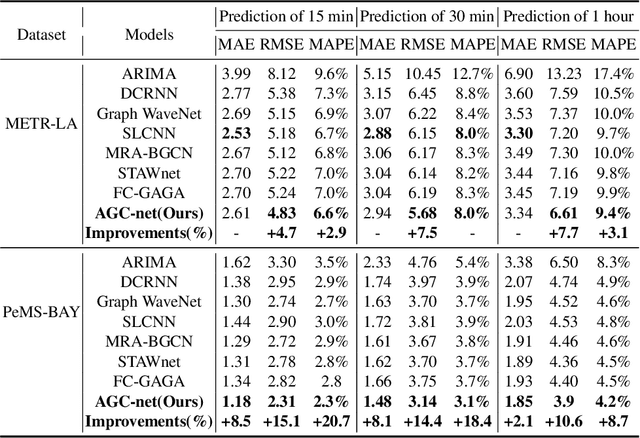

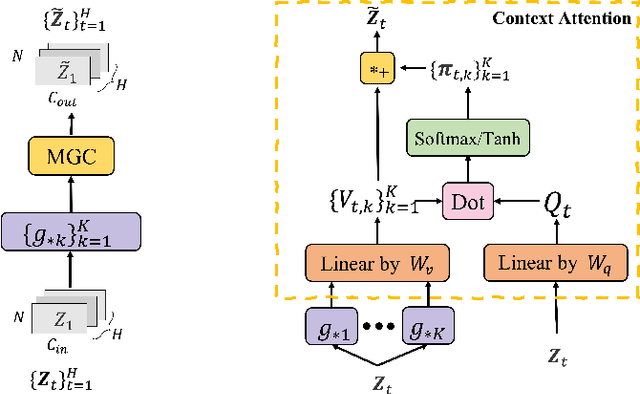

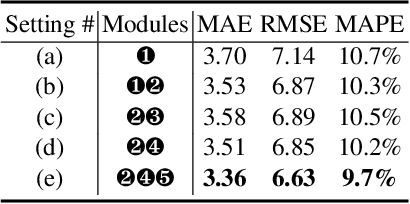

Adaptive Graph Convolution Networks for Traffic Flow Forecasting

Jul 07, 2023

Abstract:Traffic flow forecasting is a highly challenging task due to the dynamic spatial-temporal road conditions. Graph neural networks (GNNs) have been widely applied to this task. However, most of these GNNs ignore the effects of time-varying road conditions due to the fixed range of the convolution receptive field. In this paper, we propose a novel Adaptive Graph Convolution Network (AGC-net) to address this issue. AGC-net is constructed from the Adaptive Graph Convolution (AGC), based on a novel context attention mechanism, which consists of a set of graph wavelets with various learnable scales. The AGC transforms the spatial graph representations into time-sensitive features considering the temporal context. Moreover, a shifted graph convolution kernel is designed to enhance the AGC by attempting to correct the deviations caused by inaccurate topology. Experimental results on two public traffic datasets demonstrate the effectiveness of AGC-net, which significantly outperforms other baseline models. Code is available at https://github.com/zhengdaoli/AGC-net.

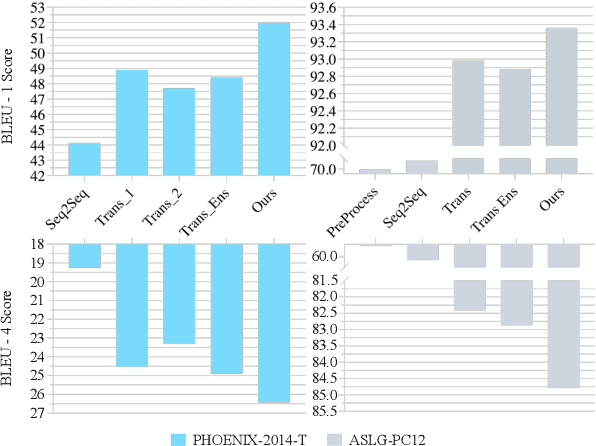

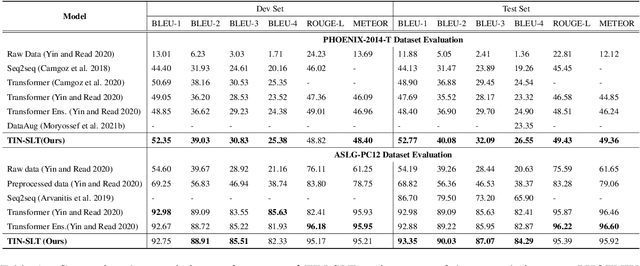

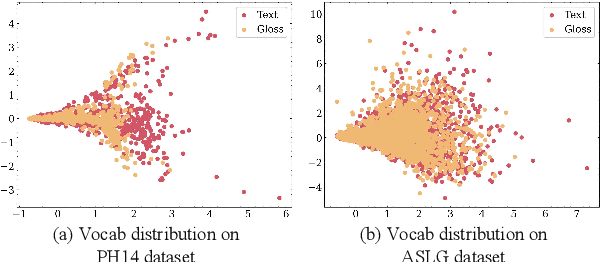

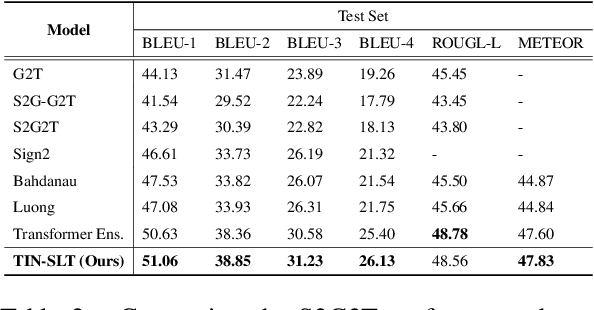

Explore More Guidance: A Task-aware Instruction Network for Sign Language Translation Enhanced with Data Augmentation

Apr 12, 2022

Abstract:Sign language recognition and translation first uses a recognition module to generate glosses from sign language videos and then employs a translation module to translate glosses into spoken sentences. Most existing works focus on the recognition step, while paying less attention to sign language translation. In this work, we propose a task-aware instruction network, namely TIN-SLT, for sign language translation, by introducing an instruction module and a learning-based feature fusion strategy into a Transformer network. In this way, the pre-trained model's language ability can be well explored and utilized to further boost the translation performance. Moreover, by exploring the representation space of sign language glosses and the target spoken language, we propose a multi-level data augmentation scheme to adjust the data distribution of the training set. We conduct extensive experiments on two challenging benchmark datasets, PHOENIX-2014-T and ASLG-PC12, on which our method outperforms the previous best solutions by 1.65 and 1.42 BLEU-4, respectively. Our code is published at https://github.com/yongcaoplus/TIN-SLT.
