Zhefan Ye

GRIP: Generative Robust Inference and Perception for Semantic Robot Manipulation in Adversarial Environments

Mar 20, 2019

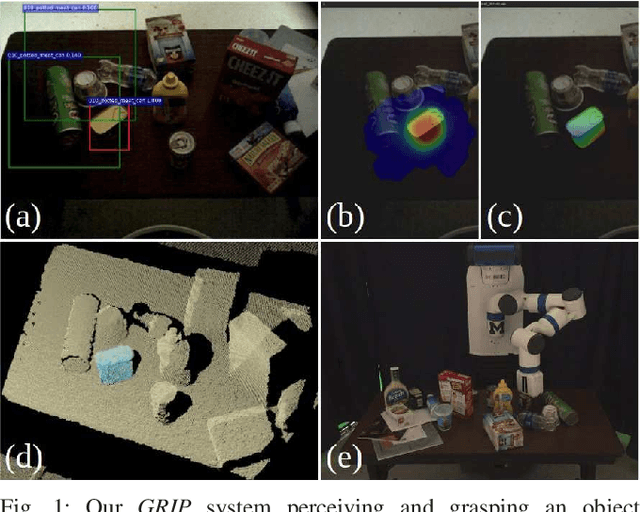

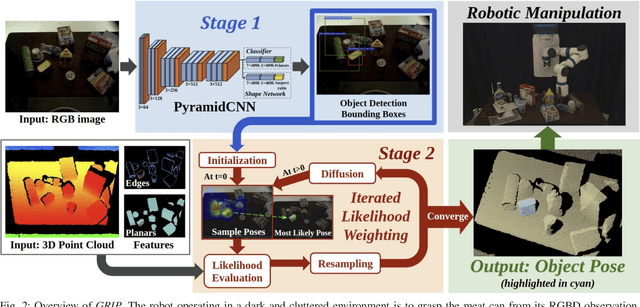

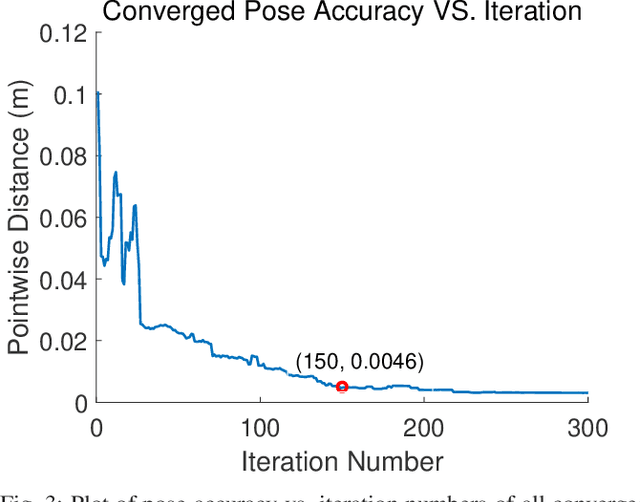

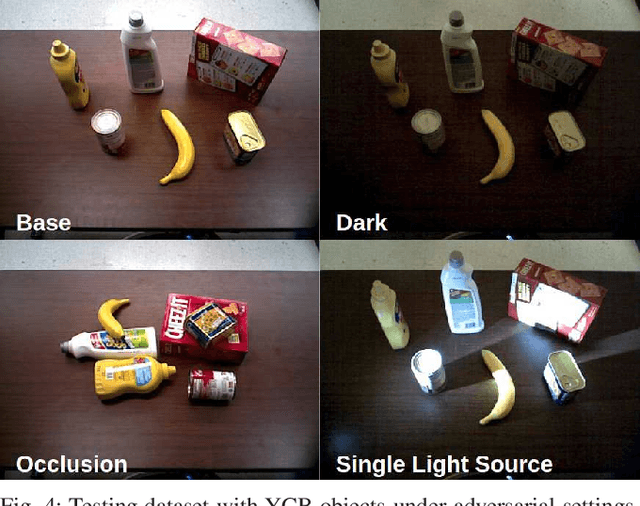

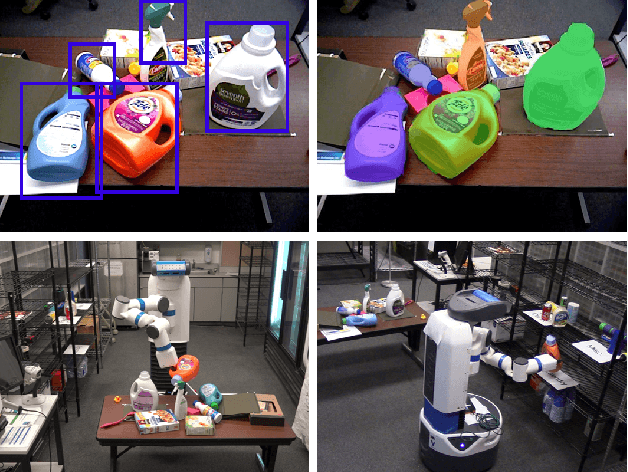

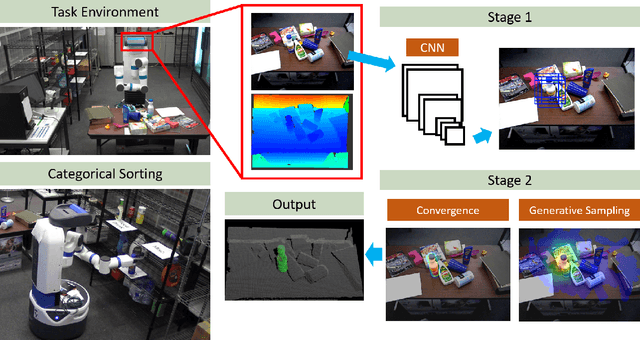

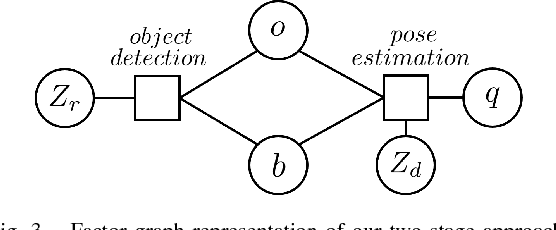

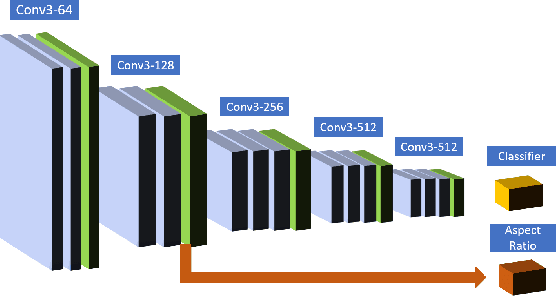

Abstract: Recent advancements have led to a proliferation of machine learning systems used to assist humans in a wide range of tasks. However, we are still far from accurate, reliable, and resource-efficient operation of these systems. For robot perception, convolutional neural networks (CNNs) for object detection and pose estimation have recently come into widespread use. However, neural networks are known to suffer from overfitting during training and are less robust in unseen conditions, which makes them especially vulnerable to adversarial scenarios. In this work, we propose Generative Robust Inference and Perception (GRIP), a two-stage object detection and pose estimation system that aims to combine the relative strengths of discriminative CNNs and generative inference methods to achieve robust estimation. Our results show that a second stage of sample-based generative inference is able to recover from false object detections by CNNs and produce robust estimates in adversarial conditions. We demonstrate the robustness of GRIP through comparison with state-of-the-art learning-based pose estimators and through pick-and-place manipulation in dark and cluttered environments.

Never Mind the Bounding Boxes, Here's the SAND Filters

Aug 15, 2018

Abstract: Perception is the main bottleneck in performing autonomous mobile manipulation tasks, especially in cluttered and unstructured environments. In this paper, we propose a novel two-stage paradigm that leverages both a CNN object prior and generative sampling to perform object detection and 6D pose estimation. Our two-stage approach builds upon both a CNN and a generative sampling-based local search method to sample the network density, which we call the SAND filter. Our quantitative results show that SAND effectively improves object detection by reducing false positives and false negatives, and further produces accurate pose estimates. We also conduct extensive categorical object sorting experiments to show that our method produces accurate and reliable detections and object poses.
