Yuzhou Lin

Predicting Continuous Locomotion Modes via Multidimensional Feature Learning from sEMG

Nov 13, 2023

Abstract:Walking-assistive devices require adaptive control methods to ensure smooth transitions between various modes of locomotion. For this purpose, detecting human locomotion modes (e.g., level walking or stair ascent) in advance is crucial for improving the intelligence and transparency of such robotic systems. This study proposes Deep-STF, a unified end-to-end deep learning model designed for integrated feature extraction in spatial, temporal, and frequency dimensions from surface electromyography (sEMG) signals. Our model enables accurate and robust continuous prediction of nine locomotion modes and 15 transitions at varying prediction time intervals, ranging from 100 to 500 ms. In addition, we introduced the concept of 'stable prediction time' as a distinct metric to quantify prediction efficiency. This term refers to the duration during which consistent and accurate predictions of mode transitions are made, measured from the time of the fifth correct prediction to the occurrence of the critical event leading to the task transition. This distinction between stable prediction time and prediction time is vital as it underscores our focus on the precision and reliability of mode transition predictions. Experimental results showcased Deep-STP's cutting-edge prediction performance across diverse locomotion modes and transitions, relying solely on sEMG data. When forecasting 100 ms ahead, Deep-STF surpassed CNN and other machine learning techniques, achieving an outstanding average prediction accuracy of 96.48%. Even with an extended 500 ms prediction horizon, accuracy only marginally decreased to 93.00%. The averaged stable prediction times for detecting next upcoming transitions spanned from 28.15 to 372.21 ms across the 100-500 ms time advances.

A novel DL approach to PE malware detection: exploring Glove vectorization, MCC_RCNN and feature fusion

Feb 04, 2021

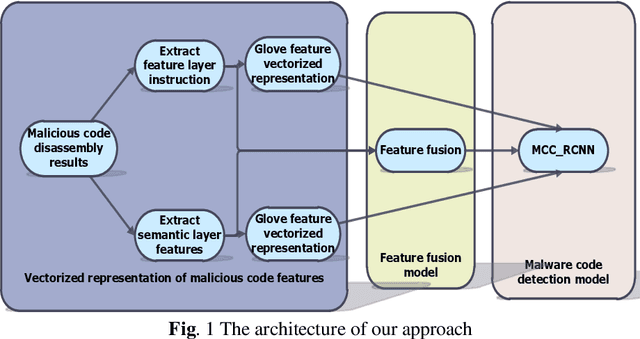

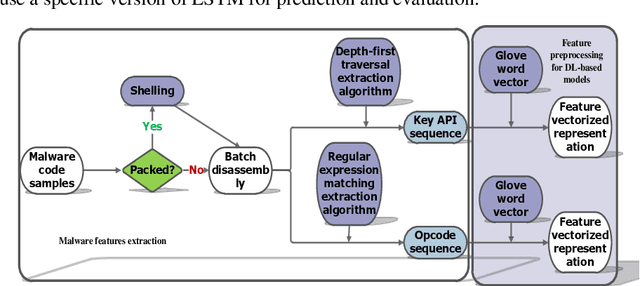

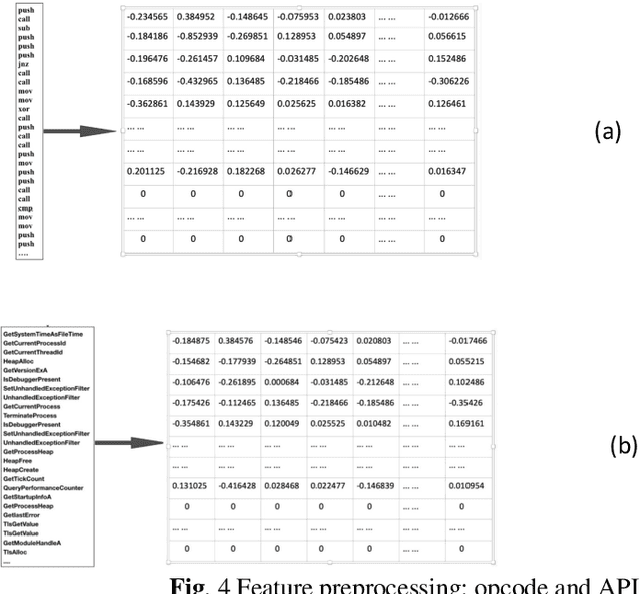

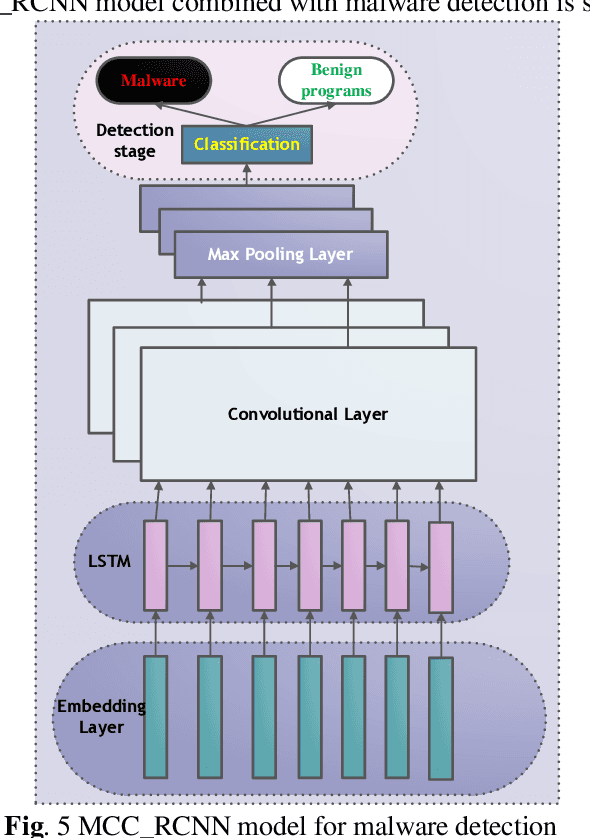

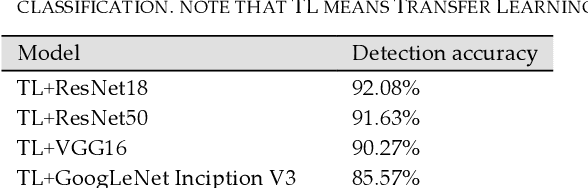

Abstract:In recent years, malware becomes more threatening. Concerning the increasing malware variants, there comes Machine Learning (ML)-based and Deep Learning (DL)-based approaches for heuristic detection. Nevertheless, the prediction accuracy of both needs to be improved. In response to the above issues in the PE malware domain, we propose the DL-based approaches for detection and use static-based features fed up into models. The contributions are as follows: we recapitulate existing malware detection methods. That is, we propose a vec-torized representation model of the malware instruction layer and semantic layer based on Glove. We implement a neural network model called MCC_RCNN (Malware Detection and Recurrent Convolutional Neural Network), comprising of the combination with CNN and RNN. Moreover, we provide a description of feature fusion in static behavior levels. With the numerical results generated from several comparative experiments towards evaluating the Glove-based vectoriza-tion, MCC_RCNN-based classification methodology and feature fusion stages, our proposed classification methods can obtain a higher prediction accuracy than the other baseline methods.

Towards interpreting ML-based automated malware detection models: a survey

Jan 15, 2021

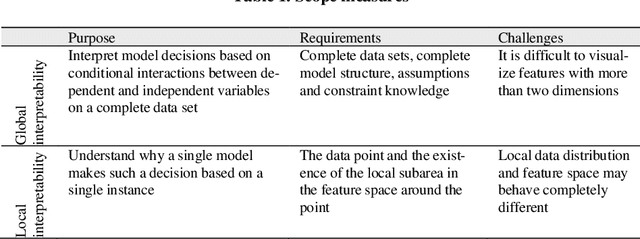

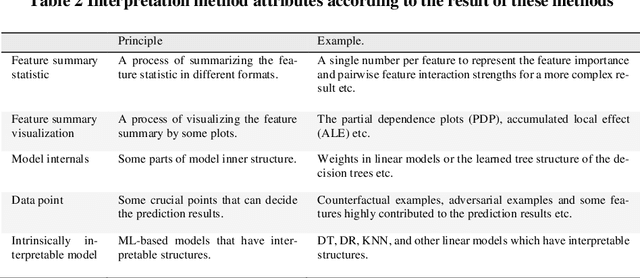

Abstract:Malware is being increasingly threatening and malware detectors based on traditional signature-based analysis are no longer suitable for current malware detection. Recently, the models based on machine learning (ML) are developed for predicting unknown malware variants and saving human strength. However, most of the existing ML models are black-box, which made their pre-diction results undependable, and therefore need further interpretation in order to be effectively deployed in the wild. This paper aims to examine and categorize the existing researches on ML-based malware detector interpretability. We first give a detailed comparison over the previous work on common ML model inter-pretability in groups after introducing the principles, attributes, evaluation indi-cators and taxonomy of common ML interpretability. Then we investigate the interpretation methods towards malware detection, by addressing the importance of interpreting malware detectors, challenges faced by this field, solutions for migitating these challenges, and a new taxonomy for classifying all the state-of-the-art malware detection interpretability work in recent years. The highlight of our survey is providing a new taxonomy towards malware detection interpreta-tion methods based on the common taxonomy summarized by previous re-searches in the common field. In addition, we are the first to evaluate the state-of-the-art approaches by interpretation method attributes to generate the final score so as to give insight to quantifying the interpretability. By concluding the results of the recent researches, we hope our work can provide suggestions for researchers who are interested in the interpretability on ML-based malware de-tection models.

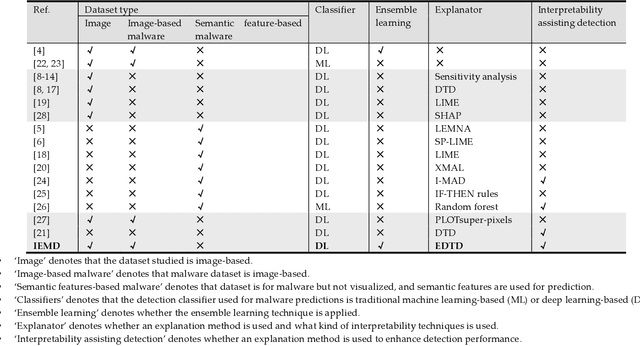

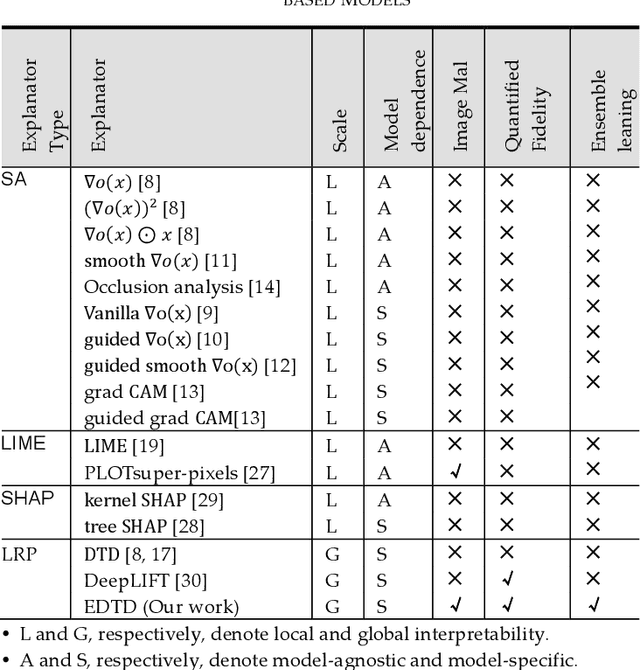

Towards Interpretable Ensemble Learning for Image-based Malware Detection

Jan 13, 2021

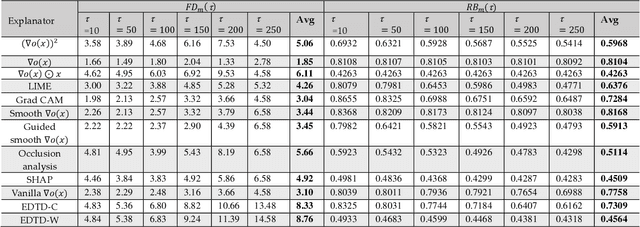

Abstract:Deep learning (DL) models for image-based malware detection have exhibited their capability in producing high prediction accuracy. But model interpretability is posing challenges to their widespread application in security and safety-critical application domains. This paper aims for designing an Interpretable Ensemble learning approach for image-based Malware Detection (IEMD). We first propose a Selective Deep Ensemble Learning-based (SDEL) detector and then design an Ensemble Deep Taylor Decomposition (EDTD) approach, which can give the pixel-level explanation to SDEL detector outputs. Furthermore, we develop formulas for calculating fidelity, robustness and expressiveness on pixel-level heatmaps in order to assess the quality of EDTD explanation. With EDTD explanation, we develop a novel Interpretable Dropout approach (IDrop), which establishes IEMD by training SDEL detector. Experiment results exhibit the better explanation of our EDTD than the previous explanation methods for image-based malware detection. Besides, experiment results indicate that IEMD achieves a higher detection accuracy up to 99.87% while exhibiting interpretability with high quality of prediction results. Moreover, experiment results indicate that IEMD interpretability increases with the increasing detection accuracy during the construction of IEMD. This consistency suggests that IDrop can mitigate the tradeoff between model interpretability and detection accuracy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge