Yuting Shi

Phase Diagram of Vision Large Language Models Inference: A Perspective from Interaction across Image and Instruction

Nov 01, 2024

Abstract: Vision Large Language Models (VLLMs) usually take as input a concatenation of image token embeddings and text token embeddings and conduct causal modeling. However, their internal behaviors remain underexplored, leaving open the question of how the two types of tokens interact. To investigate such multimodal interaction during model inference, in this paper, we measure the contextualization among the hidden state vectors of tokens from different modalities. Our experiments uncover four-phase inference dynamics of VLLMs along the depth of Transformer-based LMs: (I) Alignment: In very early layers, contextualization emerges between modalities, suggesting a feature space alignment. (II) Intra-modal Encoding: In early layers, intra-modal contextualization is enhanced while inter-modal interaction is suppressed, suggesting a local encoding within modalities. (III) Inter-modal Encoding: In later layers, contextualization across modalities is enhanced, suggesting a deeper fusion across modalities. (IV) Output Preparation: In very late layers, contextualization is reduced globally, and hidden states are aligned towards the unembedding space.

MoG-QSM: Model-based Generative Adversarial Deep Learning Network for Quantitative Susceptibility Mapping

Jan 21, 2021

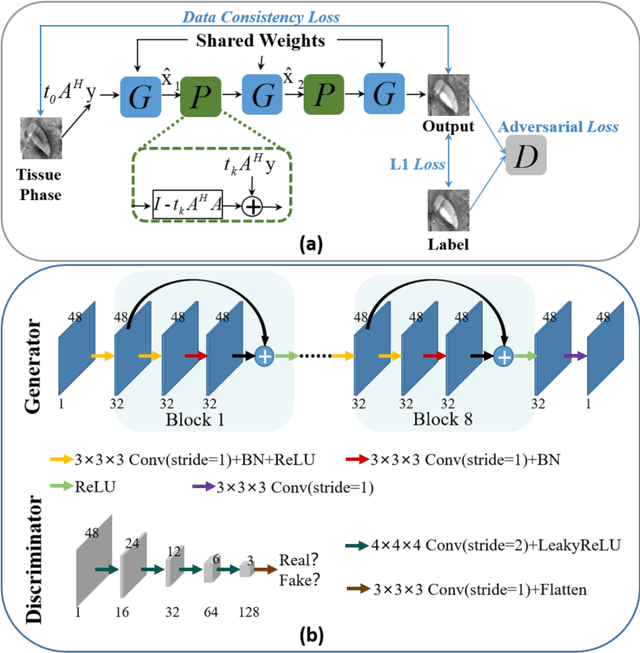

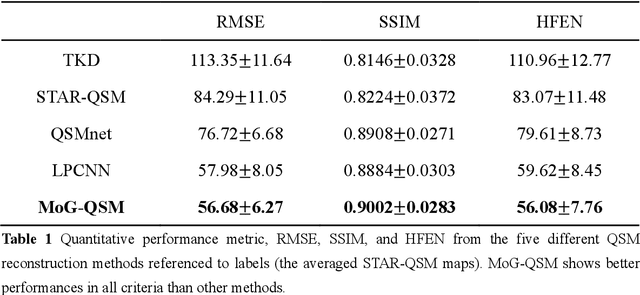

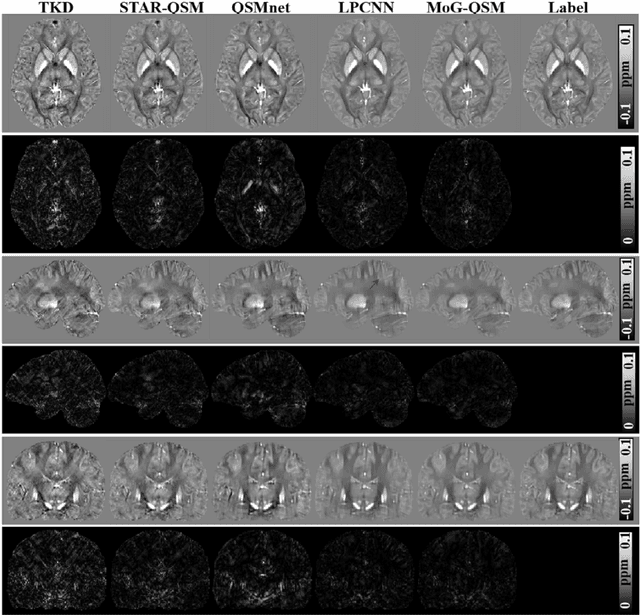

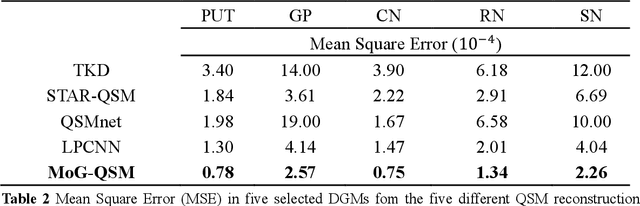

Abstract: Quantitative susceptibility mapping (QSM) estimates the underlying tissue magnetic susceptibility from the MRI gradient-echo phase signal and has demonstrated great potential in quantifying tissue susceptibility in various brain diseases. However, the intrinsically ill-posed inverse problem relating the tissue phase to the underlying susceptibility distribution limits the accuracy of quantifying tissue susceptibility. The resulting susceptibility map is known to suffer from noise amplification and streaking artifacts. To address these challenges, we propose a model-based framework, referred to as MoG-QSM, that incorporates the benefits of generative adversarial networks to train a regularization term containing prior information that constrains the solution of the inverse problem. A residual network leveraging a mixture of least-squares (LS) GAN and the L1 cost was trained as the generator to learn the prior information in susceptibility maps. A multilayer convolutional neural network was jointly trained to discriminate the quality of output images. MoG-QSM generates highly accurate susceptibility maps from single-orientation phase maps. Quantitative evaluation parameters were compared with recently developed deep learning QSM methods, and the results showed that MoG-QSM achieves the best performance. Furthermore, a higher intraclass correlation coefficient (ICC) was obtained from MoG-QSM maps of the traveling subjects, demonstrating its potential for future applications, such as multi-center studies of large cohorts. MoG-QSM is also helpful for reliable longitudinal measurement of susceptibility time courses, enabling more precise monitoring of metal ion accumulation in neurodegenerative disorders.
