Yuhan Zhou

A Survey on Data Quality Dimensions and Tools for Machine Learning

Jun 28, 2024

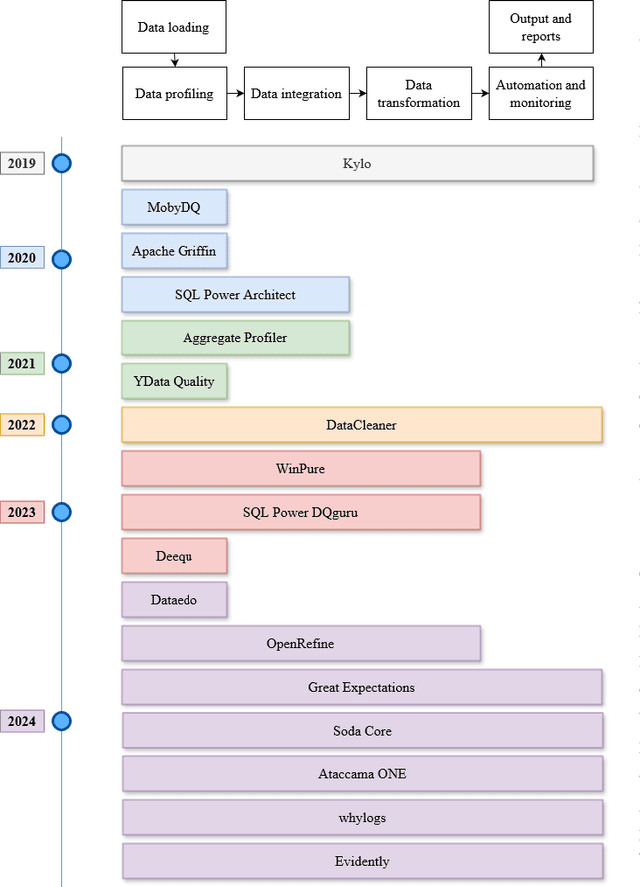

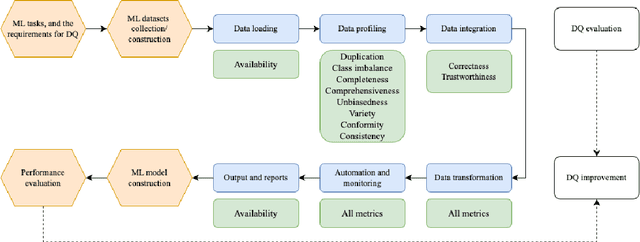

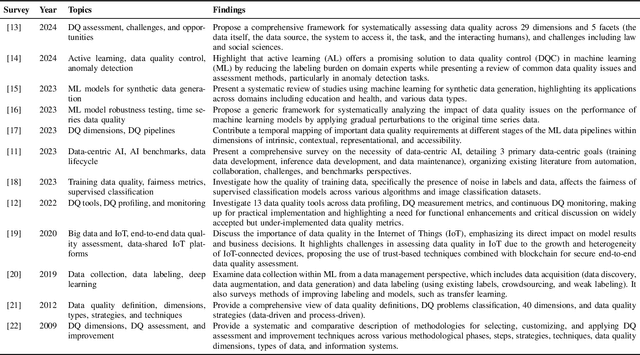

Abstract: Machine learning (ML) technologies have become integral to practically all aspects of our society, and data quality (DQ) is critical for the performance, fairness, robustness, safety, and scalability of ML models. Given the large and complex datasets of data-centric AI, traditional methods such as exploratory data analysis (EDA) and cross-validation (CV) face challenges, which highlights the importance of mastering DQ tools. In this survey, we review 17 DQ evaluation and improvement tools introduced in the last 5 years. By introducing the DQ dimensions, metrics, and main functions embedded in these tools, we compare their strengths and limitations and propose a roadmap for developing open-source DQ tools for ML. Based on a discussion of the challenges and emerging trends, we further highlight the potential applications of large language models (LLMs) and generative AI in DQ evaluation and improvement for ML. We believe this comprehensive survey can enhance understanding of DQ in ML and could drive progress in data-centric AI. A complete list of the literature investigated in this survey is available on GitHub at: https://github.com/haihua0913/awesome-dq4ml.
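To make the idea of a DQ dimension and its metric concrete, here is a minimal sketch computing two commonly cited dimensions over a small tabular dataset. Completeness is taken as the share of non-missing cells and uniqueness as the share of distinct rows. The function names, the use of `None` to mark a missing value, and the choice of these two metrics are illustrative assumptions. They are not taken from any of the 17 surveyed tools.

```python
from typing import Any, Optional, Sequence

# A row is a sequence of cell values; None marks a missing cell (assumption).
Row = Sequence[Optional[Any]]


def completeness(rows: Sequence[Row]) -> float:
    """Fraction of cells that are not missing; 1.0 for an empty table."""
    total = sum(len(r) for r in rows)
    if total == 0:
        return 1.0
    filled = sum(1 for r in rows for v in r if v is not None)
    return filled / total


def uniqueness(rows: Sequence[Row]) -> float:
    """Fraction of rows that are distinct; exact duplicates lower the score."""
    if not rows:
        return 1.0
    return len({tuple(r) for r in rows}) / len(rows)


if __name__ == "__main__":
    data = [
        (1, "a", 0.5),
        (2, None, 0.7),
        (1, "a", 0.5),  # exact duplicate of the first row
        (3, "c", None),
    ]
    print(f"completeness={completeness(data):.3f}")  # completeness=0.833
    print(f"uniqueness={uniqueness(data):.3f}")      # uniqueness=0.750
```

Real DQ tools typically add many more dimensions, such as consistency, accuracy, and label noise. They also compute these metrics per column, not over the whole table.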

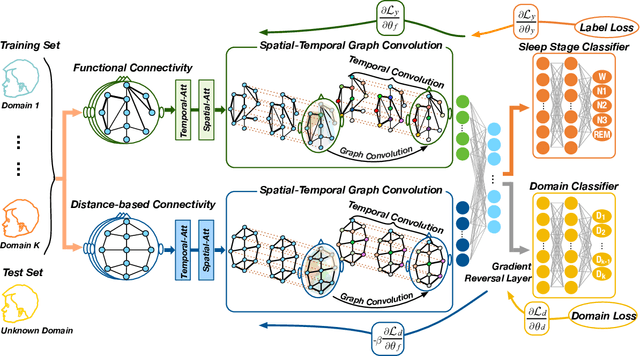

Multi-View Spatial-Temporal Graph Convolutional Networks with Domain Generalization for Sleep Stage Classification

Sep 04, 2021

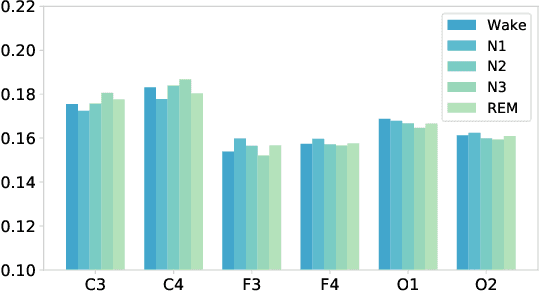

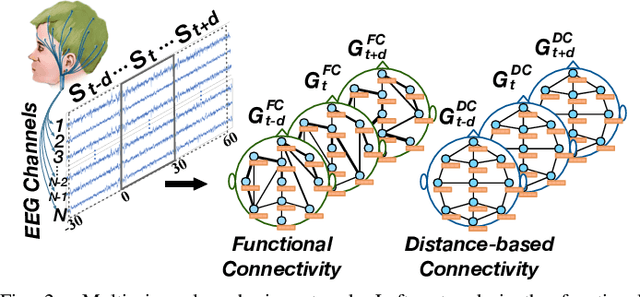

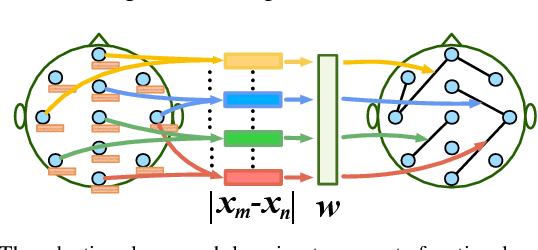

Abstract: Sleep stage classification is essential for sleep assessment and disease diagnosis. Although previous attempts to classify sleep stages have achieved high classification performance, several challenges remain open: 1) Effectively utilizing time-varying spatial and temporal features from multi-channel brain signals remains challenging, and prior works have not been able to fully exploit the spatial topological information among brain regions. 2) Because biological signals differ substantially between individuals, overcoming inter-subject differences to improve the generalization of deep neural networks is important. 3) Most deep learning methods ignore the interpretability of the model with respect to the brain. To address these challenges, we propose a multi-view spatial-temporal graph convolutional network (MSTGCN) with domain generalization for sleep stage classification. Specifically, we construct two brain view graphs for MSTGCN based on the functional connectivity and physical distance proximity of the brain regions. The MSTGCN consists of graph convolutions for extracting spatial features and temporal convolutions for capturing the transition rules among sleep stages. In addition, an attention mechanism is employed to capture the most relevant spatial-temporal information for sleep stage classification. Finally, domain generalization and MSTGCN are integrated into a unified framework to extract subject-invariant sleep features. Experiments on two public datasets demonstrate that the proposed model outperforms the state-of-the-art baselines.
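The two brain views and the spatial graph convolution described above can be illustrated with a minimal NumPy sketch. The sketch rests on several assumptions. The physical-distance view uses a Gaussian kernel on pairwise electrode distances with a hypothetical bandwidth `sigma`. The functional-connectivity view uses the absolute Pearson correlation between channel signals. The convolution is a single layer with the widely used symmetric normalization D^-1/2 (A + I) D^-1/2. All function names are hypothetical, and combining the two views by concatenation is also an assumption. The paper's exact graph construction, temporal convolutions, attention, and domain-generalization components are not reproduced here.

```python
import numpy as np


def distance_adjacency(coords: np.ndarray, sigma: float = 1.0) -> np.ndarray:
    """Hypothetical physical-distance view: Gaussian kernel on electrode distances."""
    diff = coords[:, None, :] - coords[None, :, :]  # (N, N, d) pairwise offsets
    dist_sq = np.sum(diff ** 2, axis=-1)             # squared Euclidean distances
    adj = np.exp(-dist_sq / (2.0 * sigma ** 2))
    np.fill_diagonal(adj, 0.0)                       # self-loops are added in normalize()
    return adj


def functional_adjacency(signals: np.ndarray) -> np.ndarray:
    """Hypothetical functional-connectivity view: |Pearson r| between channels.

    signals has shape (N channels, T samples).
    """
    adj = np.abs(np.corrcoef(signals))
    np.fill_diagonal(adj, 0.0)
    return adj


def normalize(adj: np.ndarray) -> np.ndarray:
    """Symmetric normalization D^-1/2 (A + I) D^-1/2."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def graph_conv(x: np.ndarray, adj_norm: np.ndarray, weight: np.ndarray) -> np.ndarray:
    """One graph-convolution layer: aggregate neighbor features, project, then ReLU."""
    return np.maximum(adj_norm @ x @ weight, 0.0)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n_channels, n_samples, n_feats, n_hidden = 4, 128, 5, 8
    coords = rng.normal(size=(n_channels, 3))            # toy electrode positions
    signals = rng.normal(size=(n_channels, n_samples))   # toy raw signals for one epoch
    x = rng.normal(size=(n_channels, n_feats))           # toy per-channel features
    w_dist = rng.normal(size=(n_feats, n_hidden))
    w_func = rng.normal(size=(n_feats, n_hidden))
    h = np.concatenate(
        [
            graph_conv(x, normalize(distance_adjacency(coords)), w_dist),
            graph_conv(x, normalize(functional_adjacency(signals)), w_func),
        ],
        axis=1,
    )
    print(h.shape)  # (4, 16)
```

Giving each view its own weight matrix lets the two graphs learn different projections before their outputs are merged. In a full model, learned or attention-weighted fusion would be natural alternatives to plain concatenation.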