Yue Zhuo

IG2: Integrated Gradient on Iterative Gradient Path for Feature Attribution

Jun 16, 2024Abstract:Feature attribution explains Artificial Intelligence (AI) at the instance level by providing importance scores of input features' contributions to model prediction. Integrated Gradients (IG) is a prominent path attribution method for deep neural networks, involving the integration of gradients along a path from the explained input (explicand) to a counterfactual instance (baseline). Current IG variants primarily focus on the gradient of explicand's output. However, our research indicates that the gradient of the counterfactual output significantly affects feature attribution as well. To achieve this, we propose Iterative Gradient path Integrated Gradients (IG2), considering both gradients. IG2 incorporates the counterfactual gradient iteratively into the integration path, generating a novel path (GradPath) and a novel baseline (GradCF). These two novel IG components effectively address the issues of attribution noise and arbitrary baseline choice in earlier IG methods. IG2, as a path method, satisfies many desirable axioms, which are theoretically justified in the paper. Experimental results on XAI benchmark, ImageNet, MNIST, TREC questions answering, wafer-map failure patterns, and CelebA face attributes validate that IG2 delivers superior feature attributions compared to the state-of-the-art techniques. The code is released at: https://github.com/JoeZhuo-ZY/IG2.

ABIGX: A Unified Framework for eXplainable Fault Detection and Classification

Nov 09, 2023Abstract:For explainable fault detection and classification (FDC), this paper proposes a unified framework, ABIGX (Adversarial fault reconstruction-Based Integrated Gradient eXplanation). ABIGX is derived from the essentials of previous successful fault diagnosis methods, contribution plots (CP) and reconstruction-based contribution (RBC). It is the first explanation framework that provides variable contributions for the general FDC models. The core part of ABIGX is the adversarial fault reconstruction (AFR) method, which rethinks the FR from the perspective of adversarial attack and generalizes to fault classification models with a new fault index. For fault classification, we put forward a new problem of fault class smearing, which intrinsically hinders the correct explanation. We prove that ABIGX effectively mitigates this problem and outperforms the existing gradient-based explanation methods. For fault detection, we theoretically bridge ABIGX with conventional fault diagnosis methods by proving that CP and RBC are the linear specifications of ABIGX. The experiments evaluate the explanations of FDC by quantitative metrics and intuitive illustrations, the results of which show the general superiority of ABIGX to other advanced explanation methods.

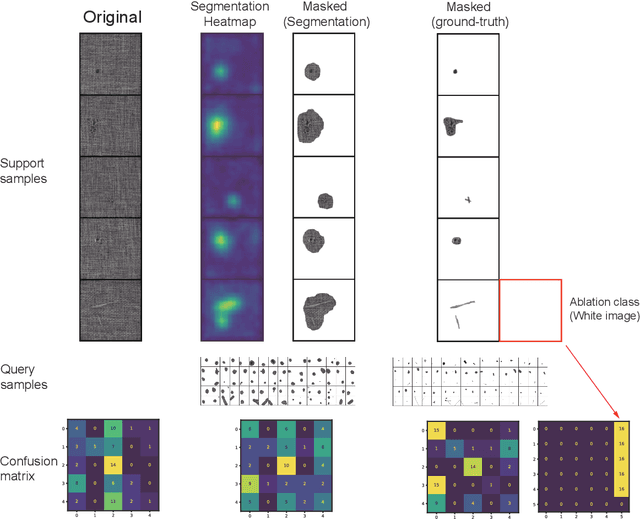

PatchProto Networks for Few-shot Visual Anomaly Classification

Oct 07, 2023

Abstract:The visual anomaly diagnosis can automatically analyze the defective products, which has been widely applied in industrial quality inspection. The anomaly classification can classify the defective products into different categories. However, the anomaly samples are hard to access in practice, which impedes the training of canonical machine learning models. This paper studies a practical issue that anomaly samples for training are extremely scarce, i.e., few-shot learning (FSL). Utilizing the sufficient normal samples, we propose PatchProto networks for few-shot anomaly classification. Different from classical FSL methods, PatchProto networks only extract CNN features of defective regions of interest, which serves as the prototypes for few-shot learning. Compared with basic few-shot classifier, the experiment results on MVTec-AD dataset show PatchProto networks significantly improve the few-shot anomaly classification accuracy.

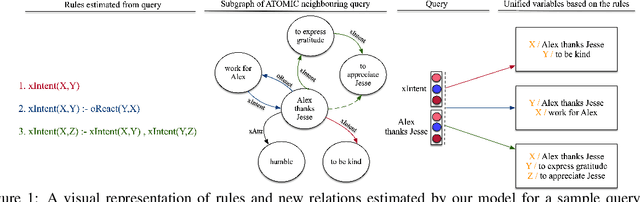

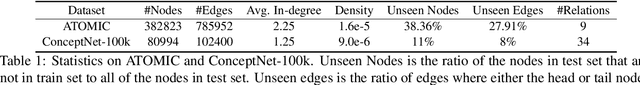

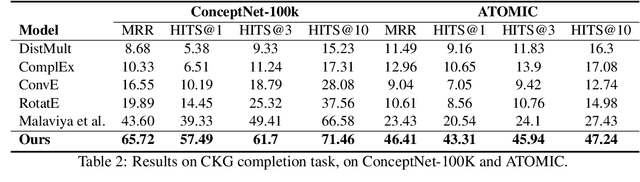

Neural-Symbolic Commonsense Reasoner with Relation Predictors

May 14, 2021

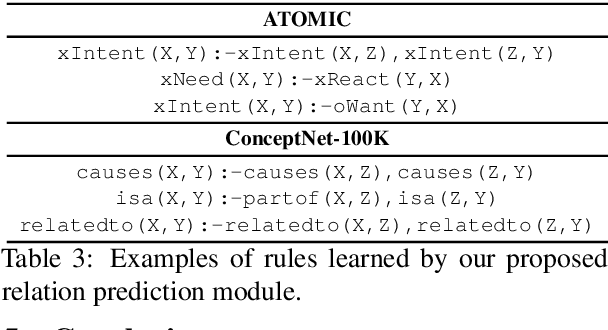

Abstract:Commonsense reasoning aims to incorporate sets of commonsense facts, retrieved from Commonsense Knowledge Graphs (CKG), to draw conclusion about ordinary situations. The dynamic nature of commonsense knowledge postulates models capable of performing multi-hop reasoning over new situations. This feature also results in having large-scale sparse Knowledge Graphs, where such reasoning process is needed to predict relations between new events. However, existing approaches in this area are limited by considering CKGs as a limited set of facts, thus rendering them unfit for reasoning over new unseen situations and events. In this paper, we present a neural-symbolic reasoner, which is capable of reasoning over large-scale dynamic CKGs. The logic rules for reasoning over CKGs are learned during training by our model. In addition to providing interpretable explanation, the learned logic rules help to generalise prediction to newly introduced events. Experimental results on the task of link prediction on CKGs prove the effectiveness of our model by outperforming the state-of-the-art models.

COSMO: Conditional SEQ2SEQ-based Mixture Model for Zero-Shot Commonsense Question Answering

Nov 02, 2020

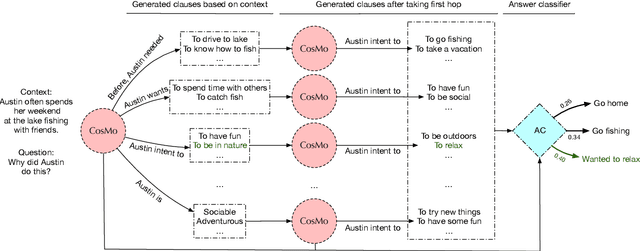

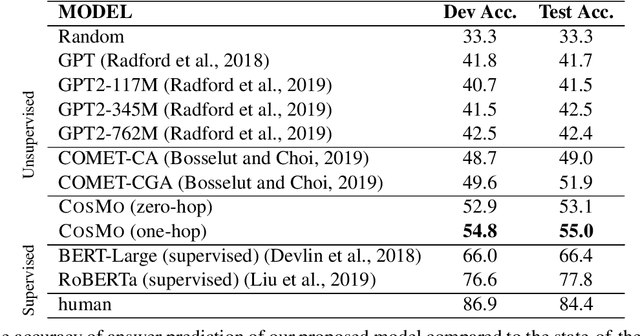

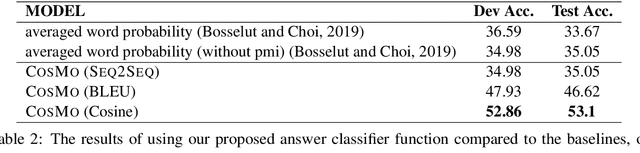

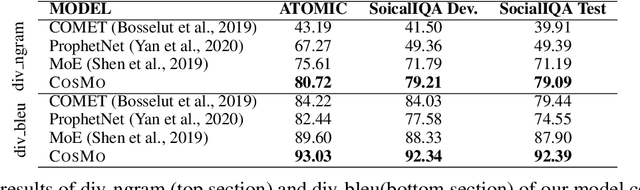

Abstract:Commonsense reasoning refers to the ability of evaluating a social situation and acting accordingly. Identification of the implicit causes and effects of a social context is the driving capability which can enable machines to perform commonsense reasoning. The dynamic world of social interactions requires context-dependent on-demand systems to infer such underlying information. However, current approaches in this realm lack the ability to perform commonsense reasoning upon facing an unseen situation, mostly due to incapability of identifying a diverse range of implicit social relations. Hence they fail to estimate the correct reasoning path. In this paper, we present Conditional SEQ2SEQ-based Mixture model (COSMO), which provides us with the capabilities of dynamic and diverse content generation. We use COSMO to generate context-dependent clauses, which form a dynamic Knowledge Graph (KG) on-the-fly for commonsense reasoning. To show the adaptability of our model to context-dependant knowledge generation, we address the task of zero-shot commonsense question answering. The empirical results indicate an improvement of up to +5.2% over the state-of-the-art models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge