Yiying Dong

REF-VLM: Triplet-Based Referring Paradigm for Unified Visual Decoding

Mar 10, 2025

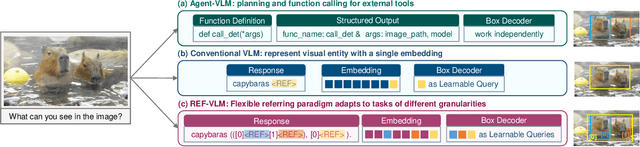

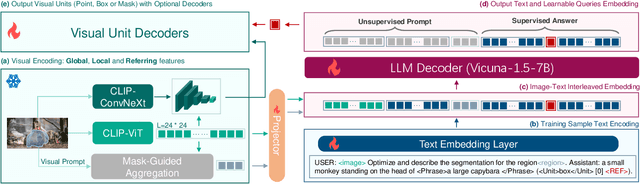

Abstract:Multimodal Large Language Models (MLLMs) demonstrate robust zero-shot capabilities across diverse vision-language tasks after training on mega-scale datasets. However, dense prediction tasks, such as semantic segmentation and keypoint detection, pose significant challenges for MLLMs when represented solely as text outputs. Simultaneously, current MLLMs utilizing latent embeddings for visual task decoding generally demonstrate limited adaptability to both multi-task learning and multi-granularity scenarios. In this work, we present REF-VLM, an end-to-end framework for unified training of various visual decoding tasks. To address complex visual decoding scenarios, we introduce the Triplet-Based Referring Paradigm (TRP), which explicitly decouples three critical dimensions in visual decoding tasks through a triplet structure: concepts, decoding types, and targets. TRP employs symbolic delimiters to enforce structured representation learning, enhancing the parsability and interpretability of model outputs. Additionally, we construct Visual-Task Instruction Following Dataset (VTInstruct), a large-scale multi-task dataset containing over 100 million multimodal dialogue samples across 25 task types. Beyond text inputs and outputs, VT-Instruct incorporates various visual prompts such as point, box, scribble, and mask, and generates outputs composed of text and visual units like box, keypoint, depth and mask. The combination of different visual prompts and visual units generates a wide variety of task types, expanding the applicability of REF-VLM significantly. Both qualitative and quantitative experiments demonstrate that our REF-VLM outperforms other MLLMs across a variety of standard benchmarks. The code, dataset, and demo available at https://github.com/MacavityT/REF-VLM.

Metasurface-generated large and arbitrary analog convolution kernels for accelerated machine vision

Sep 27, 2024

Abstract:In the rapidly evolving field of artificial intelligence, convolutional neural networks are essential for tackling complex challenges such as machine vision and medical diagnosis. Recently, to address the challenges in processing speed and power consumption of conventional digital convolution operations, many optical components have been suggested to replace the digital convolution layer in the neural network, accelerating various machine vision tasks. Nonetheless, the analog nature of the optical convolution kernel has not been fully explored. Here, we develop a spatial frequency domain training method to create arbitrarily shaped analog convolution kernels using an optical metasurface as the convolution layer, with its receptive field largely surpassing digital convolution kernels. By employing spatial multiplexing, the multiple parallel convolution kernels with both positive and negative weights are generated under the incoherent illumination condition. We experimentally demonstrate a 98.59% classification accuracy on the MNIST dataset, with simulations showing 92.63% and 68.67% accuracy on the Fashion-MNIST and CIFAR-10 datasets with additional digital layers. This work underscores the unique advantage of analog optical convolution, offering a promising avenue to accelerate machine vision tasks, especially in edge devices.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge