Yijie Dong

Multi-Attribute Attention Network for Interpretable Diagnosis of Thyroid Nodules in Ultrasound Images

Jul 09, 2022

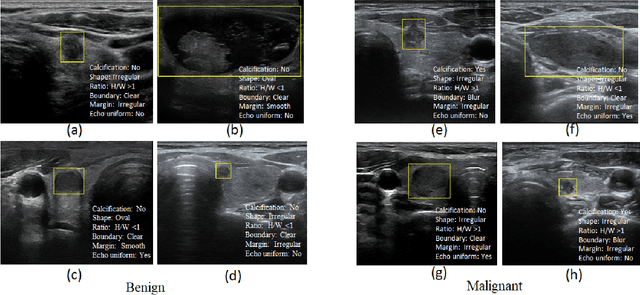

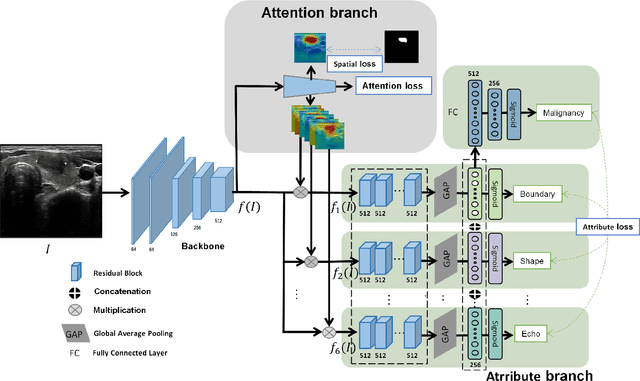

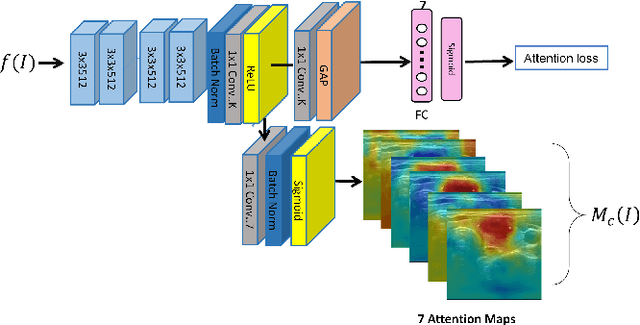

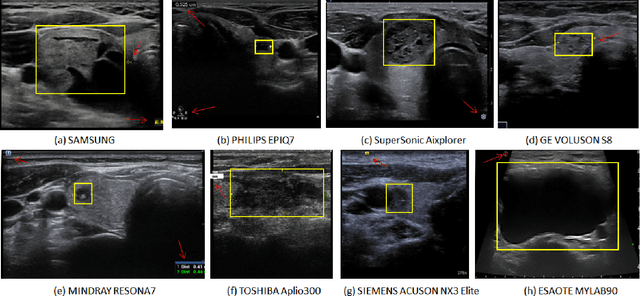

Abstract: Ultrasound (US) is the primary imaging technique for the diagnosis of thyroid cancer. However, accurately identifying nodule malignancy is a challenging task that can elude less-experienced clinicians. Many computer-aided diagnosis (CAD) systems have recently been proposed to assist this process. However, most of them do not explain the reasoning behind their classifications, which may jeopardize their credibility in practical use. To overcome this, we propose a novel deep learning framework, the multi-attribute attention network (MAA-Net), designed to mimic the clinical diagnosis process. The proposed model learns to predict nodule attributes and infers malignancy from these clinically relevant features. A multi-attention scheme generates customized attention that improves each attribute-prediction task as well as the malignancy diagnosis. Furthermore, MAA-Net uses nodule delineations as spatial prior guidance during training, rather than cropping the nodules with additional models or human intervention, so that contextual information is not lost. Validation experiments were performed on a large and challenging dataset of 4554 patients. Results show that the proposed method outperforms other state-of-the-art methods and provides interpretable predictions that may better suit clinical needs.
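
The attribute-then-malignancy design described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not MAA-Net itself: the dot-product attention pooling, the sigmoid attribute heads, the linear malignancy layer over attribute probabilities, and the `mask_guidance_loss` penalty are hypothetical stand-ins, since the abstract does not specify how the attention or the delineation prior is implemented.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(scores: List[float]) -> List[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def attend(features: List[Vector], query: Vector) -> Tuple[Vector, List[float]]:
    """Hypothetical task-specific attention pooling over spatial feature vectors."""
    weights = softmax([dot(query, f) for f in features])
    dim = len(features[0])
    pooled = [sum(w * f[d] for w, f in zip(weights, features)) for d in range(dim)]
    return pooled, weights


def predict(
    features: List[Vector],
    attribute_heads: Dict[str, Tuple[Vector, Vector]],
    malignancy_weights: Dict[str, float],
    malignancy_bias: float,
) -> Tuple[Dict[str, float], float]:
    """attribute_heads maps an attribute name to (attention query, classifier weights)."""
    attributes = {}
    for name, (query, weights) in attribute_heads.items():
        pooled, _ = attend(features, query)  # each attribute gets its own attention
        attributes[name] = sigmoid(dot(weights, pooled))
    # Malignancy is computed only from the attribute probabilities, so every
    # input to the final decision is a clinically readable quantity.
    score = malignancy_bias + sum(
        malignancy_weights[name] * prob for name, prob in attributes.items()
    )
    return attributes, sigmoid(score)


def mask_guidance_loss(attention: List[float], mask: List[int]) -> float:
    """Hypothetical training-time prior: attention mass outside the delineated nodule."""
    return sum(a for a, m in zip(attention, mask) if m == 0)
```

In this sketch attention is computed over the whole image rather than a crop, and the delineation enters only as an extra loss term during training, which mirrors the abstract's point about keeping context. Calling `predict` returns the per-attribute probabilities alongside the malignancy probability, so the final score can be traced back to the attributes that produced it.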

Personalized Diagnostic Tool for Thyroid Cancer Classification using Multi-view Ultrasound

Jul 01, 2022

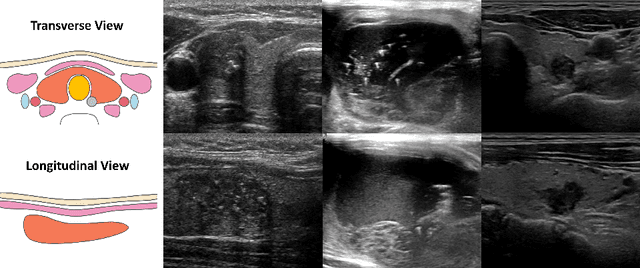

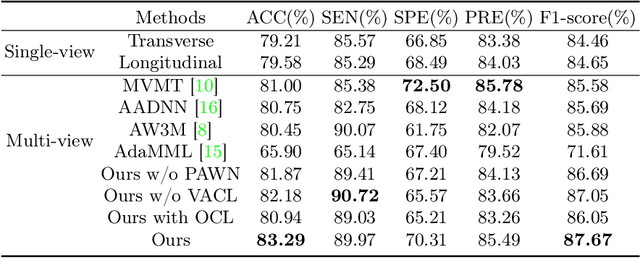

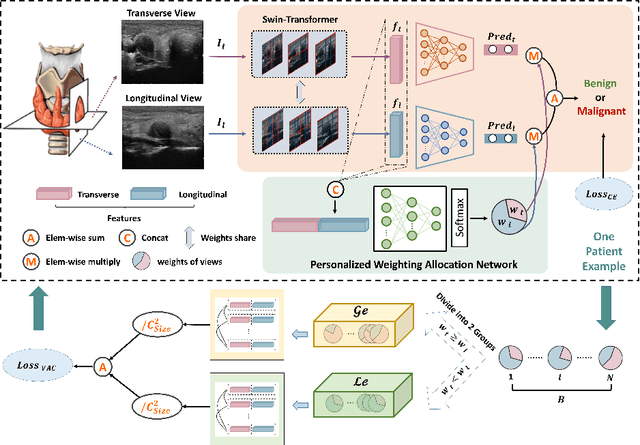

Abstract: Over the past decades, the incidence of thyroid cancer has been increasing globally. Accurate and early diagnosis allows timely treatment and helps to avoid over-diagnosis. Clinically, a nodule is commonly evaluated from both transverse and longitudinal views using thyroid ultrasound. However, the appearance of the thyroid gland and lesions can vary dramatically across individuals, and identifying key diagnostic information from both views requires specialized expertise. Furthermore, finding an optimal way to integrate multi-view information also relies on clinicians' experience and adds further difficulty to accurate diagnosis. To address these challenges, we propose a personalized diagnostic tool that customizes its decision-making process for each patient. It consists of a multi-view classification module for feature extraction and a personalized weighting allocation network that generates optimal weights for the different views. It is also equipped with a self-supervised view-aware contrastive loss to further improve the model's robustness across different patient groups. Experimental results show that the proposed framework makes better use of multi-view information and outperforms competing methods.
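
The abstract does not detail the weighting allocation network or the exact form of the view-aware contrastive loss, so the sketch below only illustrates the general idea under assumptions: each view's weight comes from a hypothetical linear scorer over the concatenated view features, normalized with a softmax, and the contrastive term is a generic InfoNCE loss in which the transverse and longitudinal embeddings of the same patient form the positive pair. Names such as `personalized_fusion` and `cross_view_info_nce` are illustrative, not the authors' code.

```python
import math
from typing import List, Sequence, Tuple

Vector = List[float]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(scores: List[float]) -> List[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def personalized_fusion(
    views: Sequence[Vector], scorers: Sequence[Vector]
) -> Tuple[Vector, List[float]]:
    """Weight each view using scores computed from this patient's own features.

    views: one feature vector per view (e.g. transverse, longitudinal), equal length.
    scorers: one hypothetical linear scorer per view, applied to all views concatenated.
    """
    joint = [x for view in views for x in view]
    weights = softmax([dot(scorer, joint) for scorer in scorers])
    dim = len(views[0])
    fused = [sum(w * view[d] for w, view in zip(weights, views)) for d in range(dim)]
    return fused, weights


def cosine(a: Vector, b: Vector) -> float:
    # Assumes non-zero embeddings.
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


def cross_view_info_nce(
    transverse: Sequence[Vector], longitudinal: Sequence[Vector], temperature: float = 0.1
) -> float:
    """Generic InfoNCE: a patient's two views are the positive pair; the other
    patients' longitudinal views in the batch act as negatives."""
    n = len(transverse)
    loss = 0.0
    for i in range(n):
        logits = [cosine(transverse[i], longitudinal[j]) / temperature for j in range(n)]
        m = max(logits)
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        loss += log_sum_exp - logits[i]  # cross-entropy with target index i
    return loss / n
```

Because the softmax weights are recomputed from each patient's own features, the fusion adapts per patient instead of applying one fixed average of the two views. The contrastive term needs no diagnostic labels, which is why a loss of this kind can be used in a self-supervised way.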