Xinwei Feng

Multimodal Table Understanding

Jun 12, 2024

Abstract: Although great progress has been made by previous table understanding methods, including recent approaches based on large language models (LLMs), they rely heavily on the premise that given tables must be converted into a certain text sequence (such as Markdown or HTML) to serve as model input. However, it is difficult to access such high-quality textual table representations in some real-world scenarios, and table images are much more accessible. Therefore, how to directly understand tables using intuitive visual information is a crucial and urgent challenge for developing more practical applications. In this paper, we propose a new problem, multimodal table understanding, where the model needs to generate correct responses to various table-related requests based on the given table image. To facilitate both model training and evaluation, we construct a large-scale dataset named MMTab, which covers a wide spectrum of table images, instructions and tasks. On this basis, we develop Table-LLaVA, a generalist tabular multimodal large language model (MLLM), which significantly outperforms recent open-source MLLM baselines on 23 benchmarks under held-in and held-out settings. The code and data are available at https://github.com/SpursGoZmy/Table-LLaVA

A Concept Knowledge-Driven Keywords Retrieval Framework for Sponsored Search

Feb 21, 2021

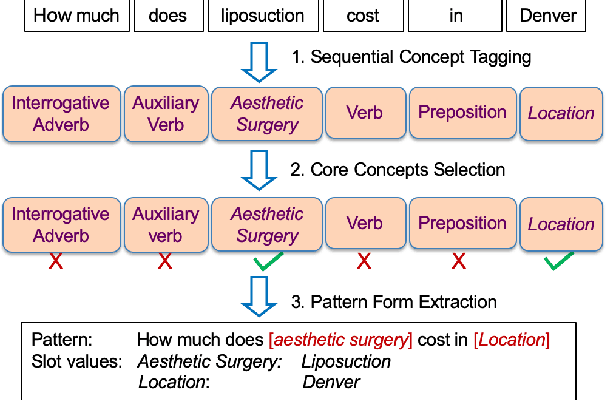

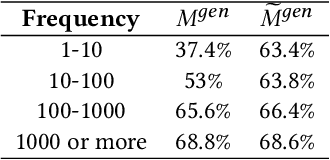

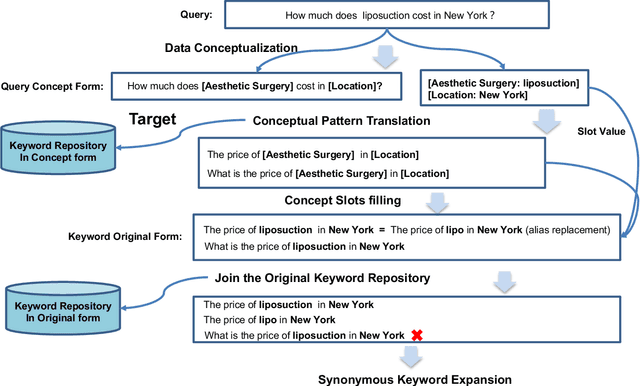

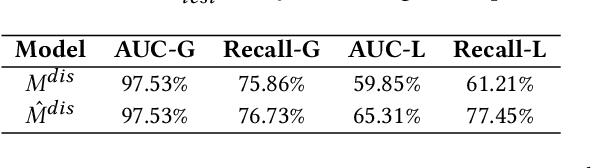

Abstract: In sponsored search, retrieving synonymous keywords for the exact match type is important for accurately targeted advertising. A data-driven, deep learning-based method has been proposed to tackle this problem. An apparent disadvantage of this method is its poor generalization performance on entity-level long-tail instances, even though they might share similar concept-level patterns with frequent instances. With the help of a large knowledge base, we find that most commercial synonymous query-keyword pairs can be abstracted into meaningful conceptual patterns through concept tagging. Based on this fact, we propose a novel knowledge-driven conceptual retrieval framework to mitigate this problem, which consists of three parts: data conceptualization, matching via conceptual patterns, and concept-augmented discrimination. Both offline and online experiments show that our method is very effective. This framework has been successfully applied to Baidu's sponsored search system, where it yields a significant improvement in revenue.
