Xingming Zhang

Uncertain Facial Expression Recognition via Multi-task Assisted Correction

Dec 14, 2022

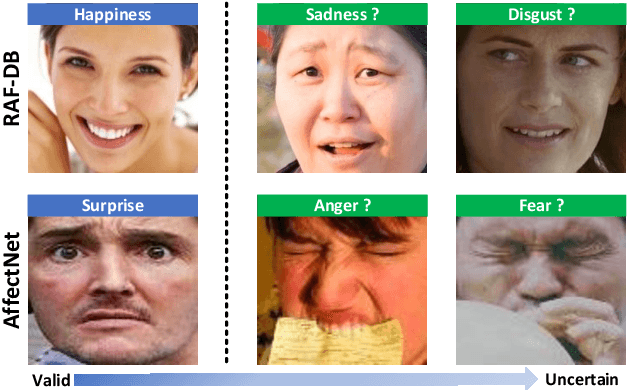

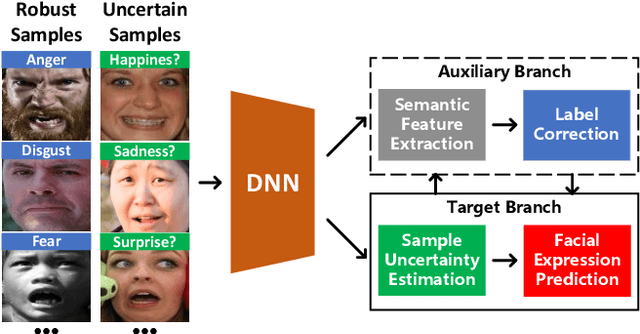

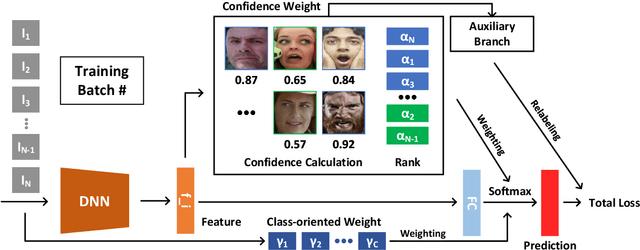

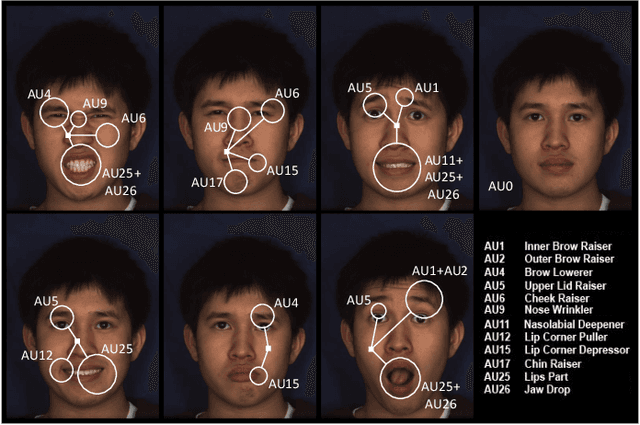

Abstract:Deep models for facial expression recognition achieve high performance by training on large-scale labeled data. However, publicly available datasets contain uncertain facial expressions caused by ambiguous annotations or confusing emotions, which could severely decline the robustness. Previous studies usually follow the bias elimination method in general tasks without considering the uncertainty problem from the perspective of different corresponding sources. In this paper, we propose a novel method of multi-task assisted correction in addressing uncertain facial expression recognition called MTAC. Specifically, a confidence estimation block and a weighted regularization module are applied to highlight solid samples and suppress uncertain samples in every batch. In addition, two auxiliary tasks, i.e., action unit detection and valence-arousal measurement, are introduced to learn semantic distributions from a data-driven AU graph and mitigate category imbalance based on latent dependencies between discrete and continuous emotions, respectively. Moreover, a re-labeling strategy guided by feature-level similarity constraint further generates new labels for identified uncertain samples to promote model learning. The proposed method can flexibly combine with existing frameworks in a fully-supervised or weakly-supervised manner. Experiments on RAF-DB, AffectNet, and AffWild2 datasets demonstrate that the MTAC obtains substantial improvements over baselines when facing synthetic and real uncertainties and outperforms the state-of-the-art methods.

Uncertain Label Correction via Auxiliary Action Unit Graphs for Facial Expression Recognition

Apr 23, 2022

Abstract:High-quality annotated images are significant to deep facial expression recognition (FER) methods. However, uncertain labels, mostly existing in large-scale public datasets, often mislead the training process. In this paper, we achieve uncertain label correction of facial expressions using auxiliary action unit (AU) graphs, called ULC-AG. Specifically, a weighted regularization module is introduced to highlight valid samples and suppress category imbalance in every batch. Based on the latent dependency between emotions and AUs, an auxiliary branch using graph convolutional layers is added to extract the semantic information from graph topologies. Finally, a re-labeling strategy corrects the ambiguous annotations by comparing their feature similarities with semantic templates. Experiments show that our ULC-AG achieves 89.31% and 61.57% accuracy on RAF-DB and AffectNet datasets, respectively, outperforming the baseline and state-of-the-art methods.

Graph-based Facial Affect Analysis: A Review of Methods, Applications and Challenges

Apr 12, 2021

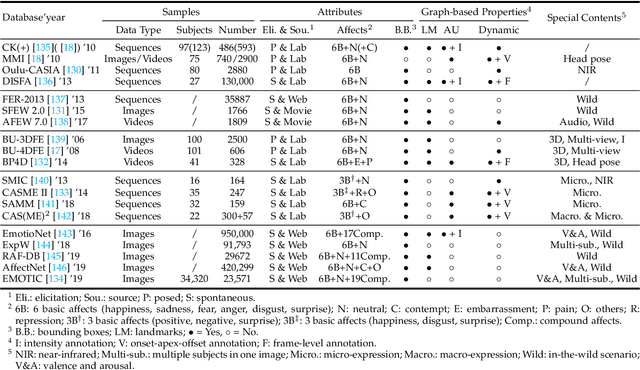

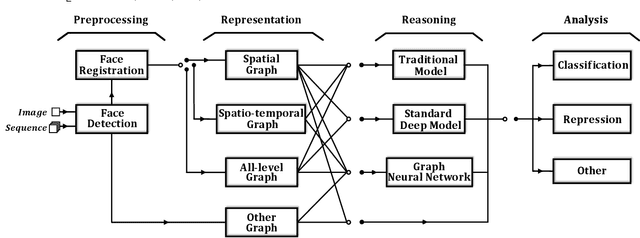

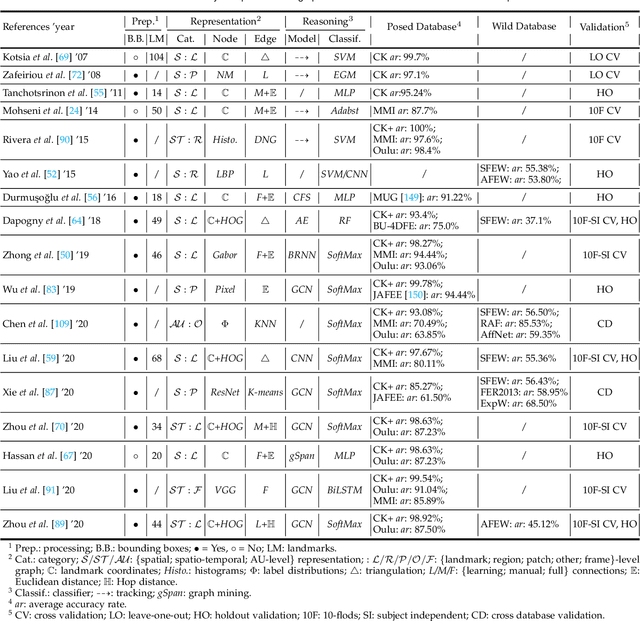

Abstract:Facial affect analysis (FAA) using visual signals is a key step in human-computer interactions. Early methods mainly focus on extracting appearance and geometry features associated with human affects, while ignore the latent semantic information among individual facial changes, leading to limited performance and generalization. Recent trends attempt to establish a graph-based representation to model these semantic relationships and develop learning frameworks to leverage it for different FAA tasks. In this paper, we provide a comprehensive review of graph-based FAA, including the evolution of algorithms and their applications. First, we introduce the background knowledge of facial affect analysis, especially on the role of graph. We then discuss approaches that are widely used for graph-based affective representation in literatures and show a trend towards graph construction. For the relational reasoning in graph-based FAA, we categorize the existing studies according to their usage of traditional methods or deep models, with a special emphasis on latest graph neural networks. Experimental comparisons of the state-of-the-art on standard FAA problems are also summarized. Finally, we discuss the challenges and potential directions. As far as we know, this is the first survey of graph-based FAA methods, and our findings can serve as a reference point for future research in this field.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge