Xiaxia Wang

GR-Agent: Adaptive Graph Reasoning Agent under Incomplete Knowledge

Dec 16, 2025

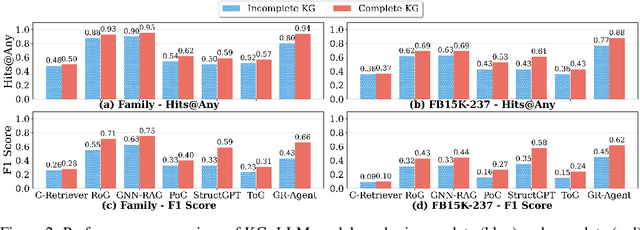

Abstract:Large language models (LLMs) achieve strong results on knowledge graph question answering (KGQA), but most benchmarks assume complete knowledge graphs (KGs) where direct supporting triples exist. This reduces evaluation to shallow retrieval and overlooks the reality of incomplete KGs, where many facts are missing and answers must be inferred from existing facts. We bridge this gap by proposing a methodology for constructing benchmarks under KG incompleteness, which removes direct supporting triples while ensuring that alternative reasoning paths required to infer the answer remain. Experiments on benchmarks constructed using our methodology show that existing methods suffer consistent performance degradation under incompleteness, highlighting their limited reasoning ability. To overcome this limitation, we present the Adaptive Graph Reasoning Agent (GR-Agent). It first constructs an interactive environment from the KG, and then formalizes KGQA as agent environment interaction within this environment. GR-Agent operates over an action space comprising graph reasoning tools and maintains a memory of potential supporting reasoning evidence, including relevant relations and reasoning paths. Extensive experiments demonstrate that GR-Agent outperforms non-training baselines and performs comparably to training-based methods under both complete and incomplete settings.

What Breaks Knowledge Graph based RAG? Empirical Insights into Reasoning under Incomplete Knowledge

Aug 11, 2025Abstract:Knowledge Graph-based Retrieval-Augmented Generation (KG-RAG) is an increasingly explored approach for combining the reasoning capabilities of large language models with the structured evidence of knowledge graphs. However, current evaluation practices fall short: existing benchmarks often include questions that can be directly answered using existing triples in KG, making it unclear whether models perform reasoning or simply retrieve answers directly. Moreover, inconsistent evaluation metrics and lenient answer matching criteria further obscure meaningful comparisons. In this work, we introduce a general method for constructing benchmarks, together with an evaluation protocol, to systematically assess KG-RAG methods under knowledge incompleteness. Our empirical results show that current KG-RAG methods have limited reasoning ability under missing knowledge, often rely on internal memorization, and exhibit varying degrees of generalization depending on their design.

TARGA: Targeted Synthetic Data Generation for Practical Reasoning over Structured Data

Dec 27, 2024

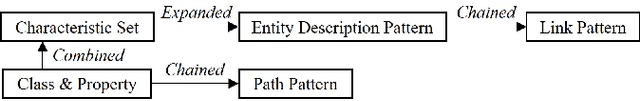

Abstract:Semantic parsing, which converts natural language questions into logic forms, plays a crucial role in reasoning within structured environments. However, existing methods encounter two significant challenges: reliance on extensive manually annotated datasets and limited generalization capability to unseen examples. To tackle these issues, we propose Targeted Synthetic Data Generation (TARGA), a practical framework that dynamically generates high-relevance synthetic data without manual annotation. Starting from the pertinent entities and relations of a given question, we probe for the potential relevant queries through layer-wise expansion and cross-layer combination. Then we generate corresponding natural language questions for these constructed queries to jointly serve as the synthetic demonstrations for in-context learning. Experiments on multiple knowledge base question answering (KBQA) datasets demonstrate that TARGA, using only a 7B-parameter model, substantially outperforms existing non-fine-tuned methods that utilize close-sourced model, achieving notable improvements in F1 scores on GrailQA(+7.7) and KBQA-Agent(+12.2). Furthermore, TARGA also exhibits superior sample efficiency, robustness, and generalization capabilities under non-I.I.D. settings.

Noise Adaption Network for Morse Code Image Classification

Oct 24, 2024Abstract:The escalating significance of information security has underscored the per-vasive role of encryption technology in safeguarding communication con-tent. Morse code, a well-established and effective encryption method, has found widespread application in telegraph communication and various do-mains. However, the transmission of Morse code images faces challenges due to diverse noises and distortions, thereby hindering comprehensive clas-sification outcomes. Existing methodologies predominantly concentrate on categorizing Morse code images affected by a single type of noise, neglecting the multitude of scenarios that noise pollution can generate. To overcome this limitation, we propose a novel two-stage approach, termed the Noise Adaptation Network (NANet), for Morse code image classification. Our method involves exclusive training on pristine images while adapting to noisy ones through the extraction of critical information unaffected by noise. In the initial stage, we introduce a U-shaped network structure designed to learn representative features and denoise images. Subsequently, the second stage employs a deep convolutional neural network for classification. By leveraging the denoising module from the first stage, our approach achieves enhanced accuracy and robustness in the subsequent classification phase. We conducted an evaluation of our approach on a diverse dataset, encom-passing Gaussian, salt-and-pepper, and uniform noise variations. The results convincingly demonstrate the superiority of our methodology over existing approaches. The datasets are available on https://github.com/apple1986/MorseCodeImageClassify

Development of Skip Connection in Deep Neural Networks for Computer Vision and Medical Image Analysis: A Survey

May 02, 2024

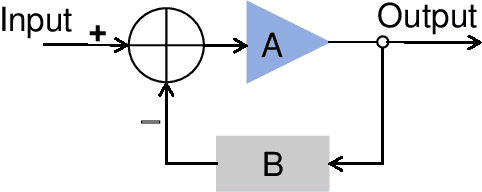

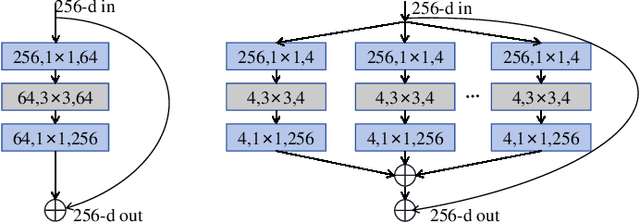

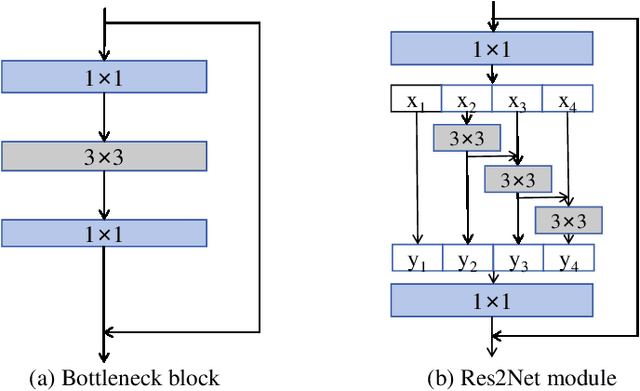

Abstract:Deep learning has made significant progress in computer vision, specifically in image classification, object detection, and semantic segmentation. The skip connection has played an essential role in the architecture of deep neural networks,enabling easier optimization through residual learning during the training stage and improving accuracy during testing. Many neural networks have inherited the idea of residual learning with skip connections for various tasks, and it has been the standard choice for designing neural networks. This survey provides a comprehensive summary and outlook on the development of skip connections in deep neural networks. The short history of skip connections is outlined, and the development of residual learning in deep neural networks is surveyed. The effectiveness of skip connections in the training and testing stages is summarized, and future directions for using skip connections in residual learning are discussed. Finally, we summarize seminal papers, source code, models, and datasets that utilize skip connections in computer vision, including image classification, object detection, semantic segmentation, and image reconstruction. We hope this survey could inspire peer researchers in the community to develop further skip connections in various forms and tasks and the theory of residual learning in deep neural networks. The project page can be found at https://github.com/apple1986/Residual_Learning_For_Images

A Survey on Extractive Knowledge Graph Summarization: Applications, Approaches, Evaluation, and Future Directions

Feb 19, 2024

Abstract:With the continuous growth of large Knowledge Graphs (KGs), extractive KG summarization becomes a trending task. Aiming at distilling a compact subgraph with condensed information, it facilitates various downstream KG-based tasks. In this survey paper, we are among the first to provide a systematic overview of its applications and define a taxonomy for existing methods from its interdisciplinary studies. Future directions are also laid out based on our extensive and comparative review.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge