Xabier De Carlos

Diagnostic Spatio-temporal Transformer with Faithful Encoding

May 26, 2023Abstract:This paper addresses the task of anomaly diagnosis when the underlying data generation process has a complex spatio-temporal (ST) dependency. The key technical challenge is to extract actionable insights from the dependency tensor characterizing high-order interactions among temporal and spatial indices. We formalize the problem as supervised dependency discovery, where the ST dependency is learned as a side product of multivariate time-series classification. We show that temporal positional encoding used in existing ST transformer works has a serious limitation in capturing higher frequencies (short time scales). We propose a new positional encoding with a theoretical guarantee, based on discrete Fourier transform. We also propose a new ST dependency discovery framework, which can provide readily consumable diagnostic information in both spatial and temporal directions. Finally, we demonstrate the utility of the proposed model, DFStrans (Diagnostic Fourier-based Spatio-temporal Transformer), in a real industrial application of building elevator control.

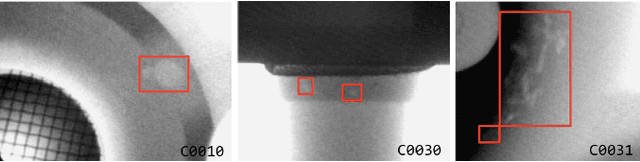

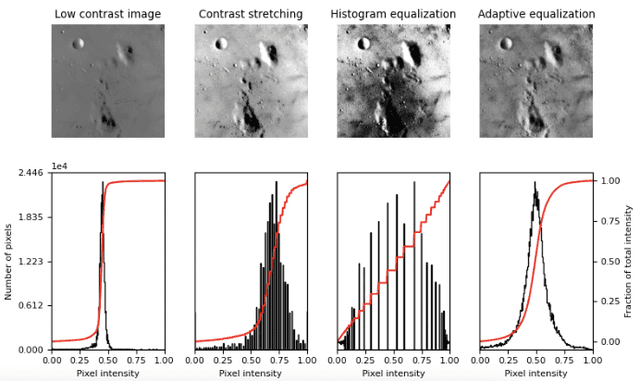

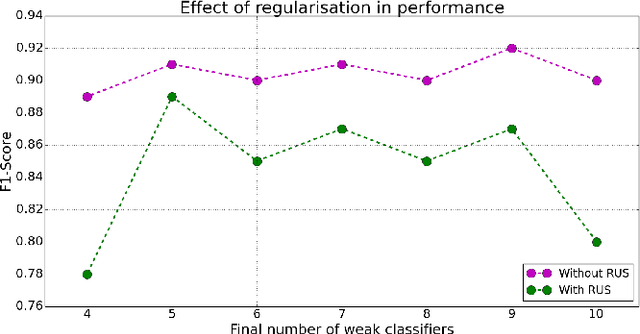

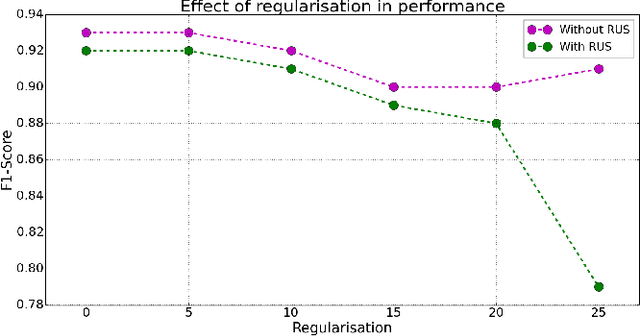

Quantum artificial vision for defect detection in manufacturing

Aug 09, 2022

Abstract:In this paper we consider several algorithms for quantum computer vision using Noisy Intermediate-Scale Quantum (NISQ) devices, and benchmark them for a real problem against their classical counterparts. Specifically, we consider two approaches: a quantum Support Vector Machine (QSVM) on a universal gate-based quantum computer, and QBoost on a quantum annealer. The quantum vision systems are benchmarked for an unbalanced dataset of images where the aim is to detect defects in manufactured car pieces. We see that the quantum algorithms outperform their classical counterparts in several ways, with QBoost allowing for larger problems to be analyzed with present-day quantum annealers. Data preprocessing, including dimensionality reduction and contrast enhancement, is also discussed, as well as hyperparameter tuning in QBoost. To the best of our knowledge, this is the first implementation of quantum computer vision systems for a problem of industrial relevance in a manufacturing production line.

DA-DGCEx: Ensuring Validity of Deep Guided Counterfactual Explanations With Distribution-Aware Autoencoder Loss

Apr 22, 2021

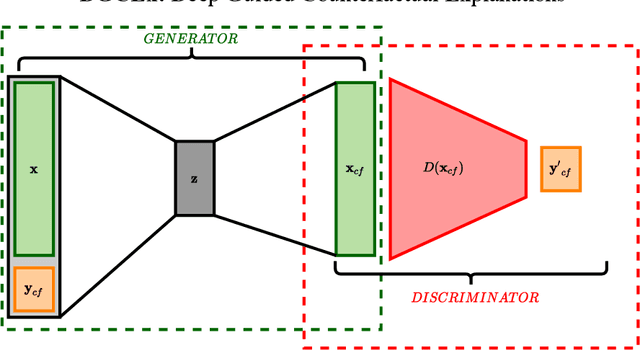

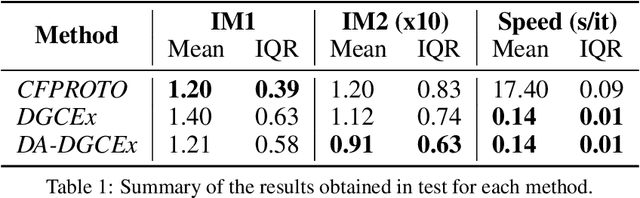

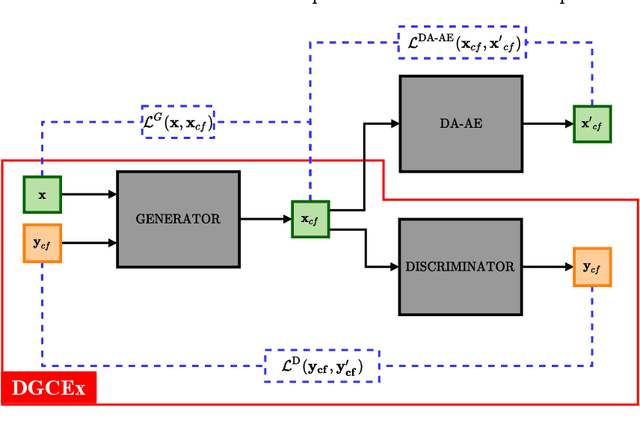

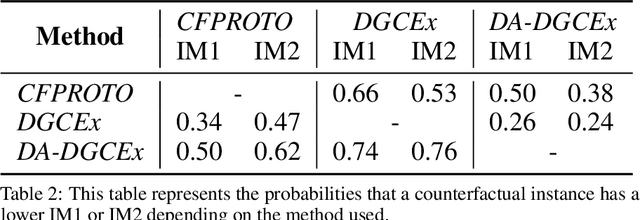

Abstract:Deep Learning has become a very valuable tool in different fields, and no one doubts the learning capacity of these models. Nevertheless, since Deep Learning models are often seen as black boxes due to their lack of interpretability, there is a general mistrust in their decision-making process. To find a balance between effectiveness and interpretability, Explainable Artificial Intelligence (XAI) is gaining popularity in recent years, and some of the methods within this area are used to generate counterfactual explanations. The process of generating these explanations generally consists of solving an optimization problem for each input to be explained, which is unfeasible when real-time feedback is needed. To speed up this process, some methods have made use of autoencoders to generate instant counterfactual explanations. Recently, a method called Deep Guided Counterfactual Explanations (DGCEx) has been proposed, which trains an autoencoder attached to a classification model, in order to generate straightforward counterfactual explanations. However, this method does not ensure that the generated counterfactual instances are close to the data manifold, so unrealistic counterfactual instances may be generated. To overcome this issue, this paper presents Distribution Aware Deep Guided Counterfactual Explanations (DA-DGCEx), which adds a term to the DGCEx cost function that penalizes out of distribution counterfactual instances.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge