William M. Severa

Neuromorphic Co-Design as a Game

Dec 11, 2023Abstract:Co-design is a prominent topic presently in computing, speaking to the mutual benefit of coordinating design choices of several layers in the technology stack. For example, this may be designing algorithms which can most efficiently take advantage of the acceleration properties of a given architecture, while simultaneously designing the hardware to support the structural needs of a class of computation. The implications of these design decisions are influential enough to be deemed a lottery, enabling an idea to win out over others irrespective of the individual merits. Coordination is a well studied topic in the mathematics of game theory, where in many cases without a coordination mechanism the outcome is sub-optimal. Here we consider what insights game theoretic analysis can offer for computer architecture co-design. In particular, we consider the interplay between algorithm and architecture advances in the field of neuromorphic computing. Analyzing developments of spiking neural network algorithms and neuromorphic hardware as a co-design game we use the Stag Hunt model to illustrate challenges for spiking algorithms or architectures to advance the field independently and advocate for a strategic pursuit to advance neuromorphic computing.

Spiking Neural Streaming Binary Arithmetic

Mar 23, 2022

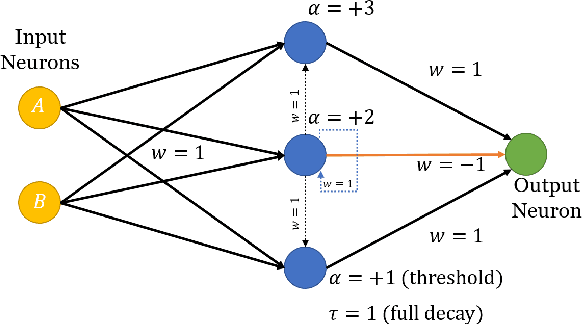

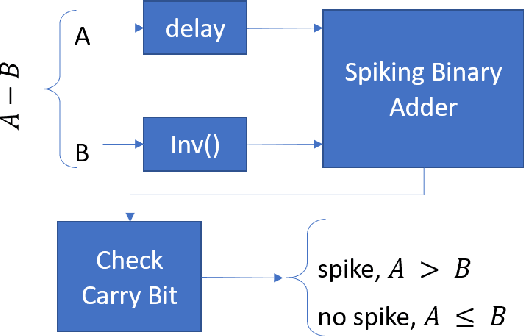

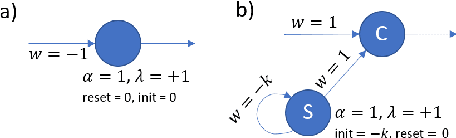

Abstract:Boolean functions and binary arithmetic operations are central to standard computing paradigms. Accordingly, many advances in computing have focused upon how to make these operations more efficient as well as exploring what they can compute. To best leverage the advantages of novel computing paradigms it is important to consider what unique computing approaches they offer. However, for any special-purpose co-processor, Boolean functions and binary arithmetic operations are useful for, among other things, avoiding unnecessary I/O on-and-off the co-processor by pre- and post-processing data on-device. This is especially true for spiking neuromorphic architectures where these basic operations are not fundamental low-level operations. Instead, these functions require specific implementation. Here we discuss the implications of an advantageous streaming binary encoding method as well as a handful of circuits designed to exactly compute elementary Boolean and binary operations.

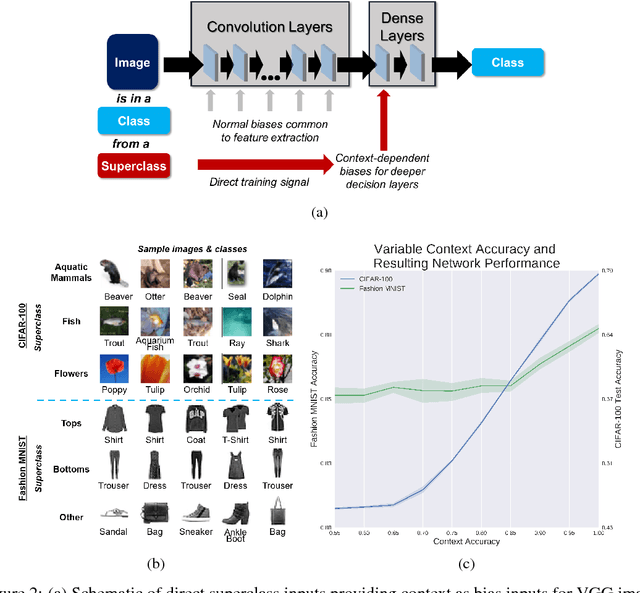

Context-modulation of hippocampal dynamics and deep convolutional networks

Nov 27, 2017

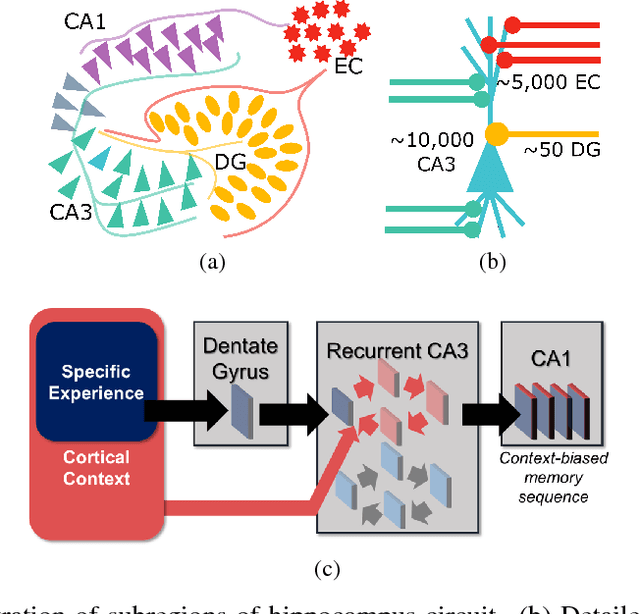

Abstract:Complex architectures of biological neural circuits, such as parallel processing pathways, has been behaviorally implicated in many cognitive studies. However, the theoretical consequences of circuit complexity on neural computation have only been explored in limited cases. Here, we introduce a mechanism by which direct and indirect pathways from cortex to the CA3 region of the hippocampus can balance both contextual gating of memory formation and driving network activity. We implement this concept in a deep artificial neural network by enabling a context-sensitive bias. The motivation for this is to improve performance of a size-constrained network. Using direct knowledge of the superclass information in the CIFAR-100 and Fashion-MNIST datasets, we show a dramatic increase in performance without an increase in network size.

Data-driven Feature Sampling for Deep Hyperspectral Classification and Segmentation

Oct 26, 2017

Abstract:The high dimensionality of hyperspectral imaging forces unique challenges in scope, size and processing requirements. Motivated by the potential for an in-the-field cell sorting detector, we examine a $\textit{Synechocystis sp.}$ PCC 6803 dataset wherein cells are grown alternatively in nitrogen rich or deplete cultures. We use deep learning techniques to both successfully classify cells and generate a mask segmenting the cells/condition from the background. Further, we use the classification accuracy to guide a data-driven, iterative feature selection method, allowing the design neural networks requiring 90% fewer input features with little accuracy degradation.

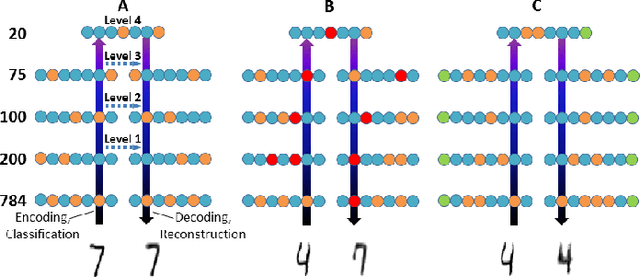

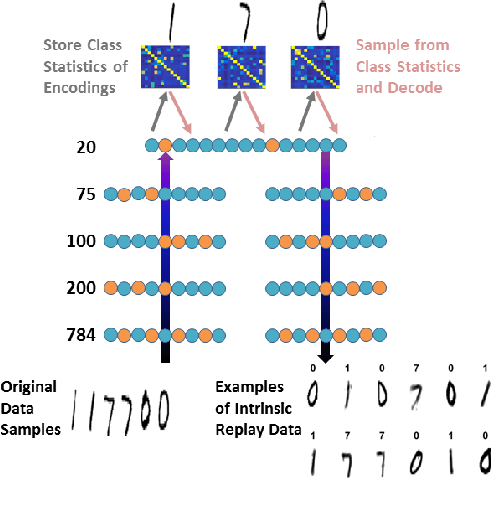

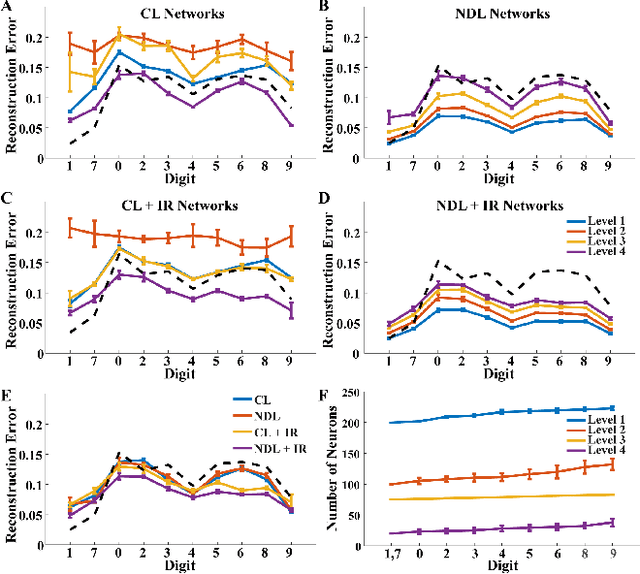

Neurogenesis Deep Learning

Mar 28, 2017

Abstract:Neural machine learning methods, such as deep neural networks (DNN), have achieved remarkable success in a number of complex data processing tasks. These methods have arguably had their strongest impact on tasks such as image and audio processing - data processing domains in which humans have long held clear advantages over conventional algorithms. In contrast to biological neural systems, which are capable of learning continuously, deep artificial networks have a limited ability for incorporating new information in an already trained network. As a result, methods for continuous learning are potentially highly impactful in enabling the application of deep networks to dynamic data sets. Here, inspired by the process of adult neurogenesis in the hippocampus, we explore the potential for adding new neurons to deep layers of artificial neural networks in order to facilitate their acquisition of novel information while preserving previously trained data representations. Our results on the MNIST handwritten digit dataset and the NIST SD 19 dataset, which includes lower and upper case letters and digits, demonstrate that neurogenesis is well suited for addressing the stability-plasticity dilemma that has long challenged adaptive machine learning algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge