Will Shand

Where have you been? A Study of Privacy Risk for Point-of-Interest Recommendation

Oct 28, 2023

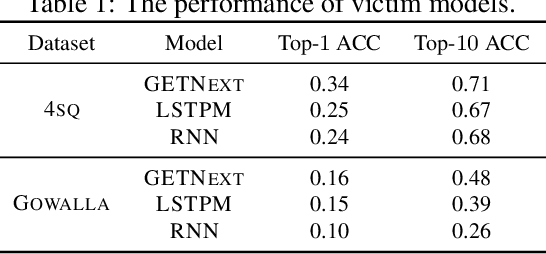

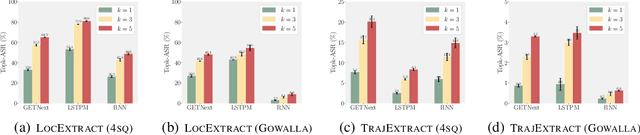

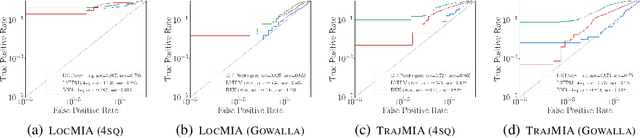

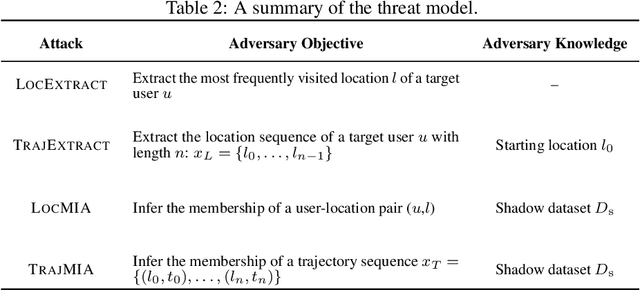

Abstract:As location-based services (LBS) have grown in popularity, the collection of human mobility data has become increasingly extensive to build machine learning (ML) models offering enhanced convenience to LBS users. However, the convenience comes with the risk of privacy leakage since this type of data might contain sensitive information related to user identities, such as home/work locations. Prior work focuses on protecting mobility data privacy during transmission or prior to release, lacking the privacy risk evaluation of mobility data-based ML models. To better understand and quantify the privacy leakage in mobility data-based ML models, we design a privacy attack suite containing data extraction and membership inference attacks tailored for point-of-interest (POI) recommendation models, one of the most widely used mobility data-based ML models. These attacks in our attack suite assume different adversary knowledge and aim to extract different types of sensitive information from mobility data, providing a holistic privacy risk assessment for POI recommendation models. Our experimental evaluation using two real-world mobility datasets demonstrates that current POI recommendation models are vulnerable to our attacks. We also present unique findings to understand what types of mobility data are more susceptible to privacy attacks. Finally, we evaluate defenses against these attacks and highlight future directions and challenges.

Locality-sensitive hashing in function spaces

Feb 10, 2020

Abstract:We discuss the problem of performing similarity search over function spaces. To perform search over such spaces in a reasonable amount of time, we use {\it locality-sensitive hashing} (LSH). We present two methods that allow LSH functions on $\mathbb{R}^N$ to be extended to $L^p$ spaces: one using function approximation in an orthonormal basis, and another using (quasi-)Monte Carlo-style techniques. We use the presented hashing schemes to construct an LSH family for Wasserstein distance over one-dimensional, continuous probability distributions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge