Will Rowan

NeuRaLaTeX: A machine learning library written in pure LaTeX

Mar 31, 2025Abstract:In this paper, we introduce NeuRaLaTeX, which we believe to be the first deep learning library written entirely in LaTeX. As part of your LaTeX document you can specify the architecture of a neural network and its loss functions, define how to generate or load training data, and specify training hyperparameters and experiments. When the document is compiled, the LaTeX compiler will generate or load training data, train the network, run experiments, and generate figures. This paper generates a random 100 point spiral dataset, trains a two layer MLP on it, evaluates on a different random spiral dataset, produces plots and tables of results. The paper took 48 hours to compile and the entire source code for NeuRaLaTeX is contained within the source code of the paper. We propose two new metrics: the Written In Latex (WIL) metric measures the proportion of a machine learning library that is written in pure LaTeX, while the Source Code Of Method in Source Code of Paper (SCOMISCOP) metric measures the proportion of a paper's implementation that is contained within the paper source. We are state-of-the-art for both metrics, outperforming the ResNet and Transformer papers, as well as the PyTorch and Tensorflow libraries. Source code, documentation, videos, crypto scams and an invitation to invest in the commercialisation of NeuRaLaTeX are available at https://www.neuralatex.com

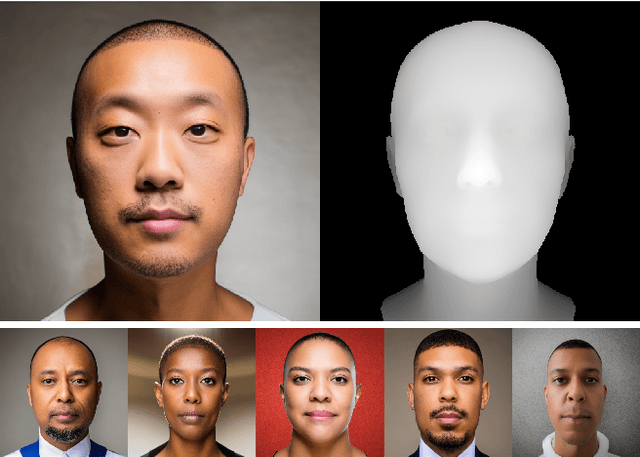

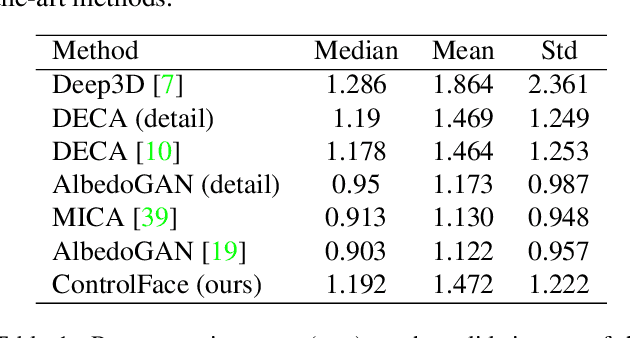

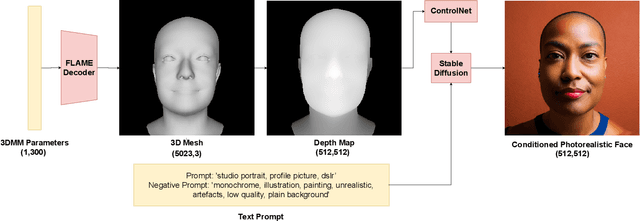

Fake It Without Making It: Conditioned Face Generation for Accurate 3D Face Shape Estimation

Jul 25, 2023

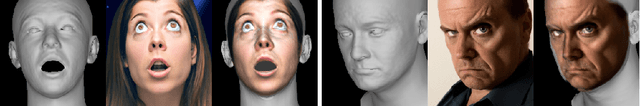

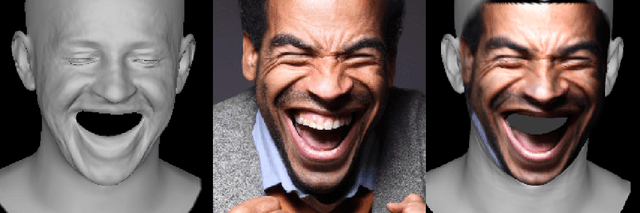

Abstract:Accurate 3D face shape estimation is an enabling technology with applications in healthcare, security, and creative industries, yet current state-of-the-art methods either rely on self-supervised training with 2D image data or supervised training with very limited 3D data. To bridge this gap, we present a novel approach which uses a conditioned stable diffusion model for face image generation, leveraging the abundance of 2D facial information to inform 3D space. By conditioning stable diffusion on depth maps sampled from a 3D Morphable Model (3DMM) of the human face, we generate diverse and shape-consistent images, forming the basis of SynthFace. We introduce this large-scale synthesised dataset of 250K photorealistic images and corresponding 3DMM parameters. We further propose ControlFace, a deep neural network, trained on SynthFace, which achieves competitive performance on the NoW benchmark, without requiring 3D supervision or manual 3D asset creation.

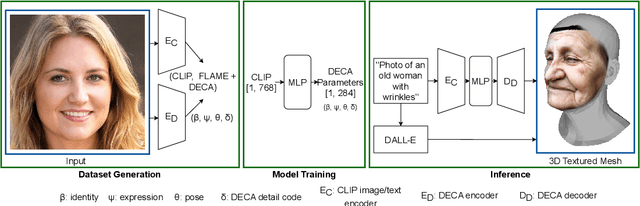

Text2Face: A Multi-Modal 3D Face Model

Mar 08, 2023

Abstract:We present the first 3D morphable modelling approach, whereby 3D face shape can be directly and completely defined using a textual prompt. Building on work in multi-modal learning, we extend the FLAME head model to a common image-and-text latent space. This allows for direct 3D Morphable Model (3DMM) parameter generation and therefore shape manipulation from textual descriptions. Our method, Text2Face, has many applications; for example: generating police photofits where the input is already in natural language. It further enables multi-modal 3DMM image fitting to sketches and sculptures, as well as images.

The Effectiveness of Temporal Dependency in Deepfake Video Detection

May 13, 2022

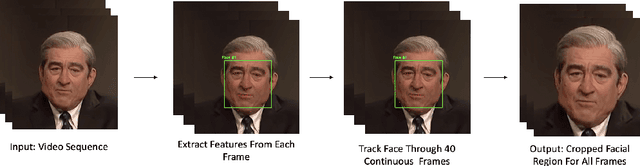

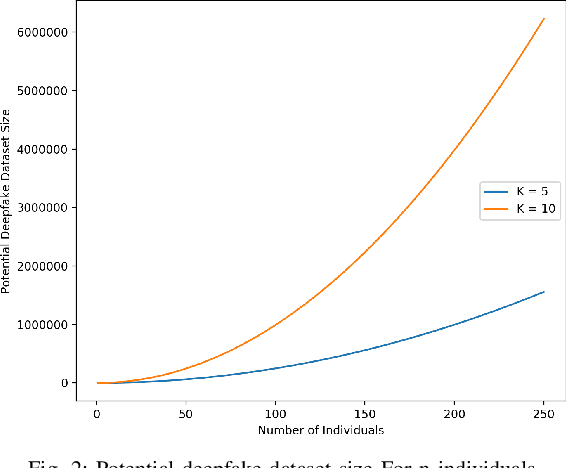

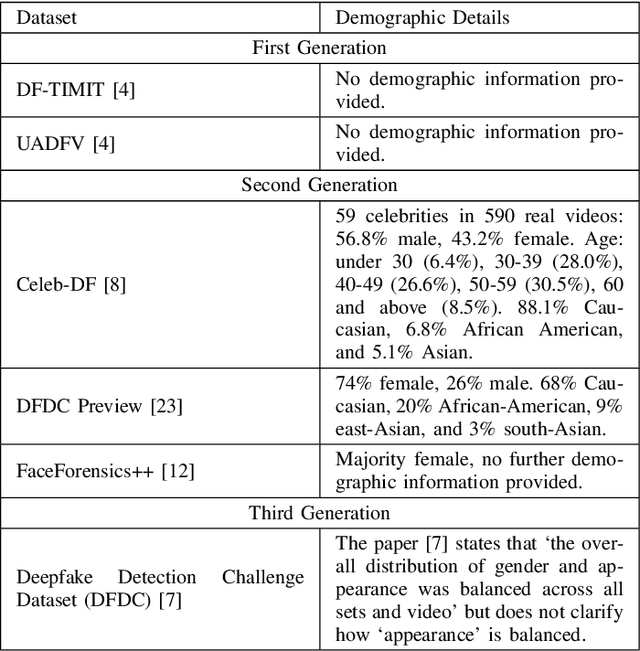

Abstract:Deepfakes are a form of synthetic image generation used to generate fake videos of individuals for malicious purposes. The resulting videos may be used to spread misinformation, reduce trust in media, or as a form of blackmail. These threats necessitate automated methods of deepfake video detection. This paper investigates whether temporal information can improve the deepfake detection performance of deep learning models. To investigate this, we propose a framework that classifies new and existing approaches by their defining characteristics. These are the types of feature extraction: automatic or manual, and the temporal relationship between frames: dependent or independent. We apply this framework to investigate the effect of temporal dependency on a model's deepfake detection performance. We find that temporal dependency produces a statistically significant (p < 0.05) increase in performance in classifying real images for the model using automatic feature selection, demonstrating that spatio-temporal information can increase the performance of deepfake video detection models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge