Wenjing Gao

Multi-Stage Graph Learning for fMRI Analysis to Diagnose Neuro-Developmental Disorders

Oct 07, 2024

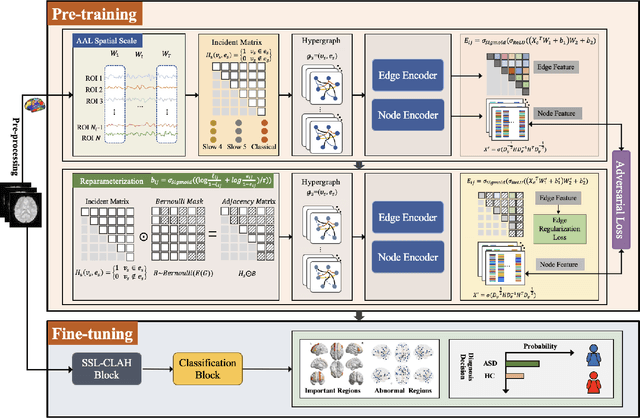

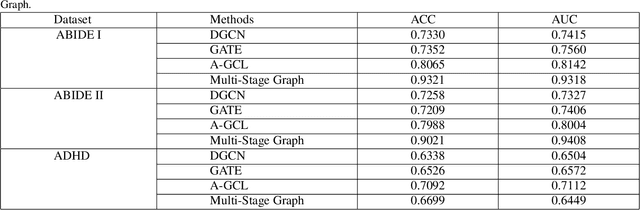

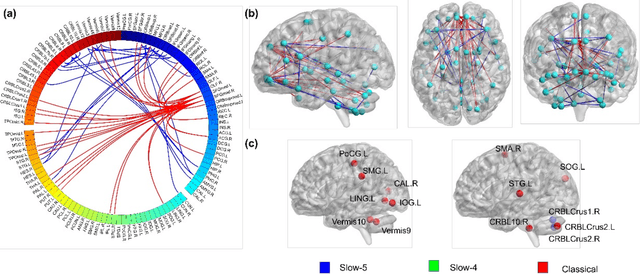

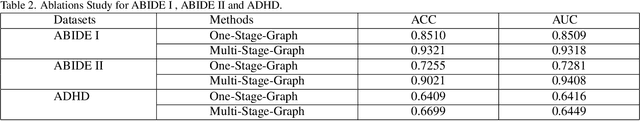

Abstract: Insufficient supervision limits the performance of deep supervised models for brain disease diagnosis. It is important to develop a learning framework that can capture more information from limited data and insufficient supervision. To address these issues to some extent, we propose a multi-stage graph learning framework that incorporates 1) a pretraining stage: self-supervised graph learning on fMRI data with insufficient supervision; and 2) a fine-tuning stage: supervised graph learning for brain disorder diagnosis. Experimental results on three datasets, Autism Brain Imaging Data Exchange (ABIDE) I, ABIDE II, and ADHD, with the AAL1 atlas, demonstrate the superiority and generalizability of the proposed framework compared with state-of-the-art models (ranging from 0.7330 to 0.9321, 0.7209 to 0.9021, and 0.6338 to 0.6699).

Unsupervised Clustering Active Learning for Person Re-identification

Dec 26, 2021

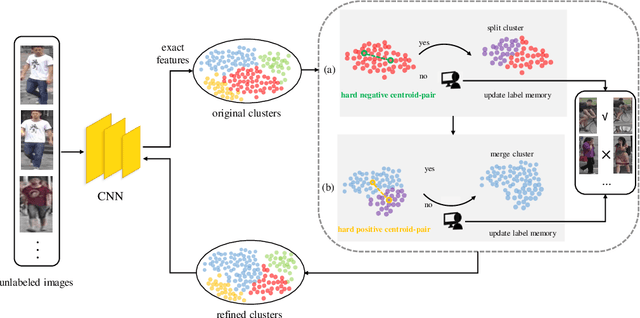

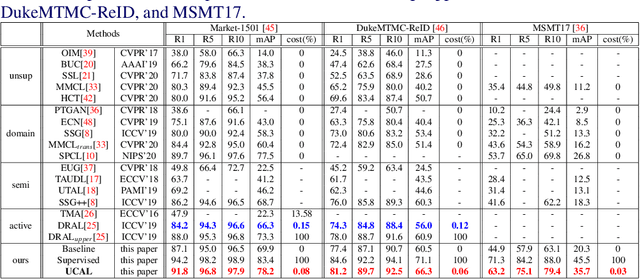

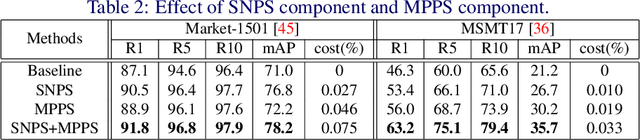

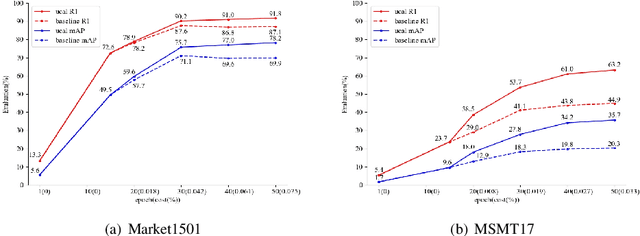

Abstract: Supervised person re-identification (re-id) approaches require a large amount of manually labeled pairwise data, which is not feasible in most real-world re-id deployment scenarios. On the other hand, unsupervised re-id methods rely on unlabeled data to train models but perform poorly compared with supervised re-id methods. In this work, we aim to combine unsupervised re-id learning with a small number of human annotations to achieve competitive performance. Towards this goal, we present an Unsupervised Clustering Active Learning (UCAL) re-id deep learning approach. It is capable of incrementally discovering representative centroid pairs and requesting human annotation for them. These few labeled representative pairs can improve the unsupervised representation learning model alongside the large amount of remaining unlabeled data. More importantly, because only the representative centroid pairs are selected for annotation, UCAL requires very little human effort. Extensive experiments demonstrate the superiority of the proposed model over state-of-the-art active learning methods on three re-id benchmark datasets.
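The idea of querying a human about a few centroid pairs can be illustrated with a minimal sketch. The abstract does not state how pairs are chosen, so the rule here is an assumption: the closest cluster centroids are treated as the most ambiguous and are sent for annotation first. The function names, the `budget` parameter, and the toy 2-D features are all hypothetical and are not taken from the paper.

```python
import math
from itertools import combinations

def cluster_centroids(features, labels):
    """Mean feature vector of each cluster, keyed by cluster label."""
    sums, counts = {}, {}
    for feat, lab in zip(features, labels):
        acc = sums.setdefault(lab, [0.0] * len(feat))
        for i, value in enumerate(feat):
            acc[i] += value
        counts[lab] = counts.get(lab, 0) + 1
    return {lab: [v / counts[lab] for v in acc] for lab, acc in sums.items()}

def closest_centroid_pairs(centroids, budget):
    """Return the `budget` centroid pairs with the smallest Euclidean distance.

    Assumption: nearby centroids are the pairs a human should check
    (same identity or not). This is an illustrative rule only.
    """
    pairs = sorted(
        combinations(sorted(centroids), 2),
        key=lambda p: math.dist(centroids[p[0]], centroids[p[1]]),
    )
    return pairs[:budget]

# Hypothetical toy features with cluster labels from an unsupervised step.
features = [(0, 0), (0, 2), (1, 1), (1, 3), (10, 10), (12, 10)]
labels = [0, 0, 1, 1, 2, 2]

centroids = cluster_centroids(features, labels)
to_annotate = closest_centroid_pairs(centroids, budget=1)
print(to_annotate)
```

In this toy setup clusters 0 and 1 sit close together, so that pair is the one queried. Annotating centroid pairs rather than individual image pairs is what keeps the labeling cost low in this kind of scheme.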

Shape Recognition by Bag of Skeleton-associated Contour Parts

May 20, 2016

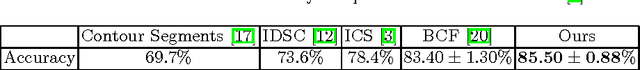

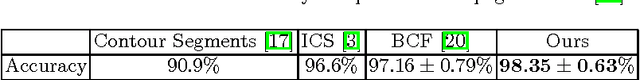

Abstract: Contour and skeleton are two complementary representations for shape recognition. However, combining them in a principled way is nontrivial, as they are generally abstracted by different structures (closed string vs. graph, respectively). This paper addresses the shape recognition problem by combining contour and skeleton according to the correspondence between them. The correspondence provides a straightforward way to associate skeletal information with a shape contour. More specifically, we propose a new shape descriptor, named Skeleton-associated Shape Context (SSC), which captures the features of a contour fragment associated with skeletal information. Benefiting from this association, the proposed shape descriptor provides complementary geometric information from both the contour and skeleton parts, including the spatial distribution and the thickness change along the shape part. To form a meaningful feature vector for an overall shape, the Bag of Features framework is applied to the SSC descriptors extracted from it. Finally, the shape feature vector is fed into a linear SVM classifier to recognize the shape. The encouraging experimental results demonstrate that the proposed way of combining contour and skeleton is effective for shape recognition, achieving state-of-the-art performance on several standard shape benchmarks.
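The Bag of Features step mentioned in the abstract can be sketched generically: each local descriptor is assigned to its nearest codeword, and the shape is represented by the normalized histogram of assignments. This is a minimal illustration under stated assumptions, not the paper's implementation. The descriptors are toy 2-D vectors rather than SSC descriptors, the codebook is fixed by hand (in practice it would be learned, for example by clustering training descriptors), and the function names are hypothetical.

```python
import math

def nearest_codeword(descriptor, codebook):
    """Index of the codeword closest to the descriptor (Euclidean distance)."""
    return min(range(len(codebook)),
               key=lambda k: math.dist(descriptor, codebook[k]))

def bag_of_features(descriptors, codebook):
    """Encode a set of local descriptors as an L1-normalized codeword histogram."""
    counts = [0] * len(codebook)
    for d in descriptors:
        counts[nearest_codeword(d, codebook)] += 1
    total = sum(counts)
    return [c / total for c in counts] if total else [0.0] * len(codebook)

# Hypothetical toy codebook and descriptors for one shape.
codebook = [(0.0, 0.0), (1.0, 1.0), (4.0, 0.0)]
shape_descriptors = [(0.1, -0.1), (0.9, 1.2), (1.1, 0.8), (3.8, 0.3)]

histogram = bag_of_features(shape_descriptors, codebook)
print(histogram)  # [0.25, 0.5, 0.25]
```

The resulting fixed-length histogram is the kind of vector that can then be passed to a linear classifier such as a linear SVM, regardless of how many descriptors each shape produced.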
