Wassim Jabbour

Dynamic layer selection in decoder-only transformers

Oct 26, 2024

Abstract: The vast size of Large Language Models (LLMs) has prompted a search to optimize inference. One effective approach is dynamic inference, which adapts the architecture to the sample-at-hand to reduce the overall computational cost. We empirically examine two common dynamic inference methods for natural language generation (NLG): layer skipping and early exiting. We find that a pre-trained decoder-only model is significantly more robust to layer removal via layer skipping, as opposed to early exit. We demonstrate the difficulty of using hidden state information to adapt computation on a per-token basis for layer skipping. Finally, we show that dynamic computation allocation on a per-sequence basis holds promise for significant efficiency gains by constructing an oracle controller. Remarkably, we find that there exists an allocation which achieves equal performance to the full model using only 23.3% of its layers on average.
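
The difference between the two dynamic inference methods named in the abstract can be illustrated with a toy sketch. This is not the paper's implementation: the scalar "hidden state", the additive toy layers, and the function names `run_early_exit`, `run_layer_skipping`, and `fraction_used` are all assumptions made for illustration. Early exit stops after a prefix of the layer stack, while layer skipping treats any subset of layers as the identity.

```python
from typing import Callable, Sequence

# Hypothetical stand-in for a transformer block: maps a hidden state to a
# new hidden state. A real block operates on tensors, not a single float.
Layer = Callable[[float], float]


def run_early_exit(layers: Sequence[Layer], x: float, exit_after: int) -> float:
    """Apply only the first `exit_after` layers, then stop."""
    for layer in layers[:exit_after]:
        x = layer(x)
    return x


def run_layer_skipping(layers: Sequence[Layer], x: float, keep: Sequence[bool]) -> float:
    """Apply layer i only when keep[i] is True; skipped layers act as identity."""
    if len(keep) != len(layers):
        raise ValueError("keep mask must have one entry per layer")
    for layer, use in zip(layers, keep):
        if use:
            x = layer(x)
    return x


def fraction_used(keep: Sequence[bool]) -> float:
    """Share of layers that are executed under a given mask."""
    return sum(keep) / len(keep)


# Toy residual-style layers: each one adds a fixed update to the state.
toy_layers = [lambda x, d=d: x + d for d in (1.0, 2.0, 4.0, 8.0)]

early = run_early_exit(toy_layers, 0.0, exit_after=2)                     # layers 0 and 1
skipped = run_layer_skipping(toy_layers, 0.0, [True, False, False, True])  # layers 0 and 3
```

Both calls execute two of the four toy layers, but only the skipping variant can keep a late layer while dropping middle ones. A per-sequence controller of the kind the abstract describes would choose the `keep` mask once per input sequence.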

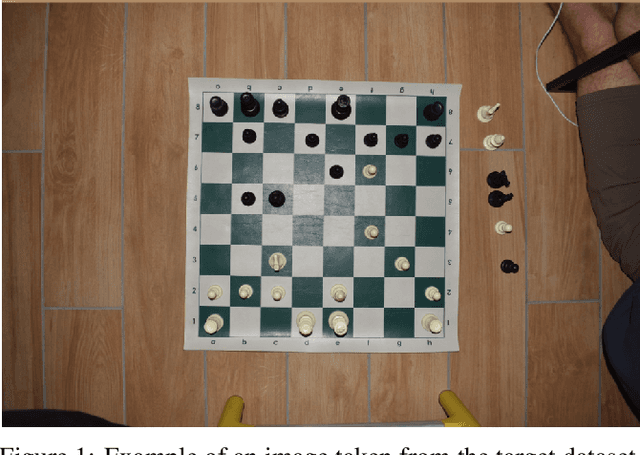

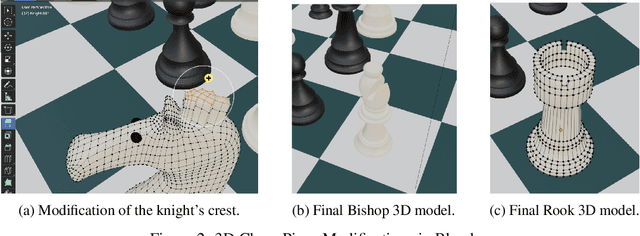

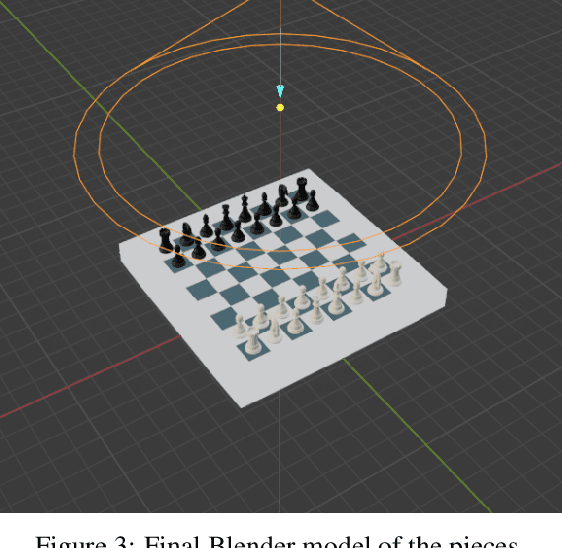

Unsupervised Domain Adaptation Approaches for Chessboard Recognition

Oct 19, 2024

Abstract: Chess involves extensive study and requires players to keep manual records of their matches, a process which is time-consuming and distracting. The lack of high-quality labeled photographs of chess boards, and the tediousness of manual labeling, have hindered the wide application of Deep Learning (DL) to automating this record-keeping process. This paper proposes an end-to-end pipeline that employs domain adaptation (DA) to predict the labels of real, top-view, unlabeled chessboard images using synthetic, labeled images. The pipeline is composed of a pre-processing phase which detects the board, crops the individual squares, and feeds them one at a time to a DL model. The model then predicts the labels of the squares and passes the ordered predictions to a post-processing pipeline which generates the Forsyth-Edwards Notation (FEN) of the position. The three approaches considered are the following: a VGG16 model pre-trained on ImageNet, defined here as the Base-Source model, fine-tuned to predict source domain squares and then used to predict target domain squares without any domain adaptation; an improved version of the Base-Source model which applies CORAL loss to some of the final fully connected layers of the VGG16 to implement DA; and a Domain Adversarial Neural Network (DANN) which uses the adversarial training of a domain discriminator to perform the DA. Although we opted not to use the labels of the target domain for this study, we also trained a baseline with the same architecture as the Base-Source model (named Base-Target) directly on the target domain in order to get an upper bound on the performance achievable through domain adaptation. The results show that the DANN model loses only 3% in accuracy compared to the Base-Target model while saving all the effort required to label the data.
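
The post-processing step described above, turning ordered per-square predictions into FEN, can be sketched as follows. This is a minimal illustration and not the authors' code: the function name `squares_to_fen_placement`, the `EMPTY` label, and the assumed ordering (rank 8 to rank 1, files a to h within each rank, with pieces already labeled by their FEN letters) are assumptions. Only the piece placement field is produced, since the other FEN fields (side to move, castling rights, and so on) cannot be read from a single board image.

```python
from typing import Sequence

# Hypothetical label used by the classifier for an empty square.
EMPTY = "empty"


def squares_to_fen_placement(labels: Sequence[str]) -> str:
    """Build the FEN piece placement field from 64 ordered square labels.

    Assumes labels run from a8 to h8, then a7 to h7, down to a1 to h1,
    and that occupied squares carry FEN piece letters (e.g. "P", "k").
    """
    if len(labels) != 64:
        raise ValueError("expected exactly 64 square labels")

    ranks = []
    for r in range(8):
        row = labels[r * 8:(r + 1) * 8]
        parts = []
        empty_run = 0  # consecutive empty squares are written as a digit
        for label in row:
            if label == EMPTY:
                empty_run += 1
                continue
            if empty_run:
                parts.append(str(empty_run))
                empty_run = 0
            parts.append(label)
        if empty_run:
            parts.append(str(empty_run))
        ranks.append("".join(parts))
    return "/".join(ranks)


# Usage: the standard starting position.
start_labels = (
    list("rnbqkbnr")
    + list("pppppppp")
    + [EMPTY] * 32
    + list("PPPPPPPP")
    + list("RNBQKBNR")
)
start_placement = squares_to_fen_placement(start_labels)
```

For the starting position, `start_placement` is `rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR`.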

Scavenging Hyena: Distilling Transformers into Long Convolution Models

Jan 31, 2024

Abstract: The rapid evolution of Large Language Models (LLMs), epitomized by architectures like GPT-4, has reshaped the landscape of natural language processing. This paper introduces a pioneering approach to address the efficiency concerns associated with LLM pre-training, proposing the use of knowledge distillation for cross-architecture transfer. Leveraging insights from the efficient Hyena mechanism, our method replaces attention heads in transformer models with Hyena, offering a cost-effective alternative to traditional pre-training while confronting the challenge of processing long contextual information, inherent in quadratic attention mechanisms. Unlike conventional compression-focused methods, our technique not only enhances inference speed but also surpasses pre-training in terms of both accuracy and efficiency. In the era of evolving LLMs, our work contributes to the pursuit of sustainable AI solutions, striking a balance between computational power and environmental impact.
