Vineel Nagisetty

OPSurv: Orthogonal Polynomials Quadrature Algorithm for Survival Analysis

Feb 02, 2024

Abstract: This paper introduces the Orthogonal Polynomials Quadrature Algorithm for Survival Analysis (OPSurv), a new method providing time-continuous functional outputs for both single and competing risks scenarios in survival analysis. OPSurv utilizes the initial zero condition of the Cumulative Incidence function and a unique decomposition of probability densities using orthogonal polynomials, allowing it to learn functional approximation coefficients for each risk event and construct Cumulative Incidence Function estimates via Gauss-Legendre quadrature. This approach effectively counters overfitting, particularly in competing risks scenarios, enhancing model expressiveness and control. The paper further details empirical validations and theoretical justifications of OPSurv, highlighting its robust performance as an advancement in survival analysis with competing risks.
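The quadrature step described in this abstract can be illustrated with a minimal sketch. It assumes the event density is already given as a Legendre series on a rescaled time axis; the function name `cumulative_incidence`, the coefficients, and the horizon `t_max` are hypothetical and not taken from the paper, which learns the coefficients rather than fixing them by hand.

```python
import numpy as np
from numpy.polynomial import legendre


def cumulative_incidence(coeffs, t, t_max=1.0, n_nodes=32):
    """Integrate a Legendre-series density over [0, t] by Gauss-Legendre quadrature.

    coeffs : Legendre coefficients of the density on the rescaled axis [-1, 1]
             (illustrative values here; OPSurv would learn them).
    t_max  : assumed time horizon mapped to the right end of [-1, 1].
    """
    nodes, weights = legendre.leggauss(n_nodes)  # nodes and weights on [-1, 1]
    s = 0.5 * t * (nodes + 1.0)                  # quadrature times in [0, t]
    x = 2.0 * s / t_max - 1.0                    # same times on the polynomial axis
    density = legendre.legval(x, coeffs)         # evaluate the series at each node
    return 0.5 * t * np.sum(weights * density)   # scaled weighted sum = integral


# Toy density f(s) = 0.5 + s on [0, 1], i.e. P0 + 0.5 * P1 on the rescaled axis.
coeffs = [1.0, 0.5]
for t in (0.0, 0.5, 1.0):
    print(t, cumulative_incidence(coeffs, t))
```

Because the integral runs from 0 to `t`, the estimate is exactly zero at `t = 0`, which mirrors the initial zero condition the abstract mentions.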

CGDTest: A Constrained Gradient Descent Algorithm for Testing Neural Networks

Apr 04, 2023

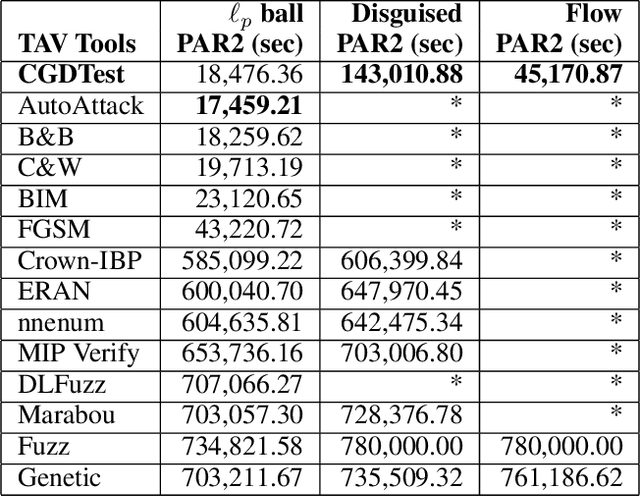

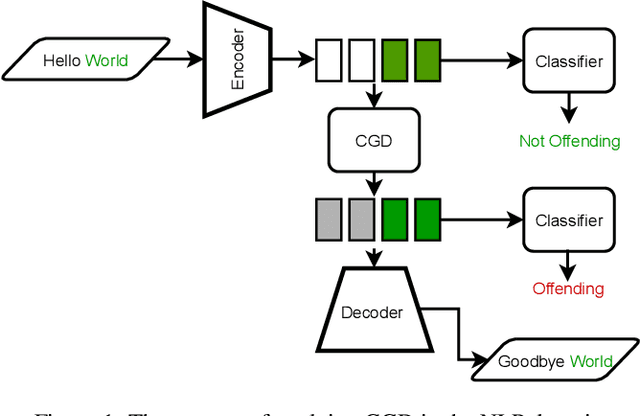

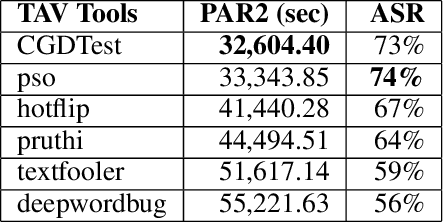

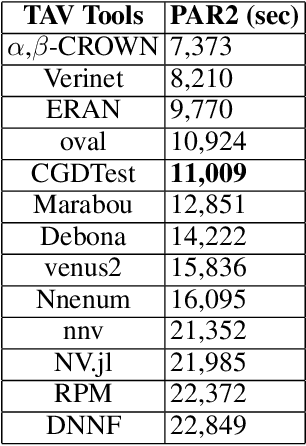

Abstract: In this paper, we propose a new Deep Neural Network (DNN) testing algorithm called the Constrained Gradient Descent (CGD) method, and an implementation we call CGDTest aimed at exposing security and robustness issues such as adversarial robustness and bias in DNNs. Our CGD algorithm is a gradient-descent (GD) method, with the twist that the user can also specify logical properties that characterize the kinds of inputs that the user may want. This functionality sets CGDTest apart from other similar DNN testing tools since it allows users to specify logical constraints to test DNNs not only for $\ell_p$ ball-based adversarial robustness but, more importantly, includes richer properties such as disguised and flow adversarial constraints, as well as adversarial robustness in the NLP domain. We showcase the utility and power of CGDTest via extensive experimentation in the context of vision and NLP domains, comparing against 32 state-of-the-art methods over these diverse domains. Our results indicate that CGDTest outperforms state-of-the-art testing tools for $\ell_p$ ball-based adversarial robustness, and is significantly superior in testing for other adversarial robustness, with improvements in PAR2 scores of over 1500% in some cases over the next best tool. Our evaluation shows that our CGD method outperforms competing methods we compared against in terms of expressibility (i.e., a rich constraint language and concomitant tool support to express a wide variety of properties), scalability (i.e., can be applied to very large real-world models with up to 138 million parameters), and generality (i.e., can be used to test a plethora of model architectures).
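The PAR2 score cited in this abstract is a penalized average runtime: a run that fails or exceeds the time limit is counted as twice the limit. The sketch below shows that standard definition only; the function name `par2_score`, the runtimes, and the timeout are illustrative assumptions, not values from the paper.

```python
def par2_score(runtimes, timeout):
    """Penalized average runtime with penalty factor 2.

    runtimes : per-instance runtimes in seconds; None marks an unsolved instance.
    timeout  : time limit in seconds (assumed here; the abstract does not state it).
    """
    penalized = [
        t if t is not None and t <= timeout else 2.0 * timeout
        for t in runtimes
    ]
    return sum(penalized) / len(penalized)


# Two solved instances and one unsolved one under a 100-second limit.
print(par2_score([10.0, None, 30.0], timeout=100.0))  # (10 + 200 + 30) / 3 = 80.0
```

Lower is better, so a tool that solves more instances within the limit can improve its PAR2 score sharply even when its solved-instance runtimes are similar.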

A Solver + Gradient Descent Training Algorithm for Deep Neural Networks

Jul 07, 2022

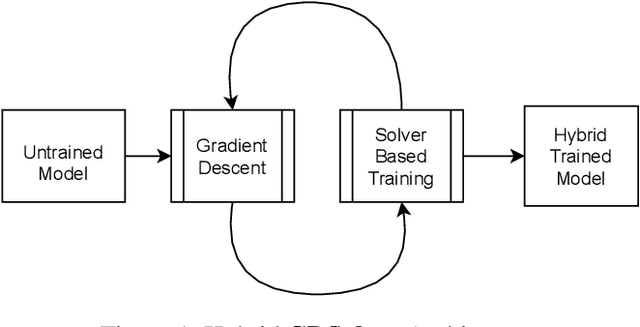

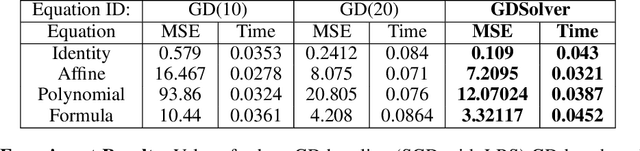

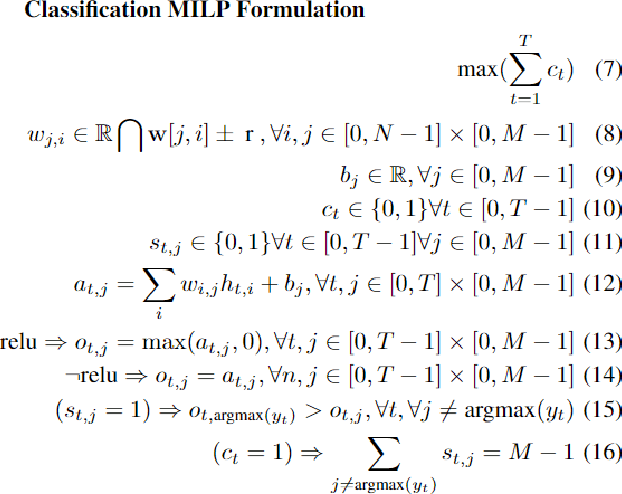

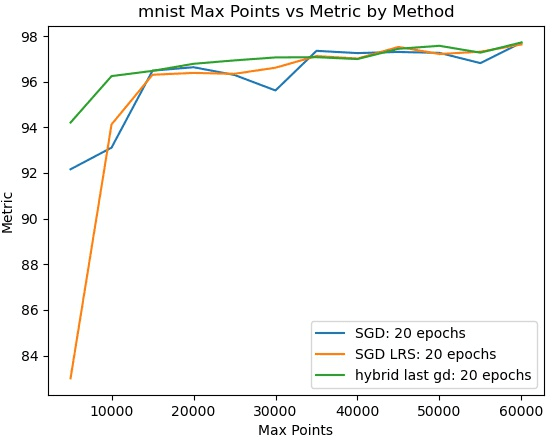

Abstract: We present a novel hybrid algorithm for training Deep Neural Networks that combines the state-of-the-art Gradient Descent (GD) method with a Mixed Integer Linear Programming (MILP) solver, outperforming GD and variants in terms of accuracy, as well as resource and data efficiency for both regression and classification tasks. Our GD+Solver hybrid algorithm, called GDSolver, works as follows: given a DNN $D$ as input, GDSolver invokes GD to partially train $D$ until it gets stuck in a local minimum, at which point GDSolver invokes an MILP solver to exhaustively search a region of the loss landscape around the weight assignments of $D$'s final layer parameters with the goal of tunnelling through and escaping the local minimum. The process is repeated until desired accuracy is achieved. In our experiments, we find that GDSolver not only scales well to additional data and very large model sizes, but also outperforms all other competing methods in terms of rates of convergence and data efficiency. For regression tasks, GDSolver produced models that, on average, had 31.5% lower MSE in 48% less time, and for classification tasks on MNIST and CIFAR10, GDSolver was able to achieve the highest accuracy over all competing methods, using only 50% of the training data that GD baselines required.
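The alternation this abstract describes (run GD until it stalls, then search a region around the current weights, then repeat) can be sketched on a toy problem. This is not the authors' implementation: a dense grid search stands in for the MILP solver, a single scalar weight stands in for the final-layer parameters, and the loss function, radius, and all names are hypothetical.

```python
import numpy as np


def loss(w):
    # Toy non-convex loss: local minimum near w = 1.13, global minimum near w = -1.30.
    return w**4 - 3.0 * w**2 + w


def grad(w):
    return 4.0 * w**3 - 6.0 * w + 1.0


def gradient_descent(w, lr=0.01, tol=1e-8, max_steps=10_000):
    """Plain GD that stops once the update is negligible (the run has stalled)."""
    for _ in range(max_steps):
        step = lr * grad(w)
        w -= step
        if abs(step) < tol:
            break
    return w


def region_search(w, radius=3.0, n_points=601):
    """Exhaustive grid search in a box around w (stand-in for the MILP solver)."""
    candidates = np.linspace(w - radius, w + radius, n_points)
    return float(candidates[np.argmin(loss(candidates))])


def hybrid_train(w, rounds=5):
    """Alternate GD and region search until the search finds nothing better."""
    for _ in range(rounds):
        w = gradient_descent(w)
        best = region_search(w)
        if loss(best) >= loss(w) - 1e-9:  # no improvement in the region: stop
            break
        w = best                          # jump out of the basin, then resume GD
    return w


w_gd = gradient_descent(2.0)
w_hybrid = hybrid_train(2.0)
print("GD only:", w_gd, loss(w_gd))
print("hybrid :", w_hybrid, loss(w_hybrid))
```

Starting from `w = 2.0`, GD alone settles in the shallower basin, while the hybrid loop reaches the deeper one. The grid search is only practical here because the search space is one-dimensional.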

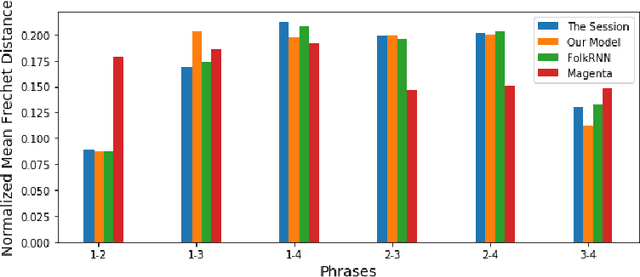

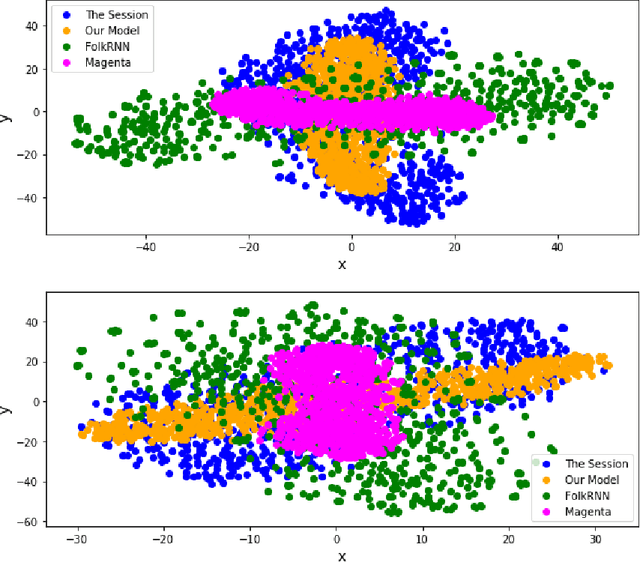

GANs & Reels: Creating Irish Music using a Generative Adversarial Network

Oct 29, 2020

Abstract: In this paper we present a method for algorithmic melody generation using a generative adversarial network without recurrent components. Music generation has been successfully done using recurrent neural networks, where the model learns sequence information that can help create authentic-sounding melodies. Here, we use a DC-GAN architecture with dilated convolutions and towers to capture sequential information as spatial image information, and learn long-range dependencies in fixed-length melody forms such as the Irish traditional reel.
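One way to see why dilated convolutions help with long-range dependencies is to compare receptive fields. For stacked stride-1 convolutions, each layer widens the receptive field by `(kernel_size - 1) * dilation`. The kernel size and dilation rates below are illustrative assumptions, not the configuration used in the paper.

```python
def receptive_field(kernel_size, dilations):
    """Receptive field (in input positions) of stacked stride-1 dilated convolutions."""
    field = 1
    for d in dilations:
        field += (kernel_size - 1) * d  # each layer widens the field by (k - 1) * d
    return field


# Four layers with kernel size 3: undilated versus exponentially growing dilation.
print(receptive_field(3, [1, 1, 1, 1]))  # 9
print(receptive_field(3, [1, 2, 4, 8]))  # 31
```

With the same number of layers and parameters, the dilated stack covers more than three times as many input positions, which is what lets a convolutional model relate notes that are far apart in a melody.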

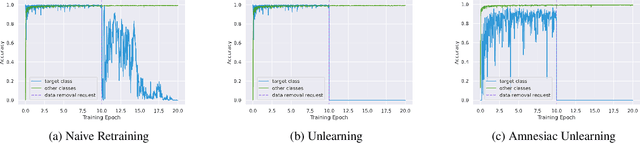

Amnesiac Machine Learning

Oct 21, 2020

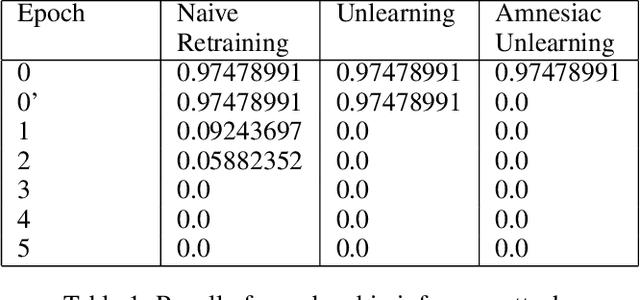

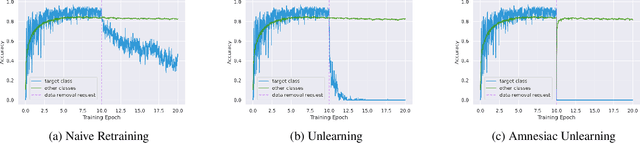

Abstract: The Right to be Forgotten is part of the recently enacted General Data Protection Regulation (GDPR) law that affects any data holder that has data on European Union residents. It gives EU residents the ability to request deletion of their personal data, including training records used to train machine learning models. Unfortunately, Deep Neural Network models are vulnerable to information-leaking attacks such as model inversion attacks, which extract class information from a trained model, and membership inference attacks, which determine the presence of an example in a model's training data. If a malicious party can mount an attack and learn private information that was meant to be removed, then it implies that the model owner has not properly protected their users' rights and their models may not be compliant with the GDPR law. In this paper, we present two efficient methods that address this question of how a model owner or data holder may delete personal data from models in such a way that they may not be vulnerable to model inversion and membership inference attacks while maintaining model efficacy. We start by presenting a real-world threat model that shows that simply removing training data is insufficient to protect users. We follow that up with two data removal methods, namely Unlearning and Amnesiac Unlearning, that enable model owners to protect themselves against such attacks while being compliant with regulations. We provide extensive empirical analysis that shows that these methods are indeed efficient, safe to apply, and effectively remove learned information about sensitive data from trained models while maintaining model efficacy.

LogicGAN: Logic-guided Generative Adversarial Networks

Feb 24, 2020

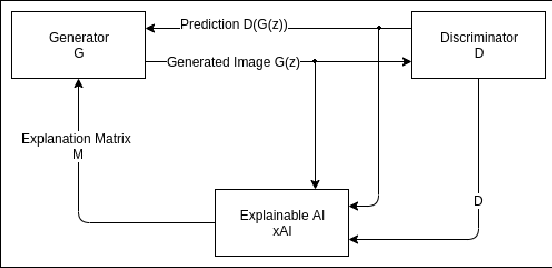

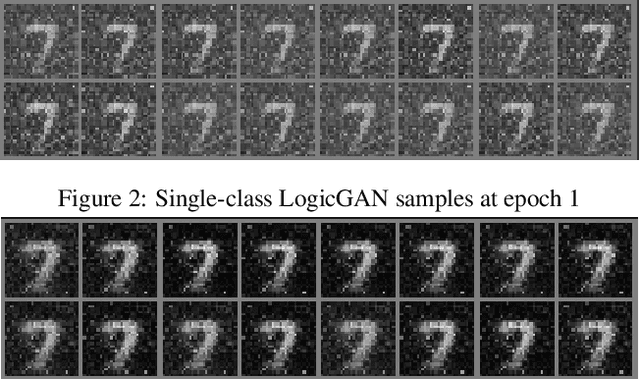

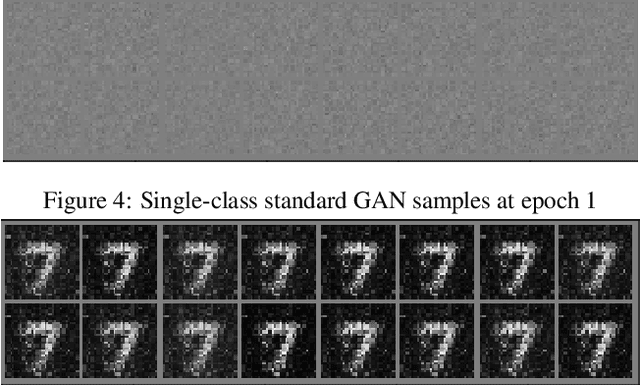

Abstract: Generative Adversarial Networks (GANs) are a revolutionary class of Deep Neural Networks (DNNs) that have been successfully used to generate realistic images, music, text, and other data. However, it is well known that GAN training can be notoriously resource-intensive and presents many challenges. Further, a potential weakness in GANs is that discriminator DNNs typically provide only one value (loss) of corrective feedback to generator DNNs (namely, the discriminator's assessment of the generated example). By contrast, we propose a new class of GAN we refer to as LogicGAN, that leverages recent advances in (logic-based) explainable AI (xAI) systems to provide a "richer" form of corrective feedback from discriminators to generators. Specifically, we modify the gradient descent process using xAI systems that specify the reason as to why the discriminator made the classification it did, thus providing the richer corrective feedback that helps the generator to better fool the discriminator. Using our approach, we show that LogicGANs learn much faster on MNIST data, achieving an improvement in data efficiency of 45% in single- and 12.73% in multi-class settings over standard GANs while maintaining the same quality as measured by Fréchet Inception Distance. Further, we argue that LogicGAN gives users greater control over how models learn than standard GAN systems.
