Vinay Amatya

Physics-informed Machine Learning of Parameterized Fundamental Diagrams

Aug 01, 2022

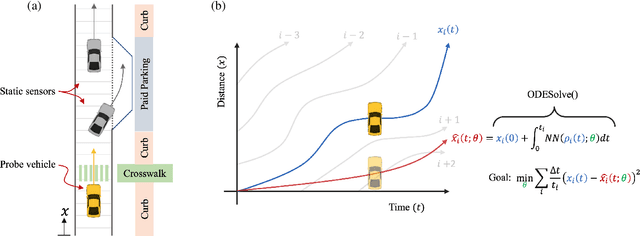

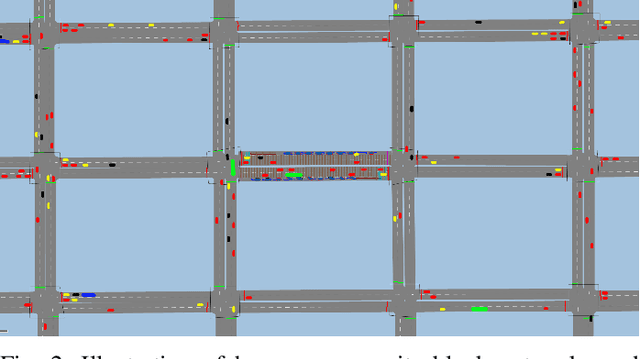

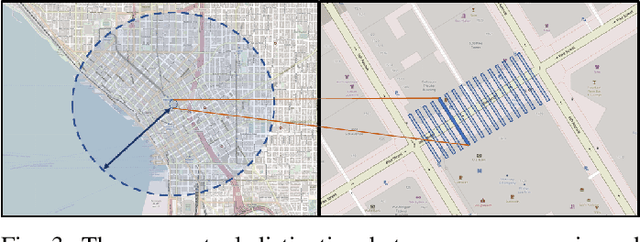

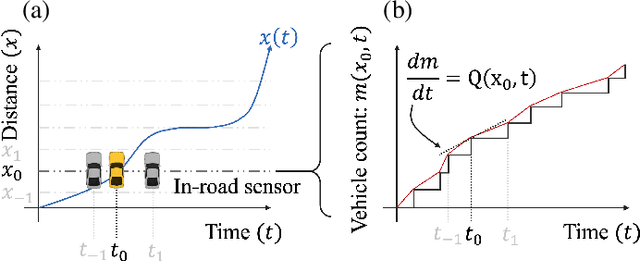

Abstract: Fundamental diagrams describe the relationship between speed, flow, and density for a given roadway configuration or set of configurations. These diagrams typically do not reflect, however, how speed-flow relationships change as a function of exogenous variables such as curb configuration, weather, or other contextual information. In this paper we present a machine learning methodology that respects the known engineering constraints and physical laws of roadway flux (those captured in fundamental diagrams) and show how it can be used to introduce contextual information into the generation of these diagrams. The modeling task is formulated as a probe vehicle trajectory reconstruction problem with Neural Ordinary Differential Equations (Neural ODEs). With the presented methodology, we extend the fundamental diagram to non-idealized roadway segments with potentially obstructed traffic data. For simulated data, we generalize this relationship by introducing contextual information at the learning stage, e.g., vehicle composition, driver behavior, and curb zoning configuration, and show how the speed-flow relationship changes as a function of these exogenous factors independent of roadway design.
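For readers unfamiliar with fundamental diagrams, a minimal sketch of a classical, non-learned one may help. It assumes the Greenshields model, in which speed falls linearly with density. This model is used here for illustration only and is not the Neural ODE method of the paper; the function names and the parameter values in the usage note are hypothetical.

```python
def greenshields_speed(density, free_flow_speed, jam_density):
    """Speed (km/h) under the Greenshields assumption: linear decay with density."""
    if not 0 <= density <= jam_density:
        raise ValueError("density must lie between 0 and jam_density")
    return free_flow_speed * (1.0 - density / jam_density)


def greenshields_flow(density, free_flow_speed, jam_density):
    """Flow (veh/h) from the identity flow = density * speed."""
    return density * greenshields_speed(density, free_flow_speed, jam_density)


def capacity(free_flow_speed, jam_density):
    """Maximum flow, reached at half the jam density in this model."""
    return greenshields_flow(jam_density / 2.0, free_flow_speed, jam_density)
```

With an assumed free-flow speed of 100 km/h and jam density of 120 veh/km, `greenshields_flow(60, 100, 120)` evaluates the flow at half the jam density, which is where this model places capacity. A parameterized fundamental diagram in the sense of the abstract would let quantities like these vary with contextual inputs rather than stay fixed.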

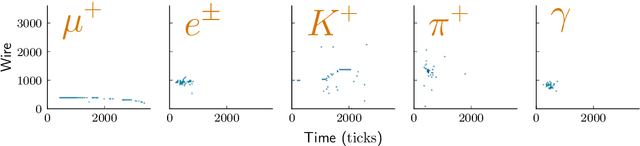

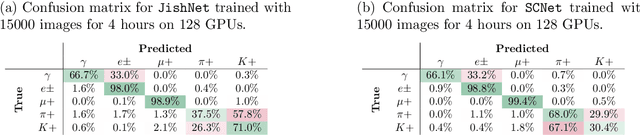

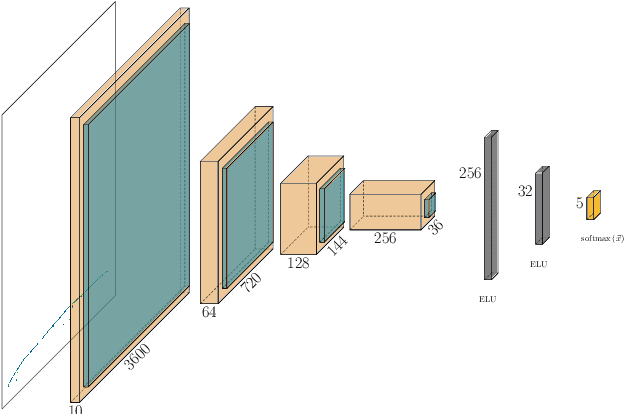

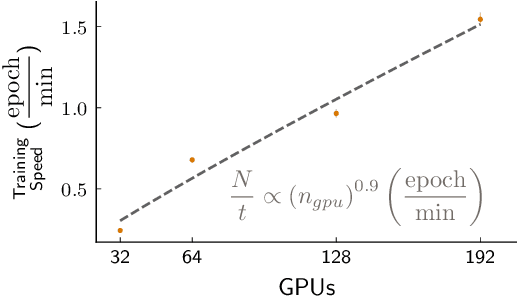

Scaling the training of particle classification on simulated MicroBooNE events to multiple GPUs

Apr 17, 2020

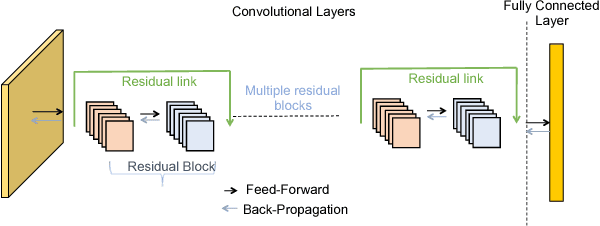

Abstract: Measurements in Liquid Argon Time Projection Chamber (LArTPC) neutrino detectors, such as the MicroBooNE detector at Fermilab, feature large, high-fidelity event images. Deep learning techniques have been extremely successful in photograph classification tasks, but their application to LArTPC event images is challenging due to the large size of the events. Events in these detectors are typically two orders of magnitude larger than images found in classical challenges, such as recognition of handwritten digits in the MNIST database or object recognition in the ImageNet database. Ideally, training would occur on many instances of the entire event data, instead of many instances of cropped regions of interest from the event data. However, such efforts lead to extremely long training cycles, which slow down the exploration of new network architectures and the hyperparameter scans needed to improve classification performance. We present studies of scaling a LArTPC classification problem on multiple architectures, spanning multiple nodes. The studies are carried out on simulated events in the MicroBooNE detector. We emphasize that it is beyond the scope of this study to optimize networks or extract the physics from any results here. Institutional computing at Pacific Northwest National Laboratory and the SummitDev machine at Oak Ridge National Laboratory's Leadership Computing Facility have been used. To our knowledge, this is the first use of state-of-the-art Convolutional Neural Networks for particle physics, and their attendant compute techniques, on the DOE Leadership Class Facilities. We expect benefits to accrue particularly to the Deep Underground Neutrino Experiment (DUNE) LArTPC program, the flagship US High Energy Physics (HEP) program for the coming decades.

GossipGraD: Scalable Deep Learning using Gossip Communication based Asynchronous Gradient Descent

Mar 15, 2018

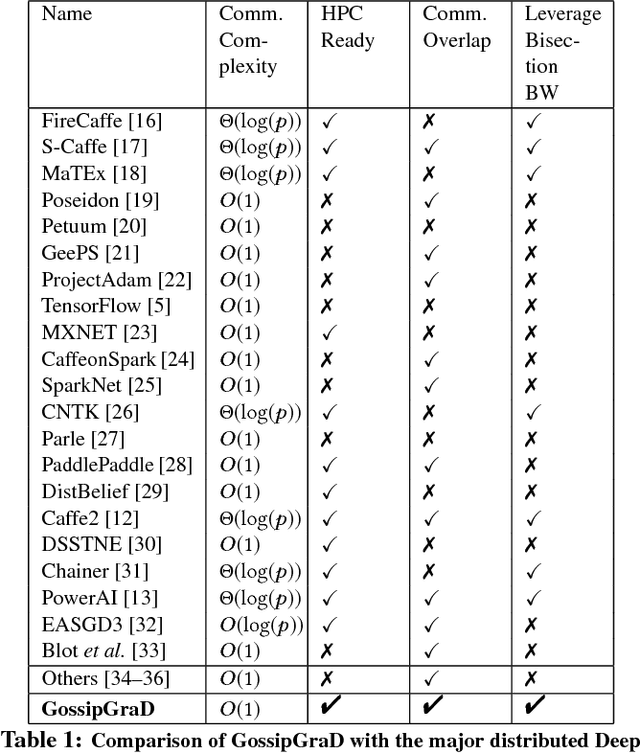

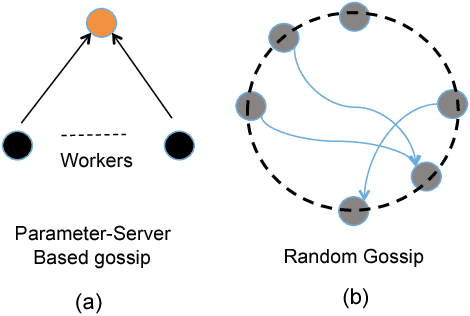

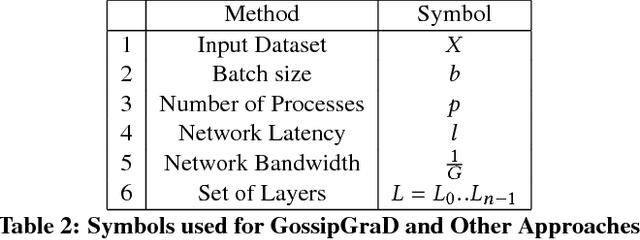

Abstract: In this paper, we present GossipGraD, a Stochastic Gradient Descent (SGD) algorithm based on a gossip communication protocol, for scaling Deep Learning (DL) algorithms on large-scale systems. The salient features of GossipGraD are: 1) reduction in overall communication complexity from Θ(log(p)) for p compute nodes in well-studied SGD to O(1), 2) model diffusion such that compute nodes exchange their updates (gradients) indirectly after every log(p) steps, 3) rotation of communication partners for facilitating direct diffusion of gradients, 4) asynchronous distributed shuffle of samples during the feedforward phase in SGD to prevent over-fitting, 5) asynchronous communication of gradients for further reducing the communication cost of SGD and GossipGraD. We implement GossipGraD for GPU and CPU clusters and use NVIDIA GPUs (Pascal P100) connected with InfiniBand, and Intel Knights Landing (KNL) connected with Aries network. We evaluate GossipGraD using the well-studied ImageNet-1K dataset (~250 GB) and widely studied neural network topologies such as GoogLeNet and ResNet50 (current winner of the ImageNet Large Scale Visual Recognition Challenge (ILSVRC)). Our performance evaluation using both KNL and Pascal GPUs indicates that GossipGraD can achieve perfect efficiency for these datasets and their associated neural network topologies. Specifically, for ResNet50, GossipGraD is able to achieve ~100% compute efficiency using 128 NVIDIA Pascal P100 GPUs while matching the top-1 classification accuracy published in literature.
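The first two features listed above (one exchange per node per step, and indirect diffusion to all nodes after log(p) steps) can be illustrated with a small single-process simulation. This is only a sketch under stated assumptions: the hypercube-style XOR partner schedule, the plain pairwise averaging, and all function names are chosen for illustration and are not taken from the GossipGraD implementation. Scalar values stand in for gradients, and the node count is assumed to be a power of two.

```python
def gossip_partner(rank, step, num_nodes):
    """Partner of `rank` at `step` under an assumed hypercube (XOR) schedule."""
    dims = num_nodes.bit_length() - 1  # log2(num_nodes) for a power of two
    return rank ^ (1 << (step % dims))


def gossip_round(values, step):
    """Each node averages with exactly one partner: O(1) exchanges per node."""
    num_nodes = len(values)
    return [
        0.5 * (values[rank] + values[gossip_partner(rank, step, num_nodes)])
        for rank in range(num_nodes)
    ]


def diffuse(values):
    """Run log2(p) gossip rounds, rotating partners each round."""
    num_nodes = len(values)
    if num_nodes < 2 or num_nodes & (num_nodes - 1):
        raise ValueError("number of nodes must be a power of two >= 2")
    for step in range(num_nodes.bit_length() - 1):
        values = gossip_round(values, step)
    return values
```

Calling `diffuse([0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0])` runs three rounds on eight simulated nodes. Under this schedule every node ends up holding the global mean, even though each node talked to only one partner per round, which is the trade-off against an all-reduce that the abstract describes.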
