Tomé Albuquerque

CountPath: Automating Fragment Counting in Digital Pathology

Mar 13, 2025

Abstract: Quality control of medical images is a critical component of digital pathology, ensuring that diagnostic images meet required standards. One pre-analytical task within this process is verifying that the number of specimen fragments on a slide matches the number documented in the macroscopic report. This step is important to ensure that the slides contain the appropriate diagnostic material from the grossing process, thereby guaranteeing the accuracy of subsequent microscopic examination and diagnosis. Traditionally, this assessment is performed manually, requiring considerable time and effort while being subject to substantial variability due to its subjective nature. To address these challenges, this study explores an automated approach to fragment counting using the YOLOv9 and Vision Transformer models. Our results demonstrate that the automated system achieves a level of performance comparable to expert assessments, offering a reliable and efficient alternative to manual counting. Additionally, we present findings on interobserver variability, showing that the automated approach achieves an accuracy of 86%, which falls within the range of variation observed among experts (82-88%), further supporting its potential for integration into routine pathology workflows.

Characterizing the Interpretability of Attention Maps in Digital Pathology

Jul 02, 2024

Abstract: Interpreting machine learning model decisions is crucial for high-risk applications like healthcare. In digital pathology, large whole slide images (WSIs) are decomposed into smaller tiles, and tile-derived features are processed by attention-based multiple instance learning (ABMIL) models to predict WSI-level labels. These networks generate tile-specific attention weights, which can be visualized as attention maps for interpretability. However, a standardized evaluation framework for these maps is lacking, which calls into question their reliability and their ability to detect spurious correlations that can mislead models. We herein propose a framework to assess the ability of attention networks to attend to relevant features in digital pathology by creating artificial model confounders and using dedicated interpretability metrics. Models are trained and evaluated on data with tile modifications correlated with WSI labels, enabling the analysis of model sensitivity to artificial confounders and the accuracy of attention maps in highlighting them. Confounders are introduced either through synthetic tile modifications or through tile ablations based on their specific image-based features, with the latter being used to assess more clinically relevant scenarios. We also analyze the impact of varying confounder quantities at both the tile and WSI levels. Our results show that ABMIL models perform as desired within our framework. While attention maps generally highlight relevant regions, their robustness is affected by the type and number of confounders. Our versatile framework has the potential to be used in the evaluation of various methods and the exploration of image-based features driving model predictions, which could aid in biomarker discovery.
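To make the notion of tile-specific attention weights concrete, the following is a minimal pure-Python sketch of attention-based MIL pooling in its commonly used form (a softmax over per-tile scores, followed by a weighted sum of tile features). The abstract does not specify the exact architecture, so the function name `abmil_pool`, the projection matrix `V`, and the attention vector `w` are hypothetical and chosen only for illustration.

```python
import math


def abmil_pool(tiles, V, w):
    """Illustrative attention pooling over tile features (not the paper's code).

    tiles: list of K tile feature vectors, each of length D
    V:     hypothetical projection matrix, given as L rows of length D
    w:     hypothetical attention vector of length L
    Returns (attention_weights, slide_embedding).
    """
    # One unnormalized score per tile: w^T tanh(V h_k)
    scores = []
    for h in tiles:
        hidden = [math.tanh(sum(v * x for v, x in zip(row, h))) for row in V]
        scores.append(sum(a * u for a, u in zip(w, hidden)))

    # Numerically stable softmax turns scores into attention weights
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    attention = [e / total for e in exps]

    # Slide-level embedding is the attention-weighted sum of tile features
    dim = len(tiles[0])
    embedding = [sum(a * h[d] for a, h in zip(attention, tiles)) for d in range(dim)]
    return attention, embedding


# Toy example: three tiles with 2-D features (made-up values)
tiles = [[0.0, 0.0], [1.0, 1.0], [3.0, 3.0]]
V = [[1.0, 0.0], [0.0, 1.0]]
w = [1.0, 1.0]
attention, embedding = abmil_pool(tiles, V, w)
```

The `attention` list holds one weight per tile and sums to 1; mapping each weight back to its tile position on the slide is what yields an attention map. In a trained model `V` and `w` would be learned, whereas here they are fixed toy values.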

Unimodal Distributions for Ordinal Regression

Mar 08, 2023

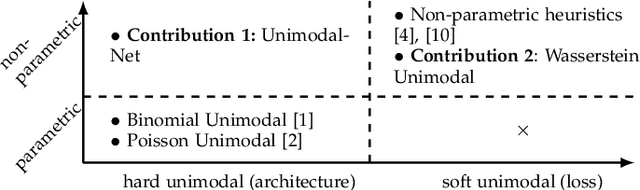

Abstract: In many real-world prediction tasks, class labels contain information about the relative order between labels that is not captured by commonly used loss functions such as multicategory cross-entropy. Recently, the preference for unimodal distributions in the output space has been incorporated into models and loss functions to account for such ordering information. However, current approaches rely on heuristics that lack a theoretical foundation. Here, we propose two new approaches to incorporate the preference for unimodal distributions into the predictive model. We analyse the set of unimodal distributions in the probability simplex and establish fundamental properties. We then propose a new architecture that imposes unimodal distributions and a new loss term that relies on the notion of projection onto a set to promote unimodality. Experiments show that the new architecture achieves top-2 performance, and that the proposed loss term is very competitive while maintaining high unimodality.
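As a small illustration of what unimodality means for an ordinal output, the sketch below checks whether a predicted probability vector rises (weakly) to a single peak and then falls (weakly). This is only a property check under that standard definition; it is not the architecture or the projection-based loss term proposed in the paper, and the function name `is_unimodal` and the tolerance `tol` are hypothetical.

```python
def is_unimodal(probs, tol=1e-12):
    """Return True if probs is non-decreasing up to its mode and non-increasing after it."""
    # Index of the first maximum; plateaus around the peak are allowed
    mode = max(range(len(probs)), key=probs.__getitem__)
    rising = all(probs[i] <= probs[i + 1] + tol for i in range(mode))
    falling = all(probs[i] + tol >= probs[i + 1] for i in range(mode, len(probs) - 1))
    return rising and falling


# Made-up predictions over four ordered classes
single_peak = [0.1, 0.6, 0.2, 0.1]   # mass concentrated around class 1
two_peaks = [0.4, 0.1, 0.4, 0.1]     # mass split between classes 0 and 2

single_peak_ok = is_unimodal(single_peak)
two_peaks_ok = is_unimodal(two_peaks)
```

The second vector is the kind of output an ordinal model should avoid: it assigns high probability to two non-adjacent classes while giving little to the class between them.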
