Todor Stefanov

P2W: From Power Traces to Weights Matrix -- An Unconventional Transfer Learning Approach

Feb 20, 2025Abstract:The rapid growth of deploying machine learning (ML) models within embedded systems on a chip (SoCs) has led to transformative shifts in fields like healthcare and autonomous vehicles. One of the primary challenges for training such embedded ML models is the lack of publicly available high-quality training data. Transfer learning approaches address this challenge by utilizing the knowledge encapsulated in an existing ML model as a starting point for training a new ML model. However, existing transfer learning approaches require direct access to the existing model which is not always feasible, especially for ML models deployed on embedded SoCs. Therefore, in this paper, we introduce a novel unconventional transfer learning approach to train a new ML model by extracting and using weights from an existing ML model running on an embedded SoC without having access to the model within the SoC. Our approach captures power consumption measurements from the SoC while it is executing the ML model and translates them to an approximated weights matrix used to initialize the new ML model. This improves the learning efficiency and predictive performance of the new model, especially in scenarios with limited data available to train the model. Our novel approach can effectively increase the accuracy of the new ML model up to 3 times compared to classical training methods using the same amount of limited training data.

SlimSeiz: Efficient Channel-Adaptive Seizure Prediction Using a Mamba-Enhanced Network

Oct 13, 2024Abstract:Epileptic seizures cause abnormal brain activity, and their unpredictability can lead to accidents, underscoring the need for long-term seizure prediction. Although seizures can be predicted by analyzing electroencephalogram (EEG) signals, existing methods often require too many electrode channels or larger models, limiting mobile usability. This paper introduces a SlimSeiz framework that utilizes adaptive channel selection with a lightweight neural network model. SlimSeiz operates in two states: the first stage selects the optimal channel set for seizure prediction using machine learning algorithms, and the second stage employs a lightweight neural network based on convolution and Mamba for prediction. On the Children's Hospital Boston-MIT (CHB-MIT) EEG dataset, SlimSeiz can reduce channels from 22 to 8 while achieving a satisfactory result of 94.8% accuracy, 95.5% sensitivity, and 94.0% specificity with only 21.2K model parameters, matching or outperforming larger models' performance. We also validate SlimSeiz on a new EEG dataset, SRH-LEI, collected from Shanghai Renji Hospital, demonstrating its effectiveness across different patients. The code and SRH-LEI dataset are available at https://github.com/guoruilu/SlimSeiz.

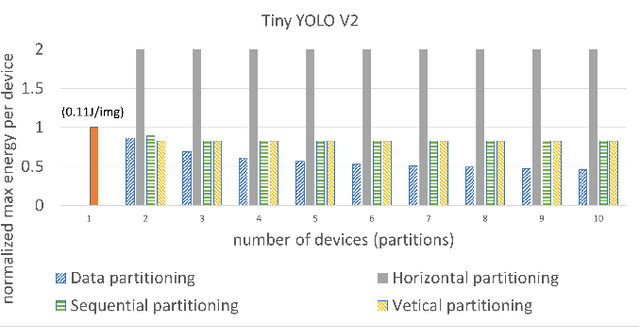

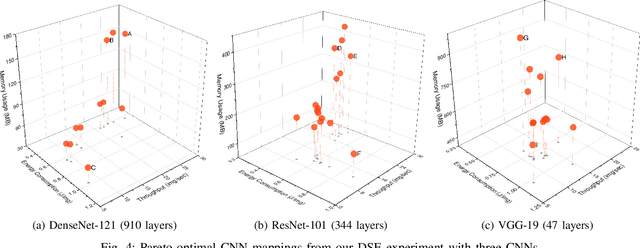

The Effects of Partitioning Strategies on Energy Consumption in Distributed CNN Inference at The Edge

Oct 15, 2022

Abstract:Nowadays, many AI applications utilizing resource-constrained edge devices (e.g., small mobile robots, tiny IoT devices, etc.) require Convolutional Neural Network (CNN) inference on a distributed system at the edge due to limited resources of a single edge device to accommodate and execute a large CNN. There are four main partitioning strategies that can be utilized to partition a large CNN model and perform distributed CNN inference on multiple devices at the edge. However, to the best of our knowledge, no research has been conducted to investigate how these four partitioning strategies affect the energy consumption per edge device. Such an investigation is important because it will reveal the potential of these partitioning strategies to be used effectively for reduction of the per-device energy consumption when a large CNN model is deployed for distributed inference at the edge. Therefore, in this paper, we investigate and compare the per-device energy consumption of CNN model inference at the edge on a distributed system when the four partitioning strategies are utilized. The goal of our investigation and comparison is to find out which partitioning strategies (and under what conditions) have the highest potential to decrease the energy consumption per edge device when CNN inference is performed at the edge on a distributed system.

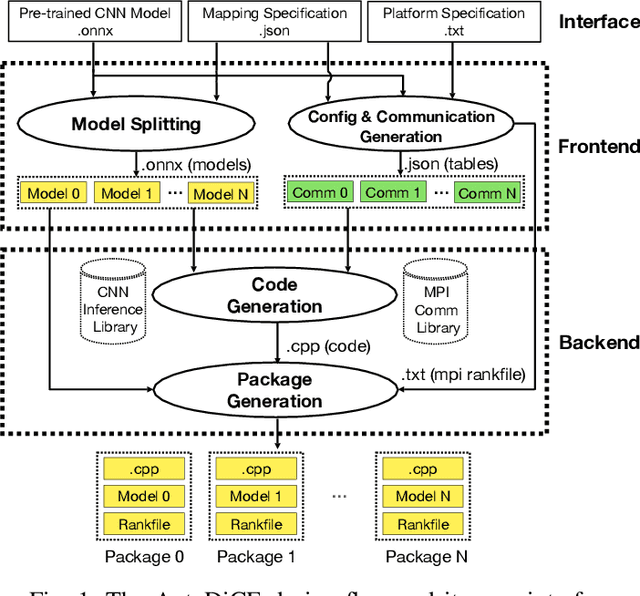

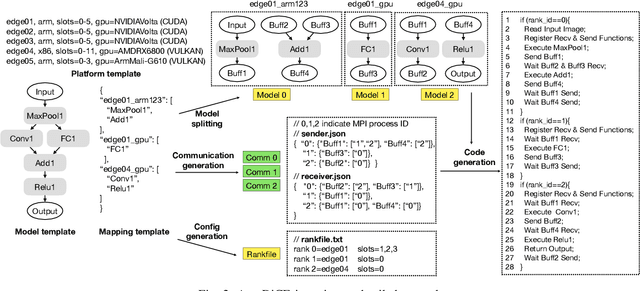

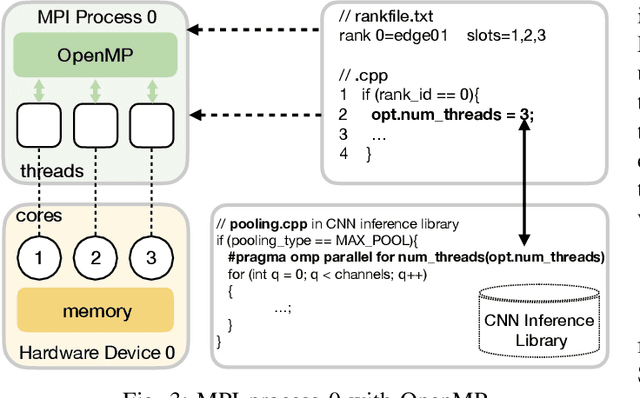

AutoDiCE: Fully Automated Distributed CNN Inference at the Edge

Jul 20, 2022

Abstract:Deep Learning approaches based on Convolutional Neural Networks (CNNs) are extensively utilized and very successful in a wide range of application areas, including image classification and speech recognition. For the execution of trained CNNs, i.e. model inference, we nowadays witness a shift from the Cloud to the Edge. Unfortunately, deploying and inferring large, compute and memory intensive CNNs on edge devices is challenging because these devices typically have limited power budgets and compute/memory resources. One approach to address this challenge is to leverage all available resources across multiple edge devices to deploy and execute a large CNN by properly partitioning the CNN and running each CNN partition on a separate edge device. Although such distribution, deployment, and execution of large CNNs on multiple edge devices is a desirable and beneficial approach, there currently does not exist a design and programming framework that takes a trained CNN model, together with a CNN partitioning specification, and fully automates the CNN model splitting and deployment on multiple edge devices to facilitate distributed CNN inference at the Edge. Therefore, in this paper, we propose a novel framework, called AutoDiCE, for automated splitting of a CNN model into a set of sub-models and automated code generation for distributed and collaborative execution of these sub-models on multiple, possibly heterogeneous, edge devices, while supporting the exploitation of parallelism among and within the edge devices. Our experimental results show that AutoDiCE can deliver distributed CNN inference with reduced energy consumption and memory usage per edge device, and improved overall system throughput at the same time.

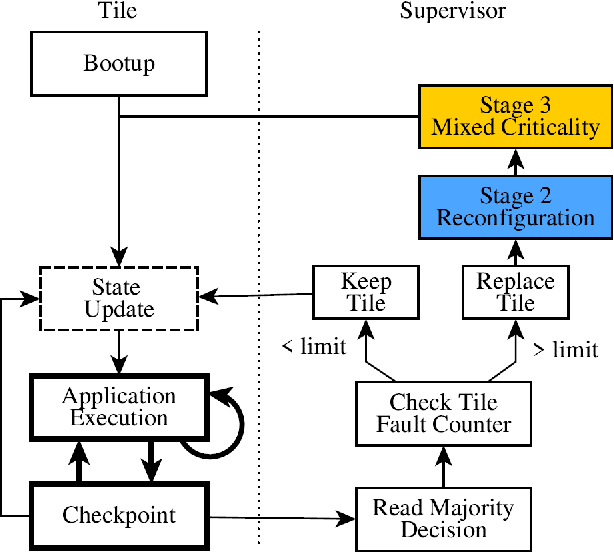

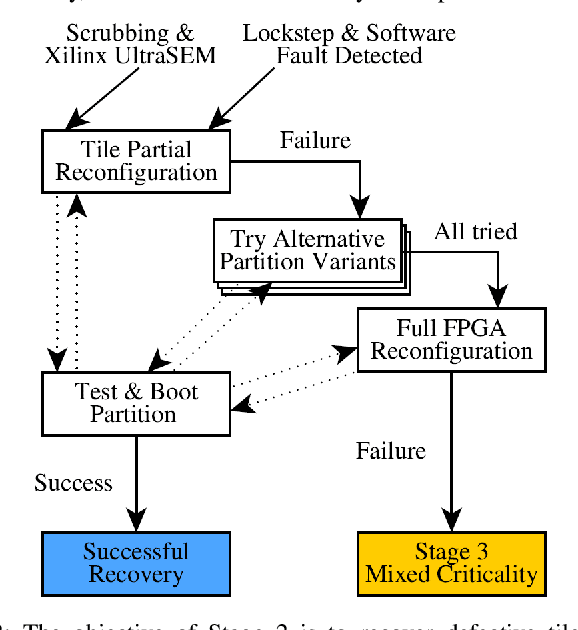

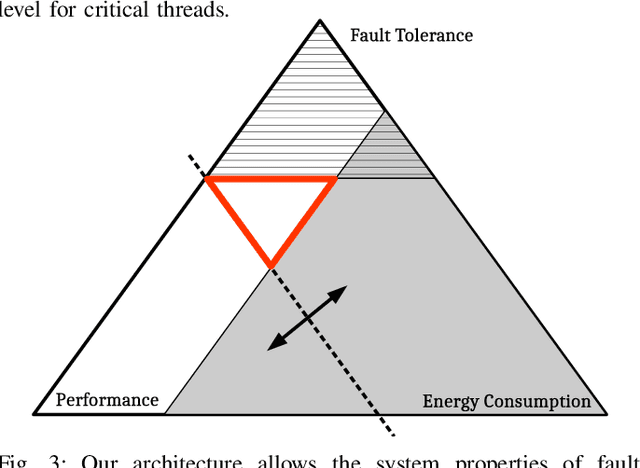

Fault-Tolerant Nanosatellite Computing on a Budget

Mar 21, 2019

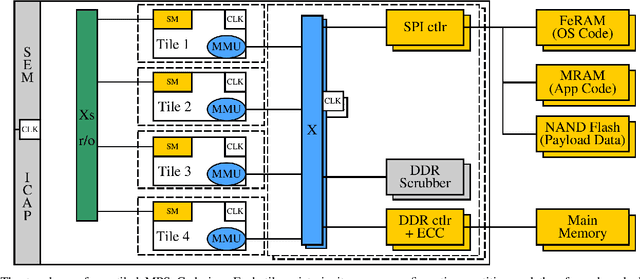

Abstract:Micro- and nanosatellites have become popular platforms for a variety of commercial and scientific applications, but today are considered suitable mainly for short and low-priority space missions due to their low reliability. In part, this can be attributed to their reliance upon cheap, low-feature size, COTS components originally designed for embedded and mobile-market applications, for which traditional hardware-voting concepts are ineffective. Software-fault-tolerance concepts have been shown effective for such systems, but have largely been ignored by the space industry due to low maturity, as most have only been researched in theory. In practice, designers of payload instruments and miniaturized satellites are usually forced to sacrifice reliability in favor deliver the level of performance necessary for cutting-edge science and innovative commercial applications. Thus, we developed a software-fault-tolerance-approach based upon thread-level coarse-grain lockstep, which was validated using fault-injection. To offer strong long-term fault coverage, our architecture is implemented as tiled MPSoC on an FPGA, utilizing partial reconfiguration, as well as mixed criticality. This architecture can satisfy the high performance requirements of current and future scientific and commercial space missions at very low cost, while offering the strong fault-coverage guarantees necessary for platform control even for missions with a long duration. This architecture was developed for a 4-year ESA project. Together with two industrial partners, we are developing a prototype to then undergo radiation testing.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge