Theodor Wulff

Joint Action Language Modelling for Transparent Policy Execution

Apr 14, 2025

Abstract: An agent's intention often remains hidden behind the black-box nature of embodied policies. Communicating through natural language statements that describe the next action can make the agent's behavior transparent. We aim to build transparent behavior directly into the learning process by transforming policy learning into a language generation problem and combining it with traditional autoregressive modelling. The resulting model produces transparent natural language statements followed by tokens representing the specific actions needed to solve long-horizon tasks in the Language-Table environment. Following previous work, the model learns to produce a policy represented by special discretized tokens in an autoregressive manner. We place special emphasis on investigating the relationship between predicting actions and producing high-quality language for a transparent agent. We find that in many cases both the quality of the action trajectory and the quality of the transparent statement increase when the two are generated simultaneously.
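
A minimal sketch of how a continuous Language-Table action might be turned into discrete tokens that follow a natural language statement in a single target sequence. Uniform per-dimension binning is assumed here; the bin count (`NUM_BINS`), the action range (`LOW`, `HIGH`), the `<act_k>` token format and all helper names are hypothetical illustration choices, not the paper's actual tokenization.

```python
from typing import List, Sequence

# Hypothetical discretization settings (assumptions, not taken from the paper).
NUM_BINS = 256
LOW, HIGH = -1.0, 1.0


def action_to_tokens(action: Sequence[float]) -> List[str]:
    """Map each continuous action dimension to a special token <act_k>."""
    tokens = []
    for value in action:
        clipped = min(max(value, LOW), HIGH)  # keep values inside the binned range
        idx = int((clipped - LOW) / (HIGH - LOW) * NUM_BINS)
        idx = min(idx, NUM_BINS - 1)  # HIGH itself falls into the last bin
        tokens.append(f"<act_{idx}>")
    return tokens


def tokens_to_action(tokens: Sequence[str]) -> List[float]:
    """Decode <act_k> tokens back to the centers of their bins."""
    width = (HIGH - LOW) / NUM_BINS
    action = []
    for tok in tokens:
        idx = int(tok[len("<act_"):-1])
        action.append(LOW + (idx + 0.5) * width)
    return action


def build_target(statement: str, action: Sequence[float]) -> str:
    """Transparent statement first, then the action tokens, as one sequence."""
    return statement + " " + " ".join(action_to_tokens(action))
```

For example, `build_target("push the red block left", [-1.0, 0.0])` yields `"push the red block left <act_0> <act_128>"`. Decoding maps each token to its bin center, so the round-trip error per dimension is at most half a bin width.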

Balancing long- and short-term dynamics for the modeling of saliency in videos

Apr 08, 2025

Abstract: The role of long- and short-term dynamics in salient object detection in videos is under-researched. We present a Transformer-based approach that learns a joint representation of video frames and past saliency information. Our model embeds long- and short-term information to detect dynamically shifting saliency in video. We provide our model with a stream of video frames and past saliency maps, which act as a prior for the next prediction, and extract spatiotemporal tokens from both modalities. Decomposing the frame sequence into tokens lets the model incorporate short-term information within each token while making long-term connections between tokens throughout the sequence. The core of the system is a dual-stream Transformer architecture that processes the extracted sequences independently before fusing the two modalities. Additionally, we apply a saliency-based masking scheme to the input frames to learn an embedding that facilitates recognizing deviations from previous outputs. We observe that the additional prior information aids the initial detection of the salient location. Our findings indicate that the ratio of spatiotemporal long- to short-term features directly impacts the model's performance: increasing the short-term context helps only up to a certain threshold, whereas expanding the long-term context greatly benefits performance.
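
The abstract does not specify how spatiotemporal tokens are extracted; one common option is ViViT-style tubelet extraction, sketched below as an assumption. Each token spans `t` consecutive frames and a `p`×`p` patch (short-term context inside the token), while attention across tokens can connect positions along the whole sequence (long-term context). The function name `extract_tubelets`, the single-channel `[T][H][W]` layout and the tubelet sizes are hypothetical.

```python
from typing import List, Tuple

Video = List[List[List[float]]]  # [T][H][W], single channel for simplicity
Token = Tuple[Tuple[int, int, int], List[float]]  # (time, row, col) index, flattened values


def extract_tubelets(video: Video, t: int, p: int) -> List[Token]:
    """Split a video into non-overlapping t x p x p tubelets (hypothetical tokenizer)."""
    T, H, W = len(video), len(video[0]), len(video[0][0])
    if T % t or H % p or W % p:
        raise ValueError("video dimensions must be divisible by the tubelet size")
    tokens: List[Token] = []
    for ti in range(T // t):
        for hi in range(H // p):
            for wi in range(W // p):
                # Flatten frames first, then rows, then columns within the tubelet.
                values = [
                    video[ti * t + dt][hi * p + dy][wi * p + dx]
                    for dt in range(t)
                    for dy in range(p)
                    for dx in range(p)
                ]
                tokens.append(((ti, hi, wi), values))
    return tokens
```

With an 8-frame 4×4 clip, `t=2, p=2` yields 16 tokens of 8 values each, whereas `t=4` yields 8 tokens of 16 values: more short-term context per token, but fewer temporal positions for attention to connect.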

Noise-Free Explanation for Driving Action Prediction

Jul 08, 2024

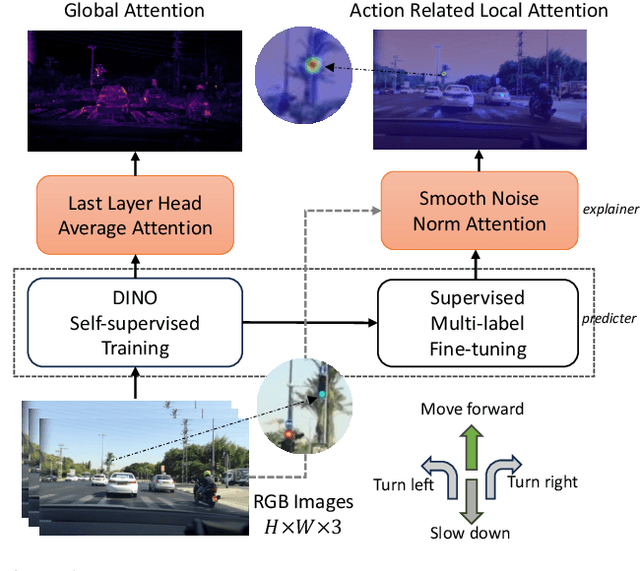

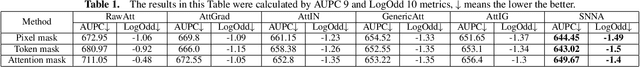

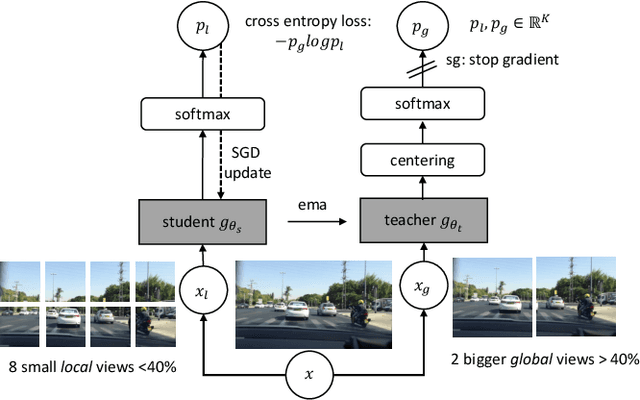

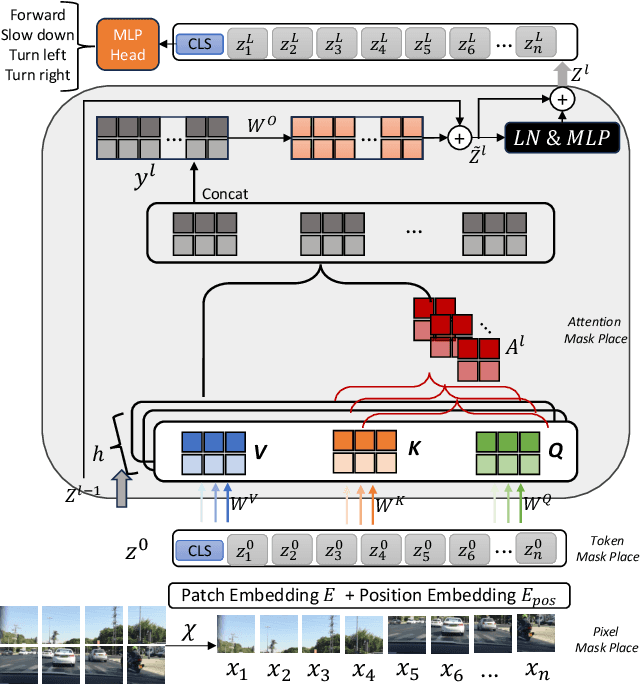

Abstract: Although attention mechanisms have enabled considerable progress in Transformer-based architectures across various Artificial Intelligence (AI) domains, their inner workings remain underexplored. Existing explanation methods differ in emphasis but are rather one-sided: they primarily analyse the attention mechanisms or gradient-based attribution while neglecting the magnitudes of input feature values or the skip-connection module. Moreover, they inevitably introduce spurious, noisy pixel attributions unrelated to the model's decision, which undermines human trust in the resulting visualization. Hence, we propose an easy-to-implement but effective remedy for this flaw: Smooth Noise Norm Attention (SNNA). We weight the attention by the norm of the transformed value vector and guide the label-specific signal with the attention gradient, then randomly sample input perturbations and average the corresponding gradients to produce noise-free attributions. Instead of evaluating the explanation method on binary or multi-class classification tasks as in previous works, we explore the more complex multi-label classification scenario, namely the driving action prediction task, and train a dedicated model for it. Both qualitative and quantitative evaluation results show that SNNA outperforms other SOTA attention-based explainable methods in generating clearer visual explanation maps and in ranking input pixel importance.
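
The ingredients named above can be sketched in plain Python. This is a hypothetical composition for illustration, not the authors' SNNA implementation: `norm_weighted_attention` multiplies each attention weight by the norm of the corresponding transformed value vector and by the attention gradient for the target label (clamping negative gradients to zero is an assumption), and `smooth_attribution` averages attributions over Gaussian-perturbed copies of the input, in the spirit of SmoothGrad. The attention maps, value norms and gradients are assumed to come from the trained model, and `attribution_fn` stands in for a hypothetical forward and backward pass.

```python
import random
from typing import Callable, List, Sequence

Matrix = List[List[float]]


def norm_weighted_attention(
    attn: Matrix, value_norms: Sequence[float], attn_grad: Matrix
) -> List[float]:
    """Per-token relevance from one attention map (hypothetical composition).

    attn[i][j]:      attention from query token i to key token j
    value_norms[j]:  norm of the transformed value vector of token j
    attn_grad[i][j]: gradient of the target label's score w.r.t. attn[i][j]
    """
    n = len(value_norms)
    relevance = [0.0] * n
    for i in range(len(attn)):
        for j in range(n):
            # Norm-weighted attention, gated by the positive part of the gradient.
            relevance[j] += max(attn_grad[i][j], 0.0) * attn[i][j] * value_norms[j]
    rows = max(len(attn), 1)
    return [r / rows for r in relevance]  # average over query rows


def smooth_attribution(
    attribution_fn: Callable[[List[float]], List[float]],
    x: Sequence[float],
    sigma: float,
    n_samples: int,
    seed: int = 0,
) -> List[float]:
    """Average attributions over Gaussian-perturbed inputs (SmoothGrad-style)."""
    if n_samples < 1:
        raise ValueError("n_samples must be at least 1")
    rng = random.Random(seed)
    total = [0.0] * len(x)
    for _ in range(n_samples):
        noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
        total = [a + b for a, b in zip(total, attribution_fn(noisy))]
    return [t / n_samples for t in total]
```

With `sigma=0` the smoothing is a no-op; in practice `sigma` and `n_samples` trade attribution smoothness against compute, since each sample costs an extra forward and backward pass.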