Teng-Yun Hsiao

Revisiting Test-Time Scaling: A Survey and a Diversity-Aware Method for Efficient Reasoning

Jun 05, 2025Abstract:Test-Time Scaling (TTS) improves the reasoning performance of Large Language Models (LLMs) by allocating additional compute during inference. We conduct a structured survey of TTS methods and categorize them into sampling-based, search-based, and trajectory optimization strategies. We observe that reasoning-optimized models often produce less diverse outputs, which limits TTS effectiveness. To address this, we propose ADAPT (A Diversity Aware Prefix fine-Tuning), a lightweight method that applies prefix tuning with a diversity-focused data strategy. Experiments on mathematical reasoning tasks show that ADAPT reaches 80% accuracy using eight times less compute than strong baselines. Our findings highlight the essential role of generative diversity in maximizing TTS effectiveness.

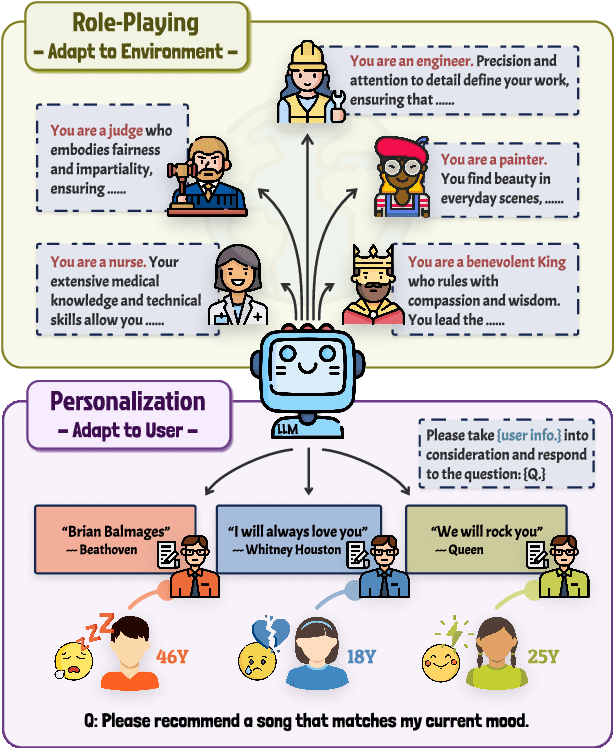

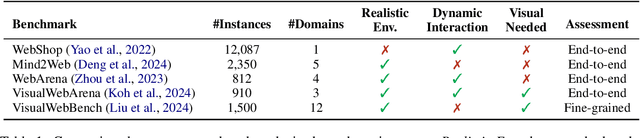

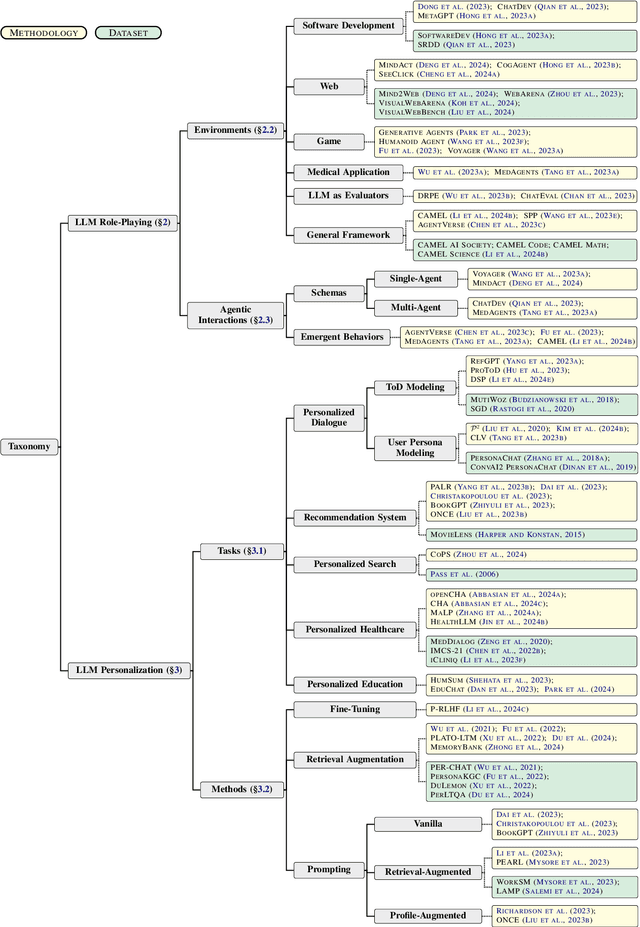

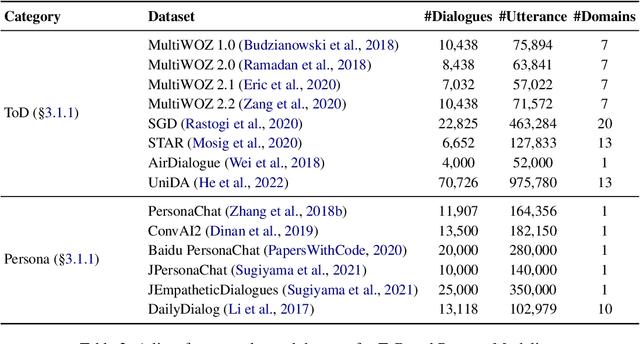

Two Tales of Persona in LLMs: A Survey of Role-Playing and Personalization

Jun 03, 2024

Abstract:Recently, methods investigating how to adapt large language models (LLMs) for specific scenarios have gained great attention. Particularly, the concept of \textit{persona}, originally adopted in dialogue literature, has re-surged as a promising avenue. However, the growing research on persona is relatively disorganized, lacking a systematic overview. To close the gap, we present a comprehensive survey to categorize the current state of the field. We identify two lines of research, namely (1) LLM Role-Playing, where personas are assigned to LLMs, and (2) LLM Personalization, where LLMs take care of user personas. To the best of our knowledge, we present the first survey tailored for LLM role-playing and LLM personalization under the unified view of persona, including taxonomy, current challenges, and potential directions. To foster future endeavors, we actively maintain a paper collection available to the community: https://github.com/MiuLab/PersonaLLM-Survey

Uniform Memory Retrieval with Larger Capacity for Modern Hopfield Models

Apr 04, 2024Abstract:We propose a two-stage memory retrieval dynamics for modern Hopfield models, termed $\mathtt{U\text{-}Hop}$, with enhanced memory capacity. Our key contribution is a learnable feature map $\Phi$ which transforms the Hopfield energy function into a kernel space. This transformation ensures convergence between the local minima of energy and the fixed points of retrieval dynamics within the kernel space. Consequently, the kernel norm induced by $\Phi$ serves as a novel similarity measure. It utilizes the stored memory patterns as learning data to enhance memory capacity across all modern Hopfield models. Specifically, we accomplish this by constructing a separation loss $\mathcal{L}_\Phi$ that separates the local minima of kernelized energy by separating stored memory patterns in kernel space. Methodologically, $\mathtt{U\text{-}Hop}$ memory retrieval process consists of: \textbf{(Stage~I.)} minimizing separation loss for a more uniformed memory (local minimum) distribution, followed by \textbf{(Stage~II.)} standard Hopfield energy minimization for memory retrieval. This results in a significant reduction of possible meta-stable states in the Hopfield energy function, thus enhancing memory capacity by preventing memory confusion. Empirically, with real-world datasets, we demonstrate that $\mathtt{U\text{-}Hop}$ outperforms all existing modern Hopfield models and SOTA similarity measures, achieving substantial improvements in both associative memory retrieval and deep learning tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge