Taylor J. Carpenter

ModelGuard: Runtime Validation of Lipschitz-continuous Models

Apr 30, 2021

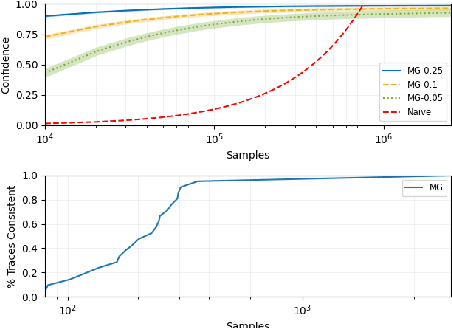

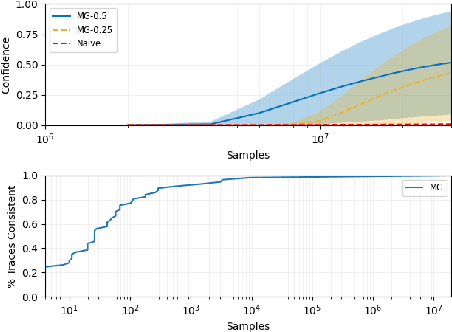

Abstract:This paper presents ModelGuard, a sampling-based approach to runtime model validation for Lipschitz-continuous models. Although techniques exist for the validation of many classes of models the majority of these methods cannot be applied to the whole of Lipschitz-continuous models, which includes neural network models. Additionally, existing techniques generally consider only white-box models. By taking a sampling-based approach, we can address black-box models, represented only by an input-output relationship and a Lipschitz constant. We show that by randomly sampling from a parameter space and evaluating the model, it is possible to guarantee the correctness of traces labeled consistent and provide a confidence on the correctness of traces labeled inconsistent. We evaluate the applicability and scalability of ModelGuard in three case studies, including a physical platform.

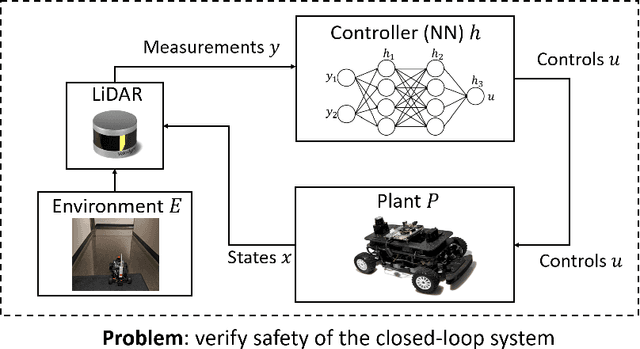

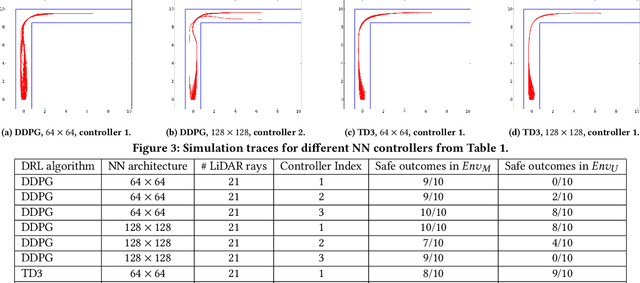

Case Study: Verifying the Safety of an Autonomous Racing Car with a Neural Network Controller

Oct 24, 2019

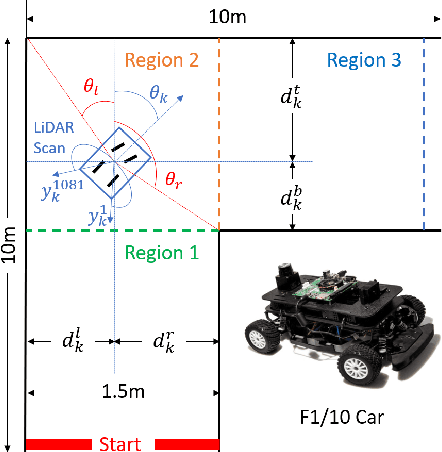

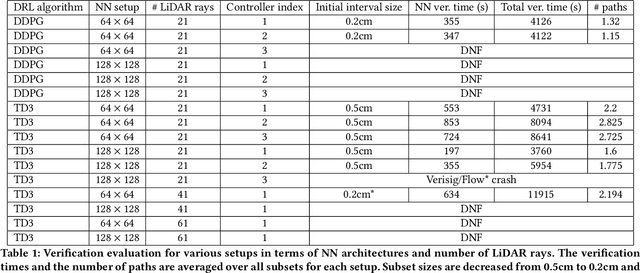

Abstract:This paper describes a verification case study on an autonomous racing car with a neural network (NN) controller. Although several verification approaches have been proposed over the last year, they have only been evaluated on low-dimensional systems or systems with constrained environments. To explore the limits of existing approaches, we present a challenging benchmark in which the NN takes raw LiDAR measurements as input and outputs steering for the car. We train a dozen NNs using two reinforcement learning algorithms and show that the state of the art in verification can handle systems with around 40 LiDAR rays, well short of a typical LiDAR scan with 1081 rays. Furthermore, we perform real experiments to investigate the benefits and limitations of verification with respect to the sim2real gap, i.e., the difference between a system's modeled and real performance. We identify cases, similar to the modeled environment, in which verification is strongly correlated with safe behavior. Finally, we illustrate LiDAR fault patterns that can be used to develop robust and safe reinforcement learning algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge