Tarique Siddiqui

Sibyl: Forecasting Time-Evolving Query Workloads

Jan 08, 2024

Abstract: Database systems often rely on historical query traces to perform workload-based performance tuning. However, real production workloads are time-evolving, making historical queries ineffective for optimizing future workloads. To address this challenge, we propose SIBYL, an end-to-end machine learning-based framework that accurately forecasts a sequence of future queries, including complete query statements, over various prediction windows. Drawing insights from real workloads, we propose template-based featurization techniques and develop a stacked LSTM with an encoder-decoder architecture for accurate forecasting of query workloads. We also develop techniques to improve forecasting accuracy over large prediction windows and to achieve high scalability over large workloads with high variability in query arrival rates. Finally, we propose techniques to handle workload drifts. Our evaluation on four real workloads demonstrates that SIBYL can forecast workloads with an $87.3\%$ median F1 score, and can yield $1.7\times$ and $1.3\times$ performance improvements when applied to materialized view selection and index selection applications, respectively.

ML-Powered Index Tuning: An Overview of Recent Progress and Open Challenges

Aug 25, 2023

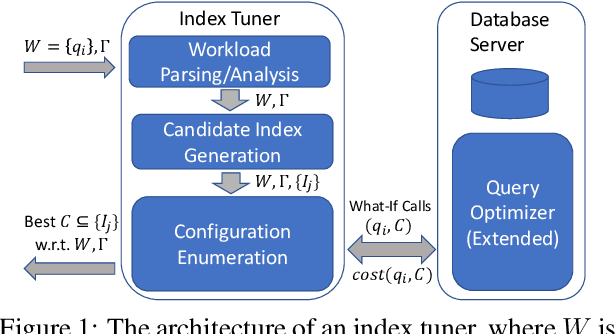

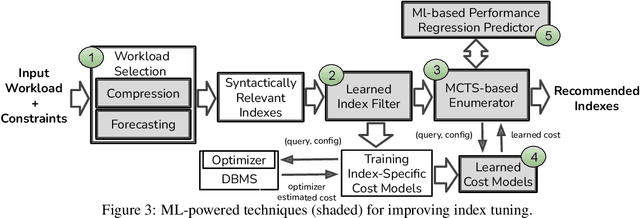

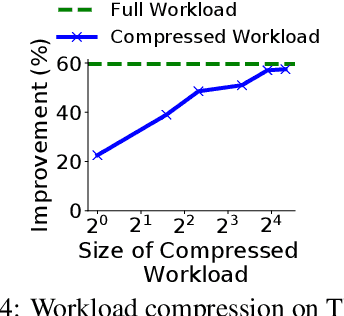

Abstract: The scale and complexity of workloads in modern cloud services have brought into sharper focus a critical challenge in automated index tuning: the need to recommend high-quality indexes while maintaining index tuning scalability. This challenge is further compounded by the requirement that automated index implementations introduce minimal query performance regressions in production deployments, which represents a significant barrier to achieving scalability and full automation. This paper directs attention to these challenges within automated index tuning and explores how machine learning (ML) techniques provide new opportunities for mitigating them. In particular, we reflect on recent efforts in developing ML techniques for workload selection, candidate index filtering, speeding up index configuration search, reducing the number of query optimizer calls, and lowering the chances of performance regressions. We highlight the key takeaways from these efforts and underline the gaps that need to be closed for them to function effectively within the traditional index tuning framework. Additionally, we present a preliminary cross-platform design aimed at democratizing index tuning across multiple SQL-like systems, an imperative in today's continuously expanding data system landscape. We believe our findings will help provide context and impetus to research and development efforts in automated index tuning.