Tao Bi

Recognizing Micro-expression in Video Clip with Adaptive Key-frame Mining

Sep 19, 2020

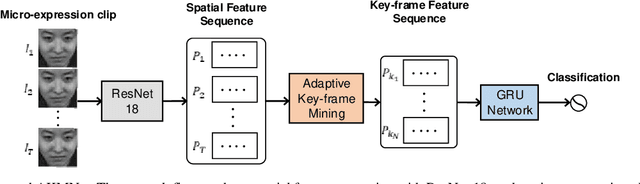

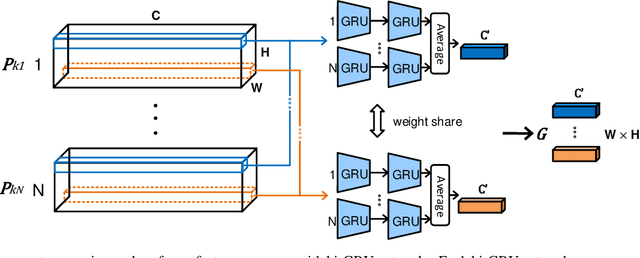

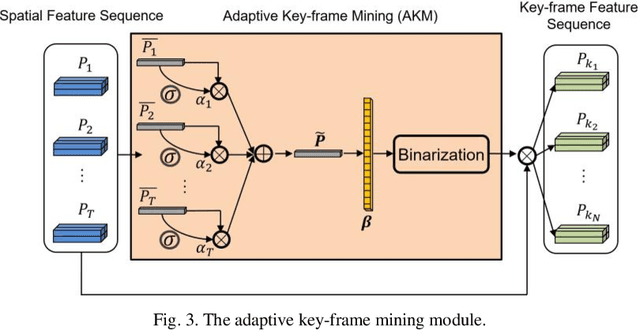

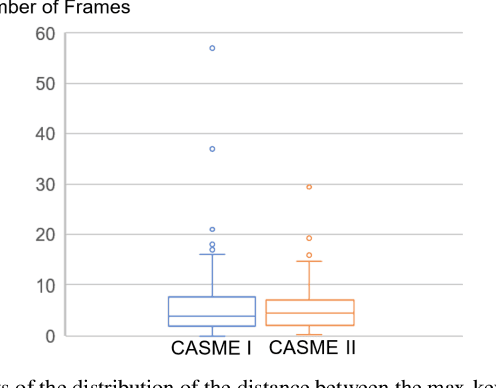

Abstract: As a spontaneous expression of emotion on the face, micro-expression is receiving increasing attention. Whilst various deep learning (DL) techniques have achieved better recognition accuracy, one characteristic of micro-expression has not been fully leveraged: such facial movement is transient and sparsely localized in time. Therefore, the representation learned from a long video clip is usually redundant. On the other hand, methods that use only the single apex frame require manual annotations and sacrifice temporal dynamic information. To simultaneously spot and recognize such fleeting facial movement, we propose a novel end-to-end deep learning architecture, referred to as Adaptive Key-frame Mining Network (AKMNet). Operating on the raw video clip of a micro-expression, AKMNet is able to learn a discriminative spatio-temporal representation by combining the spatial features of self-exploited local key frames and their global temporal dynamics. Empirical and theoretical evaluations show the advantages of the proposed approach, with improved performance compared with other state-of-the-art methods.

A Novel Apex-Time Network for Cross-Dataset Micro-Expression Recognition

Apr 23, 2019

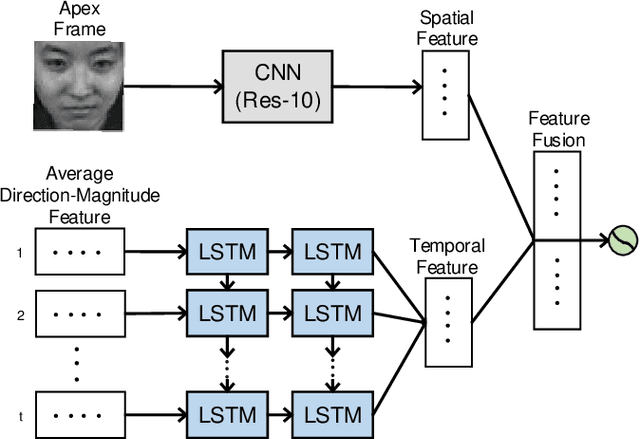

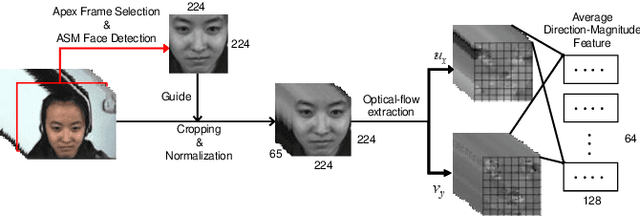

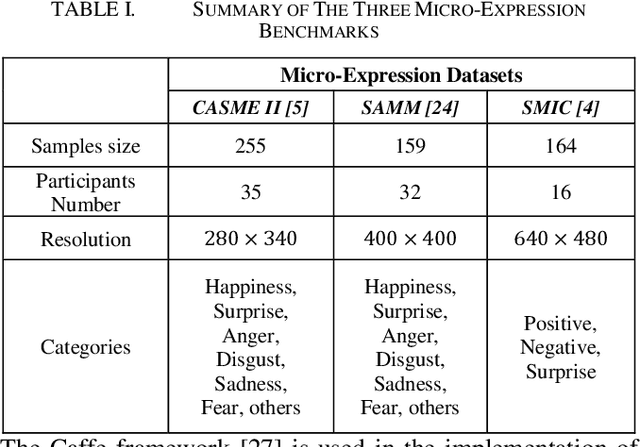

Abstract: The automatic recognition of micro-expression has been boosted ever since the successful introduction of deep learning approaches. As researchers working on this topic increasingly learn from the nature of micro-expression, the practice of using deep learning techniques has evolved from processing the entire video clip of a micro-expression to recognition on the apex frame. Using the apex frame removes redundant information, but the temporal evidence of the micro-expression is thereby left out. In this paper, we propose to perform recognition based on the spatial information from the apex frame as well as the temporal information from its adjacent frames. To this end, a novel Apex-Time Network (ATNet) is proposed. Through extensive experiments on three benchmarks, we demonstrate the improvement achieved by adding the temporal information learned from adjacent frames around the apex frame. In particular, the model with such temporal information is more robust in cross-dataset validations.

Micro-Attention for Micro-Expression recognition

Nov 09, 2018

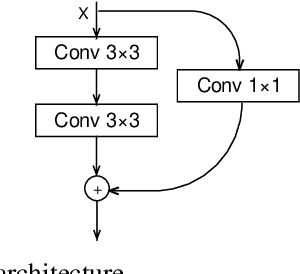

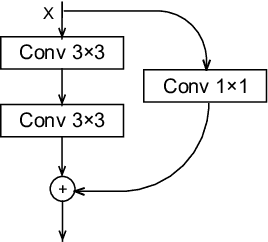

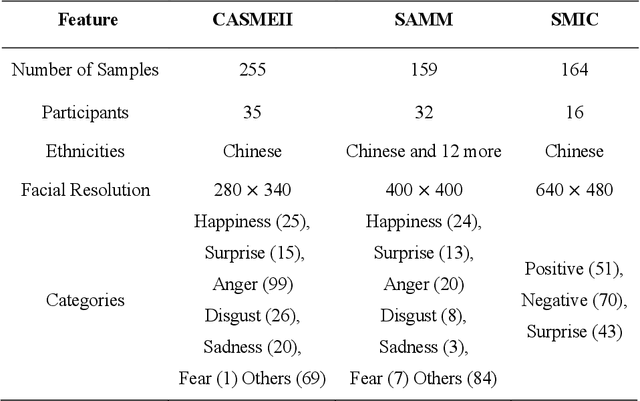

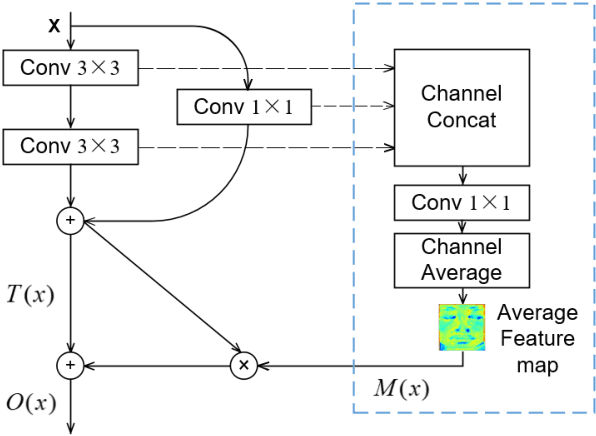

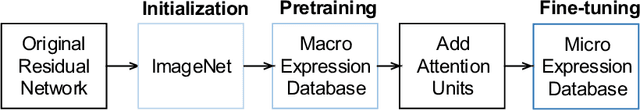

Abstract: Micro-expression, owing to its high objectivity in emotion detection, has emerged as a promising modality in affective computing. Recently, deep learning methods have been successfully introduced into micro-expression recognition. Whilst higher recognition accuracy has been achieved with deep learning methods, substantial challenges in micro-expression recognition remain. The occurrence of micro-expressions in small, local areas of the face and the limited size of databases still constrain the recognition accuracy of such facial behavior. In this work, to tackle these challenges, we propose a novel attention mechanism called micro-attention, which cooperates with a residual network. Micro-attention enables the network to learn to focus on facial areas of interest (action units). Moreover, to cope with small datasets, a simple yet efficient transfer learning approach is utilized to alleviate the risk of overfitting. With an extensive experimental evaluation on two benchmarks (CASMEII, SAMM) and post-hoc feature visualizations, we demonstrate the effectiveness of the proposed micro-attention and push the boundary of automatic recognition of micro-expression.
