Svetlana Yanushkevich

Probabilistic causal graphs as categorical data synthesizers: Do they do better than Gaussian Copulas and Conditional Tabular GANs?

Apr 15, 2025Abstract:This study investigates the generation of high-quality synthetic categorical data, such as survey data, using causal graph models. Generating synthetic data aims not only to create a variety of data for training the models but also to preserve privacy while capturing relationships between the data. The research employs Structural Equation Modeling (SEM) followed by Bayesian Networks (BN). We used the categorical data that are based on the survey of accessibility to services for people with disabilities. We created both SEM and BN models to represent causal relationships and to capture joint distributions between variables. In our case studies, such variables include, in particular, demographics, types of disability, types of accessibility barriers and frequencies of encountering those barriers. The study compared the SEM-based BN method with alternative approaches, including the probabilistic Gaussian copula technique and generative models like the Conditional Tabular Generative Adversarial Network (CTGAN). The proposed method outperformed others in statistical metrics, including the Chi-square test, Kullback-Leibler divergence, and Total Variation Distance (TVD). In particular, the BN model demonstrated superior performance, achieving the highest TVD, indicating alignment with the original data. The Gaussian Copula ranked second, while CTGAN exhibited moderate performance. These analyses confirmed the ability of the SEM-based BN to produce synthetic data that maintain statistical and relational validity while maintaining confidentiality. This approach is particularly beneficial for research on sensitive data, such as accessibility and disability studies.

Can LLMs Assist Expert Elicitation for Probabilistic Causal Modeling?

Apr 14, 2025Abstract:Objective: This study investigates the potential of Large Language Models (LLMs) as an alternative to human expert elicitation for extracting structured causal knowledge and facilitating causal modeling in biometric and healthcare applications. Material and Methods: LLM-generated causal structures, specifically Bayesian networks (BNs), were benchmarked against traditional statistical methods (e.g., Bayesian Information Criterion) using healthcare datasets. Validation techniques included structural equation modeling (SEM) to verifying relationships, and measures such as entropy, predictive accuracy, and robustness to compare network structures. Results and Discussion: LLM-generated BNs demonstrated lower entropy than expert-elicited and statistically generated BNs, suggesting higher confidence and precision in predictions. However, limitations such as contextual constraints, hallucinated dependencies, and potential biases inherited from training data require further investigation. Conclusion: LLMs represent a novel frontier in expert elicitation for probabilistic causal modeling, promising to improve transparency and reduce uncertainty in the decision-making using such models.

Intelligent Stress Assessment for e-Coaching

Nov 03, 2023

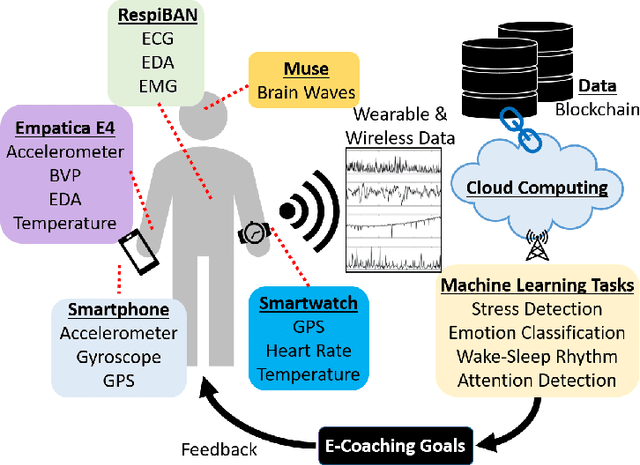

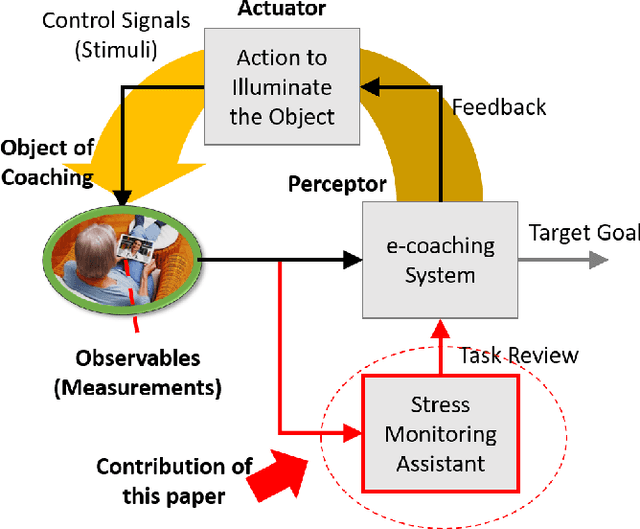

Abstract:This paper considers the adaptation of the e-coaching concept at times of emergencies and disasters, through aiding the e-coaching with intelligent tools for monitoring humans' affective state. The states such as anxiety, panic, avoidance, and stress, if properly detected, can be mitigated using the e-coaching tactic and strategy. In this work, we focus on a stress monitoring assistant tool developed on machine learning techniques. We provide the results of an experimental study using the proposed method.

Assessing Upper Limb Motor Function in the Immediate Post-Stroke Perioud Using Accelerometry

Nov 01, 2023

Abstract:Accelerometry has been extensively studied as an objective means of measuring upper limb function in patients post-stroke. The objective of this paper is to determine whether the accelerometry-derived measurements frequently used in more long-term rehabilitation studies can also be used to monitor and rapidly detect sudden changes in upper limb motor function in more recently hospitalized stroke patients. Six binary classification models were created by training on variable data window times of paretic upper limb accelerometer feature data. The models were assessed on their effectiveness for differentiating new input data into two classes: severe or moderately severe motor function. The classification models yielded Area Under the Curve (AUC) scores that ranged from 0.72 to 0.82 for 15-minute data windows to 0.77 to 0.94 for 120-minute data windows. These results served as a preliminary assessment and a basis on which to further investigate the efficacy of using accelerometry and machine learning to alert healthcare professionals to rapid changes in motor function in the days immediately following a stroke.

Hand Gesture Classification on Praxis Dataset: Trading Accuracy for Expense

Nov 01, 2023

Abstract:In this paper, we investigate hand gesture classifiers that rely upon the abstracted 'skeletal' data recorded using the RGB-Depth sensor. We focus on 'skeletal' data represented by the body joint coordinates, from the Praxis dataset. The PRAXIS dataset contains recordings of patients with cortical pathologies such as Alzheimer's disease, performing a Praxis test under the direction of a clinician. In this paper, we propose hand gesture classifiers that are more effective with the PRAXIS dataset than previously proposed models. Body joint data offers a compressed form of data that can be analyzed specifically for hand gesture recognition. Using a combination of windowing techniques with deep learning architecture such as a Recurrent Neural Network (RNN), we achieved an overall accuracy of 70.8% using only body joint data. In addition, we investigated a long-short-term-memory (LSTM) to extract and analyze the movement of the joints through time to recognize the hand gestures being performed and achieved a gesture recognition rate of 74.3% and 67.3% for static and dynamic gestures, respectively. The proposed approach contributed to the task of developing an automated, accurate, and inexpensive approach to diagnosing cortical pathologies for multiple healthcare applications.

* 8 pages, 6 figures

Transformer-based Hand Gesture Recognition via High-Density EMG Signals: From Instantaneous Recognition to Fusion of Motor Unit Spike Trains

Dec 07, 2022

Abstract:Designing efficient and labor-saving prosthetic hands requires powerful hand gesture recognition algorithms that can achieve high accuracy with limited complexity and latency. In this context, the paper proposes a compact deep learning framework referred to as the CT-HGR, which employs a vision transformer network to conduct hand gesture recognition using highdensity sEMG (HD-sEMG) signals. The attention mechanism in the proposed model identifies similarities among different data segments with a greater capacity for parallel computations and addresses the memory limitation problems while dealing with inputs of large sequence lengths. CT-HGR can be trained from scratch without any need for transfer learning and can simultaneously extract both temporal and spatial features of HD-sEMG data. Additionally, the CT-HGR framework can perform instantaneous recognition using sEMG image spatially composed from HD-sEMG signals. A variant of the CT-HGR is also designed to incorporate microscopic neural drive information in the form of Motor Unit Spike Trains (MUSTs) extracted from HD-sEMG signals using Blind Source Separation (BSS). This variant is combined with its baseline version via a hybrid architecture to evaluate potentials of fusing macroscopic and microscopic neural drive information. The utilized HD-sEMG dataset involves 128 electrodes that collect the signals related to 65 isometric hand gestures of 20 subjects. The proposed CT-HGR framework is applied to 31.25, 62.5, 125, 250 ms window sizes of the above-mentioned dataset utilizing 32, 64, 128 electrode channels. The average accuracy over all the participants using 32 electrodes and a window size of 31.25 ms is 86.23%, which gradually increases till reaching 91.98% for 128 electrodes and a window size of 250 ms. The CT-HGR achieves accuracy of 89.13% for instantaneous recognition based on a single frame of HD-sEMG image.

Stress Propagation in Human-Robot Teams Based on Computational Logic Model

Nov 08, 2022

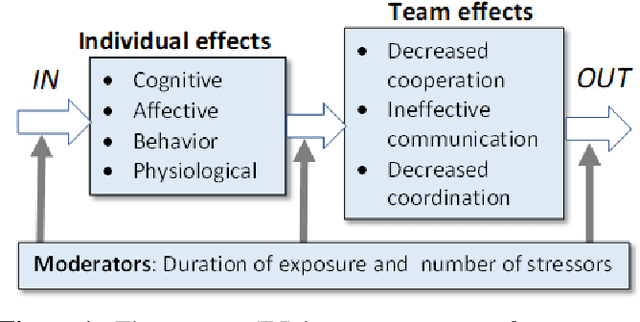

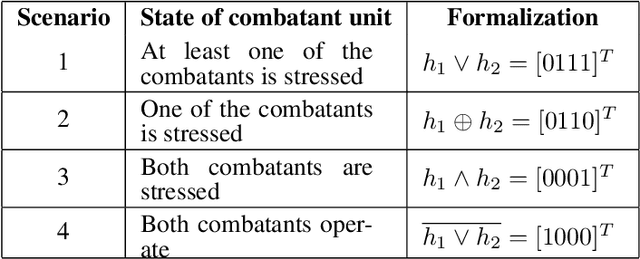

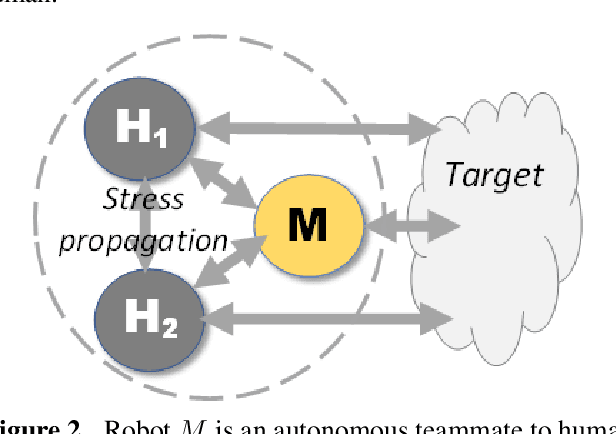

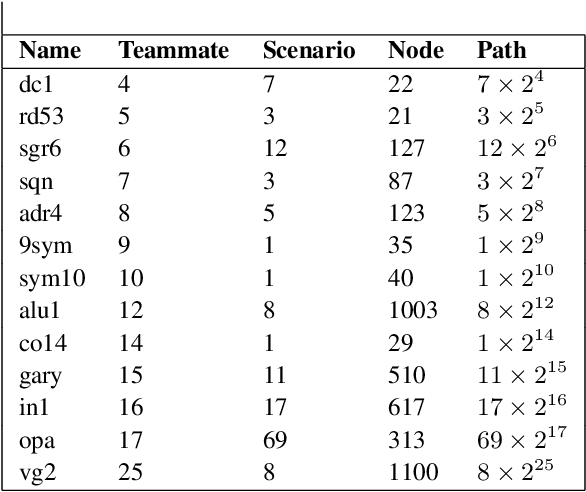

Abstract:Mission teams are exposed to the emotional toll of life and death decisions. These are small groups of specially trained people supported by intelligent machines for dealing with stressful environments and scenarios. We developed a composite model for stress monitoring in such teams of human and autonomous machines. This modelling aims to identify the conditions that may contribute to mission failure. The proposed model is composed of three parts: 1) a computational logic part that statically describes the stress states of teammates; 2) a decision part that manifests the mission status at any time; 3) a stress propagation part based on standard Susceptible-Infected-Susceptible (SIS) paradigm. In contrast to the approaches such as agent-based, random-walk and game models, the proposed model combines various mechanisms to satisfy the conditions of stress propagation in small groups. Our core approach involves data structures such as decision tables and decision diagrams. These tools are adaptable to human-machine teaming as well.

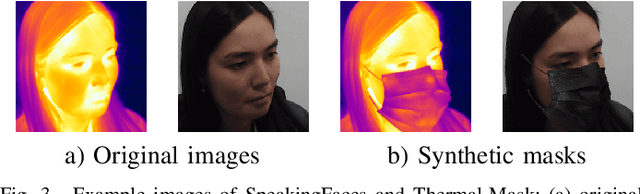

Fairness on Synthetic Visual and Thermal Mask Images

Sep 19, 2022

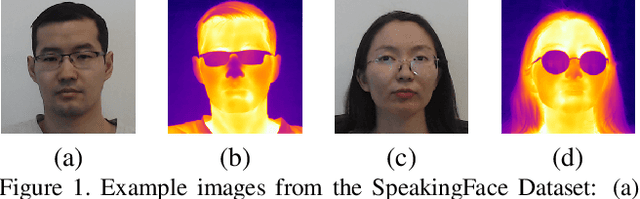

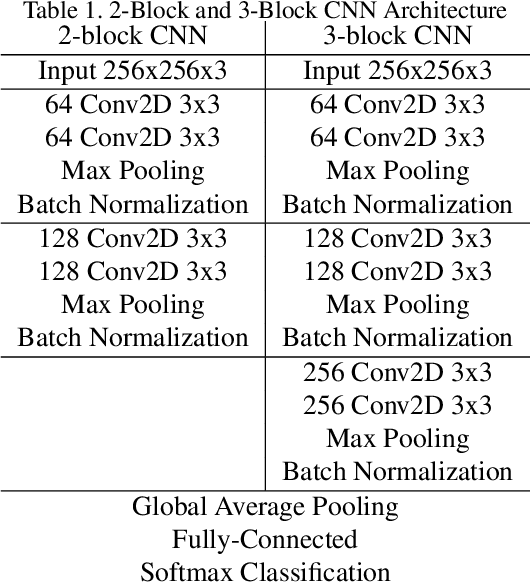

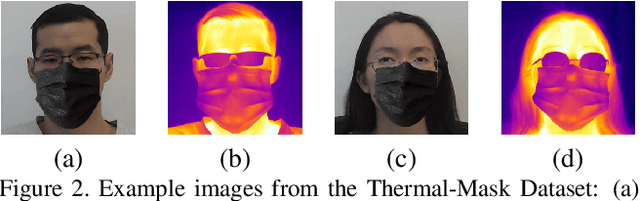

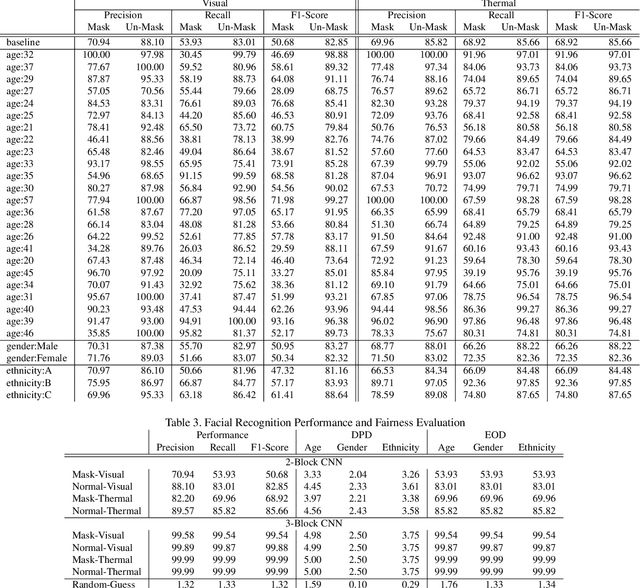

Abstract:In this paper, we study performance and fairness on visual and thermal images and expand the assessment to masked synthetic images. Using the SpeakingFace and Thermal-Mask dataset, we propose a process to assess fairness on real images and show how the same process can be applied to synthetic images. The resulting process shows a demographic parity difference of 1.59 for random guessing and increases to 5.0 when the recognition performance increases to a precision and recall rate of 99.99\%. We indicate that inherently biased datasets can deeply impact the fairness of any biometric system. A primary cause of a biased dataset is the class imbalance due to the data collection process. To address imbalanced datasets, the classes with fewer samples can be augmented with synthetic images to generate a more balanced dataset resulting in less bias when training a machine learning system. For biometric-enabled systems, fairness is of critical importance, while the related concept of Equity, Diversity, and Inclusion (EDI) is well suited for the generalization of fairness in biometrics, in this paper, we focus on the 3 most common demographic groups age, gender, and ethnicity.

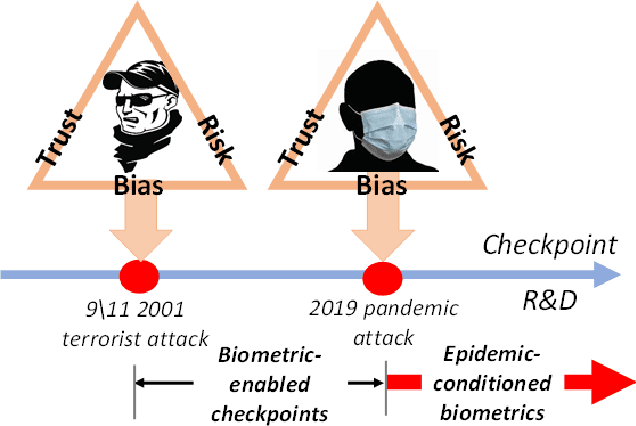

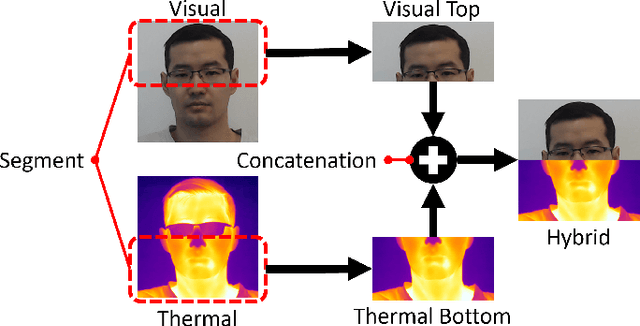

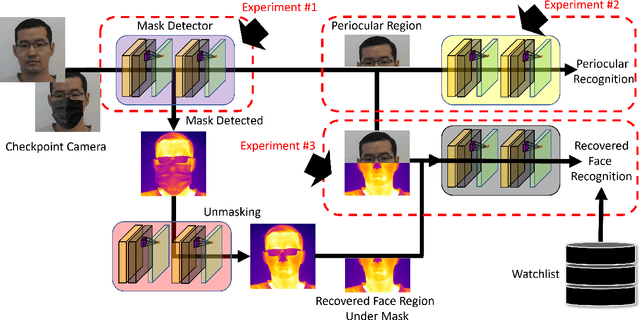

Biometrics in the Time of Pandemic: 40% Masked Face Recognition Degradation can be Reduced to 2%

Jan 03, 2022

Abstract:In this study of the face recognition on masked versus unmasked faces generated using Flickr-Faces-HQ and SpeakingFaces datasets, we report 36.78% degradation of recognition performance caused by the mask-wearing at the time of pandemics, in particular, in border checkpoint scenarios. We have achieved better performance and reduced the degradation to 1.79% using advanced deep learning approaches in the cross-spectral domain.

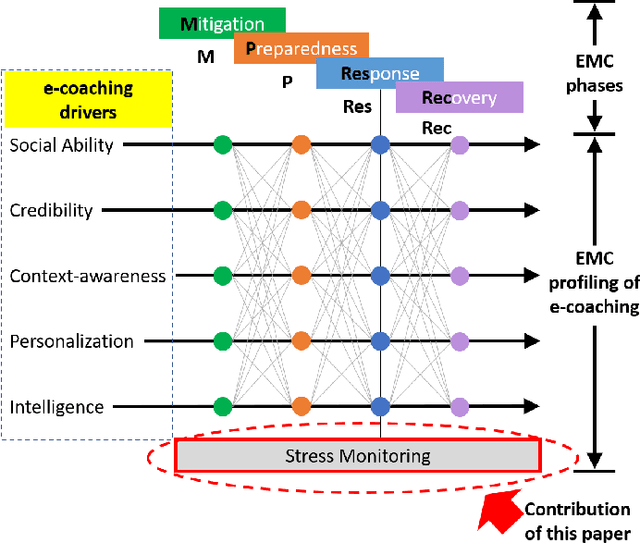

Counter-Epidemiological Projections of e-Coaching

May 24, 2021

Abstract:This paper considers e-coaching at times of pandemic. It utilizes the Emergency Management Cycle (EMC), a core doctrine for managing disasters. The EMC dimensions provide a useful taxonomical view for the development and application of e-coaching systems, emphasizing technological and societal issues. Typical pandemic symptoms such as anxiety, panic, avoidance, and stress, if properly detected, can be mitigated using the e-coaching tactic and strategy. In this work, we focus on a stress monitoring assistant developed upon machine learning techniques. We provide the results of an experimental study of a prototype of such an assistant. Our study leads to the conclusion that stress monitoring shall become a valuable component of e-coaching at all EMC phases.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge