Suhwan Lee

Measuring the Stability of Process Outcome Predictions in Online Settings

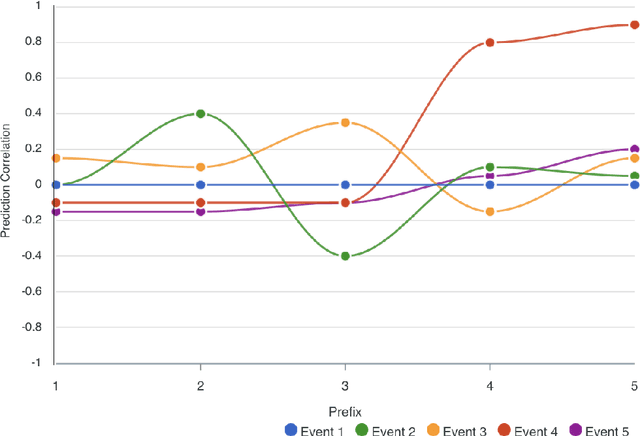

Oct 13, 2023Abstract:Predictive Process Monitoring aims to forecast the future progress of process instances using historical event data. As predictive process monitoring is increasingly applied in online settings to enable timely interventions, evaluating the performance of the underlying models becomes crucial for ensuring their consistency and reliability over time. This is especially important in high risk business scenarios where incorrect predictions may have severe consequences. However, predictive models are currently usually evaluated using a single, aggregated value or a time-series visualization, which makes it challenging to assess their performance and, specifically, their stability over time. This paper proposes an evaluation framework for assessing the stability of models for online predictive process monitoring. The framework introduces four performance meta-measures: the frequency of significant performance drops, the magnitude of such drops, the recovery rate, and the volatility of performance. To validate this framework, we applied it to two artificial and two real-world event logs. The results demonstrate that these meta-measures facilitate the comparison and selection of predictive models for different risk-taking scenarios. Such insights are of particular value to enhance decision-making in dynamic business environments.

The Analysis of Online Event Streams: Predicting the Next Activity for Anomaly Detection

Mar 17, 2022

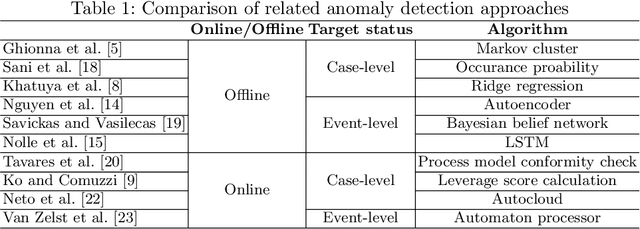

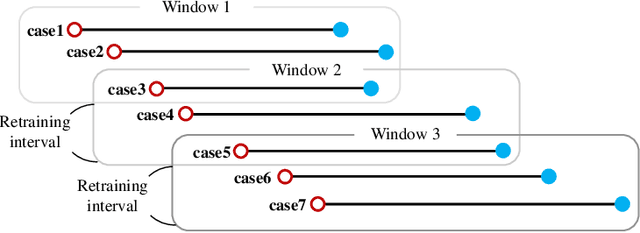

Abstract:Anomaly detection in process mining focuses on identifying anomalous cases or events in process executions. The resulting diagnostics are used to provide measures to prevent fraudulent behavior, as well as to derive recommendations for improving process compliance and security. Most existing techniques focus on detecting anomalous cases in an offline setting. However, to identify potential anomalies in a timely manner and take immediate countermeasures, it is necessary to detect event-level anomalies online, in real-time. In this paper, we propose to tackle the online event anomaly detection problem using next-activity prediction methods. More specifically, we investigate the use of both ML models (such as RF and XGBoost) and deep models (such as LSTM) to predict the probabilities of next-activities and consider the events predicted unlikely as anomalies. We compare these predictive anomaly detection methods to four classical unsupervised anomaly detection approaches (such as Isolation forest and LOF) in the online setting. Our evaluation shows that the proposed method using ML models tends to outperform the one using a deep model, while both methods outperform the classical unsupervised approaches in detecting anomalous events.

Explainable Predictive Process Monitoring: A User Evaluation

Feb 15, 2022

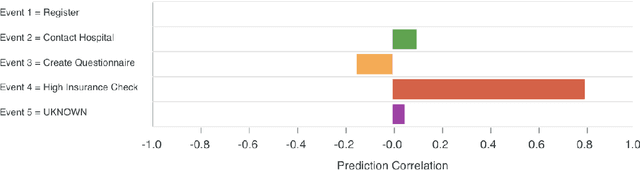

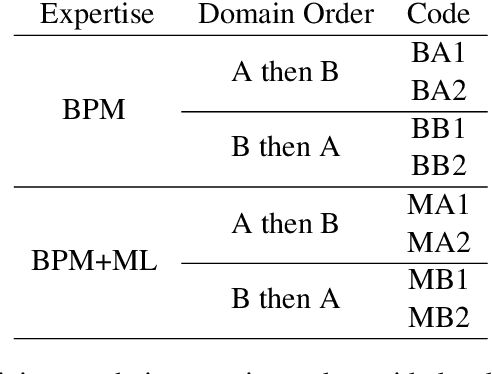

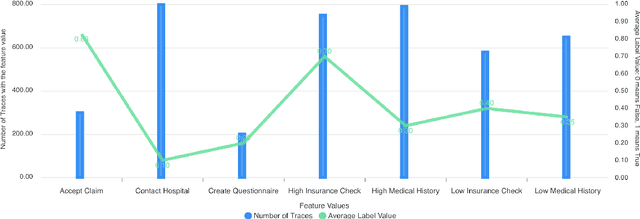

Abstract:Explainability is motivated by the lack of transparency of black-box Machine Learning approaches, which do not foster trust and acceptance of Machine Learning algorithms. This also happens in the Predictive Process Monitoring field, where predictions, obtained by applying Machine Learning techniques, need to be explained to users, so as to gain their trust and acceptance. In this work, we carry on a user evaluation on explanation approaches for Predictive Process Monitoring aiming at investigating whether and how the explanations provided (i) are understandable; (ii) are useful in decision making tasks;(iii) can be further improved for process analysts, with different Machine Learning expertise levels. The results of the user evaluation show that, although explanation plots are overall understandable and useful for decision making tasks for Business Process Management users -- with and without experience in Machine Learning -- differences exist in the comprehension and usage of different plots, as well as in the way users with different Machine Learning expertise understand and use them.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge