Sue Booker

Detecting Emotion Primitives from Speech and their use in discerning Categorical Emotions

Jan 31, 2020

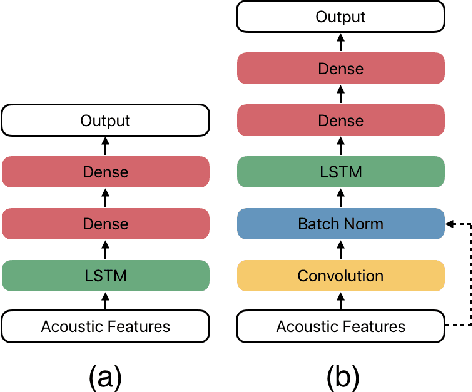

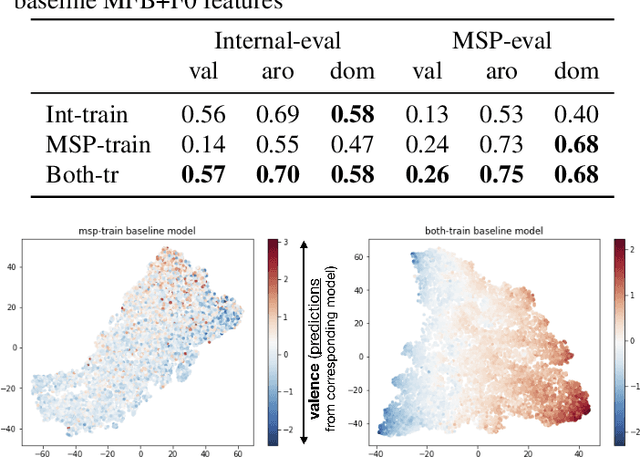

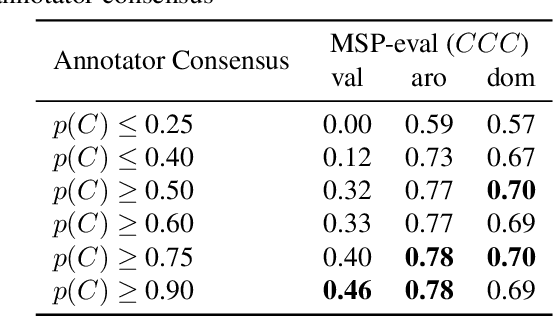

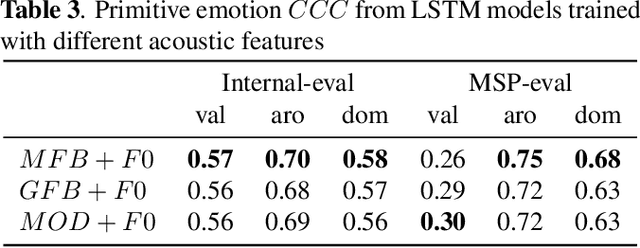

Abstract:Emotion plays an essential role in human-to-human communication, enabling us to convey feelings such as happiness, frustration, and sincerity. While modern speech technologies rely heavily on speech recognition and natural language understanding for speech content understanding, the investigation of vocal expression is increasingly gaining attention. Key considerations for building robust emotion models include characterizing and improving the extent to which a model, given its training data distribution, is able to generalize to unseen data conditions. This work investigated a long-shot-term memory (LSTM) network and a time convolution - LSTM (TC-LSTM) to detect primitive emotion attributes such as valence, arousal, and dominance, from speech. It was observed that training with multiple datasets and using robust features improved the concordance correlation coefficient (CCC) for valence, by 30\% with respect to the baseline system. Additionally, this work investigated how emotion primitives can be used to detect categorical emotions such as happiness, disgust, contempt, anger, and surprise from neutral speech, and results indicated that arousal, followed by dominance was a better detector of such emotions.

Leveraging Acoustic Cues and Paralinguistic Embeddings to Detect Expression from Voice

Jun 28, 2019

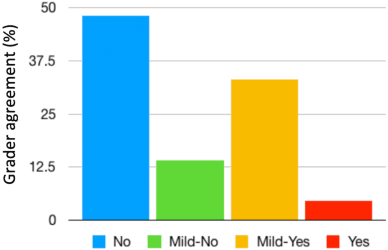

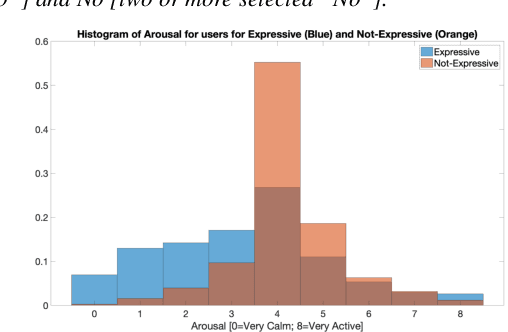

Abstract:Millions of people reach out to digital assistants such as Siri every day, asking for information, making phone calls, seeking assistance, and much more. The expectation is that such assistants should understand the intent of the users query. Detecting the intent of a query from a short, isolated utterance is a difficult task. Intent cannot always be obtained from speech-recognized transcriptions. A transcription driven approach can interpret what has been said but fails to acknowledge how it has been said, and as a consequence, may ignore the expression present in the voice. Our work investigates whether a system can reliably detect vocal expression in queries using acoustic and paralinguistic embedding. Results show that the proposed method offers a relative equal error rate (EER) decrease of 60% compared to a bag-of-word based system, corroborating that expression is significantly represented by vocal attributes, rather than being purely lexical. Addition of emotion embedding helped to reduce the EER by 30% relative to the acoustic embedding, demonstrating the relevance of emotion in expressive voice.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge