Sri Raghu Malireddi

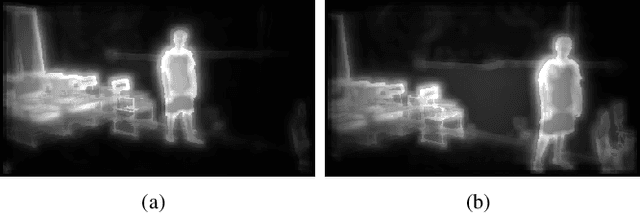

HandSeg: An Automatically Labeled Dataset for Hand Segmentation from Depth Images

Aug 02, 2018

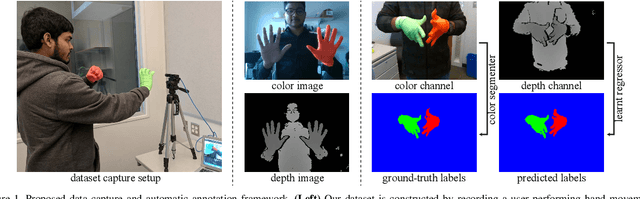

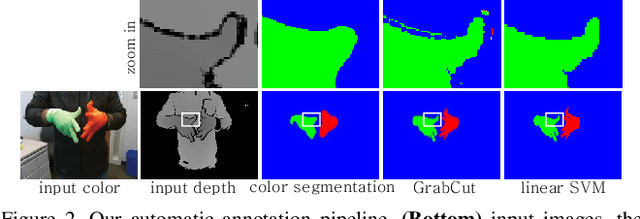

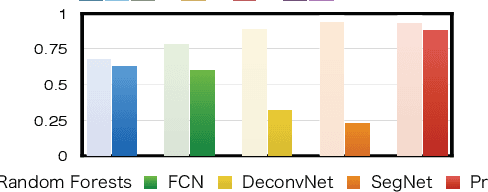

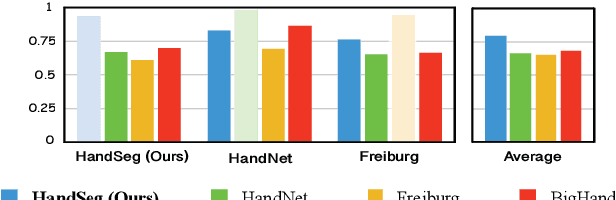

Abstract: We propose an automatic method for generating high-quality annotations for depth-based hand segmentation, and introduce a large-scale hand segmentation dataset. Existing datasets are typically limited to a single hand. By exploiting the visual cues given by an RGBD sensor and a pair of colored gloves, we automatically generate dense annotations for two-hand segmentation. This lowers the cost and complexity of creating high-quality datasets, and makes it easy to expand the dataset in the future. We further show that existing datasets, even with data augmentation, are not sufficient to train a hand segmentation algorithm that can distinguish two hands. Source and datasets will be made publicly available.
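The glove-based labeling idea in the abstract can be illustrated with a minimal sketch. It is not the authors' pipeline: the glove colors, the `max_dist` threshold, and the `label_hands` function are hypothetical, and the RGB and depth images are assumed to be already aligned pixel for pixel. Each pixel whose color is close to one of the two glove colors, and which has a valid depth reading, receives that glove's label.

```python
import numpy as np

# Hypothetical glove colors (RGB); the abstract does not say which colors were used.
GLOVE_COLORS = {1: (200, 40, 40), 2: (40, 160, 60)}


def label_hands(rgb, depth, max_dist=60.0):
    """Return an (H, W) label map: 0 = background, 1 and 2 = the two gloves.

    rgb   : (H, W, 3) uint8 color image, assumed aligned with the depth image.
    depth : (H, W) depth image; 0 is treated as an invalid reading (assumption).
    """
    rgb = rgb.astype(np.float32)
    labels = np.zeros(depth.shape, dtype=np.uint8)
    best = np.full(depth.shape, np.inf, dtype=np.float32)
    for label, color in GLOVE_COLORS.items():
        # Euclidean distance of every pixel to this glove's reference color.
        dist = np.linalg.norm(rgb - np.asarray(color, dtype=np.float32), axis=-1)
        # Keep pixels that are close enough, closer than any earlier glove,
        # and backed by a valid depth value.
        mask = (dist < max_dist) & (dist < best) & (depth > 0)
        labels[mask] = label
        best[mask] = dist[mask]
    return labels
```

The resulting label map can be paired with the depth image alone as a training example, so the color channel is needed only while annotating. A real setup would likely need a more robust color model than a fixed RGB distance.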

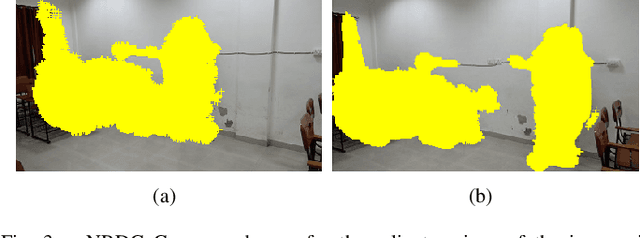

Automatic Segmentation of Dynamic Objects from an Image Pair

Apr 16, 2016

Abstract: Automatic segmentation of objects from a single image is a challenging problem which generally requires training on a large number of images. We consider the problem of automatically segmenting only the dynamic objects from a given pair of images of a scene captured from different positions. We exploit dense correspondences along with saliency measures to first localize the interest points on the dynamic objects in the two images. We propose a novel approach based on techniques from computational geometry to automatically segment the dynamic objects from both images using a top-down segmentation strategy. We discuss how the proposed approach differs from other state-of-the-art segmentation algorithms. We show that the proposed approach is efficient in handling large motions and achieves very good segmentation of the objects for different scenes. We analyse the results with respect to manually marked ground-truth segmentation masks created using our own dataset, and provide key observations for improving the work in the future.
