Shuohao Zhang

Self-Supervised Video Desmoking for Laparoscopic Surgery

Mar 17, 2024Abstract:Due to the difficulty of collecting real paired data, most existing desmoking methods train the models by synthesizing smoke, generalizing poorly to real surgical scenarios. Although a few works have explored single-image real-world desmoking in unpaired learning manners, they still encounter challenges in handling dense smoke. In this work, we address these issues together by introducing the self-supervised surgery video desmoking (SelfSVD). On the one hand, we observe that the frame captured before the activation of high-energy devices is generally clear (named pre-smoke frame, PS frame), thus it can serve as supervision for other smoky frames, making real-world self-supervised video desmoking practically feasible. On the other hand, in order to enhance the desmoking performance, we further feed the valuable information from PS frame into models, where a masking strategy and a regularization term are presented to avoid trivial solutions. In addition, we construct a real surgery video dataset for desmoking, which covers a variety of smoky scenes. Extensive experiments on the dataset show that our SelfSVD can remove smoke more effectively and efficiently while recovering more photo-realistic details than the state-of-the-art methods. The dataset, codes, and pre-trained models are available at \url{https://github.com/ZcsrenlongZ/SelfSVD}.

Bracketing is All You Need: Unifying Image Restoration and Enhancement Tasks with Multi-Exposure Images

Jan 01, 2024

Abstract:It is challenging but highly desired to acquire high-quality photos with clear content in low-light environments. Although multi-image processing methods (using burst, dual-exposure, or multi-exposure images) have made significant progress in addressing this issue, they typically focus exclusively on specific restoration or enhancement tasks, being insufficient in exploiting multi-image. Motivated by that multi-exposure images are complementary in denoising, deblurring, high dynamic range imaging, and super-resolution, we propose to utilize bracketing photography to unify restoration and enhancement tasks in this work. Due to the difficulty in collecting real-world pairs, we suggest a solution that first pre-trains the model with synthetic paired data and then adapts it to real-world unlabeled images. In particular, a temporally modulated recurrent network (TMRNet) and self-supervised adaptation method are proposed. Moreover, we construct a data simulation pipeline to synthesize pairs and collect real-world images from 200 nighttime scenarios. Experiments on both datasets show that our method performs favorably against the state-of-the-art multi-image processing ones. The dataset, code, and pre-trained models are available at https://github.com/cszhilu1998/BracketIRE.

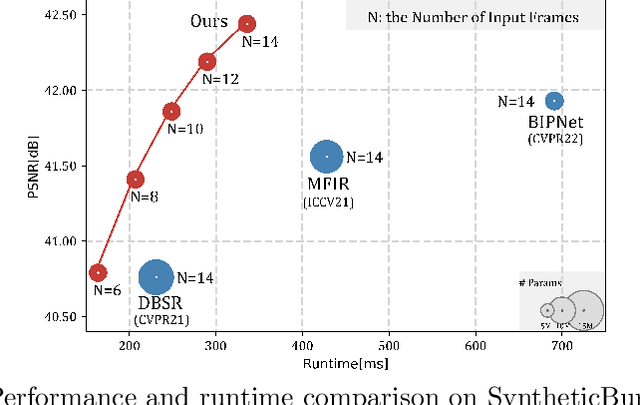

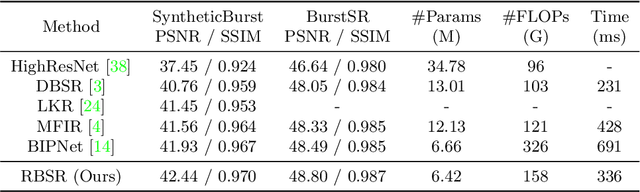

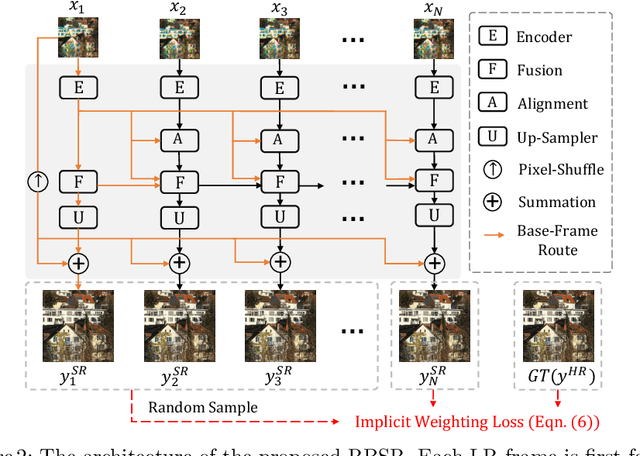

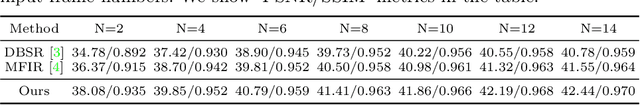

RBSR: Efficient and Flexible Recurrent Network for Burst Super-Resolution

Jun 30, 2023

Abstract:Burst super-resolution (BurstSR) aims at reconstructing a high-resolution (HR) image from a sequence of low-resolution (LR) and noisy images, which is conducive to enhancing the imaging effects of smartphones with limited sensors. The main challenge of BurstSR is to effectively combine the complementary information from input frames, while existing methods still struggle with it. In this paper, we suggest fusing cues frame-by-frame with an efficient and flexible recurrent network. In particular, we emphasize the role of the base-frame and utilize it as a key prompt to guide the knowledge acquisition from other frames in every recurrence. Moreover, we introduce an implicit weighting loss to improve the model's flexibility in facing input frames with variable numbers. Extensive experiments on both synthetic and real-world datasets demonstrate that our method achieves better results than state-of-the-art ones. Codes and pre-trained models are available at https://github.com/ZcsrenlongZ/RBSR.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge